How to Use CSV Reader in Python: A Practical Guide

Learn how to read CSV files in Python using the built-in csv module. This guide covers csv.reader and DictReader, encoding, delimiters, headers, error handling, and performance tips for analysts and developers.

By the end of this guide, you will be able to read CSV data in Python with csv.reader, using reliable encoding, proper newline handling, and robust error checks. Expect practical, copy-pasteable examples, common pitfalls, and tips for scaling from small files to larger datasets. According to MyDataTables, this built-in module offers a solid, beginner-friendly path for data analysts and developers learning to use csv.reader in Python.

Primer on Python's csv Module and Reader

The built-in csv module in Python provides lightweight, robust tools for reading and writing CSV data. csv.reader returns an iterator that yields one row at a time, so you can process a file without loading it entirely into memory. Each row arrives as a list of strings in column order. This makes it ideal for quick parsing, transformation, or streaming tasks on modest-sized datasets. Before you start, ensure you have Python 3.x installed and a sample CSV file to practice with.

Key setup patterns are:

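- Always open CSV files with newline='' so the csv module, rather than Python's newline translation, handles line endings. Without it, quoted fields that contain embedded newlines may be read incorrectly, and files written on Windows can gain extra blank lines.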

- Explicitly specify an encoding, typically utf-8, to avoid decoding errors with non-ASCII data.

- Use a with block to guarantee the file is closed automatically.

Here's a minimal example:

```python
import csv

# newline='' lets the csv module handle line endings itself;
# an explicit encoding avoids platform-dependent defaults.
with open('data.csv', newline='', encoding='utf-8') as f:
    reader = csv.reader(f)
    for row in reader:
        print(row)  # each row is a list of strings
```

This pattern returns each record as a list, where row[0] is the first column, row[1] the second, and so on. If your CSV has a header row, you can skip it with next(reader) or read the file with csv.DictReader instead. You will also encounter files that use other delimiters (semicolon, tab) and quote characters, which you can handle with the delimiter and quotechar arguments. As you grow more comfortable, you can extend this approach to basic data validation and type conversions.
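Here is a minimal sketch that makes the header and delimiter options concrete. It skips a header row with next() and parses a semicolon-delimited file whose third field contains a quoted semicolon. The file name data_semicolon.csv and its contents are illustrative assumptions. The sketch writes them to a temporary directory so that it runs on its own.

```python
import csv
import os
import tempfile

# Illustrative sample (an assumption, not real data): semicolon-delimited,
# with one field that contains a semicolon inside quotes.
sample = 'name;city;note\nAda;London;"likes; semicolons"\nGrace;Arlington;\n'
path = os.path.join(tempfile.mkdtemp(), 'data_semicolon.csv')
with open(path, 'w', newline='', encoding='utf-8') as f:
    f.write(sample)

with open(path, newline='', encoding='utf-8') as f:
    # delimiter=';' splits on semicolons; quotechar='"' (the default,
    # shown explicitly) keeps the quoted semicolon inside one field.
    reader = csv.reader(f, delimiter=';', quotechar='"')
    header = next(reader)  # consume the header row
    rows = list(reader)    # remaining data rows

print(header)  # ['name', 'city', 'note']
for row in rows:
    print(row)
# ['Ada', 'London', 'likes; semicolons']
# ['Grace', 'Arlington', '']
```

Because quotechar='"' is already the default, you only need to pass it when your file quotes fields with a different character, such as a single quote. The trailing semicolon on the last line produces an empty string, not None.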


Tools & Materials

- Python 3.x installed (3.7+ recommended; ensure your PATH is configured)

- Sample CSV file (use a small file for learning, then test with larger data)

- Text editor or IDE (for editing and saving Python scripts)

- A terminal or command prompt (for running scripts and validating outputs)

- Optional: Jupyter Notebook or JupyterLab (useful for interactive exploration and visualization)

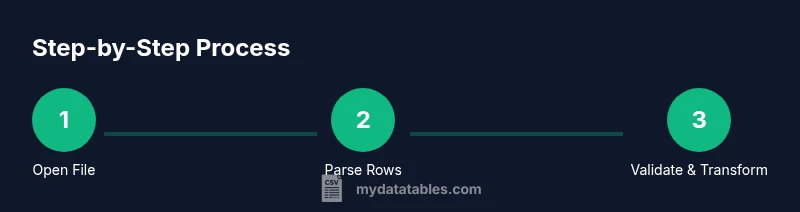

Steps

Estimated time: 15-30 minutes

Step 1: Prepare your environment and sample CSV

Install Python 3.x if needed and place a sample CSV (e.g., data.csv) in a known directory. Decide on the encoding (utf-8 is standard) and confirm the file path. This step sets the foundation for predictable parsing.

Tip: Use a tiny sample first to verify column order and data types before scaling up.

Step 2: Open the file with safe defaults

Open the CSV using with open(..., newline='', encoding='utf-8') to ensure consistent newline handling and decoding across platforms. Create a csv.reader instance for sequential access.

Tip: Always close files automatically with the with statement to avoid resource leaks.

Step 3: Iterate rows with csv.reader

Loop over the reader to access each row as a list of strings. Access values by index (row[0], row[1], etc.) and perform light transformations as needed.

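Tip: Check the row length before indexing or unpacking. A short row raises IndexError when you index past its end, and ValueError when you unpack it into too many names.

Step 4: Process and validate data types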

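Convert numeric fields with int() or float() as appropriate, and decide how to handle missing values. csv.reader returns an empty string for an empty field, and both int('') and float('') raise ValueError. Consider stripping whitespace to normalize inputs before conversion.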

Tip: Wrap conversions in try/except ValueError to catch malformed data without breaking the loop.

Step 5: Use DictReader for header-based access

If your CSV has headers, prefer csv.DictReader to access fields by name (row['ColumnName']). This improves readability and reduces errors from misindexed columns.

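Tip: If the header row is missing or inconsistent, supply explicit fieldnames to DictReader. If the file does have a header row, skip it first with next(), because DictReader treats every row as data once fieldnames are supplied.

Step 6: Test, handle edge cases, and scale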

Run tests with missing fields, extra columns, and unusual delimiters. As you scale to larger files, consider streaming and chunk processing to keep memory usage in check.

Tip: Consider writing output progressively to avoid building large intermediate structures.
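The steps above can be combined into one short script. This is a minimal sketch that assumes a hypothetical orders file with sku, qty, and price columns. The file names, column names, and the choice to skip bad rows (rather than repair them) are illustrative assumptions, not part of the guide. Validated rows are written out one at a time instead of being collected in a list first.

```python
import csv
import os
import tempfile

# Hypothetical input; the columns and the bad rows are made up for illustration.
SAMPLE = (
    "sku,qty,price\n"
    "A-1, 3 ,9.99\n"
    "B-2,,4.50\n"      # missing qty
    "C-3,two,1.25\n"   # malformed qty
    "D-4,5\n"          # short row: no price field at all
    "E-5,1,2.00\n"
)

def iter_orders(path, errors):
    """Hypothetical helper: yield validated rows, appending problems to `errors`."""
    with open(path, newline='', encoding='utf-8') as f:
        reader = csv.DictReader(f)
        for row in reader:
            # DictReader fills missing trailing fields with None (its restval default).
            raw_qty = (row.get('qty') or '').strip()
            raw_price = (row.get('price') or '').strip()
            if not raw_qty or not raw_price:
                errors.append((reader.line_num, 'missing value'))
                continue
            try:
                qty = int(raw_qty)
                price = float(raw_price)
            except ValueError:
                errors.append((reader.line_num, 'not a number'))
                continue
            yield {'sku': row['sku'].strip(), 'qty': qty, 'total': qty * price}

workdir = tempfile.mkdtemp()
src = os.path.join(workdir, 'orders.csv')
dst = os.path.join(workdir, 'totals.csv')
with open(src, 'w', newline='', encoding='utf-8') as f:
    f.write(SAMPLE)

errors = []
# Write each validated row as soon as it is produced.
with open(dst, 'w', newline='', encoding='utf-8') as out:
    writer = csv.DictWriter(out, fieldnames=['sku', 'qty', 'total'])
    writer.writeheader()
    for order in iter_orders(src, errors):
        writer.writerow(order)

print(errors)  # [(3, 'missing value'), (4, 'not a number'), (5, 'missing value')]
```

Because iter_orders() is a generator, only one input row is held in memory at a time. The same structure works for files far larger than this sample.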

People Also Ask

What is the difference between csv.reader and csv.DictReader?

csv.reader reads rows as lists of strings, while csv.DictReader yields dictionaries keyed by header names. DictReader is often clearer when you need to access specific columns by name.

Reader gives you lists; DictReader gives you dictionaries keyed by headers.
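The difference is easiest to see when both readers parse the same input. This sketch uses io.StringIO as a stand-in for a file opened with newline='', so it runs without a file on disk. The two-column data is made up for illustration.

```python
import csv
import io

data = "id,name\n1,Ada\n2,Grace\n"

# csv.reader returns the header as an ordinary row.
list_rows = list(csv.reader(io.StringIO(data)))
# csv.DictReader consumes the header and uses it as the keys.
dict_rows = list(csv.DictReader(io.StringIO(data)))

print(list_rows)  # [['id', 'name'], ['1', 'Ada'], ['2', 'Grace']]
print(dict_rows)  # on Python 3.8+: [{'id': '1', 'name': 'Ada'}, {'id': '2', 'name': 'Grace'}]
```

On Python 3.6 and 3.7, DictReader yields OrderedDict objects instead of plain dicts. The keys and values are the same.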

When should I use csv.reader instead of pandas?

Use csv.reader for lightweight parsing and when memory matters; it avoids loading a full DataFrame. For complex data manipulation, pandas offers powerful tools but at a higher memory cost.

If you just need simple parsing and memory efficiency, csv.reader is fine; for heavy data work, consider pandas.
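The following sketch illustrates the memory point. The hypothetical column_total() helper sums one column while holding only the current row, so memory use stays flat as the file grows. The sales.csv sample and its columns are assumptions made for illustration. Empty or missing cells are skipped.

```python
import csv
import os
import tempfile

def column_total(path, column_index):
    """Hypothetical helper: sum one numeric column, one row at a time."""
    total = 0.0
    with open(path, newline='', encoding='utf-8') as f:
        reader = csv.reader(f)
        next(reader, None)  # skip the header row (returns None on an empty file)
        for row in reader:
            # Ignore short rows and empty cells instead of failing on them.
            if len(row) > column_index and row[column_index].strip():
                total += float(row[column_index])
    return total

# Illustrative sample file; the same loop works unchanged on much larger files.
path = os.path.join(tempfile.mkdtemp(), 'sales.csv')
with open(path, 'w', newline='', encoding='utf-8') as f:
    csv.writer(f).writerows(
        [['region', 'amount'], ['north', '10.5'], ['south', '4.5'], ['east', '']]
    )

print(column_total(path, 1))  # 15.0
```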

How do I handle headers safely?

If your CSV has headers, use csv.DictReader to access columns by name. If you prefer csv.reader, skip or manually handle the header row to avoid misalignment.

Use DictReader to access by header names, or skip the first row when using reader.
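The sketch below covers three header situations on small in-memory samples, with io.StringIO standing in for real files:

- A csv.reader with a header row.
- A headerless file read by DictReader with explicit fieldnames.
- An empty input.

The field names id and score are assumptions about what the columns mean.

```python
import csv
import io

# Case 1: csv.reader with a header row. next(reader, None) returns None
# instead of raising StopIteration when the input is empty.
reader = csv.reader(io.StringIO("id,score\n1,90\n2,85\n"))
header = next(reader, None)
rows = list(reader)

# Case 2: a headerless file read with DictReader by supplying fieldnames.
# The names are assumptions; DictReader treats every line as data here.
records = list(csv.DictReader(io.StringIO("1,90\n2,85\n"), fieldnames=['id', 'score']))

# Case 3: an empty input is handled without an exception.
empty_header = next(csv.reader(io.StringIO("")), None)

print(header, rows)   # ['id', 'score'] [['1', '90'], ['2', '85']]
print(records[0]['score'])  # 90
print(empty_header)   # None
```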

What errors should I catch while reading CSV files?

Common errors include FileNotFoundError, UnicodeDecodeError, and csv.Error for malformed lines. Use try/except blocks and validate row lengths to handle issues gracefully.

Be ready for missing files, encoding problems, or bad lines, and catch csv.Error as needed.
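Here is a sketch of defensive reading, built around a hypothetical read_rows() helper that collects problems instead of raising them. It catches the three exceptions named above and checks each row's width. strict=True is a real csv.reader option that turns malformed quoting into csv.Error. This sketch treats such an error as fatal for the rest of the file. The sample files are illustrative assumptions.

```python
import csv
import os
import tempfile

def read_rows(path, expected_columns):
    """Hypothetical helper: return (rows, problems) instead of crashing.

    A csv.Error stops the read; rows parsed before it are kept.
    """
    rows, problems = [], []
    try:
        with open(path, newline='', encoding='utf-8') as f:
            # strict=True raises csv.Error on malformed quoting
            # instead of silently accepting it.
            reader = csv.reader(f, strict=True)
            for row in reader:
                if len(row) != expected_columns:
                    problems.append(
                        f"line {reader.line_num}: expected "
                        f"{expected_columns} fields, got {len(row)}"
                    )
                    continue
                rows.append(row)
    except FileNotFoundError:
        problems.append(f"file not found: {path}")
    except UnicodeDecodeError as exc:
        problems.append(f"not valid UTF-8: {exc}")
    except csv.Error as exc:
        problems.append(f"line {reader.line_num}: {exc}")
    return rows, problems

# Illustrative inputs: a stray character after a closing quote on line 3,
# and a path that does not exist.
workdir = tempfile.mkdtemp()
bad_quotes = os.path.join(workdir, 'bad.csv')
with open(bad_quotes, 'w', newline='', encoding='utf-8') as f:
    f.write('id,name\n1,Ada\n2,"Gr"ace\n')

print(read_rows(bad_quotes, 2))
print(read_rows(os.path.join(workdir, 'missing.csv'), 2))
```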

Can I use a delimiter other than a comma?

Yes. Pass delimiter to csv.reader or DictReader, e.g., delimiter=';' for semicolons or delimiter='\t' for tabs. Ensure the rest of the parsing logic aligns with the chosen format.

Absolutely—adjust the delimiter as needed for your CSV format.
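For tab-separated data, pass delimiter='\t'. The sketch below reads an in-memory TSV sample with DictReader. The sample is made up for illustration. With a real file, open it with newline='' and an explicit encoding as shown earlier.

```python
import csv
import io

tsv = "name\tlanguage\nAda\tPython\nLinus\tC\n"

rows = list(csv.DictReader(io.StringIO(tsv), delimiter='\t'))
for row in rows:
    print(row['name'], row['language'])
# Ada Python
# Linus C
```

The csv module also registers a built-in 'excel-tab' dialect, so csv.reader(f, dialect='excel-tab') is an equivalent way to read tab-delimited files.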

Is there a performance difference between csv and pandas?

For small files and simple row-by-row work, the built-in csv module avoids the overhead of importing pandas and building a DataFrame, and streaming rows keeps memory use low. For complex pipelines, pandas provides richer functionality but at a higher memory cost.

Raw csv is lighter; pandas is more capable but heavier.


Main Points

- Adopt the newline='' and encoding='utf-8' pattern for reliability

- Choose csv.reader for simple, list-based access

- Prefer csv.DictReader when headers exist for readability

- Stream large files to maintain memory efficiency