How to Use read.csv in R: A Practical Guide

A practical, step-by-step guide to reading CSV files in R using base read.csv and tidyverse read_csv, with headers, delimiters, encodings, and performance tips for data analysis.

Learn how to read CSV data into R using base read.csv and the tidyverse read_csv, with guidance on headers, separators, encoding, and common pitfalls. This quick guide shows practical examples, how to inspect the data frame, and how to handle missing values and large files efficiently. It also points to when to prefer read_csv for speed and how to manage locale and file encoding variations.

Why CSV matters for data work in R

CSV remains a reliable and portable format for exchanging data between systems, teams, and platforms. In data science workflows, you frequently import CSV files from databases, spreadsheets, and external sources, so knowing how to read them correctly in R is essential. This guide grounds the task in real-world practice and introduces MyDataTables' perspective: CSV import is a foundational skill for any analyst or developer. According to MyDataTables, CSV continues to be the most interoperable plain-text format for sharing datasets, and getting import right reduces downstream cleanup. The examples below show how to check the resulting structure with str() and head(), identify column types, and spot potential problems like missing values or inconsistent encodings early. The goal is to enable a smooth transition from file to analysis, with robust handling of edge cases and varying file origins. Understanding these basics sets up more advanced tasks, such as streaming large files, programmatic cleaning, and reproducible workflows.

Base R read.csv: syntax, defaults, and common parameters

Base R ships with read.csv, a convenience wrapper around read.table tailored for comma-separated values. Its defaults assume a header row and comma delimiter, and it returns a data.frame by default. Key arguments include file, header, sep, stringsAsFactors, na.strings, and dec for decimal separator. Practical examples help illustrate:

# Basic import with safe defaults

df <- read.csv("path/to/file.csv", header = TRUE, stringsAsFactors = FALSE, na.strings = c("", "NA"))

After reading, inspect with dim(df), str(df), and head(df) to confirm the shape and types. Note that R versions before 4.0.0 default to stringsAsFactors = TRUE, which silently converts text columns into factors; R 4.0.0 and later default to FALSE, but setting the argument explicitly keeps scripts portable across environments. For large files, consider reading in chunks or using readr for speed, then convert the result with as.data.frame() if needed.
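As a minimal sketch of that inspection routine, the example below writes a tiny file to a temporary path and reads it back with base R. The file contents and the names demo_path and demo are made up for illustration.

```r
# Hypothetical demo file; the rows are invented for illustration
demo_path <- tempfile(fileext = ".csv")
writeLines(c("id,city,temp", "1,Oslo,4.5", "2,,NA", "3,Lima,19.2"), demo_path)

# Treat both empty fields and the literal string NA as missing
demo <- read.csv(demo_path, header = TRUE, stringsAsFactors = FALSE,
                 na.strings = c("", "NA"))

dim(demo)             # rows and columns
str(demo)             # column types
head(demo)            # first rows
colSums(is.na(demo))  # missing values per column
```

Counting missing values per column right after import makes it easier to notice when a missing-value marker was not recognized.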

Using readr::read_csv: speed and type inference

read_csv from the readr package is designed for speed and convenience. It reads columns with automatic type inference and returns a tibble, which prints more compactly in the console and plays nicely with tidyverse pipelines. Example:

library(readr)

df <- read_csv("path/to/file.csv")

read_csv infers column types, treats empty fields and the string NA as missing by default, and records parsing problems that you can review with problems(df). If you need to customize parsing, use locale() to control encoding and decimal marks, or specify col_types to force specific types. read_csv assumes UTF-8 input; when a file uses a different encoding, declare it with locale(encoding = ...), for example locale(encoding = "latin1").
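The following sketch shows one way to pin column types and the encoding in a single read_csv call. It assumes readr is installed; the file contents and column names (id, name, joined) are hypothetical.

```r
library(readr)

# Hypothetical demo file; the columns are invented for illustration
demo_path <- tempfile(fileext = ".csv")
writeLines(c("id,name,joined", "1,Ana,2024-01-15", "2,Ben,2024-02-01"), demo_path)

demo <- read_csv(
  demo_path,
  col_types = cols(
    id = col_integer(),
    name = col_character(),
    joined = col_date(format = "%Y-%m-%d")
  ),
  locale = locale(encoding = "UTF-8")  # readr's default, stated explicitly here
)

problems(demo)  # zero rows when every value parsed as declared
spec(demo)      # the column specification that was applied
```

Declaring col_types up front means a malformed value shows up in problems() instead of quietly changing the column's type.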

Important options: header, sep, encoding, na.strings, and colClasses

Base read.csv allows explicit control over headers, separators, and missing-value representations. Examples:

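data <- read.csv("data.csv", header = TRUE, sep = ",", fileEncoding = "UTF-8", na.strings = c("", "NA"))

If you prefer the tidyverse approach, read_delim and read_csv2 offer flexible separators and locales. In the readr functions, use col_names to set or skip headers, and col_types to specify types. Because read_csv always splits on commas, a semicolon-delimited file needs read_delim with delim = ";" (or read_csv2, which also expects a comma as the decimal mark). For example, to import a semicolon-delimited file with explicit column types:

library(readr)

# read_delim lets you name the delimiter; read_csv would not split on semicolons
df <- read_delim("data_semicolon.csv", delim = ";", col_names = TRUE, col_types = cols(
  id = col_integer(),
  name = col_character(),
  value = col_double()
))

Keep in mind that decimal separators and encodings vary by region. Set dec (base R) or locale(decimal_mark = ",") (readr) so numbers parse correctly, and fileEncoding or locale(encoding = ...) so text does.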
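To illustrate the decimal-separator point, here is a small base R sketch with a semicolon-delimited file that uses a comma as the decimal mark. The file and the names eu and naive are hypothetical.

```r
# Hypothetical European-style file: ";" as delimiter, "," as decimal mark
demo_path <- tempfile(fileext = ".csv")
writeLines(c("id;price", "1;3,50", "2;12,75"), demo_path)

# Declare both the field separator and the decimal mark
eu <- read.csv(demo_path, sep = ";", dec = ",")
str(eu)

# Without dec = ",", price is not parsed as a number
# (character in R >= 4.0.0, factor in older versions)
naive <- read.csv(demo_path, sep = ";")
class(naive$price)
```

Base R also provides read.csv2, which uses these two settings as its defaults.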

Practical examples: reading a simple dataset and a real-world CSV

To illustrate, we’ll create a tiny temporary CSV and read it with both base R and readr. This mirrors how you’d approach a real dataset:

# Create a simple CSV for demonstration
tmp <- tempfile(fileext = ".csv")
out <- data.frame(id = 1:3, name = c("Alice", "Bob", "Chad"), score = c(85.5, 92.0, 78.3))
write.csv(out, tmp, row.names = FALSE)

# Base R
df_base <- read.csv(tmp, header = TRUE, stringsAsFactors = FALSE)

# Tidyverse
library(readr)
df_tidy <- read_csv(tmp)

For a real-world file with a different delimiter or encoding, you might use:

# Semicolon-delimited with UTF-8 encoding
df_semicolon <- read.csv("survey.csv", header = TRUE, sep = ";", fileEncoding = "UTF-8")

# Or with readr (read_delim, because read_csv only splits on commas)
df_semicolon_tidy <- read_delim("survey.csv", delim = ";", locale = locale(encoding = "UTF-8"))

After import, validate with summary() and head() on the result to spot unexpected types or missing values early.
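One quick way to compare the two imports is to put the inferred column classes side by side. This is a sketch that assumes readr is installed; type_check is a hypothetical helper table, not part of either package.

```r
library(readr)

# Same demo data as in the walkthrough, written to a temporary file
tmp <- tempfile(fileext = ".csv")
write.csv(data.frame(id = 1:3, name = c("Alice", "Bob", "Chad"),
                     score = c(85.5, 92.0, 78.3)),
          tmp, row.names = FALSE)

df_base <- read.csv(tmp, stringsAsFactors = FALSE)
df_tidy <- read_csv(tmp)

# First class of each column under each reader
type_check <- data.frame(
  column = names(df_base),
  base   = vapply(df_base, function(x) class(x)[1], character(1)),
  readr  = vapply(df_tidy, function(x) class(x)[1], character(1)),
  row.names = NULL,
  stringsAsFactors = FALSE
)
type_check
```

The two readers can disagree on whole-number columns: base R guesses integer, while readr typically guesses a double. If that matters downstream, set the type explicitly.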

Performance tips for large CSV files

Reading large CSVs can become a bottleneck if you don’t optimize. As a rule of thumb, prefer readr::read_csv over base read.csv for speed and robust parsing on large files. If you need even faster reads, consider data.table::fread, which is highly optimized for large files, or read_csv_chunked for processing in chunks. Additionally, predefine column types with col_types (readr) or colClasses (base) to avoid type guessing and reduce memory use. When a file is too large to load at once, process it in chunks and write intermediate results to disk instead of holding the entire dataset in memory.
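The sketch below shows chunked processing with readr's read_csv_chunked and a DataFrameCallback, assuming readr is installed. The 1,000-row file stands in for a large one, and the chunk size of 250 is an arbitrary choice for the demo.

```r
library(readr)

# Stand-in for a large file: 1,000 rows written to a temporary path
big_path <- tempfile(fileext = ".csv")
write.csv(data.frame(id = 1:1000, value = rep(c(1.5, 2.5), 500)),
          big_path, row.names = FALSE)

# Summarise each chunk; readr row-binds the per-chunk results
chunk_totals <- read_csv_chunked(
  big_path,
  callback = DataFrameCallback$new(function(chunk, pos) {
    data.frame(start_row = pos, n = nrow(chunk), total = sum(chunk$value))
  }),
  chunk_size = 250,
  col_types = cols(id = col_integer(), value = col_double())
)

chunk_totals
sum(chunk_totals$total)  # overall total without loading all rows at once
```

Only one chunk is held in memory at a time, so the same pattern applies to files larger than available RAM, as long as the per-chunk result stays small.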

Authority sources and trust signals

For authoritative guidance on CSV reading in R, consult trusted sources:

- https://cran.r-project.org/web/packages/readr/read_csv.html

- https://stat.ethz.ch/R-manual/R-devel/library/utils/html/read.csv.html

- https://www.r-project.org/about.html

These sources document the behavior and options of read.csv and read_csv, and provide best practices for encoding, separators, and missing values. The MyDataTables team emphasizes following official documentation to ensure reproducible results across environments. By aligning with these sources, you can build robust CSV import scripts that scale with project needs.

Common pitfalls and debugging strategies

Even experienced users stumble over a few recurring issues. Start by validating the file encoding and ensuring UTF-8 compatibility; mismatches often produce garbled text or parsing errors. If column types are not as expected, re-import with explicit col_types (readr) or colClasses (base) and re-check; use glimpse() or str() to inspect. When headers are missing or misaligned, verify the header parameter and the actual data rows. Finally, always test on a small subset first to catch syntax or path errors before scaling up to large datasets.
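As a sketch of the re-import strategy, the example below uses a hypothetical file in which a stray "n/a" marker turns a numeric column into text. Declaring the marker and the column classes fixes the import.

```r
# Hypothetical file with a non-standard missing-value marker
demo_path <- tempfile(fileext = ".csv")
writeLines(c("id,amount", "1,10.5", "2,n/a", "3,7"), demo_path)

# First attempt: amount comes back as character because of "n/a"
first_try <- read.csv(demo_path, stringsAsFactors = FALSE)
str(first_try)

# Re-import with the marker declared and the types locked down
fixed <- read.csv(demo_path,
                  na.strings = c("", "NA", "n/a"),
                  colClasses = c(id = "integer", amount = "numeric"))
str(fixed)
```

Running str() after each attempt shows whether the change had the intended effect before any analysis depends on it.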

Tools & Materials

- R installed (latest stable release recommended)

- RStudio or other IDE (optional but helpful for interactive exploration)

- Sample CSV file (include headers and a few rows)

- Packages: readr, readxl (optional) (readr for read_csv; read.csv is base)

- Encoding awareness (UTF-8) (check with fileEncoding/locale if needed)

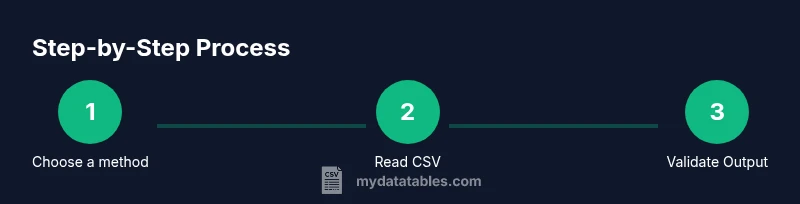

Steps

Estimated time: 25-40 minutes

1. Identify the dataset and file path

Locate where your CSV file lives and determine the correct path or URL. Confirm the file extension is .csv and note any potential permissions issues. This step saves time by preventing file-not-found errors later.

Tip: Use file.choose() in RStudio to pick the file visually.

2. Install or load required packages

If you plan to use tidyverse tools, install and load readr with library(readr). For base R, you don’t need extra packages. Confirm you have write access if you plan to save results.

Tip: Run install.packages('readr') once, then library(readr) in every session.

3. Read with base R read.csv

Run read.csv with clear headers and an explicit encoding when needed. Inspect the resulting data frame with dim(), str(), and head() to confirm structure and data types.

Tip: Set stringsAsFactors = FALSE to avoid unexpected factor conversion in R versions before 4.0.0.

4. Read with read_csv (tidyverse) and compare

Load readr and import the same file with read_csv to compare the resulting tibble to the data frame. Use str() and head() to verify column types and values match expectations.

Tip: read_csv assumes UTF-8; set locale(encoding = ...) when the file uses another encoding.

5. Handle column types explicitly

If automatic parsing isn’t accurate, specify types using col_types (read_csv) or colClasses (read.csv) to prevent misinterpretation (e.g., dates, factors).

Tip: For dates, you can use col_date() in readr or as.Date() after import.

6. Validate and clean data

After import, check for missing values, outliers, or incorrect formats. Apply basic cleaning steps or store a clean version for downstream analysis.

Tip: Use summary() and anyNA() to quickly assess data health.

7. Consider performance for large files

If the CSV is large, prefer read_csv or data.table::fread and consider chunked processing to avoid memory issues. Document the approach for reproducibility.

Tip: For extremely large datasets, process in chunks (read_csv_chunked) and write intermediate results.

8. Document and export results

Save the imported data in a trusted format (RDS, feather, or saved CSV) and document the import parameters used for future replication.

Tip: Record fileEncoding, delimiter, and col_types as metadata.
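The steps above can be sketched end to end in base R. This is one possible arrangement, not a prescribed workflow: the file contents, the import_args list, and the choice to store the parameters as an attribute are all assumptions made for illustration.

```r
# Hypothetical input file
csv_path <- tempfile(fileext = ".csv")
writeLines(c("id,name,signup",
             "1,Ana,2024-01-15",
             "2,,2024-02-01",
             "3,Cy,2024-03-10"), csv_path)

# Keep the import parameters in one place so they can be recorded later
import_args <- list(
  file = csv_path,
  header = TRUE,
  sep = ",",
  fileEncoding = "UTF-8",
  na.strings = c("", "NA"),
  colClasses = c(id = "integer", name = "character", signup = "Date")
)
dat <- do.call(read.csv, import_args)

# Validate
str(dat)
summary(dat)
anyNA(dat)

# Export with the import parameters attached as metadata (file path dropped)
attr(dat, "import_args") <- import_args[-1]
rds_path <- tempfile(fileext = ".rds")
saveRDS(dat, rds_path)

restored <- readRDS(rds_path)
attr(restored, "import_args")$na.strings
```

RDS preserves column types and attributes, so the restored object carries both the data and a record of how it was read.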

People Also Ask

What is the difference between read.csv and read_csv?

read.csv is base R and returns a data.frame with defaults suited for comma-delimited files. read_csv is part of the tidyverse and returns a tibble with faster parsing and automatic type inference. The choice depends on your workflow and performance needs.

Base R read.csv is the classic option; read_csv is faster and works best with tidyverse workflows.

When should I use base R vs tidyverse for reading CSVs?

If you’re already using tidyverse tools, read_csv offers smoother integration. For small scripts or minimal dependencies, read.csv suffices. For performance with large datasets, consider read_csv or data.table’s fread.

If you’re in a tidyverse project, use read_csv; otherwise base read.csv can work well for simple tasks.

How do I handle missing values after reading a CSV?

Treat missing values with na.strings in base R or na in read_csv. After import, use is.na and summary to understand gaps, then impute or remove as appropriate for your analysis.

Identify missing data with is.na and decide on imputation or removal after import.
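A short base R sketch of that sequence follows: declare the markers, count the gaps, then either drop or fill them. The "-" marker and the mean imputation are illustrative choices, not recommendations for every dataset.

```r
# Hypothetical file where "-" and empty fields both mean "missing"
demo_path <- tempfile(fileext = ".csv")
writeLines(c("id,score", "1,90", "2,-", "3,", "4,75"), demo_path)

scores <- read.csv(demo_path, na.strings = c("", "NA", "-"))

# Understand the gaps
colSums(is.na(scores))

# Option 1: keep only complete rows
complete <- scores[complete.cases(scores), ]

# Option 2: fill gaps with the column mean
imputed <- scores
imputed$score[is.na(imputed$score)] <- mean(scores$score, na.rm = TRUE)
imputed
```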

How can I specify column types during import?

In base R, use colClasses; in read_csv, use col_types to lock down date, numeric, and character types. This prevents mis-typed columns and speeds up parsing.

Use colClasses or col_types to ensure columns have the right data types during import.

What about different encodings in CSV files?

If a file isn’t UTF-8, set the encoding explicitly with fileEncoding in base R or locale(encoding) in readr. This helps preserve non-ASCII characters.

Set the encoding explicitly to avoid garbled characters.
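Before choosing an encoding, it can help to check whether the file is valid UTF-8 at all. The sketch below writes a hypothetical file containing one Latin-1 byte, flags the offending line with validUTF8(), and converts the lines with iconv(). Treating the source as Latin-1 is an assumption; a real file may use a different encoding.

```r
# Hypothetical file: "Zürich" stored with the single Latin-1 byte 0xFC for "ü"
path <- tempfile(fileext = ".csv")
writeBin(c(charToRaw("city\nZ"), as.raw(0xfc), charToRaw("rich\nOslo\n")), path)

# Read the raw lines and test each one for UTF-8 validity
lines <- readLines(path, warn = FALSE)
validUTF8(lines)  # the line holding the Latin-1 byte is flagged FALSE

# Convert from the assumed source encoding to UTF-8
converted <- iconv(lines, from = "latin1", to = "UTF-8")
validUTF8(converted)
```

If the check fails and the source encoding is known, passing it as fileEncoding to read.csv or as locale(encoding = ...) to read_csv performs the conversion during import.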

Why does read.csv sometimes convert strings to factors?

R versions before 4.0.0 defaulted to stringsAsFactors = TRUE. R 4.0.0 and later default to FALSE, but legacy scripts may still depend on factors. Check your R version and pass stringsAsFactors explicitly so the result is the same in every environment.

Older scripts may convert strings to factors; check your R version and options.
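The difference is easy to see by reading the same file both ways. This is a minimal sketch with a hypothetical two-column file.

```r
# Hypothetical file with one text column
demo_path <- tempfile(fileext = ".csv")
writeLines(c("id,group", "1,a", "2,b", "3,a"), demo_path)

as_text   <- read.csv(demo_path, stringsAsFactors = FALSE)
as_factor <- read.csv(demo_path, stringsAsFactors = TRUE)

class(as_text$group)     # character vector
class(as_factor$group)   # factor
levels(as_factor$group)  # distinct values become factor levels
```

Setting the argument explicitly in both directions documents the intent and removes the dependence on the R version.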


Main Points

- Choose the right import function for size and needs

- Explicitly set headers and delimiters

- Inspect data structure immediately after import

- Control data types to prevent downstream errors

- Handle encodings and missing values early