How to Save a DataFrame as CSV in R

Learn how to save a dataframe as CSV in R using base R write.csv, readr's write_csv, and data.table's fwrite. This guide covers encoding, delimiters, and common pitfalls for analysts.

Goal: save a dataframe as CSV in R. Base R users should run write.csv(df, 'path/file.csv', row.names=FALSE) for a straightforward export. For faster performance on large data, use readr::write_csv(df, 'path/file.csv'). Ensure the target directory exists, specify UTF-8 encoding when needed, and verify the file by re-reading it to confirm data integrity.

Why Saving DataFrames to CSV Matters in R

Exporting dataframes to CSV is a foundational skill in data analysis and reproducible workflows. CSV files are widely supported by databases, analytics platforms, and office software, making it easy to share results with teammates who use different tools. According to MyDataTables, CSV export remains a practical default for exchanging tabular data between R and other environments. This guide explains how to choose a CSV export method that balances simplicity, speed, and reliability, while maintaining data integrity and reproducibility across teams.

Base R: write.csv for Simple Exports

Base R provides a straightforward path to save a dataframe with the write.csv function. The typical pattern is write.csv(your_df, 'path/file.csv', row.names = FALSE). Setting row.names to FALSE avoids adding an extra, unintended column of row indices. You can also control missing values with na = '' to produce cleaner exports in downstream tools. If your workflow involves Windows environments, you may want to set fileEncoding = 'UTF-8' to ensure consistent encoding across platforms. While simple, write.csv is reliable for small to moderately sized datasets and maintains broad compatibility with other software.
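As a minimal sketch of the pattern above, the following writes a small, made-up data frame to a temporary directory. The data, the file name, and the use of tempdir() are assumptions for illustration only.

```r
# Hypothetical example data; replace with your own data frame.
df <- data.frame(
  id    = 1:3,
  city  = c("Lyon", "Oslo", NA),
  score = c(9.5, NA, 7.25),
  stringsAsFactors = FALSE
)

# Illustrative output location inside the session's temporary directory.
out_file <- file.path(tempdir(), "base_export.csv")

# row.names = FALSE drops the index column; na = "" writes empty fields
# instead of the default NA; fileEncoding requests UTF-8 output.
write.csv(df, out_file, row.names = FALSE, na = "", fileEncoding = "UTF-8")

# Print the raw file contents to inspect what was written.
writeLines(readLines(out_file))
```

Printing the raw lines is a quick way to confirm that no index column was written and that missing values appear as empty fields.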

Using readr::write_csv for Speed and Robustness

The readr package (part of the tidyverse) offers a faster CSV writer: write_csv(df, 'path/file.csv'). It is particularly beneficial for large data frames. write_csv never writes row names, and it always writes UTF-8, so there is no encoding argument to set. If the file will be opened in Excel, write_excel_csv writes the same content with a UTF-8 byte order mark so that Excel detects the encoding. For practical purposes, readr::write_csv is a strong default when performance matters.
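A short sketch of the readr call, assuming the readr package is installed. The data frame and file name are made up for illustration.

```r
library(readr)

# Hypothetical example data.
df <- data.frame(id = 1:3, label = c("a", "b", NA), stringsAsFactors = FALSE)
out_file <- file.path(tempdir(), "readr_export.csv")

# Default call: no row names, UTF-8 output, missing values written as NA.
write_csv(df, out_file)

# Same export with empty fields for missing values.
write_csv(df, out_file, na = "")

# For files destined for Excel, write_excel_csv() adds a UTF-8 byte order mark:
# write_excel_csv(df, out_file)
```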

data.table: fwrite for Large DataFrames

When exporting very large dataframes, data.table::fwrite is a common choice due to its speed. The syntax is straightforward: fwrite(your_df, 'path/file.csv'). Row names are omitted by default, so row.names = FALSE is optional. fwrite writes with multiple threads, which is where most of its speed on large tables comes from. Note that it writes missing values as empty fields by default (na = ''), unlike write.csv and write_csv, which write NA. It accepts ordinary data frames as well as data.tables, so if you already use data.table in your analysis, fwrite writes results to disk without extra conversion steps.
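The sketch below shows the fwrite call on a small, made-up table, assuming the data.table package is installed. The file name is illustrative.

```r
library(data.table)

# Hypothetical example data.
dt <- data.table(id = 1:5, value = c(1.5, NA, 3, 4.25, 5))
out_file <- file.path(tempdir(), "fwrite_export.csv")

# Default call: no row names, missing values written as empty fields.
fwrite(dt, out_file)

# Write an explicit NA marker instead, to match write.csv and write_csv.
fwrite(dt, out_file, na = "NA")

# fread is the matching fast reader for checking the result.
back <- fread(out_file)
```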

Handling Data Types, NA, and Row Names

CSV is plain text, so some data characteristics do not survive the export. Factors are written as their labels, not their integer codes, but the level set and level order are lost: on re-import the column comes back as character (or as a factor with alphabetically sorted levels), so re-apply the levels if they matter. Missing values are written as the string NA by default in write.csv and write_csv and as an empty field in fwrite; set the na argument explicitly (for example na = '') so downstream tools interpret them correctly. In base R, set row.names = FALSE to avoid an extra index column.
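To make the factor and NA behaviour concrete, here is a small base R sketch. The column names and levels are invented for the example.

```r
# Hypothetical data with an ordered level set and missing values.
df <- data.frame(
  grade = factor(c("low", "high", NA), levels = c("low", "high")),
  n     = c(10L, NA, 30L)
)
out_file <- file.path(tempdir(), "types_export.csv")

# Factors are written as their labels; NA becomes an empty field.
write.csv(df, out_file, row.names = FALSE, na = "")
writeLines(readLines(out_file))

# On re-import, treat empty fields as missing and restore the level order,
# which the CSV file itself cannot store.
back <- read.csv(out_file, na.strings = "", stringsAsFactors = FALSE)
back$grade <- factor(back$grade, levels = levels(df$grade))
str(back)
```

Re-applying the levels after import is one option; another is to keep the level definitions in a separate metadata file alongside the CSV.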

Encoding, Delimiters, and File Integrity

UTF-8 encoding is the de facto standard for CSV files in multi-tool workflows. When exporting, confirm the encoding used by your environment to avoid garbled characters, especially for non-English data. Delimiters other than the comma are sometimes necessary (e.g., a semicolon in locales where the comma is the decimal mark). write.csv does not let you change sep, so in base R use write.csv2 (semicolon separator, comma decimal mark) or write.table with sep; in readr, use write_csv2 or write_delim with delim. After export, re-reading the file with a compatible function validates that the data structure and values remain intact.
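The following base R sketch illustrates the two delimiter options mentioned above. The data and file names are assumptions for the example.

```r
# Hypothetical example data.
df <- data.frame(item = c("a", "b"), price = c(1.5, 20.25),
                 stringsAsFactors = FALSE)
semi_file <- file.path(tempdir(), "semicolon.csv")
tab_file  <- file.path(tempdir(), "tab.tsv")

# Semicolon separator with a comma as the decimal mark.
write.csv2(df, semi_file, row.names = FALSE)

# Any other delimiter: write.table with an explicit sep (here a tab).
write.table(df, tab_file, sep = "\t", row.names = FALSE, quote = FALSE)

# Read the semicolon file back with the matching reader.
back <- read.csv2(semi_file, stringsAsFactors = FALSE)
```

Pairing each writer with its matching reader (write.csv2 with read.csv2) keeps the decimal mark from being misread as text.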

Verifying Exports and Debugging

Always verify the saved file by re-importing it into R or another tool. For base R, read.csv('path/file.csv') confirms structure and types, while readr::read_csv and data.table::fread provide fast re-imports for validation. If the import shows unexpected column counts or type changes, examine the export step for row.names, NA representation, and encoding. This quick sanity check is essential for reliable downstream analysis.
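One way to script this sanity check in base R is sketched below. The data frame and the names of the individual checks are illustrative.

```r
# Hypothetical example data.
df <- data.frame(
  id    = 1:4,
  name  = c("ann", "bob", "cy", "dee"),
  score = c(1.5, 2, NA, 4),
  stringsAsFactors = FALSE
)
out_file <- file.path(tempdir(), "verify.csv")
write.csv(df, out_file, row.names = FALSE)

# Re-import and compare against the in-memory original.
back <- read.csv(out_file, stringsAsFactors = FALSE)

checks <- c(
  same_dims   = identical(dim(back), dim(df)),
  same_names  = identical(names(back), names(df)),
  same_values = isTRUE(all.equal(back, df))
)
print(checks)
```

A FALSE in same_dims usually points at row names being written; a FALSE in same_values usually points at NA representation, encoding, or type changes.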

Performance Tips for Large DataFrames

For very large datasets, prefer data.table::fwrite or readr::write_csv over base write.csv for speed. Test your export logic on a small sample of rows (for example head(df, 1000)) before running it on the full data, and consider writing in chunks, appending each chunk to the same file, if serializing everything at once strains memory. Using absolute paths and confirming write permissions before starting avoids common I/O errors. Finally, keep a consistent export schema (column order, data types) to simplify downstream processing.
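Chunked writing can be sketched in base R with write.table and append, as below. The chunk size and data are made up; in practice each chunk would typically come from a database query or another source rather than an in-memory data frame.

```r
# Hypothetical example data and chunk size.
df <- data.frame(id = 1:10, value = (1:10) / 4)
out_file   <- file.path(tempdir(), "chunked.csv")
chunk_size <- 4

starts <- seq(1, nrow(df), by = chunk_size)
for (s in starts) {
  rows  <- s:min(s + chunk_size - 1, nrow(df))
  first <- s == 1
  # The first chunk creates the file with a header; later chunks append
  # rows without repeating the header.
  write.table(df[rows, , drop = FALSE], out_file, sep = ",",
              row.names = FALSE, col.names = first, append = !first,
              qmethod = "double")
}

back <- read.csv(out_file)
```

write.table is used here because write.csv does not support appending; readr::write_csv and data.table::fwrite accept append = TRUE if you prefer those writers.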

Tools & Materials

- R installed (base R): essential for all exports

- RStudio or alternative IDE: helpful for interactive workflow

- DataFrame in memory: the dataframe you want to save

- Target directory path: must exist or be creatable

- readr package (optional): use if you prefer write_csv

- data.table package (optional): use if you prefer fwrite

- UTF-8 encoding awareness: set encoding when needed

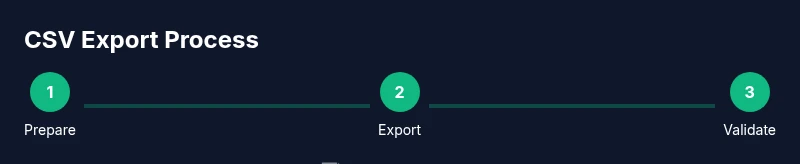

Steps

Estimated time: 15-25 minutes

Step 1: Prepare your R environment

Load your dataframe into memory and ensure the target directory exists. If you are using RStudio, set your working directory to the folder where you want the CSV saved. This prevents path errors during export.

Tip: Use getwd() to check your current directory and setwd('path') to change it.

Step 2: Choose your export method

Decide between base R write.csv, readr::write_csv, or data.table::fwrite based on dataset size and performance needs. For small datasets, write.csv works fine; for large datasets, fwrite or write_csv is typically faster.

Tip: All three functions write standard CSV that non-R tools can read; write.csv has the advantage of needing no extra packages.

Step 3: Export with base R (simple case)

Run write.csv(df, 'path/file.csv', row.names = FALSE). This avoids adding a separate index column. Consider setting na = '' if you want empty strings for missing values.

Tip: Always verify the result by re-importing with read.csv.

Step 4: Export with readr for speed

If you have readr installed, use readr::write_csv(df, 'path/file.csv') for faster serialization on large data. It never writes row names and always writes UTF-8.

Tip: write_csv has no locale argument; if you need a semicolon delimiter with a comma decimal mark, use readr::write_csv2 instead.

Step 5: Export with data.table for very large datasets

When performance is critical, data.table::fwrite(df, 'path/file.csv', row.names = FALSE) is a robust choice. It is optimized for speed and memory usage.

Tip: If you already use data.table, this avoids extra conversion steps.

Step 6: Validate and store metadata

After exporting, read the file back with a fast importer (readr::read_csv, data.table::fread, or base read.csv) to ensure structure and values are preserved. Document encoding and delimiter rules for future reproducibility.

Tip: Keep a small ‘export log’ with the file path, method, and date.
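The steps above can be sketched end to end in base R as follows. The directory name, file names, and export log columns are hypothetical; swap in readr::write_csv or data.table::fwrite for the export call if you chose one of those in the method step.

```r
# Prepare: make sure the target directory exists (illustrative location).
out_dir <- file.path(tempdir(), "exports")
dir.create(out_dir, recursive = TRUE, showWarnings = FALSE)

# Hypothetical data frame to export.
df <- data.frame(id = 1:3, group = c("a", "b", "a"), stringsAsFactors = FALSE)

# Export with the chosen method (base R here).
out_file <- file.path(out_dir, "results.csv")
write.csv(df, out_file, row.names = FALSE)

# Validate by reading the file back; stop if anything changed.
back <- read.csv(out_file, stringsAsFactors = FALSE)
stopifnot(isTRUE(all.equal(back, df)))

# Record the export in a small log, appending if the log already exists.
log_file   <- file.path(out_dir, "export_log.csv")
log_exists <- file.exists(log_file)
export_log <- data.frame(path = out_file, method = "write.csv",
                         date = format(Sys.Date()))
write.table(export_log, log_file, sep = ",", row.names = FALSE,
            col.names = !log_exists, append = log_exists, qmethod = "double")
```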

People Also Ask

What is the difference between write.csv and write.csv2?

write.csv uses a comma as the separator and a period as the decimal mark. write.csv2 uses a semicolon as the separator and a comma as the decimal mark, the convention in many European locales. For standard CSV, use write.csv unless your recipients' locale expects the semicolon form.

write.csv uses a comma, which is standard for CSV. write.csv2 uses a semicolon for certain locales.

Can I append data to an existing CSV file instead of overwriting it?

write.csv always overwrites; it ignores the append argument with a warning. To append in base R, call write.table with sep = ',', append = TRUE, and col.names = FALSE so the header is not repeated. readr::write_csv and data.table::fwrite both accept append = TRUE directly. Consider whether appending is appropriate for your workflow.

You generally overwrite when exporting a complete dataset. Appending requires extra steps, such as reading the existing file first.

How should NA values be represented in the CSV?

In base R, use na = '' to write empty fields for missing values; by default write.csv writes NA. readr::write_csv also writes NA by default and accepts the same na argument, while data.table::fwrite writes empty fields by default. Consistent NA representation is key for downstream analysis.

Use empty fields for missing values to keep compatibility across tools.

Is UTF-8 encoding necessary for CSV exports?

UTF-8 is the most portable encoding for CSV exports, especially when data contains non-ASCII characters. If you encounter garbled text, re-export with UTF-8 and verify by re-importing.

Yes, UTF-8 helps with cross-tool compatibility.

Which method is fastest for large DataFrames?

For large datasets, data.table::fwrite or readr::write_csv generally outperform base write.csv in speed and memory efficiency. Choose based on your existing workflow and dependencies.

For big data, use fwrite or write_csv for speed.

Main Points

- Use the simplest export method that meets your needs.

- Prefer UTF-8 encoding for cross-tool compatibility.

- Verify exports by re-reading to catch issues early.

- Choose the export function based on dataset size and performance.

- Document metadata to ensure reproducibility.