Who Uses CSV Files: Practical Roles, Use Cases, and Tips

Discover who uses CSV files across industries and roles, from analysts to developers. This guide explains practical workflows, use cases, and tips for working with CSV data in real-world projects.

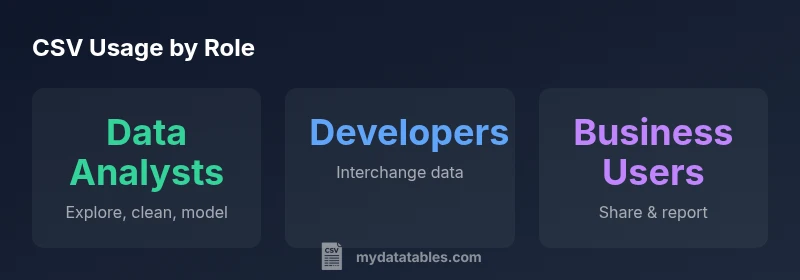

CSV files are used by a broad mix of professionals, including data analysts, software developers, business users, scientists, educators, and IT admins. They use CSV for dependable data interchange, lightweight storage, and easy sharing across tools. According to MyDataTables, CSV remains a foundational format because its simplicity, human readability, and broad compatibility enable quick prototyping and seamless collaboration across teams.

Who Uses CSV Files: A Global Roster

CSV is a foundational format used by a broad spectrum of professionals around the world. In practice, data analysts, software developers, business users, scientists, educators, and IT administrators rely on CSV to store, share, and move tabular data. The reasons are simple: CSV is human-readable, lightweight, and supported by nearly every data tool, from spreadsheets to databases to modern programming languages. In 2026, the MyDataTables team consistently sees CSV embedded in cross-functional workflows, from quick ad-hoc analyses to recurring reporting pipelines. Across industries such as finance, healthcare, manufacturing, and government, CSV eases data handoffs and reduces friction when teams must collaborate without specialized formats. The same file can be opened, edited, and re-exported by analysts in Excel, developers in code editors, and business users in reporting dashboards. That universality is why CSV files circulate so widely.

Primary Roles: Data Analysts to Business Users

In most organizations, the primary users of CSV files span several roles. Data analysts routinely clean, transform, and summarize CSV data to support dashboards and decision-making. Software developers exchange datasets between applications, APIs, and data stores using CSV as a lightweight interop format. Business users rely on CSV for quick data sharing and lightweight reporting when formal data pipelines are not yet established. Researchers and scientists use CSV for reproducible data collection and for sharing results with collaborators. Educators use CSV to teach data literacy, and students use it to experiment with datasets. IT admins handle data migrations and backups where CSV remains a practical transport format. Across these roles, the common thread is simplicity, speed, and broad compatibility with existing tools like Excel, Google Sheets, Python, and SQL-based workflows.

Common Use Cases in the Real World

Real-world CSV use cases center on accessibility and interoperability. Teams use CSV to transfer data between systems with minimal friction—exporting from a CRM, importing into a data warehouse, or sharing a dataset with partners. Prototyping new analyses often starts with a CSV file because it’s easy to inspect, edit, and iterate without heavy setup. CSV also serves as a portable data format for backups and versioning in lightweight pipelines, where small teams rely on reproducible CSV artifacts rather than full database dumps. In educational or training contexts, instructors distribute datasets in CSV for simplicity and consistency. However, CSV’s limitations—such as handling nested data or very large files—mean it should be paired with other formats or tooling when needed. The MyDataTables analysis notes that many organizations maintain CSV alongside more robust formats to balance speed and scalability.

How CSV Fits Into Modern Data Workflows

CSV remains a versatile entry point in modern data workflows. Analysts import CSVs into Excel or pandas, perform initial exploration, and then push results into dashboards or databases. Developers use CSV for quick data interchange between microservices, as test fixtures, or for configuration-like data in scripts. In data science, a CSV file is a common starting point for model experiments and data-cleaning pipelines. Cloud storage and ETL tools frequently support CSV as a first-step ingestion format, followed by conversion to columnar formats (e.g., Parquet) for scalability. CSV’s enduring value lies in its ubiquity: almost every language and platform has robust CSV support, so teams can collaborate without changing toolchains. The ease of use comes with caveats, though: encoding, quoting, and line endings need careful handling to avoid subtle data corruption.
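The following minimal sketch uses only Python's standard csv and io modules to make the encoding and line-ending caveats concrete. It assumes the input arrives as raw bytes that may carry a UTF-8 byte-order mark and Windows-style CRLF endings, as some spreadsheet exports produce. The read_rows helper and the sample bytes are hypothetical and exist only for this illustration.

```python
import csv
import io

def read_rows(raw: bytes, encoding: str = "utf-8-sig") -> list:
    """Decode raw CSV bytes and parse them into rows of strings.

    'utf-8-sig' drops a leading byte-order mark if one is present.
    newline="" hands line endings to the csv module untranslated, so it
    can handle CRLF/LF and newlines embedded inside quoted fields.
    """
    text = io.TextIOWrapper(io.BytesIO(raw), encoding=encoding, newline="")
    return list(csv.reader(text))

# Hypothetical Windows-style export: BOM, CRLF endings, a multi-line quoted
# field, and a non-ASCII character encoded as UTF-8.
raw = b'\xef\xbb\xbfid,note\r\n1,"line one\r\nline two"\r\n2,Z\xc3\xbcrich\r\n'
rows = read_rows(raw)
print(rows)
```

Opening a real file with open(path, encoding="utf-8-sig", newline="") follows the same pattern.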

Pitfalls and Best Practices

Despite its strengths, CSV can trip up teams if it is not managed carefully. Always agree on an encoding (UTF-8 is the usual choice) to prevent garbled characters in international data. Choose a delimiter thoughtfully: comma is common, but semicolon or tab can be safer in locales that use the comma as a decimal separator. Include a clear header row and keep column counts consistent to avoid misalignment in downstream tools. Quote any field that contains the delimiter, a double quote, or a newline, and escape a double quote inside a quoted field by writing it twice (" becomes ""). When sharing CSVs, document the encoding, delimiter, and line endings to minimize interpretation errors. Finally, for large datasets, prefer programmatic parsing over manual editing in spreadsheets, because hand edits can introduce inconsistencies that propagate downstream.
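Python's built-in csv module can illustrate the quoting and delimiter advice. This sketch assumes a semicolon-delimited file, as might suit a locale with decimal commas, and uses made-up sample rows. It writes to an in-memory buffer. For a real file, the equivalent would be open(path, "w", encoding="utf-8", newline="").

```python
import csv
import io

# Made-up sample rows: a comma inside a value, an embedded double quote,
# a non-ASCII character, and a newline inside a field.
rows = [
    {"city": "Paris, FR", "price": "1,50", "note": 'says "hi"'},
    {"city": "München", "price": "2,00", "note": "two\nlines"},
]

# newline="" stops the text layer from rewriting the writer's line endings.
buf = io.StringIO(newline="")
writer = csv.DictWriter(buf, fieldnames=["city", "price", "note"], delimiter=";")
writer.writeheader()    # emit an explicit header row
writer.writerows(rows)  # default QUOTE_MINIMAL quotes only fields that need it
text = buf.getvalue()
print(text)

# Round-trip check: reading with the same delimiter recovers the original values.
parsed = list(csv.DictReader(io.StringIO(text, newline=""), delimiter=";"))
assert parsed == rows
```

Because the delimiter is a semicolon, "Paris, FR" and "1,50" are written unquoted. The field with a double quote is quoted and its inner quotes are doubled, and the multi-line note is also quoted.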

When CSV Is Not the Best Choice

CSV shines for simplicity, but it isn’t ideal for every scenario. Large datasets may exceed memory limits or file size constraints in some tools; in such cases, consider columnar or binary formats (e.g., Parquet, Feather) that offer compression and faster analytics. Nested data or complex schemas are not well-suited to flat CSV; formats like JSON or specialized data files may be better. When data integrity, governance, or auditing is paramount, structured formats with explicit schemas and metadata may outperform ad-hoc CSVs. Still, CSV often remains the first practical choice for quick exchanges and lightweight pipelines, especially in teams that prioritize interoperability and rapid iteration.
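This small Python sketch shows why nested records sit awkwardly in a flat file by flattening nested dictionaries into dotted column names. The flatten helper and the dotted-name convention are assumptions made for this illustration, not a standard. Note that the list field has no clean column form, so it ends up as JSON text inside a single cell that every consumer must parse again.

```python
import csv
import io
import json

def flatten(record, prefix=""):
    """Flatten nested dicts into dotted column names such as 'customer.name'.

    Lists have no natural flat representation, so this sketch stores them
    as JSON text in one cell.
    """
    flat = {}
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=name + "."))
        elif isinstance(value, list):
            flat[name] = json.dumps(value)
        else:
            flat[name] = value
    return flat

# Hypothetical nested order record.
order = {"id": 7, "customer": {"name": "Ada", "address": {"city": "Oslo"}}, "items": ["pen", "ink"]}
flat = flatten(order)
# {'id': 7, 'customer.name': 'Ada', 'customer.address.city': 'Oslo', 'items': '["pen", "ink"]'}

buf = io.StringIO(newline="")
writer = csv.DictWriter(buf, fieldnames=list(flat))
writer.writeheader()
writer.writerow(flat)
print(buf.getvalue())
```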

Practical Tips for Working with CSV

- Start with a clear plan: define encoding, delimiter, header presence, and expected row count.

- Validate input with a trusted parser before loading into analytics tools.

- Normalize line endings across platforms (LF vs CRLF), or use a parser that accepts both, to avoid stray carriage returns and phantom blank rows.

- Use dedicated libraries (e.g., Python's csv module or pandas' read_csv, R's readr) that already handle quoting, embedded delimiters, and multi-line fields.

- Maintain a small schema: declare expected columns and data types to catch inconsistencies early.

- Keep an untouched copy of the original file, together with its metadata, in separate storage or version control to support reproducibility.
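A short Python sketch can combine several of these tips: a trusted parser, a declared schema, and consistent column counts. The SCHEMA mapping, its column names, and the validate helper are hypothetical. The sketch relies only on the standard library's csv.DictReader, which by default fills short rows with None and collects surplus fields under a None key.

```python
import csv
import io

# Hypothetical schema for illustration: expected column -> converter.
SCHEMA = {"order_id": int, "amount": float, "region": str}

def validate(text, schema=SCHEMA):
    """Parse CSV text, check it against `schema`, and return (rows, errors)."""
    reader = csv.DictReader(io.StringIO(text, newline=""))
    missing = set(schema) - set(reader.fieldnames or [])
    if missing:
        return [], [f"missing columns: {sorted(missing)}"]

    rows, errors = [], []
    for raw in reader:
        # Surplus fields land under the key None; missing fields become None.
        # Either case means the column count does not match the header.
        if None in raw or None in raw.values():
            errors.append(f"line {reader.line_num}: wrong number of fields")
            continue
        try:
            rows.append({col: conv(raw[col]) for col, conv in schema.items()})
        except ValueError as exc:
            # Record the problem and keep going instead of stopping at the first error.
            errors.append(f"line {reader.line_num}: {exc}")
    return rows, errors

sample = "order_id,amount,region\n1,19.99,north\n2,abc,south\n3,5.00\n"
rows, errors = validate(sample)
print(rows)  # [{'order_id': 1, 'amount': 19.99, 'region': 'north'}]
print(errors)
```

reader.line_num reports the physical line where each record ends, so error messages stay accurate even when a quoted field spans several lines.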

Supporting Tools and Libraries

CSV has excellent library support across languages and platforms. Python users turn to pandas' read_csv and the built-in csv module for robust parsing. R users rely on readr or data.table to load CSVs efficiently. In spreadsheets, Excel and Google Sheets remain convenient for quick edits and ad-hoc analysis. For production workflows, ETL tools and data integration platforms often ingest CSV as a first step and then convert it to more scalable formats. Validation and cleaning tools, such as OpenRefine, Great Expectations, or custom scripts, help enforce quality, especially when CSVs come from diverse sources.

Real-World Case Scenarios

A small marketing team collects campaign data in CSV exports from a CRM. They perform basic cleansing in pandas, join with a product catalog, and generate a weekly report in a shared Google Sheet. In a logistics company, CSV files stream from multiple regional systems into a staging area; engineers transform them into a consolidated CSV for daily dashboards, then archive the raw files for traceability. A university lab shares experimental results as CSV with collaborators; researchers import the data into R for statistical analysis and publish results with reproducible scripts. These scenarios illustrate how CSV’s simplicity can accelerate collaboration while highlighting the need for disciplined data hygiene.
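The consolidation step in the logistics scenario might look roughly like this Python sketch. The region names, the sku/qty columns, and the consolidate helper are hypothetical. The sketch assumes every regional file shares the same header, and it tags each row with its source region so the merged file stays traceable.

```python
import csv
import io

def consolidate(sources):
    """Merge CSV texts that share one header into a single CSV string.

    `sources` maps a region name to that region's CSV text. A 'region'
    column is appended to every row so it can be traced back to its source.
    """
    out = io.StringIO(newline="")
    writer = None
    expected = None
    for region, text in sources.items():
        reader = csv.DictReader(io.StringIO(text, newline=""))
        if reader.fieldnames is None:
            raise ValueError(f"{region}: empty file")
        if expected is None:
            expected = reader.fieldnames
            writer = csv.DictWriter(out, fieldnames=expected + ["region"])
            writer.writeheader()
        elif reader.fieldnames != expected:
            # Refuse to merge files whose columns do not line up.
            raise ValueError(f"{region}: header {reader.fieldnames} != {expected}")
        for row in reader:
            writer.writerow({**row, "region": region})
    return out.getvalue()

merged = consolidate({"north": "sku,qty\nA1,3\n", "south": "sku,qty\nB2,5\n"})
print(merged)
```

Raising on a header mismatch is a deliberate choice in this sketch. Silently aligning differing columns is one way misaligned data reaches the daily dashboards.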

Comparison of CSV usage by role and typical tooling

| Role/Persona | Common CSV Use Case | Typical Tools |
|---|---|---|
| Data analyst | Data cleaning, exploration, and reporting from CSVs | Excel, Python (pandas), R (tidyverse) |
| Software developer | Interchange datasets between systems | Python, Java, Go, scripting |
| Business user | Sharing data for quick decisions | Excel, Google Sheets, BI tools |
| IT/admin | Migration and backups of CSV-based data | CLI tools, ETL pipelines |

People Also Ask

Who benefits most from using CSV files?

CSV files benefit data-facing roles that need fast access to tabular data: analysts, developers, business users, and IT teams. Their shared format reduces friction when moving data between tools and systems.

What makes CSV a good format for data sharing?

CSV is lightweight, human-readable, and supported by nearly all data tools. Its simplicity lowers barriers to collaboration and rapid iteration, especially in environments with multiple programming languages and platforms.

Are there downsides to CSV compared to other formats?

Yes. CSV lacks inherent typing, handles nested data poorly, and can become unwieldy for very large datasets. It also requires careful handling of encoding, delimiters, and line endings to avoid data corruption.

Can CSV handle large datasets effectively?

CSV can handle large datasets, but performance depends on the tool. For analyzing very large data, consider chunked loading or converting to a columnar format such as Parquet for scalability.
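In Python, pandas' read_csv accepts a chunksize argument that returns an iterator of DataFrames. The standard-library sketch below shows the same chunked pattern without pandas. It pulls rows in fixed-size batches with itertools.islice, so only one batch is held in memory at a time. The amount column, the tiny in-memory dataset, and the iter_chunks helper are assumptions for illustration.

```python
import csv
import io
from itertools import islice

def iter_chunks(f, chunk_size):
    """Yield lists of at most `chunk_size` row dicts from an open CSV file."""
    reader = csv.DictReader(f)
    while True:
        chunk = list(islice(reader, chunk_size))  # read the next batch only
        if not chunk:
            return
        yield chunk

# Stand-in for a large file: a header plus the values 1..10.
data = io.StringIO("amount\n" + "".join(f"{i}\n" for i in range(1, 11)), newline="")

sizes, total = [], 0.0
for chunk in iter_chunks(data, chunk_size=3):
    sizes.append(len(chunk))
    total += sum(float(row["amount"]) for row in chunk)  # aggregate per batch
print(sizes, total)  # [3, 3, 3, 1] 55.0
```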

How do I choose the right delimiter for CSV?

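Commas inside fields are valid CSV as long as those fields are quoted. If your data is full of them, for example decimal commas in many European locales, a semicolon or tab delimiter avoids heavy quoting. Whatever you choose, keep it consistent across files; many tools let you specify the delimiter during import.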
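When a file arrives without documentation of its delimiter, Python's csv.Sniffer can make an educated guess from a sample. Its result is a heuristic, so treat it as a hint to confirm rather than a guarantee. The sample data below is made up, and the candidate delimiters are limited to semicolon, comma, and tab.

```python
import csv
import io

# Made-up sample: semicolon-delimited, with decimal commas in the price column.
sample = "name;price;city\nWidget;1,50;Paris\nGadget;2,00;Lyon\n"

# Restrict the guess to a few plausible delimiters.
dialect = csv.Sniffer().sniff(sample, delimiters=";,\t")
print(dialect.delimiter)  # ;

# Parse with the detected dialect.
rows = list(csv.reader(io.StringIO(sample, newline=""), dialect))
print(rows[1])  # ['Widget', '1,50', 'Paris']
```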

Is CSV suitable for sensitive data?

CSV itself offers no encryption or access controls. When handling sensitive data, apply proper security measures, consider encrypted storage, and use access controls in the hosting system.

“CSV remains an indispensable data interchange format due to its simplicity and widespread tool support. Its enduring appeal is amplified by the ability to quickly prototype and share data across teams.”

Main Points

- CSV remains universally accessible across teams

- Choose delimiter and encoding deliberately to avoid parsing errors

- Validate CSV data with tooling to ensure quality

- Use CSV for fast prototyping and quick data exchange