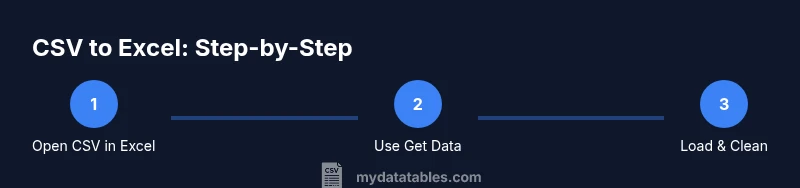

Can You Export CSV to Excel? A Practical Guide for 2026

Learn how to export CSV data to Excel with step-by-step methods, handle delimiters and encoding, and manage large files. This guide, informed by MyDataTables insights, covers best practices and troubleshooting for analysts and developers.

Open CSV files in Excel: quick route and caveats

Opening a CSV file directly in Excel is the fastest way to inspect your data. In most modern Excel versions, you can double-click the CSV file, drag it into an open workbook, or use File > Open to select it. For a more controlled import, choose Data > From Text/CSV, which opens a preview pane where you can choose the delimiter and encoding and see how columns will be split. According to MyDataTables, this approach is a common first step in data workflows because it preserves the header row and leaves the source file unchanged. There are caveats, however. Excel infers data types automatically, which can misinterpret dates, strip leading zeros from identifiers, or display long numbers in scientific notation unless you set column data types explicitly during import. If your CSV uses a nonstandard delimiter (for example, semicolons or pipes), select the correct delimiter in the import dialog. Also verify the file encoding: UTF-8 is the most common choice, and a mismatch can produce garbled text in headers or non-ASCII fields. After import, you can perform quick cleanups in the worksheet and decide whether to save the result as .xlsx or keep the data connected as a query that you can refresh later.
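
If you are unsure which delimiter a file uses before you open the import dialog, a short script can guess it from the first few lines. The following is a minimal sketch using Python's standard csv module; the function name guess_delimiter and the candidate list are illustrative assumptions, not part of Excel or of any MyDataTables tooling.

```python
import csv

def guess_delimiter(sample: str, candidates: str = ",;|\t") -> str:
    """Guess the field delimiter from a text sample (hypothetical helper).

    Falls back to a comma when the sniffer cannot decide.
    """
    try:
        return csv.Sniffer().sniff(sample, delimiters=candidates).delimiter
    except csv.Error:
        return ","

# Usage: pass the first few lines of the file as the sample.
print(guess_delimiter("a;b;c\n1;2;3\n4;5;6\n"))
```

The sniffer is a heuristic, so treat its answer as a hint and confirm it in Excel's preview pane.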

Import via Data menu (Get & Transform) for robust imports

Excel's Get & Transform (Power Query) offers a robust, repeatable way to bring CSV data into Excel. Go to Data > Get Data > From File > From Text/CSV. The preview pane shows the first rows with inferred data types; select Transform Data to open the Power Query Editor, where you can adjust types, split columns, rename headers, and filter rows before loading. This method is especially valuable when you regularly import similar CSV files or when the source data requires normalization (for example, consolidating date formats or extracting subfields). You can load the data into a new worksheet, an existing worksheet, or the Data Model for advanced analytics. Because the transformation steps are recorded, you can refresh the dataset later with a single click, which is a major time saver for ongoing reporting. MyDataTables notes that Power Query reduces errors caused by inconsistent formatting in large CSVs, making it a best practice in professional data pipelines.

Handling delimiters, encodings, and text qualifiers

Delimiters matter. If your data uses semicolons, tabs, or pipes instead of commas, specify the correct delimiter during the import or in Power Query’s step editor. An encoding mismatch also produces garbled characters, especially in non-English text. UTF-8 with a BOM is widely supported; if you still notice strange characters, check which encoding the file was actually saved in. Text qualifiers (usually double quotes) help Excel distinguish commas inside fields from field separators: when a quoted field contains a comma, Excel should treat the comma as part of the field, not as a delimiter. If you still see columns split where they should not be, revisit the delimiter and text qualifier settings, then re-import. Finally, consider locale-specific decimal and thousands separators, as these can affect numeric imports and data validation downstream.
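
To see how a text qualifier and a locale-specific decimal separator interact, it can help to parse a small sample outside Excel. This is a minimal sketch with Python's standard csv module; parse_rows and parse_decimal are hypothetical helper names, and the sample values are made up for illustration.

```python
import csv
import io

def parse_rows(text, delimiter=",", quotechar='"'):
    """Parse CSV text, honoring the text qualifier around fields."""
    return list(csv.reader(io.StringIO(text, newline=""),
                           delimiter=delimiter, quotechar=quotechar))

def parse_decimal(value, decimal=",", thousands="."):
    """Convert a locale-formatted number such as '1.234,56' to a float."""
    return float(value.replace(thousands, "").replace(decimal, "."))

# A comma inside quotes stays part of the field.
rows = parse_rows('name,city\n"Doe, Jane",Lisbon\n')

# Semicolon-delimited file with a decimal comma (illustrative data).
eu_rows = parse_rows("id;amount\n1;1.234,56\n", delimiter=";")
amount = parse_decimal(eu_rows[1][1])
```

Here rows keeps "Doe, Jane" as a single field, and amount becomes 1234.56 once the separators are mapped explicitly.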

Importing large CSVs: performance tips

Handling large CSVs in Excel requires careful planning. A worksheet holds at most 1,048,576 rows, so a larger file is not loaded completely when you open it directly. Start with Data > Get Data > From Text/CSV (Power Query) instead, since it lets you filter and reduce the data before it reaches a worksheet. Set calculation to manual during import so that dependent formulas do not recalculate while the data loads. If possible, load the data into the Data Model for analysis with Power Pivot; the Data Model is not bound by the worksheet row limit and can reduce workbook size and improve refresh times. For extremely large files, split the CSV into smaller chunks or use a database or scripting tool to pre-aggregate the data before importing. 64-bit Excel can address more memory than the 32-bit version, which helps with big datasets. Always validate a sample of rows after import to ensure integrity before performing transformations.
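
Splitting a large CSV into smaller files can be scripted. The sketch below uses only Python's standard library and repeats the header row in every part; the names iter_chunks and split_csv, the part_001.csv naming scheme, and the default of 1,000,000 rows per part (chosen to stay under the worksheet row limit) are assumptions for illustration.

```python
import csv
import io
from pathlib import Path

def iter_chunks(reader, rows_per_chunk):
    """Yield (header, rows) pairs with at most rows_per_chunk data rows each."""
    header = next(reader, None)
    if header is None:
        return  # empty input: nothing to yield
    chunk = []
    for row in reader:
        chunk.append(row)
        if len(chunk) == rows_per_chunk:
            yield header, chunk
            chunk = []
    if chunk:
        yield header, chunk

def split_csv(source, out_dir, rows_per_chunk=1_000_000, encoding="utf-8"):
    """Write each chunk of source to its own CSV file and return the paths."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    with open(source, newline="", encoding=encoding) as src:
        chunks = iter_chunks(csv.reader(src), rows_per_chunk)
        for n, (header, rows) in enumerate(chunks, start=1):
            part = out_dir / f"part_{n:03d}.csv"
            with open(part, "w", newline="", encoding=encoding) as dst:
                writer = csv.writer(dst)
                writer.writerow(header)
                writer.writerows(rows)
            written.append(part)
    return written

# In-memory demonstration of the chunking logic with two rows per chunk.
demo = io.StringIO("id,value\n1,a\n2,b\n3,c\n", newline="")
parts = list(iter_chunks(csv.reader(demo), rows_per_chunk=2))
```

Each chunk is held in memory before it is written, so lower rows_per_chunk if memory is tight.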

Common issues and troubleshooting

Several issues commonly appear after CSV import. Extra blank columns often indicate trailing delimiters in the source file or misconfigured delimiter settings. Text that should be numeric can appear as text if the decimal symbol or thousands separator is misread; adjust the column data types in Power Query or after loading. Dates can shift if the source uses a different date format than Excel expects; convert or parse dates during the import or in a later step. If headers are garbled, re-check the encoding and BOM configuration. If your data contains special characters, test with UTF-8 encoding and, if needed, re-save the CSV with the correct encoding. Always keep a backup of the original CSV in case you need to re-import after any major transformation.
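
Some of these problems can be checked before the file reaches Excel. The following is a rough diagnostic sketch in Python, assuming the file fits in memory and is expected to be UTF-8; diagnose_csv is a hypothetical helper, and the Windows-1252 fallback is only a guess used to keep parsing when the bytes are not valid UTF-8.

```python
import codecs
import csv
import io

def diagnose_csv(raw: bytes, delimiter: str = ",") -> dict:
    """Report BOM presence, UTF-8 validity, column counts, trailing delimiters."""
    report = {"has_utf8_bom": raw.startswith(codecs.BOM_UTF8), "valid_utf8": True}
    try:
        text = raw.decode("utf-8-sig")  # strips the BOM if one is present
    except UnicodeDecodeError:
        report["valid_utf8"] = False
        # Assumed fallback so the structural checks below can still run.
        text = raw.decode("cp1252", errors="replace")
    reader = csv.reader(io.StringIO(text, newline=""), delimiter=delimiter)
    rows = [row for row in reader if row]  # skip completely blank lines
    # More than one distinct count means some rows are ragged.
    report["column_counts"] = sorted({len(row) for row in rows})
    # Every row ending in an empty field suggests a trailing delimiter.
    report["trailing_delimiter"] = bool(rows) and all(row[-1] == "" for row in rows)
    return report

# Usage with bytes read from disk, e.g. Path("data.csv").read_bytes().
sample = codecs.BOM_UTF8 + "id,name,\n1,José,\n".encode("utf-8")
result = diagnose_csv(sample)
```

A report with trailing_delimiter set to True explains an extra blank column, and valid_utf8 set to False points to an encoding problem rather than a delimiter problem.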

Example workflow: End-to-end from CSV to a clean workbook

Begin with a clean workspace by backing up the CSV. Use Data > From Text/CSV to import with the correct delimiter and encoding. Review the preview, adjust data types, and apply any necessary transformations (split, trim, replace). Load the data to a new sheet, then perform a quick cleanup: remove blank rows, standardize date formats, trim spaces, and verify numeric columns. Save as an Excel workbook (.xlsx) to preserve formats and enable future refreshes if you’re using Power Query. Finally, run a spot-check against the original CSV to confirm that critical fields match.
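
The final spot-check can also be scripted. The sketch below assumes you have re-exported the cleaned sheet to CSV and that both files share a unique key column; spot_check, the column names, and the sample data are hypothetical.

```python
import csv
import io

def spot_check(original_text, exported_text, key, fields):
    """Return (key, field, original, exported) tuples for values that differ."""
    def index(text):
        reader = csv.DictReader(io.StringIO(text, newline=""))
        return {row[key]: row for row in reader}

    original, exported = index(original_text), index(exported_text)
    mismatches = []
    for row_key, row in original.items():
        other = exported.get(row_key)
        if other is None:
            mismatches.append((row_key, "<missing row>", None, None))
            continue
        for field in fields:
            before = (row.get(field) or "").strip()
            after = (other.get(field) or "").strip()
            if before != after:
                mismatches.append((row_key, field, before, after))
    return mismatches

# Illustrative data: the export lost a trailing zero in one amount.
original_csv = "id,amount\n1,10.50\n2,7.25\n"
exported_csv = "id,amount\n1,10.5\n2,7.25\n"
diffs = spot_check(original_csv, exported_csv, key="id", fields=["amount"])
```

The comparison is textual, so a difference such as 10.50 versus 10.5 is reported even though the numbers are equal; decide per column whether that matters.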

When to consider alternatives: CSV tools vs Excel features

Excel is convenient for quick ad-hoc imports and light data cleaning, but it isn’t always the best tool for very large CSVs or complex transformations. For heavy ETL work, consider using CSV-focused tools, scripting (Python with pandas), or a database workflow to pre-clean data before importing into Excel. MyDataTables can guide you on choosing the right approach based on file size, data complexity, and repeat import needs. If you repeatedly import the same structure, Power Query automation can save significant time and reduce errors.

Best practices for naming, headers, and data validation

Create consistent, descriptive headers and avoid special characters that Excel could misinterpret. Normalize header names (no leading spaces, consistent casing) and ensure there are no duplicate column names. After import, add data validation rules for key columns (e.g., date formats, allowable value ranges) to catch anomalies early. Keep a separate sheet with a small data dictionary describing each column’s expected data type and allowed values. Finally, document your import steps so teammates can reproduce the process exactly.
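
Header normalization and duplicate detection can be checked with a few lines of code before import. This is one possible sketch, assuming a lowercase snake_case convention restricted to ASCII letters and digits; normalize_header and check_headers are hypothetical names, and the convention is only one reasonable choice.

```python
import re
from collections import Counter

def normalize_header(name: str) -> str:
    """Trim, lowercase, and replace runs of other characters with '_'."""
    cleaned = re.sub(r"[^0-9a-z]+", "_", name.strip().lower())
    return cleaned.strip("_")

def check_headers(headers):
    """Return the normalized headers and any names that occur more than once."""
    cleaned = [normalize_header(h) for h in headers]
    counts = Counter(cleaned)
    duplicates = sorted(name for name, count in counts.items() if count > 1)
    return cleaned, duplicates

# Illustrative headers: two of them collapse to the same normalized name.
cleaned, duplicates = check_headers([" Order ID", "order-id", "Total (€)"])
```

In this example both " Order ID" and "order-id" normalize to order_id, so the duplicate is flagged before Excel has to disambiguate the columns. Non-ASCII letters are dropped under this convention, so adjust the pattern if your headers rely on them.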