Convert Comma Separated CSV to Excel: A Practical Guide

Learn practical methods to convert comma separated CSV files into Excel workbooks. This guide covers delimiter handling, encoding, data integrity, and step-by-step workflows, with expert tips from MyDataTables to help data analysts and developers.

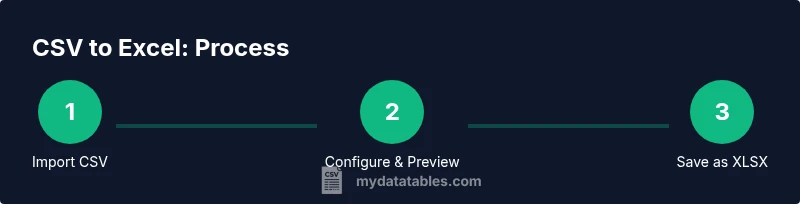

Goal: convert a comma-separated CSV to an Excel workbook. Use Excel’s Data > Get Data > From Text/CSV, or open the CSV directly, set the delimiter to comma, then load the data and save as .xlsx. This approach preserves columns and data types and minimizes cleanup. The Get Data route runs on Power Query, which also suits larger files; Excel's legacy Text Import Wizard is an alternative.

Why converting CSV to Excel matters

CSV files are a universal interchange format: simple, lightweight, and easy to generate. However, Excel offers richer data handling — structured tables, data types, formatting, and analysis tools — so many users prefer working in Excel once the data is loaded. The transition from a plain comma-delimited file to a fully functional workbook can be straightforward, but pitfalls exist. Formatting, delimiter mismatches, character encoding, and locale-specific settings can cause misaligned columns or garbled text if not addressed. According to MyDataTables, ensuring a consistent comma delimiter and proper text encoding at import reduces errors and yields a clean data grid that you can sort, filter, and analyze immediately. A good CSV-to-Excel workflow also helps data analysts, developers, and business users maintain reproducible results and audit trails when sharing datasets.

CSV is best viewed as a snapshot rather than a finished dataset. When importing into Excel, you gain the ability to apply data types, create tables, apply formulas, and connect to other sources. The MyDataTables team recommends starting with a quick validation—confirm that the CSV uses comma as the delimiter (not a semicolon in some locales), confirm the file is UTF-8 if it contains non-ASCII characters, and note any quoted fields that might include delimiters. This preparation reduces the need for later cleanup and ensures the workbook reflects the original information accurately. Finally, remember that saving as .xlsx preserves Excel-specific features that CSV cannot, such as formulas, formatting, and tables.
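The quick validation described above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a MyDataTables tool: the function name `preflight`, the candidate delimiter list, and the Latin-1 fallback are all assumptions made for the example.

```python
import csv
import io

CANDIDATE_DELIMITERS = ",;\t|"   # assumed set of delimiters worth checking
UTF8_BOM = b"\xef\xbb\xbf"


def preflight(raw: bytes) -> dict:
    """Report basic encoding and delimiter facts about raw CSV bytes."""
    has_bom = raw.startswith(UTF8_BOM)
    try:
        text = raw.decode("utf-8-sig")   # strips the BOM if one is present
        is_utf8 = True
    except UnicodeDecodeError:
        text = raw.decode("latin-1")     # fallback only so we can keep inspecting
        is_utf8 = False

    # Parse the header once per candidate; the delimiter that yields the most
    # fields is taken as the best guess (quotes are respected by csv.reader).
    header = text.splitlines()[0] if text else ""
    field_counts = {
        d: len(next(csv.reader([header], delimiter=d), []))
        for d in CANDIDATE_DELIMITERS
    }
    delimiter = max(field_counts, key=field_counts.get)

    rows = list(csv.reader(io.StringIO(text, newline=""), delimiter=delimiter))
    width = len(rows[0]) if rows else 0
    return {
        "is_utf8": is_utf8,
        "has_bom": has_bom,
        "delimiter": delimiter,
        # 1-based row numbers whose field count differs from the header
        "ragged_rows": [n for n, row in enumerate(rows, start=1) if len(row) != width],
        # quoted fields that contain the delimiter as literal text
        "fields_with_embedded_delimiter": sum(delimiter in f for row in rows for f in row),
    }
```

To check a file, pass its bytes, for example `preflight(Path("data.csv").read_bytes())` with `Path` imported from `pathlib` (the file name is a placeholder). A non-comma delimiter, a failed UTF-8 decode, or any ragged rows are signals to fix the file before importing.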

This knowledge empowers you to approach CSV imports with confidence, knowing you can reproduce consistent results across environments and teams.

Core methods to convert CSV to Excel

There are several reliable paths to move from a comma-separated CSV to a polished Excel workbook. Each method has its ideal use case, depending on file size, complexity, and whether you need repeatable automation:

- Open directly in Excel: For small CSVs without complex data types, just double-click the file or use File > Open to view data immediately. Excel will typically parse the file into columns if the delimiter is recognized. This quick path is best for one-off, simple imports.

- Get Data / From Text/CSV (Power Query): This built-in Excel feature is ideal for larger files and repeated imports. It offers robust control over delimiter, encoding, data types, and error handling. You can also refresh the data later without re-importing the file.

- Text Import Wizard (legacy method): Older Excel versions expose this wizard by default; in newer versions it can be re-enabled under File > Options > Data. It walks you through delimiter and data-type settings step by step. Use this route if you need precise column settings from the outset.

- Google Sheets as a bridge: If Excel is unavailable, you can import the CSV into Google Sheets and then download as an Excel workbook. This is convenient for quick conversions or collaboration in the browser.

- Scripting and automation: For frequent conversions, automation using Power Query (in Excel), Python (pandas), or PowerShell scripts can standardize the process and ensure consistency across datasets. This approach is recommended when CSV structures vary or when you need repeatable pipelines.

MyDataTables emphasizes choosing the method that aligns with data complexity and repetition. When consistency matters, a Power Query-based import delivers the most repeatable results and minimizes manual cleanup. When speed is paramount and the CSV is small, opening in Excel remains perfectly adequate.

In practice, you may combine approaches: perform a quick open for a quick check, then switch to Power Query for the final load to a clean, formatted table. This hybrid approach leverages the strengths of both methods and reduces surprises in downstream analysis.
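For the scripting route mentioned in the list above, a pandas-based conversion might look like the following sketch. It assumes pandas and an Excel writer engine such as openpyxl are installed; the function names `xlsx_path_for` and `convert_csv_to_xlsx` and the sheet name are hypothetical. Reading every column as text is a deliberately cautious choice, not the only reasonable one.

```python
from pathlib import Path


def xlsx_path_for(csv_path) -> Path:
    """Return the workbook path that sits next to the CSV (same name, .xlsx)."""
    return Path(csv_path).with_suffix(".xlsx")


def convert_csv_to_xlsx(csv_path, sheet_name="data") -> Path:
    """Read a comma-delimited CSV and write it to an .xlsx workbook."""
    # Imported here so the helper above works even without pandas installed.
    import pandas as pd  # third-party; to_excel also needs an engine like openpyxl

    df = pd.read_csv(
        csv_path,
        sep=",",                # explicit comma delimiter
        encoding="utf-8-sig",   # accepts UTF-8 with or without a BOM
        dtype=str,              # keep every value as text (leading zeros survive)
        keep_default_na=False,  # do not turn strings such as "NA" into missing values
    )
    out_path = xlsx_path_for(csv_path)
    df.to_excel(out_path, sheet_name=sheet_name, index=False)
    return out_path
```

Because every column is loaded as text, numeric columns arrive in Excel as text cells. If you want numbers stored as numbers, pass a per-column `dtype` mapping instead of `dtype=str`.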

Understanding delimiters, encodings, and regional settings

Delimiter handling is a common pitfall when converting CSV to Excel. Although CSV stands for comma-separated values, many regions use the semicolon as the default delimiter because the comma serves as the decimal separator (for example, in some European locales). If Excel misreads the delimiter, each row collapses into a single column. Always validate the delimiter by inspecting the preview during import. Character encoding matters too: UTF-8 is the most widely supported encoding and preserves non-ASCII characters such as accented letters and symbols. If your CSV includes such characters, save the file as UTF-8 with a BOM when possible, or choose the correct encoding in the import dialog.
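If a file arrives in a legacy encoding, one way to re-save it as UTF-8 with a BOM is sketched below. The function name `to_utf8_with_bom` is hypothetical, and the default source encoding of Windows-1252 is an assumption; substitute whatever encoding your source system actually uses.

```python
def to_utf8_with_bom(raw: bytes, source_encoding: str = "cp1252") -> bytes:
    """Return the CSV bytes re-encoded as UTF-8 with a leading BOM."""
    try:
        # Already UTF-8 (with or without a BOM): decode and drop any BOM.
        text = raw.decode("utf-8-sig")
    except UnicodeDecodeError:
        # Not valid UTF-8, so fall back to the assumed legacy encoding.
        text = raw.decode(source_encoding)
    # The "utf-8-sig" codec writes the BOM at the start of the output.
    return text.encode("utf-8-sig")
```

Applied to a file, this could be `Path("out.csv").write_bytes(to_utf8_with_bom(Path("in.csv").read_bytes()))`, with `Path` from `pathlib` and both file names as placeholders. Write to a new file rather than overwriting the original so the backup stays intact.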

Quoted fields can also complicate parsing. A field enclosed in quotation marks may contain the delimiter itself, so the parser must treat the quotation mark as a text qualifier and read any delimiter inside the quotes as literal text rather than as a field separator. Excel’s import options allow you to specify the text qualifier and to skip or keep empty columns as appropriate. If a dataset includes dates, times, or special numeric formats, you’ll want Excel to interpret these values properly. Power Query applies an explicit type-detection step that assigns data types (text, number, date) during the import, and you can review or change that step, which reduces the risk of misinterpreted values.
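The effect of a text qualifier is easy to see by comparing a naive split on commas with a quote-aware parser. The sample row below is made up for illustration; the parser is Python's standard `csv` module.

```python
import csv
import io

# One header row and one data row; the first field contains a comma and the
# last contains doubled quotes, which is how CSV escapes a literal quote.
sample = 'name,city,note\n"Smith, John",Boston,"said ""hi"""\n'

# Naive approach: split on every comma, ignoring the quotes.
naive = sample.splitlines()[1].split(",")

# Quote-aware approach: the quote acts as a text qualifier.
parsed = list(csv.reader(io.StringIO(sample, newline="")))[1]

print(len(naive), naive)    # four pieces: the name is cut in two
print(len(parsed), parsed)  # three fields, matching the header
```

The naive split produces four pieces for a three-column file, which is the same misalignment you see in Excel when the text qualifier is ignored or set incorrectly.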

From a broader perspective, aligning delimiter and encoding choices with standard practices reduces downstream data quality issues and makes it easier to share the resulting workbook with teammates who may use different regional settings. MyDataTables recommends establishing a small import spec (delimiter, encoding, and data types) that you apply to every CSV you convert, ensuring consistency across projects.
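A small import spec of this kind can be written down as data so it is easy to share and version. The sketch below is one possible shape, not a MyDataTables format: the class name `ImportSpec`, its field names, and the type labels are all assumptions.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ImportSpec:
    """Delimiter, encoding, and per-column types for one kind of CSV."""
    delimiter: str = ","
    encoding: str = "utf-8-sig"
    # Maps column name to an agreed label such as "text", "number", or "date".
    column_types: dict = field(default_factory=dict)

    def to_json(self) -> str:
        """Serialize the spec so it can live next to the data or in a repo."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, text: str) -> "ImportSpec":
        """Rebuild a spec from its JSON form."""
        return cls(**json.loads(text))


# Example spec for a hypothetical orders file.
orders_spec = ImportSpec(column_types={"zip": "text", "order_date": "date"})
```

Storing the spec as JSON keeps it readable for teammates who configure the import by hand in Excel as well as for scripts that load it.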

Practical workflow: From a comma-delimited CSV to a clean Excel workbook

When you’re ready to convert, follow a clean, repeatable workflow that minimizes errors and ensures the final workbook is analysis-ready. This workflow supports both ad hoc conversions and repeatable pipelines for ongoing data chores.

- Prepare the CSV: Confirm the delimiter is a comma and the file is encoded in UTF-8 if possible. If your dataset uses special characters, note them for proper handling during import. Back up the original CSV before making changes.

- Choose your import path: For small files, opening in Excel can be fastest. For larger or more complex files, use Get Data / From Text/CSV (Power Query) to control parsing and data types.

- Import and configure: In the import dialog, set Delimiter to Comma and check that the data preview splits into the expected columns. For columns whose values must be kept exactly as written, such as ZIP codes with leading zeros, set the column type to Text; set genuinely numeric columns to a number type.

- Load options: Decide whether to load into a worksheet as a table or create a data model for advanced analysis. Tables are excellent for filtering and applying formulas consistently.

- Save correctly: Save the workbook as .xlsx to preserve formulas, formatting, and table structures. If you anticipate re-imports, consider saving a template with predefined query steps and data types, ready to refresh with new CSVs.

This workflow keeps your data tidy and accessible, while allowing you to scale the process to larger datasets or repeated tasks. It aligns with MyDataTables’ guidance on reproducible CSV-to-Excel conversions that support robust data analysis.

Data integrity tips: preserving types, dates, and numbers

Preserving data integrity during CSV-to-Excel conversion is critical for accurate analysis. Here are practical tips to prevent common issues:

- Use the correct data type for each column during import. Text for identifiers with leading zeros (like ZIPs), dates for date columns, and numbers for numeric fields. This prevents Excel from auto-converting values into unintended formats.

- Preserve dates by verifying the CSV’s date format and selecting the appropriate date type during import. If dates are ambiguous, import as text first, then convert with a controlled date transformation in Excel.

- Handle large numbers and scientific notation mindfully. Excel can interpret long numerics in scientific notation; import as text if you need to retain exact strings.

- Avoid mixed data types in a single column. If a column contains both numbers and text, importing as text ensures no data loss, and you can convert as needed afterward.

- Maintain encoding consistency. If you work with multilingual data, UTF-8 (with BOM when possible) helps avoid mojibake and unreadable characters.

Incorporating these practices during the initial import reduces the need for post-import cleanup and improves the reliability of downstream analyses. MyDataTables emphasizes documenting your import rules so teammates can replicate the same transformation steps across datasets.
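The tips above on leading zeros, long numerics, and mixed columns can be turned into a rough pre-import check. The following is a heuristic sketch, not an Excel feature: the function name `suggest_type` is hypothetical, and the 15-digit threshold reflects the fact that Excel keeps 15 significant digits for numbers (the check counts digit characters, which is slightly more cautious).

```python
import re

EXCEL_MAX_DIGITS = 15  # Excel stores numbers with 15 significant digits


def suggest_type(values) -> str:
    """Suggest "text" or "number" for a column given its raw CSV strings."""
    non_empty = [v.strip() for v in values if v.strip()]
    if not non_empty:
        return "text"
    for value in non_empty:
        # Anything that is not a plain integer or decimal makes the column mixed.
        if not re.fullmatch(r"-?\d+(\.\d+)?", value):
            return "text"
        digits = value.lstrip("-")
        # A leading zero (other than "0" or "0.x") would be dropped as a number.
        if len(digits) > 1 and digits.startswith("0") and not digits.startswith("0."):
            return "text"
        # Too many digits to survive as an exact number.
        if len(digits.replace(".", "")) > EXCEL_MAX_DIGITS:
            return "text"
    return "number"
```

For example, a ZIP column such as `["02134", "10001"]` and a 16-digit account number both come back as "text", while `["19.99", "5", "0.5"]` comes back as "number". Dates are out of scope here and are best handled with an explicit format.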

Troubleshooting common issues

CSV-to-Excel conversions often encounter a handful of recurring challenges. Here are the most common problems and practical fixes:

- Misaligned columns after import: Confirm the delimiter and encoding in the import wizard. If necessary, try the From Text/CSV path with a preview to correct misparsing before loading.

- Garbled text or replacement characters: Check encoding. Use UTF-8 (with BOM) if the file contains non-ASCII characters. Re-import with the correct encoding if needed.

- Missing or truncated data: Ensure the source CSV isn’t corrupted and that the file isn’t opened by another program during import. Use a fresh copy for the import, and consider loading via Power Query to isolate data steps.

- Date or time misinterpretation: Explicitly set the column type to Date/Time during import, or perform a post-import conversion to the correct date format.

- Large CSV performance issues: A worksheet holds at most 1,048,576 rows, so a file with millions of rows cannot load into a single sheet. Use Power Query to filter or aggregate before loading (or load to the data model), or use a script that processes the data in chunks.

If issues persist, break the task into smaller batches and validate each batch before combining into a final workbook. This incremental approach makes anomalies easier to pinpoint and repair.
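Splitting into batches can be scripted so that every batch keeps the header row. This is a minimal standard-library sketch; the function name `split_rows` is hypothetical, and the row limit constant is Excel's documented worksheet maximum.

```python
import csv
import io
from itertools import islice

EXCEL_MAX_ROWS = 1_048_576  # rows per worksheet, header included


def split_rows(reader, chunk_size):
    """Yield lists of rows: the header followed by up to chunk_size data rows."""
    header = next(reader, None)
    if header is None:
        return  # empty input, nothing to yield
    while True:
        chunk = list(islice(reader, chunk_size))
        if not chunk:
            return
        yield [header] + chunk


# Small in-memory demonstration with a batch size of two data rows.
demo = io.StringIO("id,value\n1,a\n2,b\n3,c\n", newline="")
batches = list(split_rows(csv.reader(demo), 2))
```

With a real file, open it with `newline=""` and an explicit encoding, then write each batch to its own CSV or sheet. A `chunk_size` of at most `EXCEL_MAX_ROWS - 1` leaves room for the header in each worksheet.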

Advanced options and automation with MyDataTables tools

For repetitive CSV-to-Excel workflows, automation can save time and reduce human error. Excel’s Power Query records import steps that you can refresh with new CSVs, producing updated workbooks with minimal manual intervention. Teams that convert files frequently can standardize the process with a small pipeline built on Power Query or on lightweight Python or PowerShell scripts. MyDataTables advocates building repeatable import specifications (delimiter, encoding, and data types) and documenting them in a shared guide so teammates can reproduce the same results across projects. If your datasets vary in structure, a template workbook with predefined queries for common column types can speed up turnaround. Finally, consider integrating these workflows with the rest of your data tooling so that CSV sources and downstream Excel analyses stay consistent. MyDataTables’ guidance on CSV-to-Excel workflows emphasizes reproducibility and clarity.
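One small building block for a script-based pipeline is deciding which files still need converting. The sketch below assumes a convention in which each CSV has an .xlsx workbook of the same name beside it; that convention and the function name `pending_conversions` are assumptions for illustration.

```python
from pathlib import Path


def pending_conversions(folder):
    """Return CSV paths whose .xlsx sibling is missing or older than the CSV."""
    pending = []
    for csv_path in sorted(Path(folder).glob("*.csv")):
        xlsx_path = csv_path.with_suffix(".xlsx")
        # Convert when no workbook exists yet or the CSV changed after it.
        if (not xlsx_path.exists()
                or xlsx_path.stat().st_mtime < csv_path.stat().st_mtime):
            pending.append(csv_path)
    return pending
```

A scheduled job could loop over the returned paths and run whatever conversion step your team has standardized on, leaving up-to-date workbooks untouched.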

Tools & Materials

- Excel (desktop or Excel for Microsoft 365): Power Query (Get Data) features are ideal for robust imports.

- Alternative spreadsheet app (e.g., Google Sheets): Useful if Excel is unavailable; can export to XLSX.

- CSV file prepared with comma delimiters: Ensure encoding is UTF-8 if possible.

- Text editor (optional): Can help inspect or modify encoding or line endings.

- Backup copy of the CSV: Protects against data loss during import adjustments.

Steps

Estimated time: 15-25 minutes

1. Open a new workbook

Launch Excel and create a fresh workbook to receive the imported data. This keeps prior work unaffected and reduces the risk of overwriting important content.

Tip: Save a template with predefined import steps for future CSVs.

2. Choose the import path

If using Power Query, go to Data > Get Data > From Text/CSV. For a quick view, you can also open the CSV directly in Excel.

Tip: Power Query is preferable for large or recurring imports.

3. Select the CSV file

Browse to the CSV file and select it to load a preview. Verify that the data aligns with the expected columns.

Tip: If the preview looks wrong, re-open and adjust the delimiter/encoding settings.

4. Configure delimiter and encoding

Set Delimiter = comma and choose UTF-8 encoding if available. Review the data in the preview to ensure correct column separation.

Tip: If the file uses a different encoding, select the appropriate option and re-import.

5. Review column data types

Indicate the proper type for each column (Text for IDs with leading zeros, Date for dates, Number for numeric fields). This prevents misinterpretation after import.

Tip: Convert tricky columns after import if necessary using Text to Columns or formulas.

6. Load the data

Choose to load as a table or as a data model, depending on your analysis needs. Tables support filtering and structured references.

Tip: Loading as a table makes formulas and pivots easier to manage.

7. Save as Excel workbook

Save the file with a .xlsx extension to preserve formatting, formulas, and table structures.

Tip: Consider creating a template for future CSV imports with similar structure.

8. Optional: refresh or automate

If using Power Query, you can refresh the data later with new CSVs without re-importing manually.

Tip: Document the refresh steps and keep the data source location consistent.

People Also Ask

What is the simplest way to convert a CSV to Excel?

Open the CSV in Excel or import via Get Data/From Text/CSV to apply explicit parsing rules. For a quick one-off, opening is fastest; for regular imports, use Power Query.

Open the CSV in Excel for a quick look, or use Get Data/From Text/CSV for a repeatable import.

How do I handle different delimiters (comma vs semicolon)?

In the import dialog (Data > Get Data > From Text/CSV), set Delimiter to comma or the correct delimiter for your locale. Semicolon is common in some regions.

Choose the correct delimiter in the import settings to ensure proper column separation.

How can I preserve leading zeros in ZIP codes or IDs?

Import the column as Text or convert the column to Text after importing. This keeps leading zeros intact.

Treat the ID column as text to keep the leading zeros.

What should I do for very large CSV files?

Use Power Query to load and transform data, or split the CSV into chunks and process them sequentially. Power Query handles large datasets more efficiently than opening the file directly in a worksheet.

Power Query is best for large CSVs; consider chunking if needed.

Can I automate CSV to Excel conversions?

Yes. Use Power Query automation within Excel, or scripts (Python, PowerShell) to perform repeatable imports with predefined settings.

Automation is possible with Power Query or scripts for repeatable imports.

Why do dates sometimes shift when importing?

Dates can shift due to regional formats or incorrect data type inference. Set the column to Date/Time during import and/or convert after import.

Check the date format during import and convert if needed.
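The regional-format problem is easy to reproduce: the same string reads as two different dates depending on whether the day or the month comes first. The sketch below uses Python's standard `datetime` module; the helper names `parse_date` and `is_ambiguous` are hypothetical, and the ambiguity check assumes three numeric parts separated by a slash.

```python
from datetime import date, datetime


def parse_date(value: str, fmt: str) -> date:
    """Parse a date string with an explicit format instead of guessing."""
    return datetime.strptime(value, fmt).date()


def is_ambiguous(value: str, sep: str = "/") -> bool:
    """True when day-first and month-first readings are both valid and differ."""
    first, second, _year = value.split(sep)
    return int(first) <= 12 and int(second) <= 12 and int(first) != int(second)


raw = "03/04/2024"
day_first = parse_date(raw, "%d/%m/%Y")    # 3 April 2024
month_first = parse_date(raw, "%m/%d/%Y")  # 4 March 2024
```

If a column contains ambiguous values, confirm the source system's format before choosing a date type; importing as text and converting with a known format avoids a silent shift.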

Is it better to use CSV or Excel for sharing data?

CSV is portable and lightweight, but Excel preserves formulas and formatting. Import CSV into Excel when analysis is needed, then save as .xlsx for sharing.

Use CSV for lightweight transfer and Excel for analysis-ready workbooks.

Main Points

- Use the right import method for data size and complexity.

- Set delimiter and encoding correctly to avoid misaligned data.

- Preserve data types during import to maintain data integrity.

- Save as .xlsx to keep Excel features intact.

- Document your import steps for reproducibility.