Compress CSV Files: A Practical Guide for Data Analysts

Learn how to compress CSV files efficiently with ZIP, GZIP, and other formats. This guide covers when to compress, how to choose formats, validate integrity, and automate the workflow for smaller archives and faster data transfers.

This guide explains when to compress CSV files, how to choose among ZIP, GZIP, and 7z, and how to preserve data integrity during archiving. You’ll come away with practical, repeatable steps for reducing file sizes and speeding up transfers, based on guidance from MyDataTables.

What does it mean to compress CSV files?

CSV files are plain-text tables; compression reduces the number of bytes needed to store or transmit them without losing data. When you compress, you create an archive that contains the CSV in a smaller form, and you decompress to recover the exact original file. This is especially useful for sharing large datasets or moving data between systems with bandwidth limits. According to MyDataTables, compressing CSV files can significantly reduce transfer times and storage requirements when you use lossless formats and verify integrity after decompression. In practice, you’ll balance the compression ratio against CPU usage and time, aiming for a workflow that remains reproducible across teams.

When to compress CSV files

Not every CSV benefits equally from compression. Very small files may not justify the extra step of archiving, while multi-GB datasets consistently see benefits. Compression shines when you need to transfer data across networks, store historical logs, or share samples with teammates. Consider your environment: if your pipeline runs in the cloud, you may prefer streaming compression or chunked archiving to minimize peak memory usage. MyDataTables Analysis, 2026 notes that the suitability of compression depends on data redundancy, file structure, and downstream tooling. Plan for reproducibility by documenting the chosen format and the compression level in your project readme.
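To make the point about data redundancy concrete, here is a minimal Python sketch that compares how well gzip shrinks a highly repetitive CSV versus one filled with random values. The helper name `gzip_ratio` and both sample datasets are hypothetical, invented purely for illustration; your own files will behave differently.

```python
import gzip
import random


def gzip_ratio(text: str) -> float:
    """Return compressed size divided by original size (lower is better)."""
    raw = text.encode("utf-8")
    return len(gzip.compress(raw)) / len(raw)


# Hypothetical sample data: a repetitive log-like CSV vs. random hex tokens.
repetitive = "date,status,region\n" + "2026-01-01,OK,EU\n" * 5000

rng = random.Random(0)  # fixed seed so repeated runs use the same data
noisy = "id,token\n" + "".join(
    f"{i},{rng.getrandbits(128):032x}\n" for i in range(5000)
)

print(f"repetitive: {gzip_ratio(repetitive):.3f}")
print(f"noisy:      {gzip_ratio(noisy):.3f}")
```

The repetitive file should compress far better than the noisy one, which is why measuring on a representative sample is more reliable than assuming a fixed ratio.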

Compression formats: ZIP, GZIP, 7z, tar.gz

Several formats are commonly used for compressing CSV files. ZIP supports multiple files in one archive and is widely compatible; GZIP creates a single compressed stream and is fast for text data; 7z offers strong compression at a higher CPU cost; tar.gz is a combination: tar bundles files into a single archive, which gzip then compresses. For CSV, lossless formats are essential to preserve exact content. Choose based on your environment: cross-platform availability, extraction speed, and whether you need multi-file archives.

Compare compression methods for CSV

When deciding how to compress a CSV, compare the common formats on a few axes: compatibility, ease of use, and the resulting file size. ZIP is ubiquitous and supports multi-file archives, making it ideal for sharing several related CSVs in one package. GZIP is fast for single large files and is often easier to stream in pipelines. 7z can achieve higher compression ratios on highly repetitive data but may require additional tooling for end users. For simple CSVs, a single-file gzip archive may be enough; for complex datasets with many related files, a ZIP archive provides better organization. Test both approaches on your actual data to see which yields the best balance of size and speed.
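One way to run that comparison is a small benchmark like the sketch below. It uses only the Python standard library, so it measures ZIP (DEFLATE), gzip, and xz. The standard library cannot write .7z archives, so xz is used here as a rough stand-in because it belongs to the same LZMA family that 7z uses; treat that row as an approximation. The function name `compare_formats` and the sample data are hypothetical.

```python
import gzip
import io
import lzma
import time
import zipfile


def compare_formats(raw: bytes) -> dict:
    """Return {format_name: (compressed_bytes, seconds)} for in-memory data."""

    def zip_bytes(data: bytes) -> bytes:
        # Build a one-member ZIP archive in memory.
        buf = io.BytesIO()
        with zipfile.ZipFile(buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
            zf.writestr("data.csv", data)
        return buf.getvalue()

    candidates = {
        "zip (deflate)": zip_bytes,
        "gzip": gzip.compress,
        "xz (lzma)": lzma.compress,  # stand-in for 7z, not an actual .7z file
    }
    results = {}
    for name, compress in candidates.items():
        start = time.perf_counter()
        size = len(compress(raw))
        results[name] = (size, time.perf_counter() - start)
    return results


# Hypothetical sample; replace with open("data.csv", "rb").read() for real data.
rows = "".join(f"{i},user{i % 50},{i % 7}\n" for i in range(20000))
sample = ("id,name,score\n" + rows).encode("utf-8")

for name, (size, secs) in compare_formats(sample).items():
    print(f"{name:14s} {size:>8d} bytes  {secs:.4f}s")
```

Timings from a single run are noisy, so repeat the measurement a few times on a real export before settling on a format.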

Command-line workflows

Command-line tools let you compress CSV files quickly without leaving the shell. Here are practical examples you can adapt:

# ZIP example (single file)

zip data.csv.zip data.csv

# GZIP example (single file; replaces data.csv with data.csv.gz, add -k to keep the original)

gzip data.csv

# 7z example (multi-file or single-file)

7z a data.csv.7z data.csv

These commands create archives that you can move, store, or share efficiently. Note that the result depends on the input file’s content and the chosen compression level. For large CSVs, consider running the commands from a batch script to automate repeated tasks, and always test decompression to confirm integrity.

Python-based workflows

If you prefer programmability, Python provides robust libraries for compressing CSV files while preserving data integrity. A simple approach uses the gzip module to produce a gzip-compressed stream from a CSV, which can be integrated into ETL pipelines:

import gzip
import shutil

# Stream data.csv into data.csv.gz without loading the whole file into memory
with open('data.csv', 'rb') as f_in, gzip.open('data.csv.gz', 'wb') as f_out:
    shutil.copyfileobj(f_in, f_out)

For ZIP archives, you can use the zipfile module to add CSV files to a single archive. Programmatic compression makes it easy to layer checks, apply consistent compression levels, and version archives as part of data workflows.
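As a rough sketch of the zipfile approach, the function below adds several CSV files to one archive at a fixed compression level. The name `zip_csvs`, its parameters, and the default level of 6 are assumptions chosen for illustration, not part of any existing tool.

```python
import zipfile
from pathlib import Path


def zip_csvs(csv_paths, archive_path, level=6):
    """Add each CSV to one ZIP archive using DEFLATE at the given level."""
    with zipfile.ZipFile(
        archive_path,
        "w",
        compression=zipfile.ZIP_DEFLATED,
        compresslevel=level,
    ) as zf:
        for path in map(Path, csv_paths):
            # arcname drops the directory prefix so the archive stays flat.
            zf.write(path, arcname=path.name)
    return archive_path
```

A call might look like `zip_csvs(["orders.csv", "customers.csv"], "export.zip")`, where the filenames are placeholders. Because `arcname` keeps only the base name, two inputs with the same filename in different folders would collide, so a real pipeline may want to keep part of the path.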

Validation and integrity checks

Compression is lossless for CSV files, but you must validate that decompression yields the original data. A practical approach is to compute a checksum of the original file, then decompress and verify that the decompressed output matches the original exactly. Use md5sum or sha256sum to generate a baseline, then compare against the decompressed stream:

# Original checksum

sha256sum data.csv > data.csv.sha256

# Decompress to stdout and print the checksum; it should equal the hash stored in data.csv.sha256

gzip -dc data.csv.gz | sha256sum | awk '{print $1}'

Automating this check in your CI or ETL workflow catches a corrupted archive before the data moves downstream.
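The same check can be done in Python when shell tools are not available. The sketch below hashes the original file and the decompressed stream in fixed-size chunks and compares the digests; the helper names `sha256_of` and `gzip_matches_original` are hypothetical.

```python
import gzip
import hashlib


def sha256_of(stream, chunk_size=1 << 20):
    """Hash a binary stream in chunks so large files never sit in memory."""
    digest = hashlib.sha256()
    for chunk in iter(lambda: stream.read(chunk_size), b""):
        digest.update(chunk)
    return digest.hexdigest()


def gzip_matches_original(original_path, gz_path):
    """Return True if the decompressed .gz content equals the original file."""
    with open(original_path, "rb") as raw, gzip.open(gz_path, "rb") as unpacked:
        return sha256_of(raw) == sha256_of(unpacked)
```

In a pipeline, a `False` result would typically stop the run before the original CSV is deleted or the archive is shipped.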

Handling very large CSV files

Large CSV files challenge memory and I/O. To avoid loading the entire file into memory, use streaming or chunked processing. When using CLI tools, prefer tools that support streaming output, or rely on file-based archives created in a streaming-friendly manner. In Python, read the CSV in chunks and write to a compressed stream incrementally. Always test partial decompression on a sample chunk to ensure there are no cross-chunk dependencies that could break parsing once decompressed.
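A minimal sketch of that streaming pattern in Python is shown below. It writes the compressed file one chunk at a time and then reads the archive back row by row without unpacking it to disk. The function names, the 1 MiB chunk size, and the compression level are assumptions for illustration.

```python
import csv
import gzip


def compress_in_chunks(src_path, dst_path, chunk_size=1 << 20, level=6):
    """Stream src into a gzip file, reading at most chunk_size bytes at a time."""
    total = 0
    with open(src_path, "rb") as src, gzip.open(
        dst_path, "wb", compresslevel=level
    ) as dst:
        while chunk := src.read(chunk_size):
            dst.write(chunk)
            total += len(chunk)
    return total  # number of uncompressed bytes written


def count_rows(gz_path, encoding="utf-8"):
    """Count CSV records by streaming the archive; nothing is unpacked to disk."""
    with gzip.open(gz_path, "rt", encoding=encoding, newline="") as fh:
        return sum(1 for _ in csv.reader(fh))
```

Chunk boundaries here are byte-based and may fall in the middle of a row, which is harmless because gzip treats the input as one continuous stream. Parsing is done only on the decompressed text, where `csv.reader` sees complete records.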

Automation and best practices

Integrate compression into your data pipelines as an automated step rather than a manual task. Maintain a simple policy: choose a single archive format per project, document the compression level, and store the archive alongside the original data with clear metadata. Use checksums as part of the pipeline to verify integrity after decompression. Keep original CSVs as a fallback until you’ve validated the archive. MyDataTables emphasizes repeatability and traceability in CSV compression workflows.
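One possible way to keep that metadata next to the archive is a small JSON manifest. The sketch below is an assumption about what such a manifest could contain; the function name `write_manifest`, the field names, and the `.manifest.json` suffix are all hypothetical choices rather than an established convention.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def write_manifest(archive_path, source_path, fmt, level):
    """Write <archive>.manifest.json describing how the archive was made."""
    archive, source = Path(archive_path), Path(source_path)
    manifest = {
        "archive": archive.name,
        "source": source.name,
        "format": fmt,
        "compression_level": level,
        # read_bytes loads the whole file; hash in chunks for very large CSVs.
        "source_sha256": hashlib.sha256(source.read_bytes()).hexdigest(),
        "source_bytes": source.stat().st_size,
        "archive_bytes": archive.stat().st_size,
        "created_utc": datetime.now(timezone.utc).isoformat(timespec="seconds"),
    }
    out = archive.with_name(archive.name + ".manifest.json")
    out.write_text(json.dumps(manifest, indent=2), encoding="utf-8")
    return out
```

Storing the source checksum in the manifest lets a later job verify the archive even after the original CSV has been removed.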

Real-world use cases and examples

Teams frequently compress CSV files for daily exports, such as nightly product catalogs, transaction logs, or research datasets. For example, a data warehouse team might compress daily export CSVs into ZIP archives for batch loading and archive retention. A data science group could use GZIP to stream a single large CSV to a cloud storage bucket, minimizing bandwidth while preserving exact content. In both cases, a small automation script, checked into version control, improves reliability and auditability.

Next steps and maintenance

To keep your CSV compression approach effective, schedule periodic reviews of formats, compression levels, and validation checks. As data grows, revisit chunking strategies and consider alternative formats for downstream systems if needed. The MyDataTables team recommends documenting your compression policy, maintaining backups of the original CSV files, and configuring your automation to run in a controlled environment with proper role-based access. By establishing a disciplined workflow, you’ll reliably compress CSV files while safeguarding data integrity and reducing storage and transfer costs.

Tools & Materials

- Computer with terminal or shell access (Mac/Linux terminal or Windows PowerShell)

- CSV file(s) to compress (original files to archive)

- Compression utility (ZIP/GZIP/7z; cross-platform options: 7-Zip, WinZip, zip, gzip)

- Checksum tool (md5sum/sha256sum) for integrity verification

- Text editor for notes or scripts (optional, for documentation)

- Archive extraction test files (optional, to verify decompression)

Steps

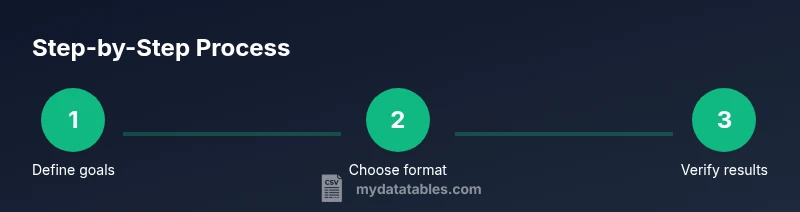

Estimated time: 30-60 minutes

1. Define compression goals

Clarify why you are compressing the CSV (storage, transfer, archival) and specify target outcomes (size, speed, reproducibility).

Tip: Write these goals in your project doc so teammates follow the same criteria.

2. Select format and settings

Choose between ZIP, GZIP, or 7z based on environment, required support, and whether you need multi-file archives. Decide on compression level if the tool supports it.

Tip: Favor formats with broad tool support to maximize compatibility across teams.

3. Prepare CSV files for archiving

Close any open CSV processes, move files to a staging folder, and ensure filenames are stable and versioned.

Tip: Keep a clean staging area to avoid mixing files from different datasets.

4. Create the archive

Run the chosen compression command for the target CSV. Prefer archiving a single CSV per archive when possible to simplify extracting and validating.

Tip: Test both single-file and multi-file archives to see which your users prefer.

5. Validate integrity

Compute a checksum of the original, decompress, and verify the decompressed data matches the original exactly.

Tip: Automate checksum generation in CI to catch any deviations early.

6. Document and version

Record the archive name, date, version, and compression method in a manifest file or project documentation.

Tip: Store a copy of the manifest with the archive for future audits.

7. Automate the process

Wrap the steps into a script or workflow to run on a schedule or as part of a data pipeline.

Tip: Use version control for your scripts and keep environments consistent.

8. Test decompression end-to-end

On a separate environment, decompress the archive and verify the data matches the source, including a spot-check of several rows.

Tip: Automated tests save time and prevent regressions in pipelines.
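The row spot-check mentioned in the final step could look something like the sketch below. It assumes a gzip archive and a CSV small enough to parse fully in memory; the function name `spot_check_rows`, the sample size, and the fixed seed are hypothetical choices. It complements a checksum comparison rather than replacing it.

```python
import csv
import gzip
import random


def spot_check_rows(original_path, gz_path, sample_size=5, seed=0,
                    encoding="utf-8"):
    """Compare a random sample of rows between a CSV and its .gz copy."""
    with open(original_path, newline="", encoding=encoding) as fh:
        original = list(csv.reader(fh))
    with gzip.open(gz_path, "rt", newline="", encoding=encoding) as fh:
        restored = list(csv.reader(fh))

    # A differing row count is an immediate failure.
    if len(original) != len(restored):
        return False

    rng = random.Random(seed)  # fixed seed keeps the check repeatable
    picks = rng.sample(range(len(original)), min(sample_size, len(original)))
    return all(original[i] == restored[i] for i in picks)
```

Because only a sample of rows is compared, a `True` result is a sanity check rather than proof of equality; the checksum from the validation step remains the authoritative test.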

People Also Ask

What is CSV compression and why use it?

CSV compression reduces file size without altering data and helps with transfer and storage. Use lossless formats and verify decompression to ensure content remains intact.

Which formats are best for compressing CSV files?

ZIP, GZIP, and 7z are common. ZIP is widely compatible; GZIP is fast for large single files; 7z can achieve higher compression with more CPU usage.

Will compression affect CSV parsing?

No. Compression is lossless; once decompressed, the CSV content is identical to the original.

How can I verify a compressed CSV decompresses correctly?

Decompress and compare checksums or perform a row-by-row comparison to ensure exact content.

Are there performance considerations for large CSVs?

Yes. Large CSVs benefit from streaming or chunked processing to avoid high memory usage during compression.

Can I automate CSV compression in a data pipeline?

Yes. Wrap the steps in a script or workflow, keep it under version control, and include checksums for integrity. Automating compression improves reliability and repeatability in pipelines.

Is cross-platform extraction supported for common formats?

Yes. With standard tools such as unzip or gzip, most formats extract consistently across Windows, macOS, and Linux.

Main Points

- Choose a lossless compression format for CSV files

- Always verify integrity after compression

- Integrate compression into automated pipelines

- Keep originals as backups until validation completes

- Document compression decisions for reproducibility