How big can a CSV file be: limits, strategies, and practical tips

Discover how big a CSV file can be, with practical limits from Excel, Google Sheets, and scripting, plus strategies to handle very large CSV datasets efficiently.

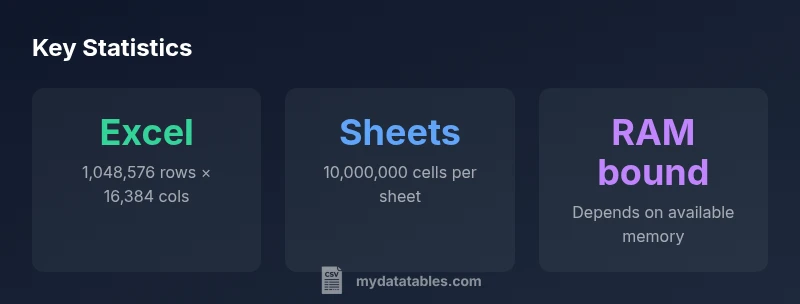

There's no single universal size limit for CSV files. Practical bounds come from the software you use, memory, and disk I/O. For example, Excel supports up to 1,048,576 rows and 16,384 columns per worksheet; Google Sheets caps at 10 million cells per sheet; many text editors slow down or fail beyond tens of millions of lines. For big data workflows, streaming or chunking helps avoid memory pressure.

How big can a CSV file be? Defining the question

If you are asking how big a CSV file can be, the honest answer is that the format itself sets no maximum. The practical ceiling depends on the tool you use, the memory available to your process, and the disk I/O bandwidth at hand. In practice, most data practitioners avoid loading an entire very large CSV into memory at once; instead, they adopt streaming, chunking, or incremental parsing strategies. File sizes span a spectrum, from modest files of a few megabytes to multi-gigabyte datasets that require distributed processing. When planning a workflow, set the upper bound according to your downstream systems: a data warehouse, a BI dashboard, or a data-cleaning pipeline. In short, the answer depends on your stack, your hardware, and your tolerance for latency.

Practical limits by popular tools

Popular tools impose concrete caps that shape how you design pipelines. Excel users hit a hard ceiling of 1,048,576 rows and 16,384 columns per worksheet, which limits how much data fits on one sheet and can degrade performance as you approach the limits. Google Sheets applies a different constraint: up to 10 million cells per sheet, with columns capped at 18,278. With scripting languages such as Python or R, the limit is generally memory and processing power rather than a fixed ceiling; the pandas read_csv function can read a file in chunks through its chunksize parameter, which lets you process datasets that exceed available RAM. Finally, desktop editors and database import tools vary widely in what they can load in one go, and often need chunking or streaming to work reliably.
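To make these caps concrete, here is a minimal sketch that streams a CSV once, measures its shape, and compares it with the Excel and Google Sheets limits quoted above. The function names `csv_shape` and `fits_spreadsheets` are hypothetical helpers for illustration, and the constants simply restate this article's figures.

```python
import csv

# Limits as quoted in this article; check current vendor documentation.
EXCEL_MAX_ROWS = 1_048_576
EXCEL_MAX_COLS = 16_384
SHEETS_MAX_CELLS = 10_000_000
SHEETS_MAX_COLS = 18_278


def csv_shape(path, encoding="utf-8"):
    """Stream the file once and return (row_count, widest_row).

    The header line is counted as a row because it also occupies
    a row in a spreadsheet.
    """
    rows = 0
    widest = 0
    with open(path, newline="", encoding=encoding) as f:
        for record in csv.reader(f):
            rows += 1
            widest = max(widest, len(record))
    return rows, widest


def fits_spreadsheets(rows, cols):
    """Compare a shape against the Excel and Google Sheets caps."""
    return {
        "excel": rows <= EXCEL_MAX_ROWS and cols <= EXCEL_MAX_COLS,
        "google_sheets": cols <= SHEETS_MAX_COLS
        and rows * cols <= SHEETS_MAX_CELLS,
    }
```

Calling `fits_spreadsheets(*csv_shape("data.csv"))` on a file of your own (the path is a placeholder) gives a quick yes/no per tool before anyone tries to open the file. Because the reader only holds one record at a time, the check itself should work on files much larger than memory.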

Memory and processing constraints in practice

Large CSVs stress memory and I/O in three main ways: loading the file into memory, performing transformations, and writing out results. If the file size approaches several gigabytes, most single-machine workflows will struggle unless you work in chunks or stream data row by row. Chunked processing means reading the file in fixed-size blocks, processing each block, and aggregating results. Streaming parsers read one record at a time and handle record boundaries for you, so peak memory stays close to the size of a single row or block rather than the whole file. In distributed environments, you can spread the parsing load across nodes, which can bring near-linear gains in throughput when the work partitions cleanly. The key takeaway is to plan for memory usage and avoid loading the entire file at once when possible.
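The read-a-block, process, aggregate pattern described above can be sketched with the standard library alone. This is an illustrative example, not a tuned implementation: `iter_chunks` and `sum_column` are hypothetical names, and the column is assumed to contain values that `float()` can parse.

```python
import csv
from itertools import islice


def iter_chunks(path, chunk_size=100_000, encoding="utf-8"):
    """Yield lists of at most chunk_size dict rows, one chunk at a time."""
    with open(path, newline="", encoding=encoding) as f:
        reader = csv.DictReader(f)
        while True:
            chunk = list(islice(reader, chunk_size))
            if not chunk:
                break
            yield chunk


def sum_column(path, column, chunk_size=100_000):
    """Total one numeric column without holding the whole file in memory."""
    total = 0.0
    rows = 0
    for chunk in iter_chunks(path, chunk_size):
        # Process the block, then fold its partial result into the totals.
        total += sum(float(row[column]) for row in chunk)
        rows += len(chunk)
    return total, rows
```

Peak memory here is bounded by `chunk_size` rows rather than by file size, so the chunk size becomes the knob for trading memory against per-chunk overhead.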

How spreadsheets handle large CSVs

Spreadsheets are friendly for small to medium datasets but become unwieldy as size grows. Excel's grid is bounded by 1,048,576 rows and 16,384 columns, which is enough for most standard analytics tasks but not for big data. Google Sheets focuses on collaboration and cloud-based storage, but it caps at 10 million cells per sheet, which tall or wide data can exhaust quickly. For these reasons, many teams load CSVs into a database or use a data processing pipeline that handles the data incrementally, rather than attempting to open giant CSVs directly in a spreadsheet.
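When a spreadsheet is still the required destination, one workaround is to split the CSV into parts that each fit on a worksheet. The sketch below assumes the first line is a header and repeats it in every part; `split_csv` and the `_001.csv` naming scheme are hypothetical choices for illustration.

```python
import csv


def split_csv(path, out_prefix, max_data_rows=1_048_575, encoding="utf-8"):
    """Split path into numbered part files, repeating the header in each.

    The default leaves one row for the header, so each part stays
    within Excel's 1,048,576-row worksheet limit.
    """
    part_paths = []
    with open(path, newline="", encoding=encoding) as src:
        reader = csv.reader(src)
        header = next(reader, None)
        if header is None:
            return part_paths  # empty input, nothing to write
        out = None
        writer = None
        rows_in_part = 0
        try:
            for record in reader:
                # Start a new part on the first row or when the current one is full.
                if out is None or rows_in_part >= max_data_rows:
                    if out is not None:
                        out.close()
                    part_path = f"{out_prefix}_{len(part_paths) + 1:03d}.csv"
                    out = open(part_path, "w", newline="", encoding=encoding)
                    writer = csv.writer(out)
                    writer.writerow(header)
                    part_paths.append(part_path)
                    rows_in_part = 0
                writer.writerow(record)
                rows_in_part += 1
        finally:
            if out is not None:
                out.close()
    return part_paths
```

Splitting keeps each part openable, but any analysis that spans parts still has to be stitched together by hand, which is one reason a database is usually the better home for data of this size.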

Streaming, chunking, and incremental processing

When you suspect a file is too large for one-shot loading, adopt streaming techniques. In Python, use the csv module or pandas with chunksize to process rows in portions, reducing peak memory usage. In SQL-based workflows, load CSVs into staging tables in chunks, then perform set-based transformations. In R, use readr's read_csv_chunked, or data.table's fread with skip and nrows in a chunking loop. For cloud-based tools, consider serverless or distributed analytics that can read from storage and compute across partitions. These approaches preserve throughput while staying within memory limits.
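As a rough illustration of loading a staging table in chunks, the sketch below uses Python's built-in sqlite3 module as a stand-in for whatever database you actually run. The function name `load_csv_to_staging`, the `staging` table name, and the all-TEXT column typing are assumptions made for the example.

```python
import csv
import sqlite3
from itertools import islice


def load_csv_to_staging(conn, path, table="staging", batch_size=50_000,
                        encoding="utf-8"):
    """Insert CSV rows into a staging table in batches; return rows loaded.

    Every column is stored as TEXT; typing and cleanup are left to later
    set-based SQL. Table and column names are interpolated into the SQL
    text, so they must come from a trusted source.
    """
    with open(path, newline="", encoding=encoding) as f:
        reader = csv.reader(f)
        header = next(reader)
        columns = ", ".join(f'"{name}" TEXT' for name in header)
        placeholders = ", ".join("?" for _ in header)
        conn.execute(f'CREATE TABLE IF NOT EXISTS "{table}" ({columns})')
        loaded = 0
        while True:
            batch = list(islice(reader, batch_size))
            if not batch:
                break
            conn.executemany(
                f'INSERT INTO "{table}" VALUES ({placeholders})', batch
            )
            conn.commit()  # one transaction per batch
            loaded += len(batch)
    return loaded
```

After loading, a query such as `SELECT region, SUM(CAST(amount AS INTEGER)) FROM staging GROUP BY region` (column names hypothetical) does the transformation inside the database. Rows whose field count differs from the header will raise an error in this sketch, which may or may not be the behavior you want.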

Data quality, encoding, and line endings

CSV size is not the only consideration; encoding and line endings can complicate parsing, especially when files are large and sourced from diverse systems. Always use a consistent encoding such as UTF-8 and standardize line endings (LF or CRLF) to avoid misinterpretation of data during import. When headers are present, validate that they are unique and stable, as inconsistent headers can multiply processing time in subsequent steps. For data quality, chunk-based validation keeps operations fast and reduces the risk of cascading errors in large pipelines.
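Both checks above can be done in a streaming fashion. The following sketch, with hypothetical helper names `duplicate_headers` and `normalize_csv`, reports repeated header names and rewrites a file as UTF-8 with LF record terminators; it assumes you already know the source encoding, since detecting it reliably is a separate problem.

```python
import csv
from collections import Counter


def duplicate_headers(path, encoding="utf-8"):
    """Return header names that appear more than once (spaces trimmed)."""
    with open(path, newline="", encoding=encoding) as f:
        header = next(csv.reader(f), [])
    counts = Counter(name.strip() for name in header)
    return sorted(name for name, n in counts.items() if n > 1)


def normalize_csv(src_path, dst_path, src_encoding="utf-8"):
    """Rewrite a CSV as UTF-8 with LF record terminators, row by row."""
    with open(src_path, newline="", encoding=src_encoding) as src, \
         open(dst_path, "w", newline="", encoding="utf-8") as dst:
        writer = csv.writer(dst, lineterminator="\n")
        for record in csv.reader(src):
            writer.writerow(record)
```

Opening both files with `newline=""` lets the csv module own the line-ending handling, so newlines embedded inside quoted fields pass through unchanged while record terminators are standardized.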

Real-world workflows: import/export pipelines

In production, CSVs often serve as intermediaries between data sources and destinations. A common setup is to stage data in a data lake or data warehouse, read the CSV in streaming fashion, perform transformations in a processing engine (such as Spark or a SQL engine), and write results back to a target system. This approach scales better than attempting to load giant files into a single tool. Testing with progressively larger samples helps identify bottlenecks before you hit scale, and maintaining robust error handling ensures quality across iterations.

Practical tips to avoid hitting hard limits

Plan early by estimating the maximum file size you realistically need to handle. Prefer chunked reads, streaming parsers, and database ingestion, especially for multi-GB CSVs. Use compression (gzip) to move data faster when streaming from storage and decompress on the fly during processing. Validate encoding and clean data before heavy transforms to prevent repeated passes. Finally, document your tooling choices and memory estimates so teams can reproduce results as data scales.
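Decompressing on the fly needs no temporary file in Python. As a minimal sketch, assuming a gzip-compressed CSV with a header line, the hypothetical helper below counts data rows while reading the compressed stream directly.

```python
import csv
import gzip


def count_rows_gz(path, encoding="utf-8"):
    """Count data rows in a gzip-compressed CSV without unpacking it to disk."""
    # Text mode ("rt") makes gzip.open decompress and decode incrementally.
    with gzip.open(path, "rt", newline="", encoding=encoding) as f:
        reader = csv.reader(f)
        next(reader, None)  # skip the header line, if present
        return sum(1 for _ in reader)
```

The same `gzip.open(..., "rt")` handle can be passed to any row-by-row or chunked reader, so compression on storage and streaming in processing combine without extra steps.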

CSV size limits across popular tools

| Tool | Max Rows | Max Columns |
|---|---|---|
| Excel (Windows/macOS) | 1,048,576 | 16,384 |
| Google Sheets | N/A (10,000,000 cells total) | 18,278 |
| LibreOffice Calc | Varies by version | Varies by version |
| Python CSV readers (pandas, csv module) | Memory-dependent | Memory-dependent |

People Also Ask

Is there a maximum file size for CSVs?

There is no universal maximum for CSV files. Limits come from the software and hardware you use: some applications impose explicit caps, while others stream data and are bounded mainly by memory and disk.

Can I import a CSV larger than Excel's row limit?

Not into a single worksheet: Excel has a hard limit of 1,048,576 rows per sheet. For larger data, split the CSV into smaller files or import it into a database and query subsets.

What is the best way to manage huge CSVs in Python?

Process the file in chunks or as a stream: use pandas read_csv with the chunksize parameter, or iterate over a csv module reader to handle one row at a time.

Does Google Sheets handle large CSVs?

Only up to a point. Google Sheets caps at 10 million cells per sheet; large or wide datasets can quickly exhaust this limit and slow performance, so bigger CSVs may require alternatives.

What are practical tips to avoid hitting limits in CSV workflows?

Use databases for large imports, read files in chunks, validate encoding, and test with smaller samples before scaling. Chunked reads and database imports are the main ways to avoid hitting size limits.

“There is no universal size limit for CSV files; success depends on available memory, processing power, and the software you choose. Plan for streaming large data rather than trying to load everything at once.”

Main Points

- Understand tool limits before loading

- Plan for memory and I/O constraints when loading large CSVs

- Use streaming or chunking for very large datasets

- Be aware of spreadsheet-specific caps for Excel and Sheets

- For large-scale data, consider database import or chunked processing