Combine Multiple CSV Files into One: A Practical How-To Guide

Learn how to combine multiple CSV files into one using Python, shell, or built-in tools. This guide covers headers, encoding, validation, and practical workflows for reliable, scalable data merging.

Goal: learn how to combine multiple CSV files into one. This guide covers manual concatenation, scripting approaches (Python, PowerShell, and shell), and best practices for headers, encoding, and data validation. By the end you’ll know when to append, how to align columns, and how to verify the merged result for accuracy and consistency.

Understanding the Goal: Why you might need to combine multiple CSV files into one

In data projects, you often collect information from separate sources or time periods as individual CSV files. Merging them into a single file makes analysis simpler and helps ensure consistency across your dataset. The core objective is to preserve all records while aligning the schema (columns, data types, and encoding). When you combine multiple CSV files into one, you create a streamlined data source that can feed dashboards, models, or reports. According to MyDataTables, a well-executed merge reduces duplication, minimizes manual re-entry, and improves reproducibility for future workflows. This article guides you through methods for different scales, from a quick manual concat to robust, repeatable scripts that handle headers and encoding automatically.

Common Scenarios and Why Merging Helps

You might merge CSV files for quarterly sales, log files, survey results, or export batches from a data warehouse. The benefits include easier filtering, unified reporting, and faster downstream processing. However, different sources often come with mismatched headers, varying column orders, or inconsistent data types. Planning the merge with these challenges in mind saves time later. A thoughtful approach balances simplicity with reliability, especially when file counts rise from a handful to dozens or hundreds.

Approaches at a Glance: Manual vs Automated

There are three primary paths to merge CSV files. Manual concatenation works for small, uniform datasets and quick ad-hoc needs. Scripting—via Python, PowerShell, or shell commands—scales to larger file sets and complex schemas. Dedicated CSV tools simplify some tasks but may require learning a new interface. Each approach has trade-offs: manual methods are fast for tiny jobs but brittle; scripts are robust and repeatable but demand setup; specialized tools can be easiest for non-coders but might lack flexibility. The right choice depends on file size, required repeatability, and your comfort with programming.

Preparing Input Data: Headers, Delimiters, and Encoding

Before merging, inspect each input file for header presence and consistency. Decide whether to preserve the header from the first file or propagate a merged header. Uniform delimiters (commas for CSV), consistent quoting rules, and the same encoding (UTF-8 is recommended) prevent subtle data corruption. If some files use a different delimiter (for example, semicolons), you may need to normalize them before merging. Tools like head and tail on the command line can help verify headers quickly, while a quick preview with a spreadsheet viewer confirms column alignment.
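The inspection described above can be sketched in Python with the standard library only. The function name `inspect_csv` and the candidate delimiter set are assumptions made for illustration; the sketch reads a small sample of the file, checks whether it decodes as UTF-8, guesses the delimiter from the first line, and returns the header.

```python
import codecs
import csv


def inspect_csv(path, sample_bytes=64 * 1024):
    """Return a small report: UTF-8 validity, likely delimiter, and header."""
    with open(path, "rb") as handle:
        raw = handle.read(sample_bytes)
    try:
        # final=False tolerates a multi-byte character cut off at the sample edge.
        decoder = codecs.getincrementaldecoder("utf-8-sig")()
        text = decoder.decode(raw, final=False)
        is_utf8 = True
    except UnicodeDecodeError:
        # latin-1 maps every byte to a character, so inspection can continue.
        text = raw.decode("latin-1")
        is_utf8 = False
    lines = text.splitlines()
    first_line = lines[0] if lines else ""
    try:
        # Assumed candidates: comma, semicolon, tab, pipe.
        delimiter = csv.Sniffer().sniff(first_line, delimiters=",;\t|").delimiter
    except csv.Error:
        delimiter = ","  # fall back to the CSV default when sniffing fails
    header = next(csv.reader([first_line], delimiter=delimiter), [])
    return {"utf8": is_utf8, "delimiter": delimiter, "header": header}
```

Running it over every input file and comparing the reports is a quick way to spot the one file that uses semicolons or a legacy encoding before the merge starts.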

Handling Headers and Column Alignment

A core challenge is aligning columns when input files have the same data but different orders or extra fields. A practical approach is to create a merged header that covers the union of all columns, then map each file’s columns into that structure. Unknown or missing values should be filled with a neutral placeholder (e.g., empty string or null) to keep schema consistent. When columns are renamed across files, standardize names before the merge. Consistent data types across corresponding columns prevent downstream errors during analysis.
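A minimal sketch of the union-header approach, assuming every input has a header row, uses commas, and shares one encoding. The name `merge_with_union_header` is hypothetical. It makes two passes: one to collect column names in first-seen order, one to write rows with empty strings for missing fields.

```python
import csv


def merge_with_union_header(paths, out_path, encoding="utf-8"):
    """Merge CSVs whose columns differ; missing fields become empty strings."""
    # First pass: build the union of column names, keeping first-seen order.
    fieldnames = []
    for path in paths:
        with open(path, newline="", encoding=encoding) as handle:
            for name in next(csv.reader(handle), []):
                if name not in fieldnames:
                    fieldnames.append(name)

    # Second pass: write every row under the merged header.
    with open(out_path, "w", newline="", encoding=encoding) as out:
        writer = csv.DictWriter(out, fieldnames=fieldnames, restval="")
        writer.writeheader()
        for path in paths:
            with open(path, newline="", encoding=encoding) as handle:
                # A row with more fields than its header raises ValueError here,
                # which surfaces malformed input instead of hiding it.
                for row in csv.DictReader(handle):
                    writer.writerow(row)
    return fieldnames
```

Because rows are mapped by column name rather than position, files with the same columns in a different order line up correctly in the output.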

Practical Examples: Python, PowerShell, and Bash

The same merge can be done in several environments without losing data. In Python, you can concatenate DataFrames from all CSVs with pandas and write a single output. In PowerShell, Import-Csv reads multiple files into one collection, and Export-Csv saves the merged result. In Bash, you can use shell utilities to concatenate while dropping repeated headers from subsequent files. These approaches cover cross-platform Python, Windows PowerShell, and Unix-like shells, so you can pick the one that fits your stack.
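As one illustration, here is a small Python sketch that does what the shell approach does: keep the header from the first file and drop it from the rest. It uses the standard `csv` module rather than pandas to avoid an extra dependency, and it assumes all inputs share an identical header. The name `concat_csvs` is hypothetical.

```python
import csv


def concat_csvs(paths, out_path, encoding="utf-8"):
    """Append rows from files with identical headers; return data rows written."""
    expected_header = None
    rows_written = 0
    with open(out_path, "w", newline="", encoding=encoding) as out:
        writer = csv.writer(out)
        for path in paths:
            with open(path, newline="", encoding=encoding) as handle:
                reader = csv.reader(handle)
                header = next(reader, None)
                if header is None:
                    continue  # skip completely empty files
                if expected_header is None:
                    expected_header = header
                    writer.writerow(header)  # header is written exactly once
                elif header != expected_header:
                    raise ValueError(f"{path}: header differs from the first file")
                for row in reader:
                    writer.writerow(row)
                    rows_written += 1
    return rows_written
```

Failing loudly on a header mismatch is a deliberate choice in this sketch: silently appending misaligned columns is harder to detect later than an early error.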

Validation After Merge: Quick sanity checks

After merging, validate row counts, a sample of records, and the presence of all expected columns. Compare aggregates from the source files with the merged output to confirm no data was dropped or duplicated unintentionally. Automated checks—such as comparing row counts or performing a checksum per file—help detect mismatches. If you detect discrepancies, re-run the merge with a minimal, test subset to isolate issues before processing all data.
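The row-count and column checks can be automated with a short script. The sketch below is illustrative: `count_data_rows` and `check_merge` are hypothetical names, and it assumes every file has exactly one header row. It returns a list of problems, which is empty when the merged file looks consistent with its sources.

```python
import csv


def count_data_rows(path, encoding="utf-8"):
    """Count rows after the header, using the csv parser so quoted newlines are safe."""
    with open(path, newline="", encoding=encoding) as handle:
        reader = csv.reader(handle)
        next(reader, None)  # skip the header
        return sum(1 for _ in reader)


def check_merge(source_paths, merged_path, expected_columns, encoding="utf-8"):
    """Return a list of human-readable problems; an empty list means no issue found."""
    problems = []
    with open(merged_path, newline="", encoding=encoding) as handle:
        merged_header = next(csv.reader(handle), [])
    missing = [col for col in expected_columns if col not in merged_header]
    if missing:
        problems.append(f"missing columns: {missing}")
    source_total = sum(count_data_rows(p, encoding) for p in source_paths)
    merged_total = count_data_rows(merged_path, encoding)
    if source_total != merged_total:
        problems.append(f"row count mismatch: sources={source_total}, merged={merged_total}")
    return problems
```

A matching row count does not prove the contents are identical, so it is best paired with a spot check of sample records or an aggregate comparison.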

Handling Large CSV Files: Performance considerations

When merging large CSVs, streaming and chunked processing avoid exhausting memory. Techniques include reading and writing in chunks, using generators in Python, or piping data through tools that support streaming. For extremely large datasets, consider alternate storage formats (Parquet) for faster downstream analytics, or a database ETL process if your workflow requires frequent incremental updates. Always benchmark on a representative subset before scaling up to the full data volume.
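A hedged sketch of the generator-based approach: rows are read lazily and written in fixed-size batches, so memory use depends on the batch size rather than on the total data volume. The names `iter_data_rows` and `stream_merge` and the default batch size are assumptions, and the sketch presumes all inputs already share the header passed in.

```python
import csv
from itertools import islice


def iter_data_rows(paths, encoding="utf-8"):
    """Lazily yield data rows from each file, skipping each file's header."""
    for path in paths:
        with open(path, newline="", encoding=encoding) as handle:
            reader = csv.reader(handle)
            next(reader, None)  # drop this file's header
            yield from reader


def stream_merge(paths, out_path, header, batch_size=10_000, encoding="utf-8"):
    """Write the header once, then stream rows in batches; return rows written."""
    rows = iter_data_rows(paths, encoding)
    total = 0
    with open(out_path, "w", newline="", encoding=encoding) as out:
        writer = csv.writer(out)
        writer.writerow(header)
        while True:
            batch = list(islice(rows, batch_size))  # at most batch_size rows in memory
            if not batch:
                break
            writer.writerows(batch)
            total += len(batch)
    return total
```

The batch size is worth tuning on a representative subset: larger batches reduce write calls, smaller ones keep the memory ceiling low.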

Troubleshooting and Common Pitfalls

Common issues include mismatched headers, inconsistent data types, encoding mismatches, and accidental header duplication. Always back up inputs before merging. If you see unexpected nulls, re-check the alignment of columns and ensure you aren’t concatenating while an extra header is included in the stream. Document every step of the process so future you or teammates can reproduce the merge with confidence.
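Accidental header duplication is easy to test for. The following sketch, with the hypothetical name `find_repeated_headers`, scans a merged file and reports the line numbers of any data row that is identical to the header. It assumes the first row of the file is the real header.

```python
import csv


def find_repeated_headers(path, encoding="utf-8"):
    """Return source line numbers of rows that repeat the header row."""
    with open(path, newline="", encoding=encoding) as handle:
        reader = csv.reader(handle)
        header = next(reader, None)
        # reader.line_num is the number of source lines read so far, so it
        # points at the line on which the matching row ended.
        return [reader.line_num for row in reader if row == header]
```

An empty list means no stray header was found; any other result points at the place where a header was concatenated into the data stream.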

Tools & Materials

- Python 3.x (Download from python.org and ensure it's on your PATH)

- Pandas library (Install via pip: pip install pandas)

- PowerShell or Bash terminal (Use PowerShell on Windows; Bash on macOS/Linux)

- Input CSV files (Two or more files to merge)

- Text editor (For editing scripts and templates)

- UTF-8 encoding awareness (Check encoding of source files and outputs)

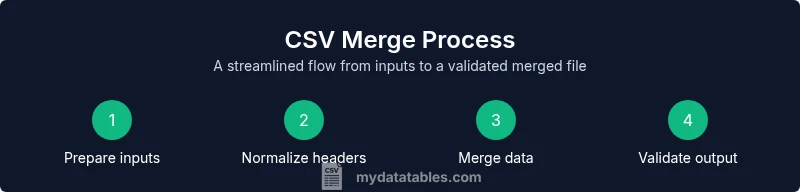

Steps

Estimated time: 30-60 minutes for a small to medium merge; 1-2 hours for larger, repeated merges

- 1

Prepare input files

Gather all CSVs to be merged in a single folder and verify that they are accessible. Decide whether to keep a single header (from the first file) or to synthesize a merged header. This ensures your downstream steps map columns correctly.

Tip: Label files clearly (e.g., data_q1.csv, data_q2.csv) to simplify ordering.

- 2

Choose your merge method

Decide between a manual concatenation for small datasets or a scripted approach for larger, variable schemas. Scripts scale, reduce error risk, and support repeatability across updates.

Tip: If you’re new to scripting, start with a small test set to verify behavior before full merge.

- 3

Normalize headers and encoding

Ensure all files share the same header names and encoding (UTF-8 preferred). If headers differ, map each column to a common schema before merging.
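One way to normalize a single file before the merge is sketched below. The function name `normalize_csv` and the `rename` mapping are hypothetical; the source encoding and delimiter are values you would supply after inspecting the file. The sketch rewrites the file as comma-delimited UTF-8 and maps header names onto the common schema.

```python
import csv


def normalize_csv(src_path, dst_path, rename, src_encoding="utf-8", delimiter=","):
    """Rewrite a CSV as comma-delimited UTF-8 with standardized header names."""
    with open(src_path, newline="", encoding=src_encoding) as src, \
            open(dst_path, "w", newline="", encoding="utf-8") as dst:
        reader = csv.reader(src, delimiter=delimiter)
        writer = csv.writer(dst)
        header = next(reader, None)
        if header is None:
            return []  # empty input: nothing to normalize
        # Names missing from the mapping are kept, with surrounding spaces trimmed.
        new_header = [rename.get(name.strip(), name.strip()) for name in header]
        writer.writerow(new_header)
        writer.writerows(reader)  # data rows pass through unchanged
        return new_header
```

For example, `normalize_csv("raw.csv", "clean.csv", {"Cust ID": "customer_id"}, src_encoding="latin-1", delimiter=";")` would convert a semicolon-delimited Latin-1 export; the file names and mapping here are placeholders.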

Tip: Create a reference header, then align each file to it before the merge.

- 4

Merge files while preserving data integrity

Append rows from each file in a consistent order. If a file has missing columns, fill with nulls to preserve schema shape.

Tip: Test with a small subset to confirm that all columns align and no data is lost.

- 5

Validate the merged output

Check row counts, sample records, and spot-check key fields. Compare sums, unique identifiers, or hashes to ensure integrity across sources.
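A sketch of an aggregate comparison, assuming the data has a unique identifier column and a numeric column; both column names are parameters, and `column_summary` is a hypothetical helper. Computing the same summary over the source files and over the merged file, then comparing the two, covers row counts, unique identifiers, and sums in one pass.

```python
import csv
from decimal import Decimal


def column_summary(paths, key_column, amount_column, encoding="utf-8"):
    """Summarize row count, unique keys, and the sum of a numeric column."""
    ids = set()
    total = Decimal("0")
    rows = 0
    for path in paths:
        with open(path, newline="", encoding=encoding) as handle:
            for row in csv.DictReader(handle):
                ids.add(row[key_column])
                # Empty cells count as zero; non-numeric text raises an error.
                total += Decimal(row[amount_column] or "0")
                rows += 1
    return {"rows": rows, "unique_ids": len(ids), "total": total}
```

The check itself is then `column_summary(source_paths, "id", "amount") == column_summary([merged_path], "id", "amount")`, with the column names replaced by your own. `Decimal` is used instead of `float` so that sums of monetary values compare exactly.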

Tip: Run a quick script that compares a few aggregates against the source files.

- 6

Document and save the pipeline

Capture the exact commands or scripts used, input file names, and output location. Version control the scripts to enable reproducibility.

Tip: Include a README with the schema, encoding, and any edge-case notes.

- 7

Handle edge cases and scale up

Prepare for new inputs by parameterizing file paths and headers. Re-run the merge on a larger batch only after confirming stability on the test set.

Tip: Use a logging mechanism to track successes and any anomalies during merges.

- 8

Plan for incremental updates

If new CSVs will arrive regularly, design the pipeline to merge only new files, or to append incremental data while avoiding duplicates.

Tip: Store a manifest of processed files to prevent re-processing.
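A manifest can be as simple as a JSON list of file names. The sketch below is one possible shape, not a prescribed format: the helper names are hypothetical, and it assumes file names are unique and that a file never changes after it has been processed.

```python
import json
from pathlib import Path


def load_manifest(manifest_path):
    """Return the set of file names already merged (empty if no manifest yet)."""
    path = Path(manifest_path)
    if not path.exists():
        return set()
    return set(json.loads(path.read_text(encoding="utf-8")))


def select_new_files(candidates, manifest_path):
    """Keep only the candidate paths whose file name is not in the manifest."""
    done = load_manifest(manifest_path)
    return [p for p in candidates if Path(p).name not in done]


def record_processed(paths, manifest_path):
    """Add the given files to the manifest after a successful merge."""
    done = load_manifest(manifest_path) | {Path(p).name for p in paths}
    Path(manifest_path).write_text(json.dumps(sorted(done), indent=2), encoding="utf-8")
```

Calling `record_processed` only after the merge and its validation succeed means a failed run leaves the manifest unchanged, so the same files are picked up again on the next attempt.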

People Also Ask

What is the best method to merge CSV files for a small dataset?

For a small dataset with identical headers, simple manual concatenation or a quick script is usually enough. Ensure the header line is not duplicated and verify the final row count matches expectations.

For small datasets with identical headers, a quick manual merge or small script works well. Just check the header and the final row count.

How do I handle differing headers across input files?

Create a unified schema that covers all columns, map each file to that schema, and fill missing fields with nulls. This preserves all data while maintaining a consistent output format.

Unify headers into one schema, map each file to it, and fill missing fields with nulls.

What about different encodings or delimiters?

Prefer UTF-8 encoding and commas as delimiters. If files use other encodings or delimiters, normalize them prior to merging to avoid data corruption.

Use UTF-8 with comma delimiters; normalize others before merging.

Can I merge files in place without creating duplicates?

Yes. Track processed files and use incremental merges or de-duplication logic based on a unique key. This helps avoid re-merging the same data.

Yes, use incremental merges and de-duplication using a unique key to avoid duplicates.
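De-duplication on a unique key might look like the sketch below. The name `dedupe_by_key` is hypothetical, and the sketch assumes the first occurrence of each key is the one to keep. It holds every key seen so far in memory, so very large files may call for a database or a sort-based approach instead.

```python
import csv


def dedupe_by_key(in_path, out_path, key_column, encoding="utf-8"):
    """Copy a CSV, keeping only the first row for each value of key_column."""
    seen = set()
    kept = 0
    with open(in_path, newline="", encoding=encoding) as src, \
            open(out_path, "w", newline="", encoding=encoding) as dst:
        reader = csv.DictReader(src)
        writer = csv.DictWriter(dst, fieldnames=reader.fieldnames or [])
        writer.writeheader()
        for row in reader:
            key = row[key_column]
            if key in seen:
                continue  # a later duplicate of a row already written
            seen.add(key)
            writer.writerow(row)
            kept += 1
    return kept
```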

How can I verify the merge result efficiently?

Perform row-count checks, spot-check fields, and compare aggregates against the sum of input files. Automated tests help ensure reliability for ongoing tasks.

Count rows, check samples, and compare aggregates to confirm accuracy.

What if input files are very large?

Use chunked processing or streaming approaches to avoid memory issues. Consider alternative formats (like Parquet) for heavy analytics workloads.

For very large files, process in chunks and consider formats like Parquet for analytics.

Is there a recommended tool for non-developers?

Yes. Several GUI tools offer CSV merge capabilities; however, ensure you understand the underlying steps to maintain reproducibility and auditability.

There are GUI tools, but keep notes on steps for reproducibility.


Main Points

- Back up all inputs before merging.

- Ensure headers and encoding are consistent across files.

- Choose a repeatable method for scalability.

- Validate the merged output against source data.

- Document the process for reproducibility.