CSV Merge Files: Practical Methods for 2026 Data Workflows

Learn practical, reliable methods to merge CSV files—stacking rows or joining columns—using code-free tools or Python, with tips for data quality, encoding, and validation in 2026.

You can merge CSV files by stacking rows when headers align, or by joining columns when you need a broader dataset. Start with consistent headers and encoding, then choose a method based on your goal: append rows for a unified table, or merge on a key for a relational-like result. This guide covers code-free and code-based options.

What merging CSV files means in practice

Merging CSV files is a common data preparation task that lets analysts build a single, cohesive dataset from multiple sources. When you merge, you decide whether to stack records (append rows) or to join columns (side by side), depending on your data model and reporting needs. Merging covers both strategies, so understanding when to apply each is essential for accuracy and reproducibility. According to MyDataTables, the best merges start with a canonical schema: the same column names, in the same order, and the same encoding across all files. This consistency prevents misaligned data and accidental data loss as you combine sources.

In any merge scenario, it’s critical to establish a guardrail: a clear target schema, a known delimiter, and a defined handling rule for missing values. By planning upfront, you reduce the risk of introducing duplicates, orphan columns, or mismatched rows that can undermine downstream analytics. This section lays the groundwork for practical, repeatable CSV merge processes.

- Recognize whether you are stacking rows or combining columns; this choice drives the tooling and approach.

- Ensure the headers are identical across files for a simple append, or map them when joining.

- Decide how to handle missing values and duplicate rows before you merge.

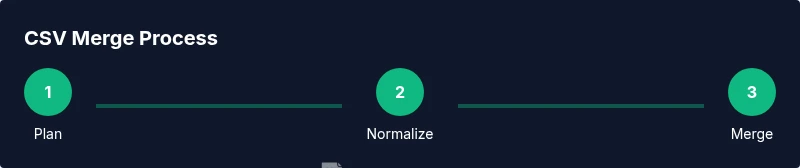

When you work with large CSVs, performance considerations become important. Use streaming I/O, process in chunks, and validate results incrementally. The MyDataTables team emphasizes planning a merge workflow as a series of repeatable steps to ensure auditability and ease of maintenance.

Merge patterns: appending rows vs joining columns

Two core patterns address most CSV merge tasks: appending rows (stacking data) and joining columns (enriching data). Appending rows is straightforward when every file shares the same schema. You simply concatenate the rows, then deduplicate if necessary. Joining columns, on the other hand, requires a key column that exists in every file being joined. You perform a left, right, inner, or outer join depending on whether you want to preserve all rows or only matched ones.

Practical examples help illustrate the difference. If you have three quarterly sales reports with identical columns (date, region, sales), appending rows produces a single annual file. If you have customer data with shared IDs across systems (customers.csv and orders.csv with customer_id as the key), joining columns creates a richer dataset that combines attributes from both sources. The key is to select an approach that preserves data integrity while meeting analysis needs.
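The two patterns can be sketched with small in-memory tables. This is a minimal illustration, assuming pandas is installed; the sample rows, names, and totals below are invented for the example and are not taken from any real dataset.

```python
import io

import pandas as pd

# Hypothetical quarterly reports with identical columns (date, region, sales).
q1 = pd.read_csv(io.StringIO("date,region,sales\n2025-01-15,North,100\n2025-02-20,South,150\n"))
q2 = pd.read_csv(io.StringIO("date,region,sales\n2025-04-10,North,120\n"))

# Pattern 1: append rows. The columns stay the same; the row count grows.
annual = pd.concat([q1, q2], ignore_index=True)

# Hypothetical customers/orders data sharing the key column customer_id.
customers = pd.read_csv(io.StringIO("customer_id,name\n1,Ada\n2,Grace\n3,Linus\n"))
orders = pd.read_csv(io.StringIO("customer_id,order_total\n1,50.0\n1,25.0\n2,80.0\n4,10.0\n"))

# Pattern 2: join columns. The `how` argument decides which rows survive.
inner = customers.merge(orders, on="customer_id", how="inner")  # matched keys only
left = customers.merge(orders, on="customer_id", how="left")    # every customer kept
outer = customers.merge(orders, on="customer_id", how="outer")  # every key from both sides

print(len(annual), len(inner), len(left), len(outer))
```

Note that a join can change the row count in both directions: a customer with two orders appears twice, and an unmatched key is either dropped or padded with missing values depending on the join type.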

To plan correctly, create a small test file that mimics your real data and perform a dry run. Validate the results by spot-checking a few rows and comparing row counts before and after the merge. This reduces surprises when you scale up to the full dataset.
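A dry run of this kind might look like the following sketch. The helper name dry_run_append, the sample_rows parameter, and the test file names are hypothetical; the idea is only to read a small slice of each file, append, and compare counts and columns.

```python
import tempfile
from pathlib import Path

import pandas as pd


def dry_run_append(paths, sample_rows=100):
    """Append only the first `sample_rows` rows of each file and report what to expect."""
    samples = [pd.read_csv(p, nrows=sample_rows) for p in paths]
    merged = pd.concat(samples, ignore_index=True)
    expected_rows = sum(len(s) for s in samples)
    # If the merged column set differs from the first file's, the schemas do not line up.
    same_schema = set(merged.columns) == set(samples[0].columns)
    return merged, expected_rows, same_schema


# Tiny test files that mimic the real data (contents invented for illustration).
with tempfile.TemporaryDirectory() as tmp:
    a = Path(tmp) / "test_a.csv"
    b = Path(tmp) / "test_b.csv"
    a.write_text("id,value\n1,10\n2,20\n", encoding="utf-8")
    b.write_text("id,value\n3,30\n", encoding="utf-8")

    merged, expected_rows, same_schema = dry_run_append([a, b])
    print(len(merged), expected_rows, same_schema)
```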

Prerequisites: headers, encoding, and delimiters

Successful CSV merges start with standardization. Ensure headers are consistent across files, and confirm that the delimiter matches in every file. Encoding should be uniform, preferably UTF-8, to avoid misinterpreting special characters. If different files use different headers, you must either rename columns to a canonical set or map sources to a single schema before merging.

Additionally, confirm the data types for key columns. Numeric columns should not be stored as text, and date values should be parsed into a consistent format. If you anticipate international data, verify that decimal separators and thousand separators are uniform. These upfront checks save time and minimize downstream cleaning.

When working with Excel or Sheets, consider using built-in data tools or add-ons to standardize headers and import settings. For code-based workflows, implement a small normalization step that harmonizes column names and data types before the merge.
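For a code-based workflow, a normalization step could look roughly like this. It is a sketch under assumptions: the helper normalize_columns and the sample export (semicolon delimiter, comma decimals, dot thousands separators) are made up for illustration, while sep, decimal, thousands, dtype, and parse_dates are standard pandas read_csv parameters.

```python
import io

import pandas as pd


def normalize_columns(df):
    """Trim, lowercase, and snake_case column names (hypothetical helper)."""
    out = df.copy()
    out.columns = out.columns.str.strip().str.lower().str.replace(r"\s+", "_", regex=True)
    return out


# Hypothetical European-style export: ';' delimiter, ',' decimals, '.' thousands.
raw = "Order ID;Order Date;Amount\n001;2025-03-01;1.234,50\n002;2025-03-02;99,90\n"

df = pd.read_csv(
    io.StringIO(raw),
    sep=";",
    decimal=",",
    thousands=".",
    dtype={"Order ID": str},      # keep the key as text so leading zeros survive
    parse_dates=["Order Date"],   # parse dates into one consistent type
)
df = normalize_columns(df)
print(df.dtypes)
```

Reading the key column as text is a deliberate choice here: identifiers such as 001 would otherwise be parsed as the number 1 and might no longer match the same key in another file.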

Code-free strategies: Excel, Google Sheets, and basic workflow tips

Code-free CSV merging can be surprisingly powerful for many use cases. In Excel, load each source file into Power Query, then use Append Queries to stack tables or Merge Queries to join them. Power Query provides a UI to map columns, define join keys, and apply filters before loading the final result. In Google Sheets, import each CSV into a spreadsheet first, then use IMPORTRANGE to pull data from those spreadsheets and QUERY to consolidate; IMPORTRANGE reads from other Sheets files, not from raw CSV files. Both tools support basic deduplication and quick validation steps.

Here are practical tips for code-free merges:

- Always import with UTF-8 encoding and the same delimiter to avoid character corruption.

- Turn off automatic data type detection during import when needed, so Excel does not reinterpret values such as IDs with leading zeros as numbers or dates.

- After merging, sort by a primary key and sweep for missing or duplicated rows.

- Save the final result as CSV with a descriptive name and a date stamp for traceability.

If you’re collaborating, store the intermediate merged file and the original sources in a versioned folder to track changes over time.

Code-based merges: Python with Pandas for robust handling

Code-based merges offer precision, versioning, and scalability. Python with Pandas is a popular choice because it can handle large files efficiently and supports complex join logic. Example workflows include appending multiple CSV files with pd.concat and performing merges with pd.merge on a common key.

Sample snippet:

import pandas as pd
from pathlib import Path

# List your CSV files (sorted so the file order is predictable)
files = sorted(Path('.').glob('data_*.csv'))
dfs = [pd.read_csv(f, encoding='utf-8') for f in files]

# Append example: stack rows and save the result
merged = pd.concat(dfs, ignore_index=True)
merged.to_csv('merged_output.csv', index=False, encoding='utf-8')

# Or a join example: merge the first two files on a key column 'id'
# (requires at least two input files that both contain 'id')
left = dfs[0]
right = dfs[1]
joined = pd.merge(left, right, on='id', how='outer')

Notes:

- Use chunksize for very large files to avoid memory issues.

- Validate the merged output by comparing row counts and sampling keys.

- After a join, Pandas adds suffixes (by default _x and _y) to overlapping non-key column names; drop or rename the duplicates you do not need.

If you’re new to Pandas, start with concatenation for simple append tasks and then experiment with merge for more complex integrations. This approach gives you a robust, repeatable workflow that scales as your CSV datasets grow.
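Overlapping column names after a join can be handled explicitly, as in this sketch. The column names and values are invented, and keeping the right-hand copy of updated is an arbitrary choice made for the example; suffixes is a standard pd.merge parameter.

```python
import pandas as pd

# Two hypothetical sources that both carry an 'updated' column.
left = pd.DataFrame({
    "id": [1, 2],
    "name": ["Ada", "Grace"],
    "updated": ["2025-01-01", "2025-01-02"],
})
right = pd.DataFrame({
    "id": [1, 2],
    "email": ["ada@example.com", "grace@example.com"],
    "updated": ["2025-02-01", "2025-02-02"],
})

# Name the suffixes explicitly so the origin of each column is obvious.
joined = pd.merge(left, right, on="id", how="outer", suffixes=("_left", "_right"))

# Keep one copy (here, arbitrarily, the right-hand one) and restore the plain name.
joined = joined.drop(columns=["updated_left"]).rename(columns={"updated_right": "updated"})
print(list(joined.columns))
```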

Handling mismatched headers and extra columns

Mismatched headers are a common hurdle when merging CSV files. Start by defining a canonical schema: a single set of column names that all inputs should conform to. When a file has an extra column, decide whether to drop it or carry it as a new field with a null value for rows lacking that column. If a file lacks a column present in others, fill the gap with a meaningful default or mark it as missing (null).

To align headers programmatically:

- Create a header map that renames non-standard column names to the canonical names.

- Use consistent data types across sources.

- For joins, ensure join keys exist in all inputs; if not, pre-create the key with a null value.

In spreadsheets, you can achieve this with a simple rename and a VLOOKUP-like join to align data. In code, a pre-step that applies a uniform header set minimizes later surprises and reduces the need for repeated cleanup.

If you encounter conflicting column names with different meanings, rename one to preserve the intent. Clear naming makes downstream analytics more reliable.
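One way to apply a header map and a canonical schema in pandas is sketched below. CANONICAL, HEADER_MAP, and the conform helper are hypothetical names, and the mapping itself is invented; a real map would come from inspecting your own files.

```python
import pandas as pd

# Hypothetical canonical schema and header map.
CANONICAL = ["date", "region", "sales"]
HEADER_MAP = {
    "Date": "date",
    "Region Name": "region",
    "Total Sales": "sales",
    "Sales": "sales",
}


def conform(df, keep_extra=False):
    """Rename known variants, then align columns to the canonical schema."""
    renamed = df.rename(columns=HEADER_MAP)
    if keep_extra:
        extras = [c for c in renamed.columns if c not in CANONICAL]
        return renamed.reindex(columns=CANONICAL + extras)
    # Extra columns are dropped; missing canonical columns are created as null.
    return renamed.reindex(columns=CANONICAL)


a = pd.DataFrame({"Date": ["2025-01-01"], "Region Name": ["North"], "Total Sales": [100]})
b = pd.DataFrame({"date": ["2025-02-01"], "Sales": [50], "notes": ["promo"]})  # no region, extra notes

merged = pd.concat([conform(a), conform(b)], ignore_index=True)
print(merged)
```

Using reindex rather than plain column selection means a file that lacks a canonical column does not raise an error; the column is created and filled with missing values, which matches the "mark it as missing" rule described above.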

Performance considerations for very large CSVs

Large CSVs can overwhelm memory and slow down merges. When files are massive, use streaming or chunked processing. Pandas supports chunksize in read_csv, allowing you to process data in portions and append results incrementally. In Excel or Sheets, break the task into smaller batches. Power Query's query folding pushes work to database sources and generally does not apply to flat CSV files, so remove unneeded rows and columns as early as possible in the query instead.

Key performance tips:

- Process in chunks and write out intermediate results before the final merge.

- Filter early to remove unnecessary columns and rows.

- Use a consistent encoding and delimiters to minimize parsing overhead.

- Leverage external storage or databases for extremely large datasets if your workflow grows beyond CSV capabilities.

Monitoring progress with a simple log (e.g., number of rows processed) helps identify bottlenecks early and makes troubleshooting easier.
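A chunked append with a simple progress log might be sketched as follows. The helper append_in_chunks and the file names are hypothetical, and the sketch assumes every input already has the same columns in the same order; chunksize is the standard read_csv parameter mentioned above.

```python
import tempfile
from pathlib import Path

import pandas as pd


def append_in_chunks(paths, out_path, chunksize=100_000):
    """Stream each input in chunks and append it to out_path; return rows written."""
    total = 0
    first = True
    for path in paths:
        for chunk in pd.read_csv(path, chunksize=chunksize):
            # Write the header once, then append without it.
            chunk.to_csv(out_path, mode="w" if first else "a", header=first, index=False)
            first = False
            total += len(chunk)
            print(f"{Path(path).name}: {total} rows written so far")
    return total


# Small demonstration files (contents invented); chunksize=2 forces several chunks.
with tempfile.TemporaryDirectory() as tmp:
    tmp_dir = Path(tmp)
    inputs = []
    for name, rows in [
        ("part_a.csv", "id,value\n1,10\n2,20\n3,30\n"),
        ("part_b.csv", "id,value\n4,40\n5,50\n"),
    ]:
        path = tmp_dir / name
        path.write_text(rows, encoding="utf-8")
        inputs.append(path)

    out_path = tmp_dir / "merged_output.csv"
    total_rows = append_in_chunks(inputs, out_path, chunksize=2)
    result = pd.read_csv(out_path)
```

Only one chunk is held in memory at a time, so peak memory depends on chunksize rather than on the total size of the inputs.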

Validation, testing, and quality checks after merge

Validation is the step that distinguishes a good merge from a bad one. Start by checking the row count after an append to ensure all input data is included. For joins, verify that the number of matched keys aligns with expectations. Check for duplicate rows or columns created by merges and confirm that key fields maintain data integrity.

Recommended checks:

- Compare sums or counts of key columns before and after the merge.

- Sample random rows and inspect a few fields for consistency.

- Validate that date formats, numeric values, and textual fields did not drift during the merge.

- Run a quick audit for missing values in critical columns and address them.

Document the merge process and include the exact files used as inputs, the tool, and the options chosen. This traceability is essential for reproducibility and audits.
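Several of these checks can be automated. The following is a sketch on invented data, not a complete audit; validate and indicator are standard pd.merge parameters, and the report dictionary is just one possible way to collect results.

```python
import pandas as pd

# Hypothetical inputs for a join on customer_id.
customers = pd.DataFrame({"customer_id": [1, 2, 3], "name": ["Ada", "Grace", "Linus"]})
orders = pd.DataFrame({"customer_id": [1, 1, 2, 4], "order_total": [50.0, 25.0, 80.0, 10.0]})

# validate="one_to_many" raises MergeError if customer_id repeats in `customers`;
# indicator=True adds a _merge column recording where each row came from.
joined = customers.merge(
    orders, on="customer_id", how="outer", validate="one_to_many", indicator=True
)

report = {
    "matched_rows": int((joined["_merge"] == "both").sum()),
    "customers_without_orders": int((joined["_merge"] == "left_only").sum()),
    "orders_without_customer": int((joined["_merge"] == "right_only").sum()),
    # A column total should survive the merge unchanged.
    "order_total_preserved": bool(joined["order_total"].sum() == orders["order_total"].sum()),
    "duplicate_rows": int(joined.drop(columns="_merge").duplicated().sum()),
    "missing_names": int(joined["name"].isna().sum()),
}
print(report)
```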

Common pitfalls and best-practice checklist

Even experienced analysts fall into common traps when merging CSV files. Avoid treating text columns as numbers, overlooking encoding differences, or forgetting to normalize headers. Create a reusable checklist that includes: header standardization, encoding alignment, delimiter consistency, join key validation, and a final validation pass. Keep a versioned log of changes and a changelog for each merged output.

Best practices:

- Always start with a small test merge before scaling up.

- Use a canonical schema and document any deviations.

- Validate post-merge results with automated checks when possible.

- Save a snapshot of the original inputs and the merged output for auditability.

Tools & Materials

- CSV files to merge (Two or more files with compatible headers)

- Text editor or spreadsheet app (For quick edits and header normalization)

- Backup copy of all CSV files (Store on external drive or cloud storage)

- Uniform encoding and delimiter awareness (UTF-8 as standard; ensure consistent delimiter)

- Python environment (optional) (If using Pandas for merges and joins)

- Pandas library (optional) (Needed for Python-based merges)

Steps

Estimated time: 60-120 minutes

1. Back up and inventory source files

Create exact copies of all CSV files to merge and note their locations, encodings, and delimiters. This safeguards against data loss and provides a rollback if needed.

Tip: Include a dated folder name and any relevant metadata (source system, data cut-off).

2. Choose the merge pattern

Decide whether you will append rows or join columns. This decision determines the subsequent normalization and tooling.

Tip: If you’re unsure, start with a simple append (row-stacking) to validate data flow.

3. Normalize headers and encoding

Standardize column names across files and ensure the same encoding and delimiter in every file.

Tip: Use a canonical header map to minimize surprises during the merge.

4. Pick your toolset

For quick tasks, use Excel/Sheets with Power Query or basic formulas; for robust tasks, use Python with Pandas or a shell script.

Tip: If large files are involved, Python with chunking is often more scalable.

5. Execute the merge

Perform the actual merge according to the chosen pattern, and save an intermediate result for validation.

Tip: Keep a log of operations and exact options used (join keys, how, etc.).

6. Validate the result

Check row counts, key integrity, and sample values to ensure the merge behaved as expected.

Tip: Automate a quick data quality check where possible.

People Also Ask

How do I merge CSV files with the same headers?

If headers match, you can append rows or perform a simple left/right join depending on your goal. In spreadsheets, use Power Query; in Python, use pd.concat or a straightforward pd.merge when adding columns.

If headers match, just stack the files or join on a key, using a simple tool like Power Query or a small Python script.

What if headers differ between files?

Standardize headers by mapping to a canonical set before merging. Rename columns to align names, or create a header map that translates inputs to the target schema. This reduces confusion and ensures a clean merge.

If headers differ, map them to a single standard set before merging.

Can I merge with different delimiters?

Yes, but you must convert to a common delimiter and encoding before the merge. Re-save each source file with the same delimiter to avoid parsing errors.

Yes, convert to a single delimiter before merging.
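In pandas, one way to do this is to state each file's delimiter explicitly when reading and let the output use a single one. The sketch below uses in-memory text in place of real files, and the sample rows are invented; sep is the standard read_csv parameter.

```python
import io

import pandas as pd

# Hypothetical sources, each paired with the delimiter it is known to use.
sources = [
    (io.StringIO("id,name\n1,Ada\n"), ","),
    (io.StringIO("id;name\n2;Grace\n"), ";"),
    (io.StringIO("id\tname\n3\tLinus\n"), "\t"),
]

# Read each source with its own delimiter; after parsing, the delimiter no longer matters.
frames = [pd.read_csv(handle, sep=sep) for handle, sep in sources]
merged = pd.concat(frames, ignore_index=True)

# to_csv writes commas by default, so the output has one consistent delimiter.
csv_text = merged.to_csv(index=False)
print(csv_text)
```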

Is it possible to merge very large CSV files efficiently?

Yes. Use chunked processing, streaming reads, and avoid loading all data into memory at once. Tools like Pandas with chunksize or database-backed workflows help manage scale.

Yes—process in chunks and use efficient tools.

Which encoding should I use to prevent data loss?

UTF-8 is the safest default encoding for CSV merges. If you must use another encoding, specify it when reading and writing to avoid data loss.

Use UTF-8 when possible.
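Specifying the encoding on both read and write could look like this sketch. The file names and the Latin-1 legacy file are assumptions made for the example; in practice you would need to know, or find out, each source file's actual encoding.

```python
import tempfile
from pathlib import Path

import pandas as pd

with tempfile.TemporaryDirectory() as tmp:
    # Hypothetical legacy export saved as Latin-1, alongside a UTF-8 file.
    legacy = Path(tmp) / "legacy.csv"
    legacy.write_bytes("id,city\n1,München\n".encode("latin-1"))
    modern = Path(tmp) / "modern.csv"
    modern.write_text("id,city\n2,Kraków\n", encoding="utf-8")

    # State each file's encoding when reading so characters decode correctly.
    frames = [
        pd.read_csv(legacy, encoding="latin-1"),
        pd.read_csv(modern, encoding="utf-8"),
    ]
    merged = pd.concat(frames, ignore_index=True)

    # Write the merged result as UTF-8 and read it back as a round-trip check.
    out = Path(tmp) / "merged_utf8.csv"
    merged.to_csv(out, index=False, encoding="utf-8")
    round_trip = pd.read_csv(out, encoding="utf-8")

print(round_trip["city"].tolist())
```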


Main Points

- Plan merge workflows as repeatable steps

- Standardize headers before merging

- Validate results with spot checks and counts

- Choose code-based methods for large or complex merges

- Document inputs and options for auditability