Convert HTML to CSV: A Practical Step-by-Step Guide for Data

Learn to convert HTML tables to CSV with practical steps, handling nested headers and complex structures using MyDataTables expert guidance.

You will extract table data from HTML and save it as a CSV, preserving headers and handling nested tables or merged cells. This guide covers manual approaches and automated tools, plus best practices for clean CSV output. By the end, you’ll have a reusable workflow to convert any HTML table into a flat, comma-delimited dataset.

Why converting HTML to CSV matters in data workflows

According to MyDataTables, converting HTML to CSV is a common data-prep task for analysts who need portable, text-based tables for analysis, sharing, or ingestion into databases. HTML tables often appear on internal dashboards, report pages, or scraped web data, and turning them into CSV unlocks easy processing with tools like spreadsheets, Python, or databases. The goal is to preserve the structure of the original table while producing a clean, consistently delimited file that can be consumed by downstream systems. This guide explains why the conversion matters, the challenges you may encounter, and reliable workflows that work across small and large tables.

Beyond a simple copy, HTML-to-CSV requires thoughtful handling of headers, merged cells, and inconsistent row lengths to prevent misaligned data. When done correctly, the resulting CSV becomes a robust input for analytics pipelines, BI dashboards, and data exports. The MyDataTables team emphasizes repeatability: establish a standard approach so your team can reproduce results across similar HTML sources.

HTML table anatomy and how its structure maps to CSV

HTML tables are built from a few core elements: <table>, <thead>, <tbody>, <tr>, <th>, and <td>. Headers typically live in <thead> and define the column meanings, while data rows live in <tbody>. When you convert to CSV, each table row becomes a CSV line, and each cell becomes a field separated by a delimiter (usually a comma).

Key mapping considerations include how to handle multilevel headers, colspan and rowspan attributes, and nested tables. A cell with colspan covers several columns, so its label has to be repeated or merged into each column it spans. A cell with rowspan covers several rows, so the rows below it contain fewer cells than there are columns and will shift left unless the value is carried down. A robust conversion workflow flattens these structures into a single header row and uniform data rows. If the HTML table includes nested tables, you must decide whether to inline nested data or treat nested tables as separate CSV sections. The mapping decisions you make here determine the clarity and usability of the final CSV.
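To make the colspan and rowspan mapping concrete, here is a minimal Python sketch that expands spanned cells into a rectangular grid. It assumes the cells have already been extracted as (text, colspan, rowspan) tuples; the function name expand_spans and that input shape are illustrative choices, not part of any library.

```python
def expand_spans(rows):
    """Expand (text, colspan, rowspan) cells into a rectangular grid of strings."""
    grid = []
    pending = {}  # column index -> (text, number of further rows to fill)
    for row in rows:
        out = []
        col = 0
        cells = iter(row)
        while True:
            # A cell from an earlier row still covers this column: carry it down.
            if col in pending:
                text, remaining = pending[col]
                out.append(text)
                if remaining > 1:
                    pending[col] = (text, remaining - 1)
                else:
                    del pending[col]
                col += 1
                continue
            cell = next(cells, None)
            if cell is None:
                break
            text, colspan, rowspan = cell
            # Repeat the value once per spanned column.
            for _ in range(colspan):
                out.append(text)
                if rowspan > 1:
                    pending[col] = (text, rowspan - 1)
                col += 1
        grid.append(out)
    return grid


# Hypothetical table: "Name" spans two header rows, "Score" spans two columns.
table = [
    [("Name", 1, 2), ("Score", 2, 1)],
    [("Math", 1, 1), ("Art", 1, 1)],
    [("Alice", 1, 1), ("90", 1, 1), ("85", 1, 1)],
]
grid = expand_spans(table)
# grid == [["Name", "Score", "Score"],
#          ["Name", "Math", "Art"],
#          ["Alice", "90", "85"]]
```

Repeating the spanned value in every covered position is only one possible convention; leaving the extra positions blank is equally valid as long as it is applied consistently.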

Approaches to convert: manual vs automated

There are two broad approaches: manual conversion and automated extraction. Manual conversion is feasible for small tables or one-off tasks: you copy the table HTML, paste it into a text editor, clean up the header row, and reformat rows as CSV lines. This approach provides full control but is time-consuming and error-prone for larger datasets. Automated methods scale well and reduce human error. Common automation paths include:

- Python with pandas.read_html to parse HTML and export to CSV.

- Node.js with cheerio or jsdom to extract table data and write CSV.

- Command-line tools or browser-based extractors for quick captures.

Choosing between approaches depends on table size, data complexity (headers, merged cells), and how frequently you perform the task. MyDataTables guidance favors automated paths for repeatable workflows, with manual steps reserved for quick checks or simple tables.

Manual workflow: copy, flatten headers, and write CSV

A careful manual workflow begins with selecting the target table in your browser or HTML file and copying the raw HTML. Next, paste the HTML into a text editor and strip extraneous markup, retaining only the header and data cells. Flatten any multi-row header into a single row of names. Then assemble each data row into a CSV line, ensuring proper escaping of commas, quotes, and newline characters. Finally, save the file with a .csv extension and open it in a CSV viewer or editor to visually confirm alignment. This approach is practical for small datasets or quick validations, but remember to verify that no data were truncated during the copy-paste process.
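Escaping by hand is the step most likely to go wrong in a manual workflow. As a rough illustration, Python's standard csv module applies the usual quoting rules for you; the sample rows below are invented for the example.

```python
import csv
import io

# Invented sample rows containing a comma, embedded quotes, and a newline.
rows = [
    ["Name", "Note"],
    ["Smith, Jane", 'Said "hello"'],
    ["Lee", "line one\nline two"],
]

buffer = io.StringIO()
# When writing to a real file, open it with newline="" and encoding="utf-8".
writer = csv.writer(buffer, lineterminator="\n")
writer.writerows(rows)
csv_text = buffer.getvalue()

# Reading the text back should reproduce the original rows exactly.
round_trip = list(csv.reader(io.StringIO(csv_text)))
```

Fields containing a comma, a quote, or a newline are wrapped in double quotes, and embedded quotes are doubled, which is the same convention you would need to apply when building lines by hand.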

Extraction with Python: using pandas.read_html to pull tables

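Python’s pandas library offers a reliable way to parse HTML tables directly from strings, files, or web pages. It relies on an HTML parser backend such as lxml, or BeautifulSoup with html5lib, so one of those must be installed alongside pandas. A typical workflow loads HTML into a string, wraps it in io.StringIO (recent pandas versions deprecate passing literal HTML directly), calls pandas.read_html, and retrieves a list of DataFrames, one per table found. You can select the desired table, then export it to CSV with df.to_csv('output.csv', index=False). Here is a minimal example:

    from io import StringIO

    import pandas as pd

    html = '''<table><thead><tr><th>Name</th><th>Age</th></tr></thead><tbody><tr><td>Alice</td><td>30</td></tr></tbody></table>'''

    # Wrap literal HTML in StringIO; recent pandas versions deprecate passing it directly.
    dfs = pd.read_html(StringIO(html))
    df = dfs[0]  # first table
    df.to_csv('output.csv', index=False)

This approach handles headers automatically and scales to larger tables, but it requires a basic Python environment with pandas and an HTML parser installed.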

Extraction with JavaScript/Node.js: parse tables with cheerio

For those preferring JavaScript, Node.js together with libraries like cheerio or jsdom lets you parse HTML and build CSV strings programmatically. A typical script loads HTML, selects rows and cells, escapes values when needed, and writes to a file. This method is useful when your workflow runs in a JS ecosystem or you’re integrating HTML parsing into a larger Node-based pipeline. An example outline:

    const cheerio = require('cheerio');
    const fs = require('fs');

    const html = fs.readFileSync('table.html', 'utf8');
    const $ = cheerio.load(html);
    const rows = [];

    // Visit every row of every table in the document.
    $('table tr').each((i, row) => {
      const cells = [];
      // Collect header and data cells, doubling embedded quotes for CSV.
      $(row).find('th, td').each((_, cell) => {
        cells.push($(cell).text().trim().replace(/"/g, '""'));
      });
      // Quote every field so commas and newlines inside cells stay intact.
      rows.push('"' + cells.join('","') + '"');
    });

    fs.writeFileSync('output.csv', rows.join('\n'));

This JS approach is flexible and integrates with web-facing tooling, but you must handle encoding, escaping, and potential mismatches between header and data rows.

Validation, edge cases, and data quality checks

After exporting CSV, perform quick data quality checks: confirm the number of columns per row matches the header, verify that delimiters are placed correctly, and ensure non-ASCII characters are preserved with UTF-8 encoding. Edge cases include nested tables, merged headers, and missing cells. Flatten multi-row headers before export to minimize misalignment, and consider adding a separate row for any subheadings you decide to retain. If your HTML contains non-table content around the target, isolate the table’s HTML to avoid stray data rows. Regularly run your workflow against several sample pages to ensure consistency.
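The column-count check described above can be sketched in a few lines of Python. The helper name find_ragged_rows and the sample text are assumptions made for illustration.

```python
import csv
import io


def find_ragged_rows(csv_text):
    """Return (line_number, field_count) for rows whose width differs from the header."""
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader, None)
    if header is None:
        return []  # empty input: nothing to compare against
    expected = len(header)
    return [(reader.line_num, len(row)) for row in reader if len(row) != expected]


# Invented sample: the third line is short, the fourth has an extra field.
sample = "Name,Age\nAlice,30\nBob\nCarol,41,extra\n"
problems = find_ragged_rows(sample)
# problems == [(3, 1), (4, 3)]
```

For a file on disk, reading it with open(path, encoding="utf-8", newline="") before passing the text in would also surface encoding errors early, since invalid UTF-8 raises an exception at read time.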

Performance considerations for larger HTML tables

Larger HTML tables pose memory and processing challenges, especially when parsing with full DOM libraries. For very large tables, consider streaming approaches or chunked parsing to avoid loading the entire HTML document into memory. In Python, pandas.read_html can handle sizable inputs, but it parses the whole document in memory, so you may need a machine with more RAM or need to split the HTML and process it piece by piece. In Node.js, streaming parsers or incremental DOM processing can reduce peak memory usage. Finally, validate the resulting CSV with a viewer to catch partial writes or encoding issues before integrating it into downstream systems.
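One way to sketch the streaming idea in Python is the standard library's html.parser.HTMLParser, whose feed() method accepts input in pieces. The class and function names below (TableRowStreamer, stream_html_to_csv) are hypothetical, and the sketch assumes a single, non-nested table without colspan or rowspan.

```python
import csv
import io
from html.parser import HTMLParser


class TableRowStreamer(HTMLParser):
    """Write each <tr> to CSV as soon as it closes, keeping one row in memory."""

    def __init__(self, writer):
        super().__init__(convert_charrefs=True)
        self.writer = writer
        self.row = None   # cells of the row being read, or None outside <tr>
        self.cell = None  # text fragments of the current cell, or None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self.row = []
        elif tag in ("td", "th") and self.row is not None:
            self.cell = []

    def handle_data(self, data):
        # Text can arrive in several fragments, so collect and join later.
        if self.cell is not None:
            self.cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self.cell is not None:
            self.row.append("".join(self.cell).strip())
            self.cell = None
        elif tag == "tr" and self.row is not None:
            self.writer.writerow(self.row)
            self.row = None


def stream_html_to_csv(chunks, out):
    """Feed HTML chunks to the parser and write CSV rows to the open text stream."""
    parser = TableRowStreamer(csv.writer(out, lineterminator="\n"))
    for chunk in chunks:
        parser.feed(chunk)
    parser.close()


html = ("<table><tr><th>Name</th><th>Age</th></tr>"
        "<tr><td>Alice</td><td>30</td></tr></table>")
# Deliberately split the input mid-tag to imitate reading a file in blocks.
chunks = [html[i:i + 7] for i in range(0, len(html), 7)]
out = io.StringIO()
stream_html_to_csv(chunks, out)
```

In practice the chunks would come from reading a file or HTTP response in fixed-size blocks, and out would be a file opened with newline="" and UTF-8 encoding.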

Common pitfalls and practical troubleshooting

Common issues include mismatched columns due to colspan/rowspan, missing headers for data rows, and quotes inside cells that break simple CSV parsers. To mitigate these problems, flatten headers first, standardize delimiter usage, and adopt a quoting strategy (e.g., enclose fields in double quotes and escape embedded quotes). When working with nested tables, decide whether to flatten nested data or export nested data as separate CSVs—keeping a clear convention helps downstream users interpret the data correctly. Finally, document your steps so teammates can reproduce the conversion reliably.
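Flattening headers first can be illustrated with a short Python sketch. It assumes the header rows have already been expanded to the same width (spanned labels repeated in each position); the function name flatten_headers and the underscore separator are arbitrary choices for the example.

```python
def flatten_headers(header_rows, sep="_"):
    """Join the labels stacked above each column into one name per column."""
    names = []
    for column in zip(*header_rows):
        parts = []
        for label in column:
            label = label.strip()
            # Skip blanks and labels repeated from the row above.
            if label and (not parts or parts[-1] != label):
                parts.append(label)
        names.append(sep.join(parts))
    return names


# Hypothetical two-row header, already expanded to three columns.
header_rows = [
    ["Name", "Score", "Score"],
    ["Name", "Math", "Art"],
]
flat = flatten_headers(header_rows)
# flat == ["Name", "Score_Math", "Score_Art"]
```

Writing the flattened names as the single header line keeps every data row aligned with exactly one column label.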

Tools & Materials

- Web browser with Developer Tools (Chrome or Edge; used to inspect and copy the target table’s HTML)

- Text editor (VS Code, Sublime, Notepad++ or equivalent for cleaning HTML and CSV prep)

- Python 3.x + pandas (Optional for the pandas.read_html workflow; install via pip install pandas lxml, since read_html needs an HTML parser)

- Node.js + cheerio/jsdom (Optional for JS-based parsing; install via npm install cheerio or npm install jsdom)

- HTML source file or URL (Local file or URL containing the target table)

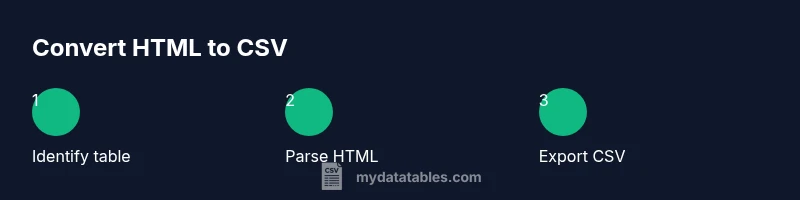

Steps

Estimated time: 25-60 minutes

1. Identify target HTML table

Locate the specific table in the HTML source and confirm it contains the data you need. If multiple tables exist, designate the one to convert and note any structural nuances (headers, merged cells).

Tip: Use browser Inspector/Developer Tools to copy exact table HTML for accuracy.

2. Choose conversion approach

Decide between manual extraction for small tables or automated parsing for larger ones. Your decision should consider data complexity and repeatability.

Tip: Automate when table size or repetition justifies setup time.

3. Normalize headers

Flatten multi-row headers into a single header row. Decide how to handle colspan and rowspan to maintain consistent column alignment.

Tip: Document how headers map to final CSV columns.

4. Extract rows and cells

Parse each table row and capture cell values in the same order as headers. Ensure proper escaping for quotes and delimiters.

Tip: Test with a small subset before full export.

5. Escape and encode

Escape special CSV characters (commas, quotes, newlines) and ensure UTF-8 encoding to preserve non-ASCII content.

Tip: Enclose fields in quotes if they contain delimiters.

6. Save and verify

Write the CSV to disk and open it in a viewer to confirm column alignment and row integrity.

Tip: Check a few representative rows for accuracy.

People Also Ask

What is HTML-to-CSV conversion?

HTML-to-CSV conversion involves extracting data from an HTML table and saving it as a CSV file, preserving row data and headers. It can be done manually or with code.


Can I copy-paste from a webpage into CSV?

Copy-paste can work for simple tables, but complex headers and merged cells often break alignment. Parsing is safer for accuracy.


How do I handle colspan/rowspan during export?

Colspan and rowspan complicate column alignment. Flatten headers and use a consistent mapping to CSV columns before export.


What tools are best for large HTML tables?

Automated parsers like Python with pandas or JavaScript with cheerio scale better than manual methods for large datasets.


How can I validate the resulting CSV?

Open the CSV in a viewer to verify delimiters, row counts, and correct handling of quotes and special characters.



Main Points

- Identify the HTML structure before conversion

- Map headers to CSV columns accurately

- Validate encoding and delimiters after export

- Document the workflow for repeatability