CSV to Table: Practical Guide to Turning CSV into Tables

Learn how to convert CSV data into structured tables, in SQL or HTML. This guide covers schema design, validation, and practical steps for reliable csv to table workflows.

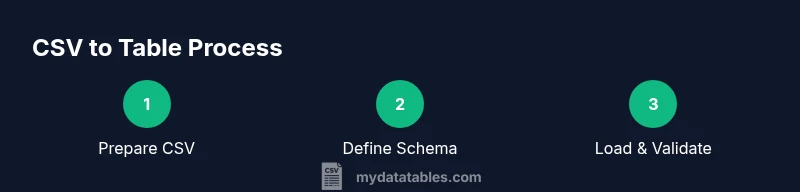

Quickly transform a CSV file into a structured table by mapping each column to a target data type, creating or selecting a destination table, and loading data with proper validation. This guide covers SQL and HTML table outcomes, common pitfalls, and best practices to ensure clean, query-ready results. You’ll learn schema design, import steps, and verification checks.

What csv to table means in practical data work

In practical data work, csv to table describes the process of turning a plain text CSV file into a structured table that can be queried, validated, and analyzed. The operation is common across data pipelines, BI reports, and software integrations. The term covers several outcomes: a SQL table in a database, a temporary staging table created during import, or an HTML table rendered for a dashboard. The core idea is to move from a flexible flat file to a well-defined schema with named columns and consistent data types. A successful conversion relies on clear mapping between CSV headers and table columns, careful handling of missing or malformed values, and a plan for preserving the original data's semantics. From a data governance standpoint, csv to table is not just a one-off export; it is the foundation of repeatable, testable data workflows. According to MyDataTables, steady design decisions early in the process pay dividends later in reliability and speed.

Shared standards help data teams stay aligned. The csv to table workflow serves as the backbone for reproducible analyses, data quality checks, and governance-compliant pipelines. The emphasis here is on practical outcomes rather than theory, so practitioners can turn these ideas into repeatable actions across tools and environments.

MyDataTables also highlights that consistent naming conventions, explicit null handling, and documented data lineage are essential for long-term maintainability. As you move from CSV to a formal table, you’ll reduce ambiguities, lower error rates, and enable easier collaboration across analysts, developers, and business stakeholders. The takeaway is simple: design with the future in mind, not just the current file.
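For the HTML outcome mentioned above, a minimal sketch using only the Python standard library might look like the following. The function name `csv_to_html_table` is hypothetical, and the sketch assumes the first CSV row is the header.

```python
import csv
import html
import io


def csv_to_html_table(csv_text):
    """Render CSV text as an HTML table; the first row is treated as the header."""
    rows = list(csv.reader(io.StringIO(csv_text)))
    if not rows:
        return "<table></table>"
    header, *body = rows
    # Escape every cell so characters like < and & cannot break the markup.
    head_cells = "".join(f"<th>{html.escape(h)}</th>" for h in header)
    lines = ["<table>", f"<thead><tr>{head_cells}</tr></thead>", "<tbody>"]
    for row in body:
        cells = "".join(f"<td>{html.escape(v)}</td>" for v in row)
        lines.append(f"<tr>{cells}</tr>")
    lines += ["</tbody>", "</table>"]
    return "\n".join(lines)
```

Escaping each cell is the main design choice here: CSV content is untrusted text, so it should never be written into markup verbatim.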

Tools & Materials

- CSV file (source data with headers; ensure delimiter consistency)

- Destination table (SQL) or HTML container (define a target structure that matches the CSV schema)

- SQL editor / database client (psql, MySQL Workbench, DBeaver, etc.)

- CSV parser or data processing library (e.g., Python pandas, R readr, or SQL COPY variants)

- Data profiling / validation tools (optional but recommended for quality checks, e.g., df.info() or validation scripts)

- Text editor / IDE (for scripting migrations and documenting schema decisions)

Steps

Estimated time: 60-90 minutes

- 1

Prepare your CSV and environment

Verify the CSV headers, confirm delimiter, and ensure the file is accessible from your environment. Check encoding (prefer UTF-8) and note any missing values or anomalies that will require handling during import.
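As a rough illustration of these pre-import checks, the sketch below decodes the file as UTF-8, reads the header, and flags rows whose field count differs from the header. The function name `inspect_csv` is hypothetical, and the comma delimiter is an assumed default.

```python
import csv
import io


def inspect_csv(raw, delimiter=","):
    """Return (header, problems) for raw CSV bytes."""
    problems = []
    try:
        # "utf-8-sig" accepts plain UTF-8 and also strips a leading byte-order mark.
        text = raw.decode("utf-8-sig")
    except UnicodeDecodeError as exc:
        return [], [f"not valid UTF-8: {exc}"]
    reader = csv.reader(io.StringIO(text, newline=""), delimiter=delimiter)
    header = next(reader, [])
    if not header:
        return header, ["file is empty or has no header row"]
    if len(set(header)) != len(header):
        problems.append("duplicate header names")
    for row in reader:
        # reader.line_num is the physical line on which the current row ended.
        if len(row) != len(header):
            problems.append(
                f"line {reader.line_num}: expected {len(header)} fields, got {len(row)}"
            )
    return header, problems
```

For a file on disk, something like `inspect_csv(open(path, "rb").read())` would work for small files; larger files are better inspected line by line.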

Tip: Run a quick head/tail check on the file to spot header drift or unusual rows.

- 2

Define the target schema

Design a table with column names that map directly to the CSV headers. Assign data types that reflect the expected content (integers, decimals, strings, dates) and decide on nullability and constraints.
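One way to get a first draft of such a schema is to infer a type per column from the data and then review it by hand. The sketch below is a heuristic, not a definitive method: the names `infer_type` and `propose_schema` are hypothetical, the type labels follow SQLite-style names, and Python's `int` and `float` are lenient (they accept surrounding whitespace, for example), so the result should be treated as a proposal.

```python
import csv
import io
from datetime import date


def infer_type(values):
    """Pick the narrowest type label that fits every non-empty value."""
    non_empty = [v for v in values if v != ""]
    if not non_empty:
        return "TEXT"
    candidates = (("INTEGER", int), ("REAL", float), ("DATE", date.fromisoformat))
    for sql_type, parse in candidates:
        try:
            for v in non_empty:
                parse(v)
            return sql_type
        except ValueError:
            continue  # at least one value did not fit; try the next type
    return "TEXT"


def propose_schema(csv_text):
    """Return {column: {"type": ..., "nullable": ...}} for CSV text with a header."""
    reader = csv.DictReader(io.StringIO(csv_text))
    rows = list(reader)
    schema = {}
    for name in reader.fieldnames or []:
        # Short rows yield None for missing fields; treat those as empty.
        column = [row[name] or "" for row in rows]
        schema[name] = {"type": infer_type(column), "nullable": "" in column}
    return schema
```

Inference from a sample can be wrong (an ID column with leading zeros would be proposed as INTEGER), which is one reason to document the final decisions.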

Tip: Document any assumptions about data types so teammates understand the schema rationale.

- 3

Create the destination table

Create the table in your database or establish an in-memory structure for quick previews. Include primary keys or surrogate keys if natural keys are absent.
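A minimal sketch of this step, assuming SQLite as the database and a hypothetical helper `create_staging_table`, could look like this. It adds a surrogate key named `row_id` (an illustrative choice) in front of the mapped columns.

```python
import sqlite3


def create_staging_table(conn, table, columns):
    """Create `table` with a surrogate key plus the given {name: type} columns.

    Names are interpolated into the SQL text, so they must come from a
    trusted schema definition, never from user input.
    """
    col_defs = ", ".join(f'"{name}" {sql_type}' for name, sql_type in columns.items())
    conn.execute(
        f'CREATE TABLE IF NOT EXISTS "{table}" '
        f"(row_id INTEGER PRIMARY KEY AUTOINCREMENT, {col_defs})"
    )


# In-memory database for a quick preview; use a file path for persistence.
conn = sqlite3.connect(":memory:")
create_staging_table(conn, "orders_staging", {"id": "INTEGER", "price": "REAL", "name": "TEXT"})
```

Other databases use different syntax for auto-incrementing keys, so the `CREATE TABLE` text would need adjusting outside SQLite.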

Tip: Use a staging table first to minimize risk during the initial load.

- 4

Load data in a controlled, validated fashion

Import in chunks or use bulk load facilities. Validate rows as they load, capture errors, and log problematic lines for retry after corrections.
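The sketch below shows one possible shape for a validated load, assuming SQLite and a hypothetical `people` table with `id`, `name`, and `score` columns. Rows that fail conversion or violate a constraint are collected with their line numbers instead of aborting the load.

```python
import csv
import io
import sqlite3

INSERT_SQL = "INSERT INTO people (id, name, score) VALUES (?, ?, ?)"


def load_rows(conn, csv_text):
    """Insert valid rows into `people`; return (loaded_count, rejected)."""
    loaded = 0
    rejected = []  # list of (line_number, reason) for later correction and retry
    reader = csv.DictReader(io.StringIO(csv_text))
    # The connection context manager commits on success and rolls back
    # if an unexpected exception escapes the block.
    with conn:
        for row in reader:
            try:
                record = (int(row["id"]), row["name"].strip(), float(row["score"]))
                if not record[1]:
                    raise ValueError("name is empty")
                conn.execute(INSERT_SQL, record)
            except (ValueError, TypeError, AttributeError, sqlite3.IntegrityError) as exc:
                rejected.append((reader.line_num, str(exc)))
            else:
                loaded += 1
    return loaded, rejected
```

Whether to skip bad rows or fail the whole batch is a policy decision; this sketch skips and logs, which suits a staging table better than a production one.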

Tip: Enable transaction control so partial failures don’t corrupt data.

- 5

Validate data types and constraints

Run checks to ensure data types align with the schema, values fall within expected ranges, and constraints like NOT NULL or UNIQUE hold true.
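Checks like these can be expressed as SQL queries that count violations. The following sketch assumes SQLite and a hypothetical `people` table with `id`, `name`, and `score` columns; the 0 to 10 score range is an invented rule for illustration.

```python
import sqlite3

# Each query returns a single count; zero means the check passed.
CHECKS = {
    "missing_name": "SELECT COUNT(*) FROM people WHERE name IS NULL OR name = ''",
    "non_numeric_score": (
        "SELECT COUNT(*) FROM people "
        "WHERE score IS NOT NULL AND typeof(score) NOT IN ('integer', 'real')"
    ),
    "score_out_of_range": (
        "SELECT COUNT(*) FROM people "
        "WHERE typeof(score) IN ('integer', 'real') AND score NOT BETWEEN 0 AND 10"
    ),
    "duplicate_id": (
        "SELECT COUNT(*) FROM (SELECT id FROM people GROUP BY id HAVING COUNT(*) > 1)"
    ),
}


def run_checks(conn):
    """Return {check_name: violation_count} for the `people` table."""
    return {name: conn.execute(sql).fetchone()[0] for name, sql in CHECKS.items()}
```

The `typeof` checks matter in SQLite specifically, because a column declared REAL can still hold text that could not be converted on insert.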

Tip: Compare a random sample of source rows to destination rows to catch mapping errors.

- 6

Finalize and optimize

Create relevant indexes, set permissions, and document the process for future runs. Consider generating a small data quality report you can reuse.

Tip: Automate the process for recurring CSV imports to reduce manual steps.
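As a sketch of the finalize step, the hypothetical helper below creates an index and returns a small reusable quality report (row count plus NULL counts per column). It assumes SQLite, which has no user permissions, so that part of the step is omitted here.

```python
import sqlite3


def finalize(conn, table, index_column):
    """Index `index_column` and return a simple data quality report.

    Table and column names are interpolated into SQL, so they must come
    from trusted configuration.
    """
    conn.execute(
        f'CREATE INDEX IF NOT EXISTS "idx_{table}_{index_column}" '
        f'ON "{table}" ("{index_column}")'
    )
    columns = [row[1] for row in conn.execute(f'PRAGMA table_info("{table}")')]
    report = {"row_count": conn.execute(f'SELECT COUNT(*) FROM "{table}"').fetchone()[0]}
    for col in columns:
        nulls = conn.execute(
            f'SELECT COUNT(*) FROM "{table}" WHERE "{col}" IS NULL'
        ).fetchone()[0]
        report[f"nulls_in_{col}"] = nulls
    return report
```

Because both the index creation and the report are safe to repeat, the same function can run after every recurring import.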

People Also Ask

What does 'csv to table' mean in practice?

It means turning a CSV file into a structured table, such as a SQL table or an HTML table, with a defined schema and data types. The goal is to enable reliable querying and analytics.

CSV to table means turning a CSV into a structured table for querying and presentation.

What is the best way to map CSV headers to table columns?

Map each CSV header to a corresponding column in the target table, keeping names consistent and aligning data types with the content. Use a staging area to validate mappings before finalizing.

Map headers directly to columns, validate types, and stage first to ensure accuracy.
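One way to keep names consistent is to derive column names from headers with a fixed rule and fail loudly on collisions. The sketch below uses a snake_case rule; the rule itself and the names `normalize_header` and `build_mapping` are assumptions for illustration.

```python
import re


def normalize_header(name):
    """Lowercase the header and collapse runs of other characters into underscores."""
    return re.sub(r"[^0-9a-z]+", "_", name.strip().lower()).strip("_")


def build_mapping(headers):
    """Return {csv_header: column_name}, rejecting empty or colliding names."""
    mapping = {}
    for header in headers:
        column = normalize_header(header)
        if not column or column in mapping.values():
            raise ValueError(f"header {header!r} does not map to a unique column name")
        mapping[header] = column
    return mapping
```

For example, `build_mapping(["Order ID", "e-mail"])` yields `order_id` and `e_mail`, while two headers that differ only in case or spacing raise an error rather than silently overwriting each other.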

Can I automate csv to table conversions?

Yes. You can script the workflow using a language like Python, schedule periodic imports, and integrate validation checks to produce repeatable, testable results.

Absolutely. Use scripting to automate import, validation, and deployment.

What if the CSV uses a non-UTF-8 encoding?

Identify the actual encoding and use a parser that supports it, or convert the CSV to UTF-8 first to prevent misread characters and data loss.

If encoding isn’t UTF-8, detect and convert or parse with the correct encoding.
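A simple approach, sketched below, is to try a short list of candidate encodings in order. The function name `to_utf8` and the candidate list are assumptions; note that a successful decode does not prove the encoding is correct, so the result should be spot-checked.

```python
def to_utf8(raw, candidates=("utf-8", "cp1252")):
    """Decode bytes with the first candidate encoding that works.

    Returns (text, encoding_used). Put strict encodings such as UTF-8
    first, because permissive single-byte encodings rarely fail.
    """
    for encoding in candidates:
        try:
            return raw.decode(encoding), encoding
        except UnicodeDecodeError:
            continue
    raise ValueError("none of the candidate encodings could decode the data")
```

Once decoded, writing the text back out with `encoding="utf-8"` gives a file that downstream tools can read without special handling.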

How large a file can I handle without special tooling?

Handle large files by streaming data or loading in chunks, rather than loading the entire file into memory at once.

For large files, process in chunks and stream data to the destination.
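Chunked processing can be sketched with a small generator that pulls a fixed number of rows at a time from any iterable of lines, such as an open file. The name `iter_chunks` is hypothetical.

```python
import csv
from itertools import islice


def iter_chunks(lines, chunk_size):
    """Yield lists of up to `chunk_size` rows (as dicts) from an iterable of lines."""
    reader = csv.DictReader(lines)
    while True:
        # islice pulls at most chunk_size rows, so memory use stays bounded.
        chunk = list(islice(reader, chunk_size))
        if not chunk:
            return
        yield chunk
```

With a real file, this would typically be used as `with open(path, newline="", encoding="utf-8") as f:` followed by a loop over `iter_chunks(f, 10_000)`, where `path` and the chunk size are placeholders to tune for your data.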

How should I deal with missing values in CSV?

Decide a policy for missing values (NULL, default, or inferred values) and apply it during import to preserve data integrity.

Define a clear rule for missing values and apply it during import.
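Such a policy can be written down as a small function applied to every row before insert. In the sketch below, the set of tokens treated as missing and the per-column defaults are assumptions to adapt; columns without a default become `None`, which database drivers such as `sqlite3` store as NULL.

```python
def apply_missing_policy(row, defaults, null_tokens=("", "NULL", "N/A")):
    """Replace missing values with a per-column default, or None if no default is set."""
    cleaned = {}
    for column, value in row.items():
        if value is None or value.strip() in null_tokens:
            cleaned[column] = defaults.get(column)  # None when no default exists
        else:
            cleaned[column] = value
    return cleaned
```

Keeping the rule in one place makes it easy to document and to apply identically on every import run.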

Main Points

- Define a clear column-to-header mapping.

- Choose appropriate data types and constraints early.

- Validate and profile post-import results.

- Automate recurring csv to table workflows.