Is CSV Faster Than XLSX? A Practical Speed Comparison

Explore how CSV and XLSX compare in speed across parsing, I/O, and tooling, with practical benchmarking guidance and best practices for choosing the right format in data pipelines (2026).

Is CSV faster than XLSX? In most data workflows, yes: CSV is faster to read and parse because it is a flat, text-based format with minimal overhead. When you only need raw rows and columns, CSV usually reduces CPU time and parsing overhead. XLSX is rarely faster at raw reads or writes, but it offers formulas, formatting, multiple sheets, and built-in compression, and tools optimized for OpenXML can narrow the gap. The answer depends on context and tooling.

Context and scope: what speed means in CSV vs XLSX

Deciding whether CSV is faster than XLSX requires a clear definition of speed in data pipelines. Speed isn't a single number; it combines read or write throughput, memory usage, and the time spent on preprocessing (such as decoding, unzipping, or validating data). In practice, teams measure parsing time, data-loading latency, and overall pipeline latency from source to downstream steps. According to MyDataTables, CSV's speed advantage hinges on the absence of nested structures, formulas, and metadata; these XLSX features add complexity that often slows initial reads but enables richer downstream analytics. For many data practitioners, speed matters most when it translates into faster iterations, shorter ETL windows, and fewer bottlenecks in streaming or batch jobs. Whether CSV is faster than XLSX therefore depends on how you intend to use the data and which libraries you rely on in 2026.

How speed is measured in practice: parsing, I/O, and memory

Speed in data processing is multidimensional. Parsing time depends on the library and language; reading a CSV is typically a near-linear operation because the parser scans the bytes once, splitting on delimiters and line breaks. I/O time depends on disk or network throughput and on whether the data arrives compressed. Memory usage matters when entire datasets are loaded into RAM. Tooling plays a big role: some languages offer fast streaming CSV readers that minimize peak memory, while others load the whole file before processing. XLSX, in contrast, requires decompressing a ZIP-based OpenXML package and then parsing its XML parts to interpret cells, styles, formulas, and metadata. When evaluating performance, compare total wall-clock time for end-to-end loads, not just per-file read times. This broader view keeps you from overestimating CSV's advantage when subsequent processing relies on XLSX features.
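To make the extra XLSX steps concrete, here is a minimal standard-library sketch that parses the same small table two ways: once as CSV text, and once as a hand-built ZIP archive holding a single SpreadsheetML-style worksheet. The archive is a simplified stand-in, not a complete .xlsx file (real workbooks also carry `[Content_Types].xml`, `xl/workbook.xml`, relationship parts, and usually a shared-strings table), and the helper names are hypothetical. Timings vary by machine; the point is the extra decompress-and-walk-XML work, not the exact numbers.

```python
import csv
import io
import time
import zipfile
import xml.etree.ElementTree as ET

# SpreadsheetML namespace used by worksheet XML inside .xlsx packages.
NS = "{http://schemas.openxmlformats.org/spreadsheetml/2006/main}"


def make_csv(rows):
    """Serialize rows as CSV text."""
    buf = io.StringIO()
    csv.writer(buf).writerows(rows)
    return buf.getvalue()


def make_sheet_zip(rows):
    """Build a simplified, ZIP-compressed worksheet (numeric cells only)."""
    cells = "".join(
        "<row>" + "".join(f"<c><v>{v}</v></c>" for v in row) + "</row>"
        for row in rows
    )
    xml = f'<worksheet xmlns="{NS[1:-1]}"><sheetData>{cells}</sheetData></worksheet>'
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        zf.writestr("xl/worksheets/sheet1.xml", xml)
    return buf.getvalue()


def read_csv(text):
    # One pass over the text: split records, split fields.
    return list(csv.reader(io.StringIO(text)))


def read_sheet_zip(data):
    # Extra steps: open the archive, inflate the member, parse XML, walk cells.
    with zipfile.ZipFile(io.BytesIO(data)) as zf:
        root = ET.fromstring(zf.read("xl/worksheets/sheet1.xml"))
    return [
        [c.findtext(f"{NS}v") for c in row.iter(f"{NS}c")]
        for row in root.iter(f"{NS}row")
    ]


if __name__ == "__main__":
    rows = [[i, i * 2, i * 3] for i in range(50_000)]
    csv_text, sheet_zip = make_csv(rows), make_sheet_zip(rows)
    for name, fn, arg in [("csv", read_csv, csv_text), ("zip+xml", read_sheet_zip, sheet_zip)]:
        start = time.perf_counter()
        fn(arg)
        print(f"{name}: {time.perf_counter() - start:.3f}s")
```

Both readers return the same rows as strings, so any difference in time comes from the format, not the result.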

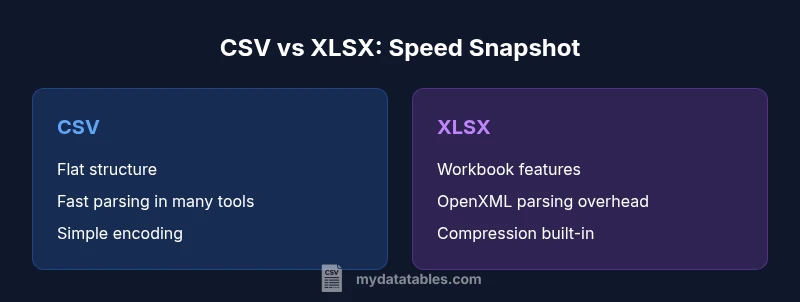

Data format characteristics that influence performance

The performance gap between CSV and XLSX often starts with each format's fundamental structure. CSV is a flat, line-oriented text format with no metadata and no built-in compression (files are often compressed externally, for example with gzip). This simplicity usually yields faster parsing, especially when the data is already tabular, although uncompressed CSV files can be larger on disk than the equivalent XLSX. XLSX, on the other hand, is a ZIP archive of XML files describing the workbook structure, sheets, and cell values; it can store formulas, formatting, and data types, which gives it expressive power but adds overhead for both reading and writing. Encoding handling (UTF-8, UTF-16), quote escaping, and multiline fields can also slow CSV processing if they are not handled carefully. As a result, CSV tends to excel in raw data throughput, while XLSX shines in end-user analysis and workbook-centric workflows. MyDataTables finds that the practical speed difference narrows when reading sources already optimized for a given format.
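The quoting and multiline caveat is easy to demonstrate with Python's built-in `csv` module. This sketch (the sample data is made up for illustration) contrasts a naive line-and-comma split, which is fast but wrong on valid CSV, with `csv.reader`, which tracks quote state and therefore does a little more work per byte.

```python
import csv
import io

# Three records (header included); one field holds a comma, another a newline.
SAMPLE = 'id,comment\r\n1,"fast, simple"\r\n2,"line one\nline two"\r\n'


def naive_rows(text):
    # Splits on every line break and comma, ignoring quotes.
    return [line.split(",") for line in text.splitlines() if line]


def parsed_rows(text):
    # csv.reader keeps quoted commas and line breaks inside the field;
    # newline="" stops the text layer from altering embedded line breaks.
    return list(csv.reader(io.StringIO(text, newline="")))


print(len(naive_rows(SAMPLE)))  # 4 fragments, though the file holds 3 records
print(parsed_rows(SAMPLE))
```

The extra correctness is why robust CSV parsers are not quite as cheap as "split on commas", and why malformed quoting can cost both time and data integrity.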

When XLSX might outperform CSV in speed terms

There are situations where XLSX can be faster in practical terms. If your data already lives in an Excel workbook and your processing stack includes optimized OpenXML parsers, extracting only the necessary worksheets or cells can be efficient. For workflows that rely heavily on formulas, cell-level metadata, or conditional formatting, XLSX avoids the post-processing needed to reconstruct those features from a separate CSV export. In streaming or incremental loads, specialized libraries can reduce memory pressure by reading rows lazily and keeping only the relevant columns, which mitigates XLSX's initial parsing overhead. In short, XLSX may win when workbook features save processing time elsewhere in the pipeline, not in raw read speed.
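Selective, incremental reads are possible because an .xlsx file is a ZIP package in which each worksheet is a separate XML part. The sketch below assumes a package with members named like `xl/worksheets/sheet2.xml` and numeric cells only (real workbooks usually keep text in a shared-strings table, which this ignores); `stream_columns` and `demo_package` are hypothetical names. It opens just one member and streams it with `xml.etree.ElementTree.iterparse`, keeping only chosen column positions and clearing each row after use to keep memory low. Dedicated libraries, such as openpyxl in read-only mode, apply the same idea more completely.

```python
import io
import zipfile
import xml.etree.ElementTree as ET

# SpreadsheetML namespace, in ElementTree's {uri}tag notation.
NS = "{http://schemas.openxmlformats.org/spreadsheetml/2006/main}"


def stream_columns(package, member, keep):
    """Yield values of the selected columns, row by row, from one worksheet part.

    `keep` is a set of zero-based column positions. Assumes every cell is
    written (no sparse rows) and holds a plain <v> value (no shared strings).
    """
    with zipfile.ZipFile(package) as zf, zf.open(member) as part:
        for _event, elem in ET.iterparse(part, events=("end",)):
            if elem.tag == f"{NS}row":
                cells = elem.findall(f"{NS}c")
                yield [cells[i].findtext(f"{NS}v") for i in sorted(keep) if i < len(cells)]
                # Release this row's cells; the emptied <row> stays in the tree.
                elem.clear()


def demo_package():
    """Hypothetical two-sheet package: a larger sheet1 we skip, a small sheet2 we read."""
    def sheet(rows):
        body = "".join(
            "<row>" + "".join(f"<c><v>{v}</v></c>" for v in r) + "</row>" for r in rows
        )
        return f'<worksheet xmlns="{NS[1:-1]}"><sheetData>{body}</sheetData></worksheet>'

    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", zipfile.ZIP_DEFLATED) as zf:
        zf.writestr("xl/worksheets/sheet1.xml", sheet([[0] * 5] * 1000))
        zf.writestr("xl/worksheets/sheet2.xml", sheet([[1, 2, 3], [4, 5, 6]]))
    buf.seek(0)
    return buf


print(list(stream_columns(demo_package(), "xl/worksheets/sheet2.xml", {0, 2})))
# [['1', '3'], ['4', '6']]
```

Only `sheet2.xml` is inflated and parsed; `sheet1.xml` is never touched, which is where the savings come from when a workbook holds many sheets.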

Benchmarking in real-world stacks: a practical approach

A robust benchmark should mirror your actual data and tooling. Start by selecting representative datasets that vary in size and complexity (simple CSVs, CSVs with quoted fields, and Excel workbooks with formulas). Use consistent environments and measurement points: read time, write time, memory usage, and end-to-end latency. Run multiple iterations to account for variability and cache effects. When comparing CSV and XLSX in a real system, measure both cold starts and warmed runs. Whether your pipelines use Python, R, Java, or SQL-based tools, pin the same library versions for both formats. Document the hardware, storage type, and network conditions so results are reproducible. Above all, benchmark realistically: synthetic tests often misrepresent production performance.
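A minimal Python harness along these lines separates the first run from warmed runs and records peak Python-level memory with `tracemalloc`. It is a sketch: `benchmark` and its result keys are hypothetical names, `tracemalloc` only sees allocations made through Python's allocator (not all memory used by native extensions), and a true cold start would also require dropping OS file caches, which this does not do.

```python
import csv
import io
import statistics
import time
import tracemalloc


def benchmark(fn, *args, runs=5):
    """Hypothetical helper: time a first call, then warm calls, then trace memory."""
    # First call includes one-time costs (imports, caches, interpreter warm-up).
    start = time.perf_counter()
    fn(*args)
    first = time.perf_counter() - start

    # Repeated warm calls smooth out run-to-run noise.
    warm = []
    for _ in range(runs):
        start = time.perf_counter()
        fn(*args)
        warm.append(time.perf_counter() - start)

    # Memory is measured in a separate call because tracing slows execution.
    tracemalloc.start()
    fn(*args)
    _, peak = tracemalloc.get_traced_memory()
    tracemalloc.stop()

    return {
        "first_s": first,
        "warm_median_s": statistics.median(warm),
        "warm_min_s": min(warm),
        "peak_bytes": peak,
    }


if __name__ == "__main__":
    text = "a,b\n" + "1,2\n" * 100_000
    print(benchmark(lambda t: list(csv.reader(io.StringIO(t))), text))
```

Reporting the median alongside the minimum makes it easier to spot noisy runs; swap in an XLSX reader as `fn` to compare formats with the same harness.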

Practical guidance for data engineers: choosing the right default

For data engineers, selecting a default format should balance speed with downstream requirements. If raw throughput and streaming capabilities are your primary goal, CSV is usually the safer bet due to its simplicity and broad parser support. When downstream steps demand workbook-level metadata, formulas, or complex formatting, XLSX can reduce subsequent translation steps at the cost of initial speed. A pragmatic approach is to adopt a dual strategy: use CSV for bulk interchange and ingestion, and reserve XLSX for human-facing analytics or when end-user readers require workbook features. MyDataTables emphasizes aligning format choice with the end-to-end data flow rather than focusing on isolated read times. In 2026, the right choice depends on your stack, data size, and performance goals rather than a single statistic.

Best practices for benchmarking in your stack: reproducible tests

To ensure credible comparisons, standardize test data and tooling. Keep environments as similar as possible: same hardware, OS, disk subsystem, and network latency. Use fixed seeds for synthetic data and document its distribution (nulls, outliers, string lengths) to avoid skew. Measure wall-clock time with a high-resolution timer, and use warm-up runs to exclude JVM or interpreter startup effects. Use streaming parsers for CSV when possible to minimize peak memory; for XLSX, prefer row-wise readers that skip unnecessary cells. Finally, publish results with a clear methodology so that others can reproduce the tests and validate the conclusions in their own contexts.
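Two of these practices fit in a few lines of Python: generating synthetic test data from a fixed seed with a documented distribution, and consuming a CSV as a stream rather than loading it whole. The column layout, 5% null rate, and string-length range below are illustrative assumptions, not recommended values, and the function names are hypothetical.

```python
import csv
import io
import random


def synthetic_csv(n_rows, seed=42, null_rate=0.05, name_len=(3, 12)):
    """Write reproducible test rows: the same seed gives byte-identical output."""
    rng = random.Random(seed)  # private generator, unaffected by global random state
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["id", "name", "score"])
    for i in range(n_rows):
        name = "".join(rng.choices("abcdefghij", k=rng.randint(*name_len)))
        score = "" if rng.random() < null_rate else f"{rng.uniform(0, 100):.2f}"
        writer.writerow([i, name, score])
    return buf.getvalue()


def stream_mean(fileobj, column):
    """Average a numeric column one row at a time; empty cells count as nulls."""
    total = 0.0
    count = nulls = 0
    for row in csv.DictReader(fileobj):
        value = row[column]
        if value == "":
            nulls += 1
        else:
            total += float(value)
            count += 1
    return (total / count if count else None), nulls


data = synthetic_csv(10_000)
print(data == synthetic_csv(10_000))  # True: same seed, same bytes
print(stream_mean(io.StringIO(data, newline=""), "score"))
```

For a file on disk, pass `open(path, newline="", encoding="utf-8")` to `stream_mean`; only one row is held in memory at a time.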

Authority sources and credibility

When evaluating speed claims, consulting standards and official documentation helps ground the analysis. Common CSV conventions are documented in RFC 4180, an informational RFC that describes the widely used format (comma delimiters, CRLF line breaks, double-quote escaping) and registers the text/csv MIME type. For the structure of .xlsx files, Microsoft's Open XML documentation and the Office Open XML standard (ECMA-376, also published as ISO/IEC 29500) describe how workbooks are packaged and read by compliant libraries. These sources anchor the discussion in widely adopted specifications and ensure that performance considerations align with real-world tooling. By grounding the comparison in these standards, practitioners can select formats with confidence for their data pipelines in 2026.
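As a small illustration of the RFC 4180 conventions, Python's `csv.writer` with its default `excel` dialect produces CRLF line endings, quotes any field containing a delimiter, quote character, or line break, and escapes embedded double quotes by doubling them. The sample row and the `to_rfc4180` name are made up for illustration.

```python
import csv
import io


def to_rfc4180(rows):
    """Serialize rows using the csv module's default 'excel' dialect."""
    buf = io.StringIO()
    csv.writer(buf).writerows(rows)  # QUOTE_MINIMAL, doublequote=True, "\r\n" terminator
    return buf.getvalue()


print(repr(to_rfc4180([["id", "note"], [1, 'He said "hi", then left']])))
# 'id,note\r\n1,"He said ""hi"", then left"\r\n'
```

Writers that follow these rules produce files any compliant reader can parse without format-specific tweaks, which keeps ingestion on the fast, simple path.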

Comparison

| Feature | CSV | XLSX |
|---|---|---|
| File structure | Flat text, simple rows/columns | ZIP-compressed OpenXML package of XML parts (worksheets, styles, metadata) |
| Read/parse overhead | Typically lower due to minimal structure | Higher due to ZIP decompression and XML parsing of cells and metadata |
| Write/export overhead | Generally faster for large dumps | Often slower because of formatting and packaging |
| Compression | None built in (external compression such as gzip is common) | Built-in ZIP-based compression |
| Tooling requirements | Simple parsers, streaming readers | OpenXML-aware libraries with workbook support |
| Best for | Raw data interchange and ingestion | Analytics-friendly workbooks with formulas and formatting |

Pros

- CSV offers fast, low-overhead reads and broad parser support

- CSV files are easy to stream and transform in pipelines

- CSV avoids a lot of metadata and formatting costs, which speeds processing

Cons

- CSV lacks workbook features like formulas and formatting

- CSV can require extra handling for encodings and quoting edge cases

- CSV files can become large and unwieldy without compression or streaming

CSV is the faster default for raw data transfer and batch processing; XLSX can win in feature-rich, workbook-driven workflows when tooling optimizes for OpenXML parsing.

In practice, CSV tends to outperform XLSX for pure speed in data pipelines. Use XLSX when you need formulas, formatting, or complex workbook structures, but benchmark with your stack to confirm.

People Also Ask

Is CSV faster than XLSX for reading data in Python?

Typically, yes. Reading CSV in Python with pandas or the csv module avoids decompressing and parsing the OpenXML package, including the formatting and metadata it carries. However, performance depends on the library, the dataset size, and whether you stream the data instead of loading it all at once.


When would XLSX be faster overall despite slower parsing?

XLSX can be faster in end-to-end scenarios if you need workbook-level features and selective reads from specific sheets or cells. If the downstream analysis leverages formulas or rich formatting, avoiding extra translation steps can offset the initial parsing overhead.


How should I benchmark speed in my data stack?

Create representative datasets, run multiple iterations, and measure read/write time, memory usage, and end-to-end latency. Use consistent environments and document the tooling versions to ensure reproducibility.


Does compression affect CSV speed?

Compressing CSV can reduce disk I/O and network transfer time, but decompression adds CPU overhead. The net speed depends on the balance between reduced I/O and decompression costs in your stack.
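In Python, a gzip-compressed CSV can be streamed without first decompressing it to disk: `gzip.open` in text mode decompresses incrementally while `csv.reader` consumes rows. This sketch compresses an in-memory sample (the data and function names are illustrative) to compare sizes; whether the smaller transfer outweighs the extra CPU time depends on your storage, network, and data, so time it in your own stack.

```python
import csv
import gzip
import io


def make_sample(n_rows):
    """Repetitive sample data; it compresses well, real data may compress less."""
    return "id,region,amount\n" + "".join(f"{i},north,{i % 100}\n" for i in range(n_rows))


def count_rows_gzip(blob):
    # gzip.open accepts a file object; "rt" decompresses and decodes as it reads.
    with gzip.open(io.BytesIO(blob), "rt", encoding="utf-8", newline="") as f:
        return sum(1 for _ in csv.reader(f))


raw = make_sample(100_000).encode("utf-8")
packed = gzip.compress(raw)
print(len(raw), len(packed), count_rows_gzip(packed))
```

The same pattern works with a path instead of `io.BytesIO`, so compressed files never need a separate decompression step before ingestion.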


Are there encoding or quoting pitfalls with CSV?

Yes. Misconfigured encodings or quoting rules can slow processing or corrupt data. Use consistent UTF-8 encoding, proper delimiter handling, and robust parsers that correctly manage quotes and newlines.
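One common encoding pitfall is the UTF-8 byte order mark (BOM) that some spreadsheet exports prepend to CSV files. Decoding with plain `utf-8` leaves it attached to the first header name, so lookups by column name fail; Python's `utf-8-sig` codec strips it. The sample bytes and the `header` helper are illustrative.

```python
import csv
import io

# UTF-8 bytes of a small CSV that starts with a BOM (U+FEFF).
RAW = "\ufeffid,city\r\n1,Zürich\r\n".encode("utf-8")


def header(raw_bytes, encoding):
    text = io.TextIOWrapper(io.BytesIO(raw_bytes), encoding=encoding, newline="")
    return next(csv.reader(text))


print(header(RAW, "utf-8"))      # ['\ufeffid', 'city']: BOM pollutes the first name
print(header(RAW, "utf-8-sig"))  # ['id', 'city']
```

Since `utf-8-sig` also decodes files without a BOM correctly, it is a reasonable default for reading CSVs of unknown origin.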


Main Points

- Benchmark with your stack to validate speed assumptions

- Use CSV for large data transfers and ingestion pipelines

- Reserve XLSX for workbook-centric workflows with formulas or formatting

- Leverage streaming parsers to maximize CSV throughput

- Consider compression to further reduce I/O and improve effective speed