Why Is to_csv Not Working? A Practical Troubleshooting Guide

Struggling with to_csv not working? This MyDataTables guide explains common causes, encoding quirks, and a diagnostic flow to restore reliable CSV exports across apps and platforms.

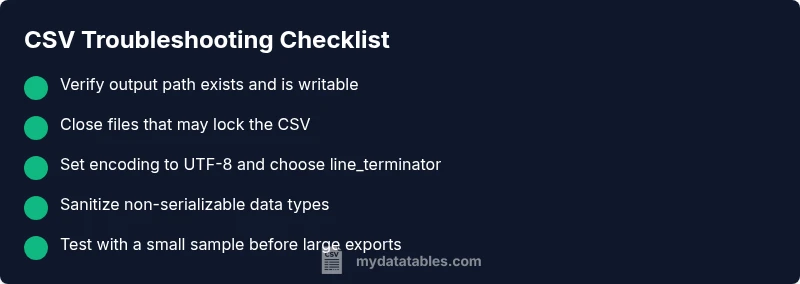

Most to_csv failures come from path, permission, or encoding issues rather than a bug in the function itself. Start by confirming the output path exists with write access, and that the encoding is appropriate (UTF-8 is typical). If errors persist, check for open files or mismatched delimiter settings before digging deeper.

Why is to_csv not working? Root causes and quick checks

When to_csv raises an error, the first thing to check isn’t your Python syntax but where the file is being written and how the data is encoded. In practice, failures usually come from a missing output path, insufficient permissions, or an encoding mismatch rather than a bug in to_csv itself. This MyDataTables guide helps you diagnose quickly with a repeatable checklist. To start, reproduce with a small test dataset, verify the destination directory exists, and confirm you have write access. Then confirm you’re using a compatible encoding (pandas writes UTF-8 unless you pass a different encoding) and that the delimiter and quoting settings match what the consumer expects. By focusing on path, permissions, and encoding first, you’ll usually pinpoint the issue quickly and avoid chasing complex code fixes.

Common scenarios and quick fixes

- Path or directory not found: Ensure the full output path exists. Create missing folders or choose a path that exists.

- Permission denied: Run in a directory you own or adjust permissions; avoid writing to system folders.

- File in use: Close other applications that might hold the file open; restart your script if necessary.

- Encoding mismatches: Explicitly set encoding='utf-8' and handle non-ASCII characters.

- Wrong delimiter or quoting: Confirm the desired format and adjust sep and quoting when exporting.

- Environment differences: Verify you’re running in the expected Python environment and that no virtual environment path issues exist.
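The first scenario in the list above can be handled directly in code. This is a minimal sketch, assuming pandas is installed; the `exports/2024` folder and `report.csv` file name are hypothetical, and a temporary directory stands in for your real base location.

```python
import tempfile
from pathlib import Path

import pandas as pd

df = pd.DataFrame({"id": [1, 2], "name": ["Ana", "Bo"]})

# Hypothetical destination; a temp directory stands in for a real base folder.
base_dir = Path(tempfile.mkdtemp())
out_path = base_dir / "exports" / "2024" / "report.csv"

# to_csv does not create missing folders, so create them first.
out_path.parent.mkdir(parents=True, exist_ok=True)

df.to_csv(out_path, index=False, encoding="utf-8")
print(f"Wrote {out_path} ({out_path.stat().st_size} bytes)")
```

Using `exist_ok=True` keeps the call safe to repeat, so the same script works on the first run and on every later run.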

Encoding, delimiters, and quoting pitfalls

Even when the code looks correct, data can contain characters that break the export or the resulting CSV. A common pitfall is text that the target encoding cannot represent, which raises a UnicodeEncodeError (for example, writing Japanese text with encoding='latin1'). UTF-8 can encode virtually any text, so prefer it when the consumer allows. If you must export in a legacy encoding such as latin1, add errors='replace' so unencodable characters are written as ? instead of crashing the export, then clean up on the consumer side. Delimiters that appear inside field values must be quoted; pandas quotes such fields by default, but the sep, quoting, quotechar, doublequote, and escapechar settings still have to match what the consumer parses. Different platforms may prefer CRLF or LF line endings; harmonize newline handling by passing lineterminator='\n' or '\r\n' as appropriate (the parameter was named line_terminator before pandas 1.5). Finally, consider streaming or chunked writes for very large datasets to reduce memory pressure.
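To make the encoding and quoting behavior concrete, here is a small sketch. It assumes pandas 1.1 or later (for the `errors` argument of `to_csv`); the sample data and file name are made up for illustration.

```python
import csv
import io
import tempfile
from pathlib import Path

import pandas as pd

df = pd.DataFrame({
    "city": ["Zürich", "東京"],
    "note": ["contains; a semicolon", 'has "quotes"\nand a newline'],
})

# latin1 can represent "ü" but not "東京"; errors="replace" writes "?"
# for each unencodable character instead of raising UnicodeEncodeError.
legacy_path = Path(tempfile.mkdtemp()) / "legacy.csv"
df.to_csv(legacy_path, index=False, encoding="latin1", errors="replace")
latin1_text = legacy_path.read_bytes().decode("latin1")

# Quote every field so separators, quotes, and newlines inside values
# cannot be mistaken for structure, then read back with the same settings.
quoted = df.to_csv(index=False, sep=";", quoting=csv.QUOTE_ALL)
round_trip = pd.read_csv(io.StringIO(quoted), sep=";")

print(latin1_text)
print(round_trip["note"].tolist() == df["note"].tolist())
```

Replacement is lossy, so it suits cases where a readable file matters more than preserving every character.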

Permissions, paths, and file locks

A frequent reason to_csv fails silently or with a clear error is that the target path isn’t writable. Check directory permissions and confirm there’s enough disk space. If your file is opened by another application, close it and retry. In some environments, antivirus or backup tools may lock the file temporarily; pause these during export. If you’re exporting in a script invoked by a job scheduler, verify the user context has rights to create files in the destination. When problems persist, redirect errors to a log file to capture the exact failure mode.
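The checks described here can be run before the export itself. The sketch below is one possible approach, not a definitive one: `preflight`, the 1 MiB free-space threshold, the logger name, and the log file location are all hypothetical choices.

```python
import logging
import os
import shutil
import tempfile
from pathlib import Path

import pandas as pd

# Hypothetical log file, so failures from scheduled jobs are not lost.
log_file = Path(tempfile.gettempdir()) / "csv_export.log"
logging.basicConfig(filename=str(log_file), level=logging.INFO)
log = logging.getLogger("csv_export")


def preflight(out_path: Path, min_free_bytes: int = 1024 * 1024) -> list:
    """Return a list of problems with the destination (empty if none)."""
    folder = out_path.parent
    if not folder.is_dir():
        return [f"directory does not exist: {folder}"]
    problems = []
    if not os.access(folder, os.W_OK):
        problems.append(f"no write permission for: {folder}")
    if shutil.disk_usage(folder).free < min_free_bytes:
        problems.append(f"less than {min_free_bytes} bytes free in: {folder}")
    return problems


df = pd.DataFrame({"id": [1, 2], "value": [3.5, 4.5]})
target = Path(tempfile.mkdtemp()) / "report.csv"

issues = preflight(target)
if issues:
    for issue in issues:
        log.error("%s", issue)
else:
    try:
        df.to_csv(target, index=False, encoding="utf-8")
        log.info("wrote %s", target)
    except OSError:
        # PermissionError and FileNotFoundError are both OSError subclasses.
        log.exception("export failed for %s", target)
        raise
```

`os.access` reports what the current user is allowed to do, which is why the check should run under the same account as the scheduled job.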

Cross-platform concerns: newline, CRLF, and Unicode

CSV conventions differ by platform. Windows often uses CRLF endings; Unix-like systems use LF. If the consumer expects a specific newline, set lineterminator accordingly (line_terminator in pandas versions before 1.5). Unicode handling is another cross-platform trap: make sure the file is written in an encoding the consumer actually reads, and that characters the encoding cannot represent are replaced or removed deliberately. If you see garbled characters when opening the file in Excel, re-export with encoding='utf-8-sig' so the file starts with a BOM that signals UTF-8.
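A short sketch of both variants follows. It assumes pandas 1.5 or later, where the newline parameter is spelled `lineterminator` (earlier versions call it `line_terminator`); the file names are illustrative.

```python
import tempfile
from pathlib import Path

import pandas as pd

df = pd.DataFrame({"name": ["Zoë", "Łukasz"], "score": [1, 2]})
out_dir = Path(tempfile.mkdtemp())

# CRLF endings plus a UTF-8 BOM for a Windows/Excel consumer.
excel_path = out_dir / "for_excel.csv"
df.to_csv(excel_path, index=False, encoding="utf-8-sig", lineterminator="\r\n")

# LF endings and plain UTF-8 for Unix-style consumers.
unix_path = out_dir / "for_unix.csv"
df.to_csv(unix_path, index=False, encoding="utf-8", lineterminator="\n")

# Inspect the raw bytes to confirm what was actually written.
excel_bytes = excel_path.read_bytes()
unix_bytes = unix_path.read_bytes()
print(excel_bytes[:3])
print(b"\r\n" in unix_bytes)
```

Checking the raw bytes avoids being misled by an editor that silently normalizes line endings or hides the BOM.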

Case studies: common error messages and how to respond

Error messages like UnicodeEncodeError: 'ascii' codec can't encode character show up when the data contains characters that the chosen (or inherited) encoding cannot represent. PermissionError, especially on Windows, often points to a protected folder or a file that another program has open. FileNotFoundError, or an OSError about a non-existent directory, means the destination folder doesn’t exist. Errors about invalid data types are rarer, because to_csv generally writes the string form of arbitrary objects; the more common symptom is unexpected cell contents. Mapping these messages to concrete fixes speeds resolution: set the encoding, create missing directories, close file handles, and sanitize data types.
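The mapping from exception to fix can be written down as a thin wrapper. This is an illustrative sketch only: `export_with_hints` and its hint strings are hypothetical, not part of pandas.

```python
import tempfile
from pathlib import Path

import pandas as pd


def export_with_hints(df: pd.DataFrame, path, **kwargs) -> str:
    """Run to_csv and return 'ok' or a short hint about the likely fix."""
    try:
        df.to_csv(path, **kwargs)
    except UnicodeEncodeError as exc:
        return f"encoding: {exc.reason}; try encoding='utf-8' or errors='replace'"
    except PermissionError:
        return "permission: close programs using the file or pick a writable folder"
    except OSError as exc:
        # Covers FileNotFoundError and pandas' non-existent directory error.
        return f"path: {exc}; create the missing directory or fix the path"
    return "ok"


df = pd.DataFrame({"name": ["José"]})
out_dir = Path(tempfile.mkdtemp())

ok_result = export_with_hints(df, out_dir / "ok.csv", index=False)
ascii_result = export_with_hints(df, out_dir / "ascii.csv", index=False, encoding="ascii")
missing_result = export_with_hints(df, out_dir / "missing" / "x.csv", index=False)

for result in (ok_result, ascii_result, missing_result):
    print(result)
```

`PermissionError` is caught before `OSError` because it is a subclass of it; reversing the order would hide the more specific hint.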

How to reproduce and isolate the problem

1) Run a minimal export with a tiny subset of data to reproduce the issue.

2) Print or log the exact output path and permissions.

3) Try a simple export to a known-good location.

4) Switch encoding to UTF-8 and test.

5) If the error persists, inspect the data types in the frame and convert non-serializable types to strings.

6) Incrementally reintroduce columns to identify the offending field.

This methodical approach isolates the root cause without rewriting your code.
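The column-by-column part of this procedure can be automated. The sketch below exports each column on its own and records which ones raise; `find_failing_columns` is a hypothetical helper, and the strict `ascii` encoding is used only to provoke a failure in the sample data.

```python
import tempfile
from pathlib import Path

import pandas as pd


def find_failing_columns(df: pd.DataFrame, **to_csv_kwargs) -> dict:
    """Export each column separately and collect the ones that raise."""
    failures = {}
    with tempfile.TemporaryDirectory() as tmp:
        probe = Path(tmp) / "probe.csv"
        for col in df.columns:
            try:
                df[[col]].to_csv(probe, index=False, **to_csv_kwargs)
            except Exception as exc:  # broad on purpose: this only reports
                failures[col] = f"{type(exc).__name__}: {exc}"
    return failures


df = pd.DataFrame({
    "id": [1, 2],
    "city": ["Oslo", "Kraków"],
    "amount": [9.5, 12.0],
})

# A deliberately strict encoding makes the non-ASCII column fail.
report = find_failing_columns(df, encoding="ascii")
print(report)
```

Pass the same keyword arguments you use in the real export, so the probe fails for the same reason the full export does.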

Prevention and best practices

- Keep output paths simple and use absolute paths during development.

- Always explicitly set encoding and newline termination.

- Close files promptly and avoid writing to read-only or system directories.

- Validate data types before export and clean strings that contain delimiters.

- Use a test harness to simulate large exports and monitor memory usage.

- When in doubt, consult error messages and MyDataTables resources to verify best practices for CSV exports.

Steps

Estimated time: 20-40 minutes

1) Create a tiny export to reproduce

Start with a small DataFrame and a simple path. Run to_csv to confirm the basic process and gather any error messages. This isolates complexity from the data itself.

Tip: If this fails, you’ve identified a systemic path/permission/encoding issue before tackling data quality.

2) Validate the output path and permissions

Check that the directory exists and is writable. Use an absolute path and avoid system folders. Try writing a test file with Python to verify permissions.
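One way to test write access from Python is to create a throwaway file in the destination folder. This is a sketch under assumptions: `can_write_to` is a hypothetical helper, and the system temp directory stands in for your real output folder.

```python
import tempfile
from pathlib import Path


def can_write_to(folder) -> bool:
    """Return True if a throwaway file can be created inside `folder`."""
    try:
        # The probe file is deleted automatically when the block exits.
        with tempfile.NamedTemporaryFile(dir=folder, prefix=".write_probe_"):
            pass
    except OSError:
        return False
    return True


# Stand-in destination; resolve() shows the absolute path actually used.
out_dir = Path(tempfile.gettempdir()).resolve()

# repr() makes stray spaces or odd characters in the path visible.
print(repr(str(out_dir)), can_write_to(out_dir))
```

Actually creating a file is a stronger check than reading permission bits, because it also catches read-only mounts and missing folders.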

Tip: Print the path to the console to confirm there are no typos or stray spaces.

3) Ensure the file isn’t locked

Close any applications that might hold the file open and ensure no background process is using it. Re-run after a short restart or in a clean shell.

Tip: On Windows, check Task Manager for apps locking the CSV; on macOS/Linux, use lsof to detect locks.

4) Set encoding and delimiters explicitly

Specify encoding='utf-8' (or utf-8-sig if needed) and confirm the delimiter matches your consumer. If the consumer requires a narrower encoding such as latin1, add errors='replace' so characters it cannot represent don’t abort the export.

Tip: Avoid relying on defaults when exporting for cross-system data sharing.

5) Sanitize non-serializable data

Inspect the DataFrame for complex objects (e.g., custom classes) and convert them to strings or remove them before exporting.
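As a rough sketch of this step, the code below finds object columns that hold something other than plain scalars and converts only those to strings. The `Point` class, the column names, and the `complex_object_columns` helper are invented for illustration.

```python
import pandas as pd


class Point:
    """Stand-in for a custom class stored in a DataFrame cell."""

    def __init__(self, x, y):
        self.x, self.y = x, y

    def __str__(self):
        return f"({self.x}, {self.y})"


def complex_object_columns(df: pd.DataFrame) -> list:
    """Names of object columns containing values that are not plain scalars."""
    simple = (str, int, float, bool, type(None))
    return [
        col
        for col in df.select_dtypes(include="object").columns
        if not df[col].map(lambda v: isinstance(v, simple)).all()
    ]


df = pd.DataFrame({
    "id": [1, 2],
    "location": [Point(0, 1), Point(2, 3)],
    "tags": [["a", "b"], ["c"]],
})

cols = complex_object_columns(df)
clean = df.copy()
if cols:
    # Convert only the problematic columns; numeric columns keep their dtype.
    clean[cols] = clean[cols].astype(str)

csv_text = clean.to_csv(index=False)
print(cols)
print(csv_text)
```

Converting explicitly means you decide what the string form looks like, rather than discovering it later in the exported file.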

Tip: Use df[col].astype(str) on problematic columns, then export and validate sample rows.

6) Scale up and verify incrementally

Once the small export works, reintroduce columns one by one and test. If the issue reappears, identify the offending column or data type.

Tip: Document which column caused the failure to guide future exports.

Diagnosis: User runs a to_csv export and encounters an error message or silent failure while writing a CSV file.

Possible Causes

- High: Output path does not exist or is misspelled

- High: Insufficient write permissions or the file is in use

- Medium: Encoding mismatch or non-UTF-8 data causing UnicodeEncodeError

- Low: Very large file or memory constraints causing write failure

- Low: Invalid data types or non-serializable objects in the DataFrame

Fixes

- Easy: Verify the output path exists and you have write permission; create directories if needed

- Easy: Close other programs using the file, retry the export, and run in a terminal to view real-time errors

- Medium: Explicitly set encoding (e.g., encoding='utf-8') and handle problematic characters

- Hard: Use a lineterminator appropriate for the target system and consider chunked writes for large files

- Medium: Sanitize data types or convert non-serializable columns to strings before export

People Also Ask

What is to_csv?

to_csv is a pandas DataFrame method that exports tabular data to a CSV file. It writes each row as a line with fields separated by the chosen delimiter.

to_csv is a pandas method that writes a DataFrame to a CSV file.

Export permission issue

Permission errors usually point to writing in a protected directory or a file lock. Check directory permissions, ensure you’re using an accessible path, and close any program that might hold the file.

Check directory permissions and ensure the file isn’t locked by another program.

Encoding errors during export

Encoding errors occur when the data contains characters that the target encoding cannot represent. Set encoding to UTF-8 and consider replacing or escaping problematic characters before export.

Use UTF-8 encoding and handle non-ASCII characters during export.

Open file blocks to_csv

If Excel or another program has the file open, the export can fail. Close the file in other applications and retry the export.

Make sure no program is using the target CSV when exporting.

OS newline differences

Different OSes expect different newlines. Specify lineterminator (line_terminator before pandas 1.5) to match the consumer and avoid surprise line breaks.

Match the newline to the target system when exporting.

Large export tips

For large DataFrames, consider chunked writes or gradually exporting to avoid memory pressure and slowdowns.

Use chunked writes for very large CSV files.
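One way to do chunked writes is to append each piece to the same file and emit the header only once. The sketch below assumes the data arrives as an iterable of DataFrames; `export_chunks` and `make_chunks` are hypothetical helpers, and the generated data is a placeholder.

```python
import tempfile
from pathlib import Path

import pandas as pd


def export_chunks(chunks, path, **kwargs) -> int:
    """Write an iterable of DataFrames to one CSV; return rows written."""
    total = 0
    for i, chunk in enumerate(chunks):
        chunk.to_csv(
            path,
            mode="w" if i == 0 else "a",  # overwrite first, then append
            header=(i == 0),              # header only on the first chunk
            index=False,
            **kwargs,
        )
        total += len(chunk)
    return total


def make_chunks(n_chunks: int, rows: int):
    """Yield small frames one at a time so only one is in memory."""
    for i in range(n_chunks):
        start = i * rows
        ids = range(start, start + rows)
        yield pd.DataFrame({"id": list(ids), "value": [x * 0.5 for x in ids]})


out_path = Path(tempfile.mkdtemp()) / "big.csv"
written = export_chunks(make_chunks(5, 1000), out_path, encoding="utf-8")
print(written)
```

When the whole frame already fits in memory, `to_csv` also accepts a `chunksize` argument that controls how many rows are written at a time; the explicit loop is mainly useful when the data is produced piece by piece.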

Main Points

- Verify path and permissions first

- Explicitly set encoding and newline

- Close competing processes before export

- Sanitize data types prior to exporting

- Test with small samples before full-scale exports