Load a CSV File in Python: A Practical Guide

Learn how to load CSV data in Python using the csv module or pandas. This guide covers headers, delimiters, encoding, large files, and validation, with practical examples and step-by-step instructions.

Goal: load a CSV file in Python reliably using either the built-in csv module or pandas. You’ll learn how to handle headers, delimiters, and encodings, choose the right loader, and validate results. This quick guide also points to best practices for large files and memory-efficient loading, with practical code examples. Whether you’re just starting out or processing millions of rows, this approach scales.

Why loading CSVs in Python matters

CSV is a near-universal format for data exchange, logging, and simple tabular storage. Python offers a range of tools to read, transform, and analyze CSV data, from the lightweight csv module to the pandas library. For data analysts, developers, and business users, loading CSV data efficiently is foundational to downstream tasks like cleaning, validation, and aggregation. This section draws on MyDataTables insights on practical CSV handling. A reliable CSV load sets the stage for reproducible analyses and reports and for scalable data pipelines. Clear conventions (a header row, an explicit encoding, and a consistent delimiter) reduce debugging time and improve cross-team collaboration.

Choosing between csv module and pandas

Python offers two primary paths for loading CSV data: the standard library’s csv module and the pandas read_csv function. The csv module provides minimal, low-overhead reading suitable for simple data or streaming use cases. It gives you fine-grained control over parsing but requires more boilerplate to convert rows into dictionaries or objects. Pandas, on the other hand, reads CSVs into a DataFrame, which is ideal for analytics, filtering, grouping, and quick visualization. Pandas handles missing values more gracefully and offers a wide range of parsing options (dtype inference, converters, date parsing). For small to mid-size datasets, pandas is often the fastest route to insight; for streaming or memory-constrained tasks, the csv module shines as a lightweight alternative.

According to MyDataTables, the best practice is to start with pandas for data exploration but fall back to the csv module when you need precise control over a streaming workflow or when dependencies must remain minimal.

Loading a CSV with the built-in csv module

The csv module is part of Python’s standard library and works well for simple loads or streaming. A typical pattern uses DictReader to map each row to a dictionary, making downstream processing intuitive. Here’s a minimal example:

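import csv
from pathlib import Path

path = Path('data.csv')

# newline='' lets the csv module handle line endings inside quoted fields
with path.open(mode='r', encoding='utf-8', newline='') as f:
    reader = csv.DictReader(f)
    data = [row for row in reader]  # one dict per row, keyed by header name

print(data[:3])

Notes: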

- DictReader uses the first row as field names by default. If your file lacks a header, set fieldnames explicitly.

- Always specify encoding to avoid decoding errors on different platforms.
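For a file without a header row, the fieldnames argument mentioned above can be sketched as follows. The three column names and the in-memory sample are assumptions made for illustration; with a real file you would pass the opened file object instead.

```python
import csv
import io

# Hypothetical headerless data; in practice this would be an opened file.
raw = io.StringIO("1,Ada,91\n2,Grace,88\n")

# fieldnames supplies the keys, so the first row is treated as data.
reader = csv.DictReader(raw, fieldnames=["id", "name", "score"])
rows = list(reader)

print(rows[0])
```

Every value is still a string at this point; converting to numbers or dates is a separate step.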

Loading CSVs with pandas: read_csv essentials

Pandas read_csv is a versatile, high-performance loader that brings CSV data into a DataFrame for immediate analytics. It handles headers, missing values, and type inference automatically, with options to customize parsing. A basic load looks like:

import pandas as pd

df = pd.read_csv('data.csv', encoding='utf-8')
print(df.head())

Common parameters to know:

- header: defaults to 'infer', which treats the first row as the header (the same as header=0) when you don't pass names; use None if there is no header row.

- sep/delimiter: default is a comma, but you can specify ';' or '\t' for tab-delimited files.

- dtype: force specific types to speed up parsing and ensure consistency.

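- encoding: 'utf-8' is the default and covers text in any language; specify another value such as 'latin-1' or 'cp1252' only when the file was saved in a legacy encoding.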

- na_values: customize how missing values are represented.
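The parameters above can be combined in a single call. The sketch below assumes pandas is installed and uses a made-up semicolon-delimited sample in which the token "unknown" marks a missing value; the column names are illustrative only.

```python
import io

import pandas as pd

# Hypothetical sample: semicolon-delimited, "unknown" marks a missing score.
raw = io.StringIO("id;city;score\n1;Oslo;7.5\n2;Lima;unknown\n")

df = pd.read_csv(
    raw,
    sep=";",                                  # non-default delimiter
    header=0,                                 # first row holds the column names
    dtype={"id": "int64", "city": "string"},  # fix types instead of inferring
    na_values=["unknown"],                    # extra token to treat as missing
)

print(df.dtypes)
print(df)
```

The score column is left to type inference here, so it should come back as a float column with one missing value.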

Handling headers, delimiters, and encodings

CSV files vary in their structure. Some use semicolons instead of commas; others lack a header row or use a non-UTF-8 encoding. When loading, explicitly set the delimiter (sep or delimiter in pandas, delimiter in the csv module) and the encoding to ensure consistent parsing. If there is no header, provide column names via header=None and names=[...]. A byte order mark (BOM) at the start of the file is common in Windows-produced exports; encoding='utf-8-sig' strips it, both in pandas and when opening a file for the csv module.
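To make the BOM and delimiter points concrete, here is a small sketch using only the standard library. The file name and contents are invented, and a temporary directory stands in for wherever your export lives.

```python
import csv
import tempfile
from pathlib import Path

with tempfile.TemporaryDirectory() as tmp:
    # Hypothetical export: semicolon-delimited, starting with a UTF-8 BOM.
    path = Path(tmp) / "export.csv"
    path.write_bytes("\ufeffname;city\nZoë;Köln\n".encode("utf-8"))

    # utf-8-sig strips the BOM before the csv module sees the header.
    with path.open(encoding="utf-8-sig", newline="") as f:
        rows = list(csv.DictReader(f, delimiter=";"))

    # Plain utf-8 leaves the BOM glued to the first column name.
    with path.open(encoding="utf-8", newline="") as f:
        raw_header = next(csv.reader(f, delimiter=";"))

print(rows)
print(raw_header)
```

The second read shows the typical symptom: the first key comes back as '\ufeffname', so lookups by 'name' fail even though the file looks fine in an editor.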

Validation is essential: verify column names, check for unexpected blanks, and test a few rows to ensure types align with downstream expectations.
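One way to sketch these checks with the csv module is shown below. The expected column list, the helper name find_problems, and the sample data are all assumptions; adapt them to your own schema.

```python
import csv
import io

EXPECTED_COLUMNS = ["id", "score", "date"]  # hypothetical schema

def find_problems(reader):
    """Return a list of human-readable issues found while reading the rows."""
    problems = []
    if reader.fieldnames != EXPECTED_COLUMNS:
        problems.append(f"unexpected columns: {reader.fieldnames}")
    for row in reader:
        # Flag fields that are present but empty or whitespace-only.
        blanks = [k for k, v in row.items() if isinstance(v, str) and not v.strip()]
        if blanks:
            problems.append(f"line {reader.line_num}: blank {blanks}")
        # Check that a non-empty score can be read as a number.
        score = (row.get("score") or "").strip()
        if score:
            try:
                float(score)
            except ValueError:
                problems.append(f"line {reader.line_num}: score {score!r} is not numeric")
    return problems

sample = io.StringIO("id,score,date\n1,82,2024-01-05\n2,,2024-01-06\n3,abc,2024-01-07\n")
problems = find_problems(csv.DictReader(sample))
print(problems)
```

Collecting problems in a list, rather than raising on the first one, makes it easier to report everything wrong with a file in one pass.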

Working with large CSV files efficiently

Loading very large CSVs can strain memory. Techniques to manage this include reading in chunks (pandas) or streaming with csv.DictReader and processing rows incrementally. With pandas, you can specify chunksize to iterate over portions of the file rather than loading it all at once:

import pandas as pd

for chunk in pd.read_csv('large_data.csv', chunksize=100000):
    process(chunk)  # replace with your logic

For the csv module, iterate over the file object directly or use a generator to yield rows one-by-one. When possible, filter columns during load to reduce memory usage. Avoid converting every field to Python objects if you only need a subset of data for a specific analysis.
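The generator approach for the csv module might look like the sketch below. The helper name iter_rows and the sample columns are hypothetical, and an in-memory buffer stands in for a large file opened with newline=''.

```python
import csv
import io

def iter_rows(f, columns):
    """Yield one dict per row, keeping only the requested columns."""
    for row in csv.DictReader(f):
        yield {name: row[name] for name in columns}

# In-memory stand-in for a large file.
source = io.StringIO("id,score,comment\n1,10,ok\n2,30,late\n3,20,ok\n")

total = 0
count = 0
for row in iter_rows(source, columns=["id", "score"]):
    total += int(row["score"])  # convert only the field that is needed
    count += 1

print(total / count)
```

Because nothing is collected into a list, memory use stays roughly constant regardless of how many rows the file has.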

Practical examples: common tasks

Working with CSV data often involves turning raw rows into structured records, filtering, and transforming types. Here is practical Python code showing several common tasks:

# Using csv.DictReader to load into dicts
import csv
from pathlib import Path

with Path('survey.csv').open('r', encoding='utf-8', newline='') as f:
    reader = csv.DictReader(f)
    records = [dict(row) for row in reader]

# Using pandas for quick analytics
import pandas as pd

df = pd.read_csv('survey.csv', usecols=['id', 'score', 'date'], parse_dates=['date'])
mean_score = df['score'].mean()
print(df.head(), mean_score)

These patterns show how to evolve from a simple load to more structured analysis.
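With the csv module every field arrives as a string, so type conversion and filtering are up to you. A minimal sketch follows, reusing the id, score, and date column names from the survey example and assuming the dates are in ISO format (YYYY-MM-DD); the helper name parse_record is made up.

```python
import csv
import io
from datetime import date

def parse_record(row):
    """Convert the string fields of one row into typed values."""
    return {
        "id": int(row["id"]),
        "score": float(row["score"]),
        "date": date.fromisoformat(row["date"]),
    }

# In-memory stand-in for survey.csv.
raw = io.StringIO("id,score,date\n1,82.5,2024-01-05\n2,67,2024-01-06\n")
records = [parse_record(row) for row in csv.DictReader(raw)]

# Filter on the typed value rather than the original string.
passed = [r for r in records if r["score"] > 70]
print(passed)
```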

Debugging common errors when loading CSV

CSV loading errors are common and often straightforward to diagnose:

- FileNotFoundError: Confirm the file path and current working directory. Use Path('data.csv').resolve() to verify.

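- UnicodeDecodeError: The file is not in the encoding you specified. UTF-8 is the usual starting point; try 'utf-8-sig' for files with a byte order mark, or 'cp1252' or 'latin-1' for legacy exports. Note that 'latin-1' never raises, so check the decoded text for garbled characters.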

- ParserError (pandas) or csv.Error: Check delimiter consistency and quote handling. Mismatched quotes can break parsing.

- MemoryError: For very large files, switch to chunked processing or streaming.

Tip: Always print a small slice of loaded data first (df.head() or data[:5]) to catch structure issues early.
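For the UnicodeDecodeError case, one possible approach is to try a short list of candidate encodings in order. This is a sketch, not a detection algorithm: the helper name, the candidate list, and the sample file are assumptions, and it reads the whole file into memory, so it suits small files or a diagnostic step.

```python
import tempfile
from pathlib import Path

def read_text_with_fallback(path, encodings=("utf-8-sig", "cp1252", "latin-1")):
    """Return (text, encoding) using the first encoding that decodes cleanly."""
    data = Path(path).read_bytes()
    for enc in encodings:
        try:
            return data.decode(enc), enc
        except UnicodeDecodeError:
            continue
    raise ValueError(f"none of {encodings} could decode {path}")

with tempfile.TemporaryDirectory() as tmp:
    # Hypothetical legacy export saved as Latin-1, which is not valid UTF-8.
    sample = Path(tmp) / "legacy.csv"
    sample.write_bytes("name\nJosé\n".encode("latin-1"))
    text, used = read_text_with_fallback(sample)

print(used)
print(text)
```

A clean decode does not prove the encoding is right, so it is still worth eyeballing a few non-ASCII values before trusting the result.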

Best practices for clean CSV loading

Adopt a few consistent practices to make CSV loading robust:

- Always declare encoding and delimiter explicitly.

- Use a header row or provide column names; validate names against your schema.

- Prefer pandas for analytics but fall back to csv when dependencies are restricted or you need streaming.

- Validate shapes after load (rows x columns) and inspect data types to catch misparsed fields.

- Keep data loading isolated in functions or modules to simplify testing and reuse.

- Use pathlib.Path for portable file paths across platforms.

These habits reduce surprises in production data pipelines and improve reproducibility, a key strength highlighted by MyDataTables analyses.
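Several of these practices can be folded into one small loader function. The sketch below uses only the standard library; the function name load_csv, its parameters, and the sample file are illustrative assumptions rather than a fixed recipe.

```python
import csv
import tempfile
from pathlib import Path

def load_csv(path, *, delimiter=",", encoding="utf-8", required=()):
    """Load a CSV into a list of dicts and check that required columns exist."""
    path = Path(path)
    with path.open(encoding=encoding, newline="") as f:
        reader = csv.DictReader(f, delimiter=delimiter)
        missing = set(required) - set(reader.fieldnames or [])
        if missing:
            raise ValueError(f"{path.name} is missing columns: {sorted(missing)}")
        return list(reader)

with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "data.csv"
    sample.write_text("id,score\n1,5\n2,9\n", encoding="utf-8")

    rows = load_csv(sample, required=["id", "score"])
    print(len(rows), "rows x", len(rows[0]), "columns")

    # A schema mismatch surfaces at load time, not deep in the analysis.
    try:
        load_csv(sample, required=["email"])
    except ValueError as exc:
        error = str(exc)
        print(error)
```

Keeping the encoding and delimiter as explicit keyword arguments makes the loader easy to call from tests with small fixture files.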

Next steps: writing results and validating data

After loading and analyzing, you often want to write results back to CSV, JSON, or a database. With pandas, exporting is straightforward:

# Export filtered data to CSV
df_filtered = df[df['score'] > 70]
df_filtered.to_csv('filtered_results.csv', index=False, encoding='utf-8')

For the csv module, you can write a simple producer that constructs dictionaries and uses DictWriter. Additionally, implement basic data quality checks, such as ensuring required fields are non-empty, validating date ranges, and confirming numeric columns fall within expected bounds. Automate these checks in a small test script to catch regressions.
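A DictWriter version of the same export could look like this. The records and the 0 to 100 score range are made up, and an in-memory buffer stands in for a file opened with open(path, 'w', newline='', encoding='utf-8').

```python
import csv
import io

# Hypothetical records produced by an earlier processing step.
records = [
    {"id": 1, "score": 82.5},
    {"id": 2, "score": 67.0},
    {"id": 3, "score": 91.0},
]

# Basic quality check: scores must fall inside an assumed 0-100 range.
out_of_range = [r for r in records if not 0 <= r["score"] <= 100]
if out_of_range:
    raise ValueError(f"scores out of range: {out_of_range}")

out = io.StringIO(newline="")  # stand-in for a file opened with newline=''
writer = csv.DictWriter(out, fieldnames=["id", "score"])
writer.writeheader()
writer.writerows(r for r in records if r["score"] > 70)

print(out.getvalue())
```

Running the check before writing means a bad batch never reaches the output file.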

Summary of loading CSVs in Python

- Start with pandas when you need quick analytics; switch to the csv module for lightweight or streaming tasks.

- Always declare encoding and delimiter to avoid subtle bugs.

- For large files, use chunksize or iterative parsing to save memory.

- Validate the loaded data against your schema and perform basic type checks.

- Document your loading logic so teammates can reproduce results.

By following these patterns, you’ll load CSV data into Python reliably, paving the way for clean analyses and robust data pipelines.

Tools & Materials

- Python 3.x installed (3.8+ recommended for typing and newer stdlib features)

- Text editor or IDE (examples: VS Code, PyCharm, Sublime Text)

- CSV file to load (provide a sample with a header row for demonstration)

- Pandas library (install with pip install pandas for read_csv workflows)

- Optional: additional tooling (numpy, tqdm, or dask for enhanced processing or progress indicators)

- Internet access (needed to install optional packages)

Steps

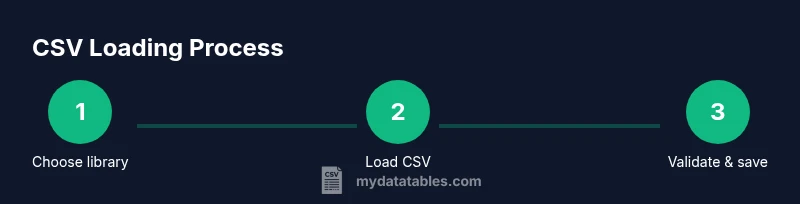

Estimated time: 30-60 minutes

1. Prepare your Python environment

Verify Python is installed and accessible from your terminal or IDE. Create a dedicated project folder and initialize a virtual environment to keep dependencies isolated.

Tip: Use python -m venv venv and activate it before installing packages.

2. Identify the CSV file and its path

Locate the CSV you will load and note the exact path. If your file is in the project, use a relative path for portability.

Tip: Prefer pathlib.Path in code for cross-platform path handling.

3. Decide which loader to use

Choose between the built-in csv module for lightweight loading and pandas for analytics-ready DataFrames.

Tip: For quick data checks, pandas offers df.head() and df.info() out of the box.

4. Load CSV with the csv module (example)

Open the file with utf-8 encoding, use DictReader to map rows to dictionaries, and collect into a list for processing.

Tip: Handle header presence or absence explicitly to avoid misaligned keys.

5. Load CSV with pandas (example)

Use pandas.read_csv with typical options (header, sep, encoding) to obtain a DataFrame ready for analysis.

Tip: Use dtype and parse_dates to enforce types early.

6. Access and transform loaded data

Iterate over records (csv) or operate on DataFrames (pandas) to clean, filter, or derive new metrics.

Tip: Avoid per-row Python loops for large data when using pandas; vectorized operations are faster.

7. Handle missing values and types

Decide on default values or conversions for missing data and ensure numeric columns are correctly typed.

Tip: Use read_csv dtype, na_values, and fillna to standardize missing data.

8. Validate loaded data against schema

Check column names, row counts, and data types to catch mis-parsed inputs early.

Tip: Write small assertions or a unit test to guard against regressions.

9. Persist results or continue analysis

Save transformed data to CSV or JSON and proceed with downstream tasks like visualization or reporting.

Tip: Keep a versioned output path to track changes over time.
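The steps on missing values and schema validation can be sketched together in pandas. This assumes pandas is installed; the sample data, the "missing" token, and the choice of 0 as the default score are illustrative assumptions, not recommendations.

```python
import io

import pandas as pd

# Hypothetical sample in which "missing" marks an absent score.
raw = io.StringIO(
    "id,score,date\n"
    "1,82,2024-01-05\n"
    "2,missing,2024-01-06\n"
    "3,67,2024-01-07\n"
)

df = pd.read_csv(raw, na_values=["missing"], parse_dates=["date"])

# Missing values: pick an explicit default (0 is an arbitrary choice here).
df["score"] = df["score"].fillna(0).astype("int64")

# Schema validation: small assertions that fail loudly if parsing drifts.
assert list(df.columns) == ["id", "score", "date"]
assert df.shape == (3, 3)
assert str(df["date"].dtype).startswith("datetime64")

print(df.dtypes)
```

Whether a default such as 0 is appropriate depends on the analysis; dropping the rows or keeping them as missing are equally valid choices.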

People Also Ask

What is the difference between csv.reader and pandas.read_csv?

csv.reader provides low-level row iteration without a built-in data structure. pandas.read_csv loads data into a DataFrame with powerful indexing, filtering, and type inference, making analytics and visualization easier.

csv.reader is a simple low-level tool; pandas.read_csv gives you a ready-to-analyze table with many options.

How do I handle different delimiters in CSV loading?

Specify the delimiter with the sep parameter in pandas or the delimiter argument in csv.reader. Semicolon-delimited files are common in some locales; tab-delimited files use '\t'.

Use sep or delimiter to set how fields are separated in your CSV.
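If you don't know the delimiter in advance, the standard library's csv.Sniffer can guess it from a sample of the text. This is an optional extra beyond the explicit sep or delimiter setting described above, shown here on made-up data; the guess can be wrong on small or irregular samples, so treat it as a hint.

```python
import csv
import io

sample_text = "id;name;score\n1;Ada;91\n2;Grace;88\n"

# Restrict the candidates so the guess stays among plausible delimiters.
dialect = csv.Sniffer().sniff(sample_text, delimiters=",;\t")

rows = list(csv.reader(io.StringIO(sample_text), dialect))
print(repr(dialect.delimiter), rows[0])
```

Once the delimiter is known, passing it explicitly in later loads keeps the parsing reproducible.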

Can I load a CSV with a header row and specific data types?

Yes. In pandas, use header=0 and dtype= to enforce types. In the csv module, read the header with DictReader and cast values manually.

Yes—you can specify headers and enforce data types during load.

How can I load very large CSV files without exhausting memory?

Use pandas with chunksize to process the file in chunks, or stream rows with the csv module and process in a loop.

Process the file in chunks or stream rows to avoid loading everything at once.

Do I need to install pandas to load CSVs in Python?

No. The built-in csv module can load CSVs without extra installations. Pandas is optional and recommended for analytics-ready data.

Not necessarily—csv is built-in; pandas is optional but helpful for analysis.

How do I handle non-UTF-8 encodings?

Try encoding='utf-8' first; if errors persist, test with 'latin-1' or 'utf-8-sig'. In pandas, encoding works similarly in read_csv.

If UTF-8 fails, try a different encoding like latin-1 or utf-8-sig.


Main Points

- Choose csv module for lightweight or streaming loads

- Prefer pandas for analytics-ready dataframes

- Always specify encoding and delimiter explicitly

- Validate data after load with simple checks

- Use chunking for large files to control memory usage