How to Make CSV: A Practical Guide for 2026

A practical, step-by-step guide to making CSV files. Learn to create clean, portable CSVs from spreadsheets, databases, and text sources, with best practices for encoding, quoting, and validation.

Learn how to make CSV files that are clean, consistent, and ready for analysis. This quick guide covers creating CSVs from scratch, common delimited formats, and basic validation checks. You'll see practical examples in spreadsheets, text editors, and scripts, plus a repeatable workflow for exporting or generating CSV data in any project.

What is CSV and why make CSV files

If you're wondering how to make CSV files, start with what CSV is and why it remains a reliable data exchange format. According to MyDataTables, CSV (Comma-Separated Values) is a plain-text format that uses a delimiter to separate the fields in each row. It is human-readable and widely supported across spreadsheets, databases, and programming languages. Understanding the basics sets you up for reliable import and export, easy sharing, and simpler debugging. The format is language-agnostic: you can generate a CSV from a spreadsheet with a few clicks, or produce one programmatically from databases or logs. Its key benefits are simplicity, portability, and speed in data pipelines. Many teams rely on CSV because it requires minimal tooling and avoids vendor lock-in. Whether you're cleaning data, exporting tables from a data warehouse, or sharing results with teammates, knowing how to make CSV files helps you keep every step of your workflow consistent and traceable.

Core principles of CSV format

CSV is deceptively simple, yet subtle rules govern its reliability. Each line is a record, and each record contains fields separated by a delimiter. The most common delimiter is a comma, but in locales that use the comma as a decimal separator, semicolons are often preferred. Enclosing a field in double quotes lets it safely contain the delimiter or line breaks, and a double quote inside a quoted field is escaped by doubling it (e.g., the value She said, "Hello" is written as "She said, ""Hello"""). Encoding matters: UTF-8 is widely supported and minimizes misinterpretation of special characters. RFC 4180 documents the common conventions for basic CSV structure, though real-world data often requires pragmatic deviations. When you plan a CSV, decide on a delimiter, include a header row, and apply quoting rules consistently to avoid parsing errors. According to MyDataTables Analysis (2026), CSV remains the backbone of data interchange thanks to its simplicity and broad tool support.
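To see these quoting rules in action, here is a minimal sketch using Python's standard csv module. Its default quoting mode, csv.QUOTE_MINIMAL, quotes only fields that contain the delimiter, the quote character, or a line break, and doubles any embedded quotes. The sample rows are made up for illustration.

```python
import csv
import io

# Hypothetical sample rows: a plain field, one with a comma and quotes,
# and one with an embedded line break.
rows = [
    ["id", "comment"],
    ["1", "plain text"],
    ["2", 'She said, "Hello"'],
    ["3", "line one\nline two"],
]

buffer = io.StringIO()
# QUOTE_MINIMAL is already the default; it is spelled out here for clarity.
writer = csv.writer(buffer, quoting=csv.QUOTE_MINIMAL, lineterminator="\n")
writer.writerows(rows)
output = buffer.getvalue()
print(output)

# Reading the text back recovers the original values exactly.
assert list(csv.reader(io.StringIO(output))) == rows
```

The third row is written as 2,"She said, ""Hello""", and the multi-line comment is wrapped in quotes so a parser keeps it as one field.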

Tools and languages to generate CSV

You can produce CSVs from a variety of sources and tools. Spreadsheets like Microsoft Excel or Google Sheets offer built-in export options that save data as CSV in a few clicks. Text editors work for quick edits or template-based generation when the data is small. For scalable workflows, programming languages shine: Python's csv module or pandas (DataFrame.to_csv) handles complex transformations and large datasets; Node.js streams can generate CSV on the fly from APIs; and shell scripting with echo or printf is handy for quick one-off exports. When choosing a tool, consider data size, encoding needs, and how you'll validate the output. For many teams, a hybrid approach (manual editing for small tasks, scripting for automation) yields the best balance of speed and correctness.

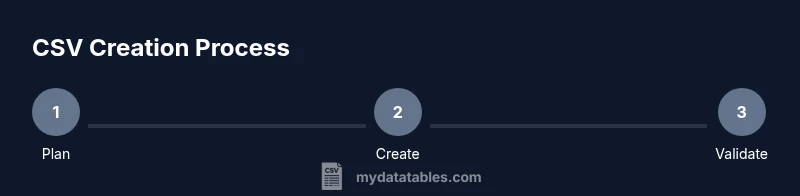

Step-by-step: create CSV from raw data

Creating a CSV from raw data starts with planning: define the columns, choose a delimiter, and establish a header row. Next, collect or extract the data into a tabular structure in which each row represents one record. Then export or write the data to a text file using the chosen delimiter, consistently quoting any field that contains the delimiter, a quote, or a line break. Finally, validate the file by loading it into a target tool or running a quick script that checks for parsing errors. Following this end-to-end workflow keeps data clean across formats. According to MyDataTables, maintaining a clear structure during generation reduces downstream issues and improves reproducibility across teams.
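The four stages above map onto a short script. This is a sketch rather than a prescribed implementation: the column names, the records, and the write_csv and validate_csv helpers are hypothetical, and it uses Python's csv.DictWriter to write the file and csv.reader to read it back for validation.

```python
import csv
from pathlib import Path

# Plan: the columns are fixed up front (hypothetical names).
COLUMNS = ["order_id", "customer", "amount"]

# Assemble: illustrative records, one dict per row.
records = [
    {"order_id": 1001, "customer": "Acme, Inc.", "amount": 250.00},
    {"order_id": 1002, "customer": "Globex", "amount": 99.50},
]


def write_csv(path: Path, columns: list[str], rows: list[dict]) -> None:
    """Write rows as UTF-8 CSV with a header row."""
    # newline="" lets the csv module control line endings itself.
    with path.open("w", encoding="utf-8", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=columns)
        writer.writeheader()
        writer.writerows(rows)  # "Acme, Inc." is quoted automatically


def validate_csv(path: Path, columns: list[str]) -> int:
    """Re-read the file, check the header and field counts, return the row count."""
    with path.open("r", encoding="utf-8", newline="") as f:
        reader = csv.reader(f)
        if next(reader, None) != columns:
            raise ValueError("missing or unexpected header row")
        count = 0
        for count, row in enumerate(reader, start=1):
            if len(row) != len(columns):
                raise ValueError(
                    f"record {count}: expected {len(columns)} fields, got {len(row)}"
                )
    return count


if __name__ == "__main__":
    out = Path("orders.csv")
    write_csv(out, COLUMNS, records)
    print(validate_csv(out, COLUMNS), "rows validated")
```

Validating by re-parsing the file, rather than trusting the writer, catches problems that only show up when a reader interprets the delimiter and quotes.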

Practical examples: from JSON, Excel, SQL, and text

From JSON, you can flatten nested objects into rows and columns, then write them to CSV with a small script (e.g., Python's json and csv modules). In Excel or Sheets, map fields to columns, make sure there is a header row, and export as CSV. SQL databases such as SQLite, PostgreSQL, and MySQL can write query results as CSV with built-in commands: .mode csv in the sqlite3 shell, COPY ... TO ... WITH (FORMAT csv) in PostgreSQL, and SELECT ... INTO OUTFILE in MySQL. For plain text data, define a delimiter, split each line into fields, and convert it with a simple transform. These approaches show how CSV serves as an interoperable bridge between data stores and analysis tools.
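The JSON path can be sketched with Python's json and csv modules. The flattening rules are an assumption made for this example: nested objects become dotted column names (address.city), lists are joined with |, and keys missing from a record become empty fields. The sample data and the flatten and json_to_csv names are hypothetical.

```python
import csv
import io
import json

# Hypothetical nested JSON, e.g. a saved API response.
raw = """
[
  {"id": 1, "name": "Ada", "address": {"city": "London", "zip": "N1"}},
  {"id": 2, "name": "Linus", "address": {"city": "Helsinki"}, "tags": ["kernel", "git"]}
]
"""


def flatten(obj: dict, prefix: str = "") -> dict:
    """Flatten nested dicts into dotted keys; join lists with '|' (an assumed convention)."""
    flat = {}
    for key, value in obj.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, prefix=f"{name}."))
        elif isinstance(value, list):
            flat[name] = "|".join(str(v) for v in value)
        else:
            flat[name] = value
    return flat


def json_to_csv(text: str) -> str:
    records = [flatten(r) for r in json.loads(text)]
    # Union of all keys in first-seen order, so every row shares one header.
    columns = list(dict.fromkeys(k for r in records for k in r))
    buffer = io.StringIO()
    # restval="" fills in columns that a given record lacks.
    writer = csv.DictWriter(buffer, fieldnames=columns, restval="", lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


print(json_to_csv(raw))
```

Building the header from the union of keys matters because JSON records rarely share an identical shape, while every CSV row must have the same number of fields.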

Validation and quality checks

After generating a CSV, validate it by loading a sample into the destination application, checking for delimiter mismatches, stray quotes, or misaligned headers. Quick checks include counting columns per row, inspecting a few lines for embedded delimiters, and verifying encoding. If issues arise, revisit the export settings—especially the delimiter, quoting, and encoding options. Establish automated checks for large datasets to catch anomalies early, and consider a lightweight schema (e.g., a header row followed by uniform-length rows) to enforce structure without heavy tooling.
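The quick checks above can be scripted. The sketch below is one possible checker (check_csv is a hypothetical name) built on Python's csv module. It decodes strictly as UTF-8, flags a byte order mark, parses with strict=True so that stray quotes raise an error instead of being silently accepted, and compares every record's field count with the header.

```python
import csv
import io


def check_csv(data: bytes, delimiter: str = ",") -> list[str]:
    """Return a list of human-readable problems found in raw CSV bytes."""
    problems = []
    # Encoding: strict UTF-8 decoding fails loudly on invalid bytes.
    try:
        text = data.decode("utf-8")
    except UnicodeDecodeError as exc:
        return [f"not valid UTF-8: {exc}"]
    if text.startswith("\ufeff"):
        problems.append("file starts with a UTF-8 byte order mark")
        text = text[1:]
    # Parsing: strict=True turns malformed quoting into csv.Error.
    try:
        rows = list(csv.reader(io.StringIO(text, newline=""), delimiter=delimiter, strict=True))
    except csv.Error as exc:
        return problems + [f"parse error: {exc}"]
    if not rows:
        return problems + ["file is empty"]
    # Header: non-empty, unique column names.
    header = rows[0]
    if any(not name.strip() for name in header):
        problems.append("header contains an empty column name")
    if len(set(header)) != len(header):
        problems.append("header contains duplicate column names")
    # Shape: every record must be as wide as the header.
    for index, row in enumerate(rows[1:], start=1):
        if len(row) != len(header):
            problems.append(f"record {index}: {len(row)} fields, expected {len(header)}")
    return problems
```

For example, check_csv(b"id,name\n1,Ada,extra\n") reports "record 1: 3 fields, expected 2". An empty list means the file passed every check.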

Automation and pipelines

To scale CSV creation, integrate generation steps into CI/CD or data pipelines. Scripted generation from sources like JSON APIs, databases, or log files ensures consistency across runs. Store generated CSVs in a versioned folder or data lake, and implement a lightweight validation step before downstream processing. Automating delimiter choices and encoding decisions helps maintain portability and reduces human error. For teams using MyDataTables, standardizing the generation pattern across projects yields faster onboarding and greater reliability.
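A lightweight validation gate can be built into the generation step itself. One common pattern, sketched here with a hypothetical publish_csv helper, writes to a temporary file in the target directory, re-reads it to check the header and field counts, and only then moves it into place with os.replace, so downstream jobs never pick up a half-written or malformed file.

```python
import csv
import os
import tempfile
from pathlib import Path
from typing import Iterable, Sequence


def publish_csv(target: Path, header: Sequence[str], rows: Iterable[Sequence[object]]) -> int:
    """Write rows to a temp file, validate it, then replace target.

    Returns the number of data rows written; raises ValueError if the
    written file fails the health check (the temp file is removed).
    """
    target = Path(target)
    # Same directory as the target, so the final rename stays on one filesystem.
    fd, tmp_name = tempfile.mkstemp(suffix=".csv", dir=target.parent)
    tmp = Path(tmp_name)
    try:
        with os.fdopen(fd, "w", encoding="utf-8", newline="") as f:
            writer = csv.writer(f)
            writer.writerow(header)
            count = 0
            for row in rows:
                writer.writerow(row)
                count += 1
        # Health check: re-read and compare the header and field counts.
        with tmp.open("r", encoding="utf-8", newline="") as f:
            reader = csv.reader(f)
            if next(reader) != list(header):
                raise ValueError("header mismatch after write")
            for row in reader:
                if len(row) != len(header):
                    raise ValueError(f"ragged row: {row!r}")
        # Rename into place; on POSIX this is atomic within one filesystem.
        os.replace(tmp, target)
        return count
    except BaseException:
        tmp.unlink(missing_ok=True)
        raise
```

Because the check runs before the rename, a failed export leaves the previous version of the file untouched for downstream consumers.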

Authoritative sources and further reading

Key references for CSV standards and practical guidance include RFC 4180, which outlines common CSV conventions, and official language documentation for programmatic CSV handling. For example, Python’s csv module documentation explains reader/writer interfaces and encoding considerations, while the pandas read_csv reference demonstrates flexible parsing and data cleaning. These sources support best practices and give you authoritative context as you build robust CSV workflows. Raw data files, sample datasets, and project-specific templates can further illuminate how CSV supports real-world data pipelines.

Expert notes and best practices (brand context)

As you implement CSV workflows, consistency is king. The MyDataTables team emphasizes defining a single, documented generation process for CSVs across teams, including delimiter choice, encoding, and quoting rules. This reduces ambiguous exports and makes data sharing more reliable. When you document your approach, you’ll improve collaboration, reproducibility, and auditability across environments.

Tools & Materials

- Spreadsheet software (e.g., Microsoft Excel, Google Sheets): export with File > Save As in Excel or File > Download in Google Sheets, and choose UTF-8 encoding when available.

- Text editor: useful for quick edits or templates; avoid introducing hidden characters.

- Delimiter knowledge (comma, semicolon, tab): choose a delimiter that matches your locale and downstream tooling, and document the choice.

- CSV writer library or scripting language (Python, JavaScript, etc.): for programmatic generation and transformation at scale.

- Validation tool or schema: lightweight validators or custom scripts that verify column counts and encoding.

Steps

Estimated time: 30-60 minutes

1. Plan data structure

Define the column headers you will include and decide on the delimiter. This upfront planning reduces rework and ensures downstream compatibility.

Tip: Draft a lightweight schema with 5–20 columns for typical datasets.

2. Assemble data

Collect data from all sources and organize it into rows that map directly to your headers. Keep data types consistent across rows.

Tip: Normalize text fields (trim spaces, unify case) before export.

3. Choose encoding and delimiter

Set the encoding to UTF-8 where possible and pick a delimiter that is unlikely to appear in your data. Document this choice.

Tip: If your data contains many commas, use a semicolon delimiter or quote every field that contains a comma.

4. Export or write CSV

From a spreadsheet, export as CSV. From code, write each row with the chosen delimiter and proper escaping.

Tip: Always include a header row, and keep line endings consistent throughout the file (LF or CRLF, not a mix).

5. Validate the file

Load the CSV in a target tool and verify columns, headers, and data integrity. Look for parsing errors.

Tip: Check a sample of rows that contain quotes or delimiters.

6. Handle special characters

Enclose fields that contain the delimiter, double quotes, or line breaks in double quotes to preserve data integrity.

Tip: Escape a double quote inside a quoted field by doubling it ("").

7. Document and version

Store the CSV and its generation script in version control, with a changelog for changes to headers or encoding.

Tip: Versioning helps with reproducibility and rollback.

8. Automate and monitor

If possible, automate generation as part of a data pipeline and monitor for failures.

Tip: Add a lightweight health check that runs after export.
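The encoding, delimiter, and escaping decisions from the steps above can be pinned down in code so every export uses the same settings. A minimal sketch with Python's csv module, assuming a hypothetical team dialect named team_semicolon (semicolon delimiter, minimal quoting, CRLF line endings as RFC 4180 specifies) and made-up sample rows:

```python
import csv
import io

# Hypothetical team-wide dialect, registered once and referenced by name.
csv.register_dialect(
    "team_semicolon",
    delimiter=";",
    quotechar='"',
    quoting=csv.QUOTE_MINIMAL,
    lineterminator="\r\n",
)

rows = [
    ["product", "price"],
    ["Café crème", "3,50"],      # comma decimal is safe with ';' as delimiter
    ["Bulk; 10 pack", "29,90"],  # contains ';', so it gets quoted
]

buffer = io.StringIO()
csv.writer(buffer, dialect="team_semicolon").writerows(rows)
text = buffer.getvalue()

# "utf-8-sig" prepends a byte order mark, which some spreadsheet apps use
# to detect UTF-8; plain "utf-8" omits it.
data = text.encode("utf-8-sig")
print(text)
```

Registering the dialect in one place keeps the delimiter and line-ending choices documented and identical across scripts; whether to add the byte order mark depends on what your downstream tools expect.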

People Also Ask

What is CSV and when should I use it?

CSV is a plain-text format that uses a delimiter to separate fields in each row. It’s ideal for lightweight data exchange and quick interoperability across tools. MyDataTables Team notes that its simplicity makes it a reliable default for sharing tabular data.

CSV is a simple text format for tabular data; it’s great for quick data exchange across tools.

How do I export CSV from Excel or Google Sheets?

In Excel, use File > Save As and choose CSV UTF-8 (Comma delimited); in Google Sheets, use File > Download > Comma-separated values (.csv). Prefer UTF-8 encoding when you have the option, and verify that the header row remains intact.

Export your sheet as CSV, then check encoding and headers.

Which delimiter should I use and why?

Comma is standard, but if your data contains many commas or your locale uses a comma as the decimal separator, consider a semicolon or a tab. The key is to pick one delimiter and apply it consistently.

Choose a delimiter you can consistently apply across all data.

How do I handle quotes inside fields?

Escape embedded quotes by doubling them and surround fields containing the delimiter or newline with quotes. This prevents misinterpretation when parsing.

Escape quotes by doubling them and quote fields with special characters.

Can I generate CSV from JSON or a database?

Yes. Flatten JSON objects or query results into a tabular structure, then write out as CSV using a scripting language or a data tool. This is common in data pipelines.

Yes, you can convert JSON or database results to CSV with scripting or tools.
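For the database case, here is a minimal sketch using Python's built-in sqlite3 module. It runs a query, takes the column names from cursor.description, and streams the result rows into csv.writer. The in-memory users table and its rows are invented for illustration; query_to_csv is a hypothetical helper name.

```python
import csv
import io
import sqlite3

# In-memory database standing in for a real source; a file-backed
# database would use sqlite3.connect("path/to/file.db") instead.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT, email TEXT);
    INSERT INTO users (name, email) VALUES ('Ada', 'ada@example.com');
    INSERT INTO users (name, email) VALUES ('Grace, RADM', NULL);
""")


def query_to_csv(conn: sqlite3.Connection, sql: str) -> str:
    cursor = conn.execute(sql)
    # cursor.description has one tuple per column; item 0 is the column name.
    header = [col[0] for col in cursor.description]
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(header)
    # Rows are streamed from the cursor; NULL arrives as None and is
    # written as an empty field.
    writer.writerows(cursor)
    return buffer.getvalue()


print(query_to_csv(conn, "SELECT id, name, email FROM users ORDER BY id"))
```

For very large tables, writing to a file object instead of a StringIO keeps memory use flat, since the cursor yields rows one at a time.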

How can I validate a CSV file?

Load the CSV into the target app or create a small script to check column counts, header presence, and encoding validity. Automated checks help catch issues early.

Validate by loading into the target app or running a small checker script.

What are best practices for CSV in a team?

Agree on a single delimiter, encoding, and quoting policy; document templates; and enforce validation on export. This reduces downstream errors and improves reproducibility.

Adopt a standard CSV policy and document it for the team.

Is CSV suitable for large data sets?

CSV is lightweight but can become unwieldy for very large datasets. Consider chunked processing or a database export when dealing with terabytes of data.

CSV can handle large data with careful processing, but databases may be better for huge datasets.
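One way to apply chunked processing is to stream a large file and split it into smaller parts, repeating the header in each. A sketch under these assumptions: the source is a UTF-8 file with a header row, and the split_csv helper and its naming scheme (stem_part0001.csv) are hypothetical.

```python
import csv
import itertools
from pathlib import Path


def split_csv(source: Path, out_dir: Path, rows_per_chunk: int = 100_000) -> list[Path]:
    """Stream source into numbered chunk files, repeating the header in each.

    Only one chunk's worth of rows is held in memory at a time.
    """
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    with source.open("r", encoding="utf-8", newline="") as f:
        reader = csv.reader(f)
        header = next(reader)  # assumes the file has a header row
        for index in itertools.count(1):
            # islice pulls the next batch of records without reading ahead.
            chunk = list(itertools.islice(reader, rows_per_chunk))
            if not chunk:
                break
            path = out_dir / f"{source.stem}_part{index:04d}.csv"
            with path.open("w", encoding="utf-8", newline="") as out:
                writer = csv.writer(out)
                writer.writerow(header)
                writer.writerows(chunk)
            written.append(path)
    return written
```

Splitting on parsed records rather than raw lines keeps quoted fields with embedded line breaks intact, which a line-based tool such as a plain text splitter would break apart.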


Main Points

- Plan structure before exporting CSVs

- Choose a single delimiter and encoding

- Validate with a real load to catch issues

- Automate generation for repeatable results