How to Convert a File to CSV: A Practical Guide for 2026

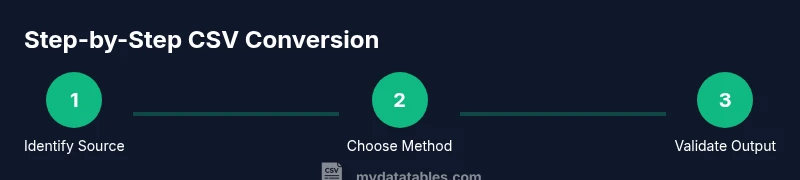

Learn practical, step-by-step methods to convert any common file format into CSV, with tips on encoding, delimiters, validation, and automation. This guide covers Excel, JSON, XML, TXT, and more, with best practices for reliable CSV output.

What is CSV and why convert to CSV?

CSV, or Comma-Separated Values, is a simple, widely readable text format for tabular data. It stores rows as lines and cells as values separated by a delimiter (commonly a comma, but semicolons or tabs are also used). Converting a file to CSV is a foundational task in data workflows because CSVs are lightweight, human-readable, and widely supported by databases, spreadsheets, and analytics tools. Converting to CSV standardizes disparate data sources into a single, portable interchange format that dashboards, BI tools, and data pipelines can ingest. In practice, this simplifies downstream processing and reduces compatibility issues across systems. According to MyDataTables Analysis, CSV remains a central interchange format in 2026, underscoring its enduring value for analysts, developers, and business users.

Source formats and conversion approaches

Files come in many formats. The most common scenarios include converting from Excel workbooks (.xlsx/.xls), JSON, XML, and plain text (.txt) files. For Excel and Google Sheets, the simplest path is usually a direct export or “Save As” CSV, which writes the active sheet row by row, so the first row becomes the header line and each subsequent row becomes a data record. For JSON, you typically flatten nested structures to a tabular shape before exporting, ensuring every record aligns with consistent columns. XML often requires an XSLT or a data transformation step to map elements to a flat table. For TXT files, determine the delimiter (comma, tab, semicolon), then normalize runs of spaces and rows with inconsistent field counts into a uniform schema. No matter the source, the goal is a clean table: header row, consistent column order, and correctly quoted fields where needed.
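As a rough illustration of the JSON flattening step, here is a minimal sketch using only Python's standard library. The `flatten` and `json_to_csv` helpers, the dotted column names, and the sample records are assumptions made for this example, not a prescribed method; lists inside records are written as-is rather than expanded.

```python
import csv
import io
import json


def flatten(record, parent_key="", sep="."):
    """Flatten nested dicts into a single-level dict with dotted keys."""
    flat = {}
    for key, value in record.items():
        full_key = f"{parent_key}{sep}{key}" if parent_key else key
        if isinstance(value, dict):
            flat.update(flatten(value, full_key, sep))
        else:
            flat[full_key] = value
    return flat


def json_to_csv(json_text):
    """Convert a JSON array of objects into CSV text with one shared header."""
    rows = [flatten(rec) for rec in json.loads(json_text)]
    # Collect columns in first-seen order so every row aligns to the same header.
    columns = []
    for row in rows:
        for key in row:
            if key not in columns:
                columns.append(key)
    buffer = io.StringIO()
    # restval fills in an empty string where a record lacks a column.
    writer = csv.DictWriter(buffer, fieldnames=columns, restval="", lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical sample data: the second record has no "city".
sample = '[{"id": 1, "user": {"name": "Ada", "city": "London"}}, {"id": 2, "user": {"name": "Lin"}}]'
csv_text = json_to_csv(sample)
print(csv_text)
```

The missing `city` in the second record becomes an empty cell, which keeps the column count uniform across rows.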

Data quality, headers, encoding, and mapping

A CSV’s reliability hinges on a consistent schema. Start with a header row that clearly names each column. Keep a single data type within each column, and harmonize date formats. Encoding matters too: UTF-8 is the default for modern data pipelines; beware of UTF-16 or locale-specific encodings that introduce misread characters. If your source contains commas, quotes, or newlines inside fields, the CSV export must quote those fields properly, typically by wrapping them in double quotes and doubling any internal quotes. Mapping is crucial: define which source fields map to which CSV columns, and keep the order stable across exports to simplify validation and future imports.
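To make the quoting rule concrete, the following sketch writes fields containing a comma, double quotes, and a newline with Python's built-in `csv` module, then reads them back. The sample rows are invented for illustration.

```python
import csv
import io

rows = [
    ["id", "comment"],
    ["001", 'He said "hi", then left'],  # contains quotes and a comma
    ["002", "line one\nline two"],        # contains a newline
]

buffer = io.StringIO()
# QUOTE_MINIMAL quotes only the fields that need it.
writer = csv.writer(buffer, quoting=csv.QUOTE_MINIMAL, lineterminator="\n")
writer.writerows(rows)
quoted_text = buffer.getvalue()
print(quoted_text)

# Reading the text back should return the original values unchanged.
round_trip = list(csv.reader(io.StringIO(quoted_text)))
```

In the output, the first comment is written as `"He said ""hi"", then left"`: wrapped in double quotes, with each internal quote doubled.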

Delimiters, encoding, and edge cases

While commas are standard, some locales use semicolons because the comma already serves as the decimal separator in numbers. If you expect comma characters inside fields, use quotes around the field or select an alternate delimiter. Newlines inside fields must be enclosed in quotes as well. When saving or exporting, confirm the delimiter, line ending (CRLF vs LF), and encoding are set consistently. Beware of a BOM (byte order mark) at the start of UTF-8 files; some import tools misinterpret it, for example by treating it as part of the first column name. Large CSV files may require chunked processing or streaming; always validate a subset before loading the full dataset.
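The sketch below shows one way to handle two of these edge cases together, assuming a semicolon-delimited export that starts with a UTF-8 BOM and uses CRLF line endings. The sample bytes are made up for the example.

```python
import csv
import io

# Bytes as a spreadsheet tool might export them: BOM, semicolons, CRLF.
raw = "\ufeffname;price\r\nCafé;3,50\r\n".encode("utf-8")

# "utf-8-sig" strips a leading BOM if present and otherwise acts like UTF-8.
text = raw.decode("utf-8-sig")
rows = list(csv.reader(io.StringIO(text, newline=""), delimiter=";"))

# Re-emit as comma-delimited with LF endings; "3,50" gets quoted
# because it now contains the delimiter.
out = io.StringIO()
csv.writer(out, lineterminator="\n").writerows(rows)
normalized = out.getvalue()
print(normalized)
```

Note that the decimal comma in `3,50` is preserved as text; converting it to `3.50` would be a separate, deliberate transformation.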

Tools, automation, and scripting options

There are multiple pathways to convert files to CSV depending on your environment. GUI-based options include Excel, Google Sheets, or LibreOffice for quick one-off conversions. For repeatable workflows, consider scripting: Python with pandas read_* functions and to_csv, or command-line utilities like csvkit for quick transforms. JavaScript/Node.js scripts can parse JSON/XML and emit CSV, while R and SQL-based approaches also exist for data pipelines. The MyDataTables team recommends selecting a method that fits your data size, scheduling needs, and team skill set; automation reduces manual errors and saves time in recurring conversions.
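For a repeatable conversion, a small script is often enough. The sketch below streams a tab-delimited source into comma-delimited CSV one row at a time, using only the standard library. The `convert_delimited` function and the sample data are hypothetical; real use would pass files opened with `open(path, newline="", encoding="utf-8")` instead of the in-memory buffers shown here.

```python
import csv
import io


def convert_delimited(src, dst, src_delimiter="\t"):
    """Stream rows from src to dst as comma-delimited CSV; return rows written."""
    reader = csv.reader(src, delimiter=src_delimiter)
    writer = csv.writer(dst, lineterminator="\n")
    count = 0
    for row in reader:  # one row at a time, so memory use stays flat
        writer.writerow(row)
        count += 1
    return count


# In-memory stand-ins for real input and output files.
source = io.StringIO("sku\tdescription\n0042\tWidget, large\n")
target = io.StringIO()
rows_written = convert_delimited(source, target)
print(rows_written, target.getvalue())
```

Because values are handled as strings throughout, the leading zeros in `0042` survive the conversion.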

Validation, testing, and common pitfalls

Always validate your CSV after conversion. Check row counts, header names, and sample values to ensure nothing was truncated or mis-encoded. Use a CSV validator tool to detect common issues like unescaped quotes or mixed data types. If you encounter errors, review the export settings (delimiter, encoding, newline characters) and re-run the transformation with test files. Common pitfalls include losing leading zeros in numeric identifiers, dates being read as text, and multi-line fields breaking row alignment. Establish a quick import test to confirm the CSV integrates with downstream systems as expected.
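A few of these checks are easy to automate. The following sketch verifies the header, the data row count, and the field count per record; the `validate_csv` helper and the sample inputs are assumptions for illustration, and a dedicated validator will cover more cases.

```python
import csv
import io


def validate_csv(text, expected_header, expected_rows):
    """Return a list of problems found; an empty list means the checks passed."""
    problems = []
    rows = list(csv.reader(io.StringIO(text, newline="")))
    if not rows:
        return ["file is empty"]
    header, data = rows[0], rows[1:]
    if header != expected_header:
        problems.append(f"header mismatch: {header}")
    if len(data) != expected_rows:
        problems.append(f"expected {expected_rows} data rows, found {len(data)}")
    # Record numbers start at 2 because record 1 is the header.
    for record_no, row in enumerate(data, start=2):
        if len(row) != len(header):
            problems.append(
                f"record {record_no}: {len(row)} fields, expected {len(header)}"
            )
    return problems


good = "zip,city\n02134,Boston\n10001,New York\n"
bad = "zip,city\n02134,Boston,extra\n"

good_problems = validate_csv(good, ["zip", "city"], 2)
bad_problems = validate_csv(bad, ["zip", "city"], 2)
print(good_problems)
print(bad_problems)
```

Running a check like this on a sample before a full import catches row-alignment problems early.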

Practical end-to-end examples

Example 1: Excel to CSV. Open your Excel workbook, go to File > Save As, choose CSV (Comma delimited) (*.csv), and confirm any prompts about features not supported in CSV.

Example 2: JSON to CSV with Python. Load the JSON, flatten nested objects into a tabular structure, and export using DataFrame.to_csv('output.csv', index=False, encoding='utf-8').

Example 3: XML to CSV via a lightweight XSLT or Python script that maps XML tags to column names.

These workflows illustrate how you move from source formats to a clean, import-ready CSV.
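As one possible take on the XML case, here is a minimal Python sketch that maps child tags to column names with the standard library's `xml.etree.ElementTree`. The `<orders>` document, its tag names, and the column list are invented for this example.

```python
import csv
import io
import xml.etree.ElementTree as ET

# Hypothetical source document; the second order has no <total>.
xml_text = """<orders>
  <order><id>1</id><customer>Ada</customer><total>19.99</total></order>
  <order><id>2</id><customer>Lin</customer></order>
</orders>"""

# An explicit tag-to-column mapping keeps the column order stable.
columns = ["id", "customer", "total"]

root = ET.fromstring(xml_text)
buffer = io.StringIO()
writer = csv.writer(buffer, lineterminator="\n")
writer.writerow(columns)
for order in root.iter("order"):
    # findtext returns the default when a child element is missing.
    writer.writerow([order.findtext(col, default="") for col in columns])
xml_csv = buffer.getvalue()
print(xml_csv)
```

Deeply nested or attribute-heavy XML usually needs a more deliberate mapping, which is where XSLT or a fuller transformation step comes in.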

Authority sources

For further reading on CSV standards and best practices, refer to:

- RFC 4180: Common Format and MIME Type for Comma-Separated Values (CSV) Files (IETF)

- CSV on the Web (W3C)

- MyDataTables Analysis, 2026 (context on CSV adoption and practical usage)