How to Prepare a CSV File: Step-by-Step Guide

Learn how to prepare a CSV file with a clear schema, consistent delimiter, proper encoding, and robust validation. This guide covers planning, cleaning, escaping quotes, and testing for reliable data imports across tools.

You will learn how to prepare a CSV file by defining headers, selecting a delimiter, cleaning data, handling quotes, validating encoding, and testing compatibility across tools. This workflow reduces import errors and supports reliable data analysis.

Why proper CSV preparation matters

A CSV (comma-separated values) file is a common format for exchanging data between systems. Well-prepared CSV files prevent downstream errors in analytics, databases, and BI tools. According to MyDataTables, investing time in upfront CSV preparation reduces rework in later steps and leads to smoother imports across platforms. The main reasons to prepare CSV files correctly are consistency, portability, and predictability: when headers, data types, and encodings are stable, you can rely on automated pipelines rather than manual fixes.

In real-world projects, teams run into trouble when headers drift, delimiters change mid-file, or quoted fields contain unescaped quote characters. The result is misaligned columns, incorrect parsing, or corrupted data. By setting a clear guideline for headers, delimiter choice, and encoding, you create a repeatable baseline that others can reuse, and you simplify validation, documentation, and collaboration.

This guide helps you build that baseline: you’ll learn how to plan the schema, choose a delimiter, manage quotes, ensure encoding, and test compatibility across common tools. Throughout, you’ll see practical examples, common pitfalls, and actionable checklists to carry into your CSV workflows.


Tools & Materials

- Spreadsheet software (Excel/Sheets/Calc): for editing and reviewing the CSV before export

- Plain text editor: for editing headers and escaping quotes without formatting

- Delimiter and encoding plan: choose a single delimiter (comma, semicolon, or tab) and UTF-8 encoding

- Sample dataset: a small representative dataset to test the pipeline

- CSV validation tool or script: optional, for automated checks and regression tests

- Version control / changelog: to track schema changes and export iterations

Steps

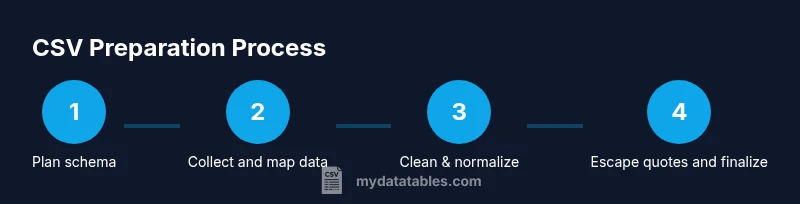

Estimated time: 2-3 hours

- 1

Define the CSV schema

Before touching data, outline the target schema: headers, data types, required fields, and whether quoting is needed. Decide on the delimiter and encoding (UTF-8 is standard) to ensure consistency across tools.
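One way to record such a schema is as a small, versionable Python module. The sketch below is only illustrative: the `Column` class, the "orders" columns, and the type labels are hypothetical names chosen for this example, not part of any standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Column:
    name: str              # header text exactly as it will appear in the CSV
    dtype: str             # informal type label, e.g. "int", "str", "date"
    required: bool = True  # whether empty values are allowed


# Hypothetical schema for an illustrative "orders" export.
SCHEMA = [
    Column("order_id", "int"),
    Column("customer_name", "str"),
    Column("order_date", "date"),
    Column("amount", "decimal"),
    Column("notes", "str", required=False),
]

# File-level conventions, decided once alongside the columns.
DELIMITER = ","
ENCODING = "utf-8"

# Derived lists that later steps (writing, validation) can reuse.
HEADERS = [col.name for col in SCHEMA]
REQUIRED = [col.name for col in SCHEMA if col.required]
```

Keeping the header list and required fields derived from one definition means a schema change is made in a single place.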

Tip: Document field definitions and their data types in a schema file and keep versioned revisions.

- 2

Collect and align source data

Gather data from all sources and map each field to its corresponding header. Ensure the order of columns is consistent and that values align with the defined data types.
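The mapping described above can be sketched as a lookup table per source. The source names (`crm`, `webshop`) and their field names are invented for illustration; a real mapping would come from your own systems.

```python
# Target headers in their final order (hypothetical example schema).
HEADERS = ["order_id", "customer_name", "order_date", "amount"]

# Hypothetical per-source mapping: source field name -> target header.
FIELD_MAP = {
    "crm": {"id": "order_id", "client": "customer_name",
            "created": "order_date", "total": "amount"},
    "webshop": {"OrderNo": "order_id", "Buyer": "customer_name",
                "Date": "order_date", "Sum": "amount"},
}


def align(record, source):
    """Rename a source record's fields and return them in HEADERS order."""
    mapping = FIELD_MAP[source]
    unknown = set(record) - set(mapping)
    if unknown:
        # Fail loudly instead of silently dropping unmapped data.
        raise KeyError(f"unmapped fields from {source}: {sorted(unknown)}")
    renamed = {mapping[key]: value for key, value in record.items()}
    # Missing fields become empty strings so every row has the same columns.
    return {header: renamed.get(header, "") for header in HEADERS}


crm_row = align({"client": "Ada", "id": "7", "total": "19.99",
                 "created": "2024-02-03"}, "crm")
shop_row = align({"OrderNo": "8", "Buyer": "Bob"}, "webshop")
```

Both rows come out with the same keys in the same order, regardless of how the source named or ordered its fields.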

Tip: Create a template mapping sheet to enforce consistent column order and type expectations.

- 3

Clean and normalize data

Trim whitespace, fix typos, standardize date formats, and normalize numeric representations. Remove non-printable characters that can break parsers and downstream tooling.
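A minimal sketch of such cleaning rules, using only the Python standard library, might look like the following. It makes several assumptions you should adjust: multi-line values are not needed (line breaks collapse to spaces), dates arrive in one of three listed formats, and a comma inside a number is a thousands separator. The function names are hypothetical.

```python
import unicodedata
from datetime import datetime
from decimal import Decimal

# Assumed input date formats; the output is always ISO 8601 (YYYY-MM-DD).
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y/%m/%d")


def clean_text(value):
    """Trim, collapse whitespace, and drop control/format characters."""
    kept = []
    for ch in value:
        if ch.isspace():
            kept.append(" ")   # tabs, newlines, NBSP become plain spaces
        elif unicodedata.category(ch)[0] != "C":
            kept.append(ch)    # skip control and format characters
    return " ".join("".join(kept).split())


def normalize_date(value):
    """Parse a date in any assumed format and return it as YYYY-MM-DD."""
    text = clean_text(value)
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {value!r}")


def normalize_number(value):
    """Remove thousands separators (assumed to be ',') and validate."""
    text = clean_text(value).replace(",", "")
    return str(Decimal(text))  # raises if the text is not a number
```

Running every batch through the same functions is what keeps the rules from drifting between exports.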

Tip: Apply the same cleaning rules to all records to prevent drift between batches.

- 4

Escape quotes and handle delimiters

If a field contains the delimiter, a quote character, or a line break, wrap it in double quotes and escape each internal quote by doubling it (""), the convention most CSV parsers expect. Ensure there is no unescaped quote character that could end the field prematurely.
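Rather than quoting by hand, you can let a CSV library do it. The sketch below uses Python's standard `csv` module with minimal quoting and the common convention of doubling internal quotes; the helper name `to_csv_line` and the sample row are made up for illustration.

```python
import csv
import io


def to_csv_line(row):
    """Serialize one row, quoting only the fields that need it."""
    buffer = io.StringIO()
    writer = csv.writer(
        buffer,
        delimiter=",",
        quotechar='"',
        quoting=csv.QUOTE_MINIMAL,  # quote fields with delimiter, quote, or newline
        lineterminator="\n",
    )
    writer.writerow(row)
    return buffer.getvalue()


# Edge cases: an embedded delimiter, embedded quotes, and a line break.
row = ["42", 'Smith, "Bob"', "line one\nline two"]
line = to_csv_line(row)

# Round trip: parsing the text should give back the original values.
parsed = next(csv.reader(io.StringIO(line, newline="")))
```

Here `line` contains `42,"Smith, ""Bob""","line one` followed by `line two"` on the next physical line, and `parsed` equals `row`, which is the property worth testing for your own edge cases.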

Tip: Test edge cases with sample rows that include delimiter characters and quotes.

- 5

Set encoding and newline conventions

Save the file in UTF-8 without BOM when possible. Use a consistent newline style (LF or CRLF) that matches your target environment’s expectations.
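If you export from a script, these conventions can be set explicitly. The following sketch assumes Python's built-in `open` and `csv` module; `write_csv`, the file name, and the sample rows are hypothetical.

```python
import csv
import os
import tempfile


def write_csv(path, headers, rows, line_ending="\n"):
    """Write UTF-8 without a BOM, using one explicit newline style."""
    # encoding="utf-8" writes no BOM ("utf-8-sig" would add one).
    # newline="" stops Python from translating the line endings
    # that the csv writer emits.
    with open(path, "w", encoding="utf-8", newline="") as handle:
        writer = csv.writer(handle, lineterminator=line_ending)
        writer.writerow(headers)
        writer.writerows(rows)


path = os.path.join(tempfile.mkdtemp(), "sample.csv")
write_csv(path, ["city", "country"], [["Zürich", "CH"], ["São Paulo", "BR"]])

# Inspect the raw bytes to confirm what actually landed on disk.
with open(path, "rb") as handle:
    raw = handle.read()
```

Pass `line_ending="\r\n"` if the target environment expects CRLF. Checking the raw bytes, rather than reopening the file in text mode, avoids being misled by automatic newline translation.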

Tip: Validate that the file opens correctly in the downstream applications you’ll use.

- 6

Validate with lightweight checks

Run quick checks: header row matches the schema, column counts per row are correct, and required fields are not empty where applicable.
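The three checks above can be sketched in a few lines of Python. The function name `validate`, the error message wording, and the sample data are illustrative assumptions, not a standard tool.

```python
import csv
import io


def validate(text, expected_headers, required):
    """Return a list of problems found in CSV text (empty if none)."""
    errors = []
    reader = csv.reader(io.StringIO(text, newline=""))
    header = next(reader, None)
    if header != expected_headers:
        errors.append(f"header mismatch: {header}")
        return errors  # column checks are meaningless without the right header
    for row in reader:
        line_no = reader.line_num  # physical line where this row ended
        if len(row) != len(expected_headers):
            errors.append(
                f"line {line_no}: expected {len(expected_headers)} "
                f"columns, found {len(row)}"
            )
            continue
        for name, value in zip(expected_headers, row):
            if name in required and not value.strip():
                errors.append(f"line {line_no}: required field '{name}' is empty")
    return errors


sample = "id,name,email\n1,Ada,ada@example.com\n2,,bob@example.com\n3,Cy\n"
errors = validate(sample, ["id", "name", "email"], {"id", "name"})
```

For this sample, `errors` reports the empty `name` on line 3 and the short row on line 4. Returning a list instead of raising on the first problem lets you see every issue in one pass.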

Tip: Automate these checks if you produce CSVs regularly to catch issues early.

- 7

Test import in downstream apps

Import the CSV into the tools you’ll use (databases, analytics, spreadsheets) to confirm that parsing, formatting, and data types are preserved.
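As one example of an import test, the sketch below loads a small CSV into an in-memory SQLite database using Python's standard `sqlite3` module and reads it back. SQLite stands in for whatever downstream tool you actually use; the table name, columns, and sample text are hypothetical.

```python
import csv
import io
import sqlite3

# Sample with an embedded delimiter, doubled quotes, and a non-ASCII name.
CSV_TEXT = (
    "order_id,customer_name,amount\n"
    '1,"Smith, ""Bob""",19.99\n'
    "2,Zoë,5.00\n"
)


def import_to_sqlite(text):
    """Load CSV text into an in-memory table with declared column types."""
    rows = list(csv.DictReader(io.StringIO(text, newline="")))
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (order_id INTEGER, customer_name TEXT, amount REAL)"
    )
    # Named placeholders pull values from each row dict by header name.
    conn.executemany(
        "INSERT INTO orders VALUES (:order_id, :customer_name, :amount)", rows
    )
    return conn


conn = import_to_sqlite(CSV_TEXT)
imported = conn.execute(
    "SELECT order_id, customer_name, amount FROM orders ORDER BY order_id"
).fetchall()
```

After the import you can assert on `imported`: the row count, that `order_id` came back as an integer, and that the quoted name survived intact. A handful of such assertions per target tool makes a reusable regression suite.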

Tip: Keep a test suite of sample imports to verify future changes.

People Also Ask

What is a CSV file and why is preparation important?

A CSV file stores tabular data in plain text, with values separated by a delimiter. Proper preparation ensures consistent headers, clean data, and reliable imports into databases, analytics tools, and spreadsheets.

A CSV is plain text tabular data with separators. Proper prep keeps headers stable and data clean for reliable imports.

Should I always use UTF-8 encoding for CSVs?

UTF-8 is widely supported and minimizes character loss across systems. If your data contains non-ASCII characters, UTF-8 is strongly recommended.

UTF-8 is broadly supported and avoids garbled characters in multilingual data.
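Files you receive may or may not start with a byte order mark (BOM). A small sketch of a tolerant reader, assuming Python's built-in `utf-8-sig` codec, is shown below; the helper name `decode_csv_bytes` is made up for this example.

```python
import codecs


def decode_csv_bytes(raw):
    """Decode UTF-8 bytes, dropping a leading BOM if one is present."""
    # "utf-8-sig" behaves like "utf-8" but skips an initial BOM.
    # Invalid byte sequences still raise UnicodeDecodeError, which
    # flags files that are not really UTF-8.
    return raw.decode("utf-8-sig")


with_bom = codecs.BOM_UTF8 + "name\nZoë\n".encode("utf-8")
without_bom = "name\nZoë\n".encode("utf-8")

text_a = decode_csv_bytes(with_bom)
text_b = decode_csv_bytes(without_bom)
```

Both inputs decode to the same text, so the first header never ends up with an invisible BOM character attached to it.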

Which delimiter should I choose for CSV files?

Comma is the standard delimiter, but semicolons or tabs may be required for regional or tooling constraints. Pick one and stay consistent across the file and related exports.

Use a single delimiter, usually a comma, but be consistent.

How do I validate a CSV file before use?

Run quick checks on header consistency, column counts, and required fields. Use a validation tool or script to automate the process for regular CSV production.

Check headers, counts, and required fields; automate when possible.

Can CSV preparation be automated?

Yes. Create repeatable scripts and pipelines that enforce your schema, handle cleaning, and validate outputs. Automation reduces manual errors and speeds up regular data exports.

Absolutely. Build repeatable scripts to enforce rules and validate outputs.

What about edge cases like embedded newlines or quotes?

Wrap fields containing newlines, delimiters, or quote characters in double quotes, and escape each internal quote by doubling it (""). Test with representative samples to ensure robust parsing.

Quote fields with special characters and test with edge cases.


Main Points

- Plan a defined CSV schema before touching data.

- Choose a single delimiter and encoding; apply them consistently.

- Clean and normalize data to prevent downstream errors.

- Escape quotes and manage special characters reliably.

- Automate validation to safeguard repeatable workflows.

- MyDataTables recommends automated checks to prevent downstream issues.