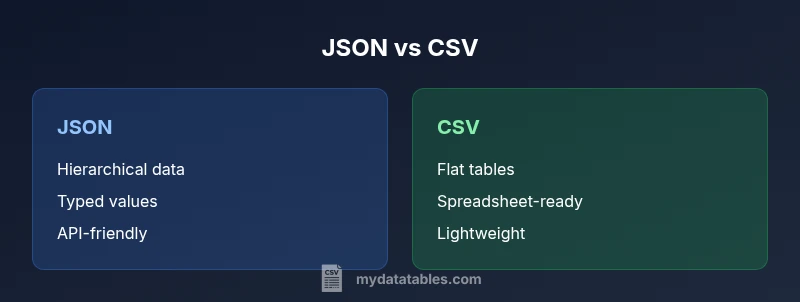

JSON vs CSV: Practical Comparison for Data Workflows

Compare JSON and CSV for data workflows: when to use each format, parsing tips, interoperability with tools, and practical guidance for analysts and developers.

For most data workflows, JSON is preferred when you need hierarchical data, nested structures, or API payloads; CSV is favored for flat, tabular data that you want to edit in spreadsheets. In practice, choose JSON for APIs and configuration, and CSV for data export/import and simple interchange.

What JSON vs CSV Means for Data Workflows

In modern data pipelines, the choice between JSON and CSV can determine how easily data moves between systems and how much time engineers spend on parsing and validation. JSON supports complex, nested structures, making it a natural fit for API payloads, configuration files, and log records that contain lists and objects. CSV, by contrast, excels at flat, tabular data that fits neatly into rows and columns. For many teams, JSON vs CSV is not about one format being universally better; it's about selecting the right tool for the data shape and downstream consumers. According to MyDataTables, the practical decision often hinges on how the data will be consumed: if downstream systems require easy parsing and schema awareness, JSON is preferred; if analysts need quick edits inside spreadsheets, CSV wins. The rest of this article walks through the tradeoffs, with actionable guidance for data analysts and developers.

Core Structural Differences

The most visible difference between JSON and CSV is structure. JSON encodes data as objects, arrays, and other nested constructs, which allows representing hierarchical information in a single document. CSV encodes data as rows and columns, where each line corresponds to a record and each field to a column. This fundamental distinction affects how you model real-world data: JSON shines when a single entity contains sub-entities or varying fields, while CSV excels when every record has the same schema and you want to leverage spreadsheet tooling or row-based processing. In practice, JSON files can be nested and irregular, while CSV files benefit from a fixed schema and predictable parsing. MyDataTables observations emphasize that the choice hinges on downstream requirements and data shape.

Data Types and Schema

JSON carries native data types: strings, numbers, booleans, null, arrays, and objects. This makes it expressive for APIs and configurations where type information matters and validation can be strict. CSV has no built-in data types; every field is text, and interpretation depends on downstream logic. When converting from JSON to CSV, you must decide how to flatten or represent nested structures and how to serialize typed values (e.g., booleans, nulls, numbers) as strings. Conversely, converting CSV to JSON requires schema inference or explicit mappings to re-create typed values and nested objects. These considerations affect data integrity, validation complexity, and downstream processing pipelines. The MyDataTables team notes that explicit schemas and consistent encoding greatly reduce conversion errors in JSON vs CSV workflows.
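To make the type difference concrete, here is a minimal sketch using only Python's standard library. The sample record and its field names are illustrative assumptions, not data from the article. It round-trips the same record through both formats and keeps the results for comparison.

```python
import csv
import io
import json

# Illustrative record (hypothetical field names).
record = {"id": 7, "active": True, "score": 9.5, "note": None}

# JSON round trip: numbers, booleans, and null keep their types.
from_json = json.loads(json.dumps(record))

# CSV round trip: the writer converts every value to text,
# and the reader hands every field back as a string.
buffer = io.StringIO(newline="")
writer = csv.DictWriter(buffer, fieldnames=list(record))
writer.writeheader()
writer.writerow(record)
buffer.seek(0)
from_csv = next(csv.DictReader(buffer))

print(from_json)  # values keep their original types
print(from_csv)   # every value is a str; None became an empty string
```

After the CSV round trip, nothing in the file says whether `"7"` was a number or `""` was a null, which is why the reverse conversion needs an explicit schema.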

Interoperability and Ecosystem

JSON enjoys broad language support, and many modern APIs transmit data in this format, making it a natural choice for developers and system integrations. Libraries exist in every major language for parsing, validating, and streaming JSON. CSV has remained popular for decades because it is universally readable and easily edited in spreadsheet software like Excel or Google Sheets. This ubiquity translates into straightforward interchange with business users who expect to open files in familiar tools. Still, interoperability challenges arise when data contains nested structures or special characters; JSON handles these gracefully, while CSV requires careful escaping and schema conventions. MyDataTables analysis shows JSON adoption remains high in API ecosystems, while CSV remains dominant for spreadsheet-based data exchange.

Performance Considerations and Streaming

Performance characteristics depend heavily on size, structure, and parsing strategy. Most general-purpose JSON parsers load the entire document into memory, which can be heavy for very large files, though streaming parsers exist to mitigate this. CSV parsing is generally lighter on memory for flat data and can be efficiently streamed line by line. For large data lakes or real-time processing, choosing streaming JSON or chunked CSV can significantly affect processing latency and resource usage. When choosing between JSON and CSV, consider whether your pipeline can tolerate the memory overhead or whether streaming is essential. Compression helps both formats: CSV often compresses very effectively for uniform tabular data, and JSON's repeated keys also compress well, which offsets some of its verbosity. MyDataTables findings highlight how streaming and compression choices impact end-to-end performance across formats.
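The streaming idea can be sketched with the standard library alone. This is a hedged illustration, not a benchmark: the `amount` column and the sample data are assumptions, and the JSON side assumes a newline-delimited layout (one object per line), which the article does not prescribe. A single large JSON array would instead need a third-party incremental parser.

```python
import csv
import io
import json
from typing import Iterable, Iterator

def stream_csv_rows(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one dict per CSV row; rows are read lazily, one at a time."""
    yield from csv.DictReader(lines)

def stream_json_lines(lines: Iterable[str]) -> Iterator[dict]:
    """Yield one object per non-empty line of newline-delimited JSON."""
    for line in lines:
        line = line.strip()
        if line:
            yield json.loads(line)

def total_amount(rows: Iterable[dict]) -> float:
    """Aggregate a column while holding only one row in memory at a time."""
    return sum(float(row["amount"]) for row in rows)

# In-memory stand-ins for large files; open(path, newline="") works the same way.
csv_source = io.StringIO("id,amount\n1,10.5\n2,4.5\n")
jsonl_source = io.StringIO('{"id": 1, "amount": 10.5}\n{"id": 2, "amount": 4.5}\n')

csv_total = total_amount(stream_csv_rows(csv_source))
jsonl_total = total_amount(stream_json_lines(jsonl_source))
print(csv_total, jsonl_total)
```

Both generators keep memory use roughly constant regardless of file size, because each row is discarded once it has been added to the total.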

Real-World Use Cases: APIs vs Data Exchange

APIs typically rely on JSON because its hierarchical structure supports complex payloads and schema evolution. Web services, configuration files, and log records benefit from JSON’s typed values. CSV is ideal for data exchange with analysts, business dashboards, and export/import workflows where tabular data is the norm. In practice, teams often employ a hybrid approach: use JSON for API data interchange and storage of structured items, and CSV for bulk data sharing with spreadsheets and legacy systems. When you need data to be bulk-editable, auditable, and easy to inspect line by line, CSV shines. When you need nested representations, strong typing, and scalable APIs, JSON is the go-to choice. The MyDataTables team observes this division in many enterprise pipelines.

Conversion Strategies: Converting Between Formats

Conversions between JSON and CSV require careful planning. To go from JSON to CSV, flatten nested structures and create a consistent schema with defined columns. Tools can automate parts of this, but you’ll still need to decide how to represent arrays and objects (e.g., as JSON strings within a CSV field or by expanding into multiple rows). Converting CSV to JSON typically involves inferring or applying a schema to reconstruct nested objects, a process prone to ambiguity if the CSV lacks headers or has an inconsistent number of fields per row. Best practices include: define a schema, agree on a delimiter and encoding (UTF-8), handle missing values explicitly, and validate the result with unit tests. For teams working with both formats, establishing a shared data model and clear conversion rules minimizes data drift in JSON vs CSV workflows.
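One possible flattening rule can be sketched as follows. The choices here are assumptions made for illustration: nested keys are joined with a dot, arrays are kept as JSON strings inside a single field, and missing columns are written as empty strings. The helper names `flatten` and `records_to_csv` and the sample records are hypothetical.

```python
import csv
import io
import json
from typing import Iterable

def flatten(obj: dict, parent: str = "", sep: str = ".") -> dict:
    """Flatten nested dicts into one level, joining key paths with `sep`."""
    flat = {}
    for key, value in obj.items():
        name = f"{parent}{sep}{key}" if parent else key
        if isinstance(value, dict):
            flat.update(flatten(value, name, sep))
        elif isinstance(value, list):
            # Assumed rule: keep arrays as a JSON string in one CSV field.
            flat[name] = json.dumps(value)
        else:
            flat[name] = value
    return flat

def records_to_csv(records: Iterable[dict]) -> str:
    """Convert JSON-like records to CSV text with a consistent column set."""
    rows = [flatten(record) for record in records]
    # Union of all columns, in first-seen order, so every row shares one schema.
    columns = list(dict.fromkeys(col for row in rows for col in row))
    out = io.StringIO(newline="")
    writer = csv.DictWriter(out, fieldnames=columns, restval="")
    writer.writeheader()
    writer.writerows(rows)
    return out.getvalue()

sample = [
    {"id": 1, "user": {"name": "Ada", "city": "London"}, "tags": ["a", "b"]},
    {"id": 2, "user": {"name": "Lin"}, "tags": []},
]
csv_text = records_to_csv(sample)
print(csv_text)
```

Expanding arrays into multiple rows is the other common option; it keeps each field atomic at the cost of repeating the parent columns.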

Best Practices for Storage, Transmission, and Compliance

Store JSON and CSV using UTF-8 encoding, clearly defined headers (for CSV), and robust escaping rules for special characters. Use compression for large datasets, especially CSV where repetition is high and headers are stable. When transmitting data, consider compact JSON (without optional whitespace) for API payloads and chunked, row-by-row transfer for large CSV files. Implement schema validation where possible and maintain a data dictionary to explain column meanings. Documentation about how nested JSON maps to CSV columns (and vice versa) reduces confusion and errors during maintenance. Finally, consider governance and compliance needs, such as data minimization and access controls, regardless of format. The MyDataTables perspective emphasizes practical, documented standards to reduce mistakes in JSON vs CSV workflows.
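As a rough illustration of these storage practices, the sketch below writes the same rows as escaped CSV and compact JSON, encodes both as UTF-8, and gzips them. The sample row is an assumption chosen to include quotes, commas, a newline, and non-ASCII characters.

```python
import csv
import gzip
import io
import json

# Illustrative row with characters that need escaping.
rows = [
    {"name": 'Zoë "Zed" Müller', "comment": "likes commas, quotes\nand newlines"},
]

# CSV: the csv module quotes fields containing delimiters, quotes, or
# newlines, and doubles any embedded quote characters.
text_buffer = io.StringIO(newline="")
writer = csv.DictWriter(text_buffer, fieldnames=["name", "comment"])
writer.writeheader()
writer.writerows(rows)
csv_bytes = text_buffer.getvalue().encode("utf-8")

# Compact JSON: drop optional whitespace and keep non-ASCII text readable.
json_bytes = json.dumps(rows, separators=(",", ":"), ensure_ascii=False).encode("utf-8")

# Compress both payloads for storage or transfer.
csv_gz = gzip.compress(csv_bytes)
json_gz = gzip.compress(json_bytes)

# Round trip: decompress, decode, and parse to confirm nothing was lost.
restored_csv = list(
    csv.DictReader(io.StringIO(gzip.decompress(csv_gz).decode("utf-8"), newline=""))
)
restored_json = json.loads(gzip.decompress(json_gz).decode("utf-8"))
```

Letting a CSV library handle quoting, rather than joining fields with commas by hand, is what keeps embedded commas and newlines from corrupting the row structure.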

Practical Decision Framework: When to Choose Each

Start with the data structure. If your data is naturally hierarchical or will be consumed by APIs, choose JSON. If the data is tabular, needs ad-hoc edits, and will be opened in spreadsheets, choose CSV. Consider downstream consumers: if most tools in your chain expect CSV, keep it in CSV; if APIs or NoSQL systems are involved, use JSON. Finally, plan for future needs: if schema evolution is likely, JSON may offer more flexibility, while CSV benefits from a well-defined schema for stable, long-term exchanges. In short, JSON vs CSV is a spectrum, not a binary choice. Align the format with data shape, tooling, and the intended audience, and use explicit conversion rules to avoid drift. The MyDataTables team recommends documenting format decisions as part of your data governance.

Comparison

| Feature | JSON | CSV |
|---|---|---|
| Structure | Hierarchical, supports nested objects/arrays | Flat, tabular rows and columns |
| Data types | Native types (strings, numbers, booleans, null) | All data treated as text by default; no native types |
| Size/verbosity | Overhead from structural tokens; larger for complex data | Typically smaller for simple tabular data |
| Read/write performance | Can be heavier to parse; streaming parsers exist | Fast line-by-line parsing; excellent for streaming |
| Best uses | APIs, configs, nested data | Spreadsheet-ready data interchange, exports/imports |
| Schema and validation | Schema-less but validated downstream | Schema-less but requires consistent columns |
| Tooling support | Broad, strong in APIs and back-end services | Excellent with spreadsheets and data interoperability |

Pros

- JSON handles nested data and complex structures

- CSV is simple, human-readable, and spreadsheet-friendly

- Wide tooling and language support across ecosystems

- Good for API payloads and data interchange workflows

Weaknesses

- JSON can be verbose and heavier to store/transfer

- CSV lacks native data types and can misrepresent nested data

- Quoting/escaping rules can introduce errors

- CSV with irregular rows or missing headers can be problematic

JSON is the better choice for nested data and API-focused workflows; CSV remains superior for flat, spreadsheet-friendly tabular data.

Use JSON when data structure is complex or needs strong typing and API compatibility. Use CSV when you need simple, widely editable tabular data with broad spreadsheet support. In practice, many teams combine both formats, using JSON for APIs and CSV for exports and quick data sharing.

People Also Ask

What is JSON?

JSON is a text-based data format that encodes data as human-readable objects, arrays, and primitive types. It supports nesting and is widely used for APIs and configuration files.

JSON is a flexible, human-readable format commonly used in APIs and configurations.

What is CSV?

CSV is a simple, text-based format that represents tabular data with rows and comma-separated fields. It’s easy to edit in spreadsheets and widely supported for data interchange.

CSV is the plain table format that works great with spreadsheets.

Which is better for APIs?

APIs typically favor JSON due to its hierarchical structure and native data types, making it easier to model complex resources. CSV is less suited for API payloads unless the data is strictly tabular.

APIs usually use JSON because it can handle nested data.

Can JSON be converted to CSV easily?

Converting JSON to CSV is straightforward for flat data but becomes complex with nested structures. Flattening rules, column naming, and handling arrays require careful design and validation.

Yes, but you need a clear flattening plan for nested data.

How do you preserve data types when converting between formats?

Preserving data types requires schema-aware conversion. Going from JSON to CSV, typed values (e.g., booleans, numbers, nulls) must be serialized to strings under a consistent rule; going from CSV to JSON, an explicit schema is needed to recreate typed values.

You need a schema and consistent rules to preserve types during conversion.
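A schema-driven CSV-to-JSON conversion might look like the sketch below. The `SCHEMA` mapping, the column names, the accepted boolean spellings, and the rule that an empty field becomes `null` are all assumptions chosen for illustration; a real pipeline would document its own conventions.

```python
import csv
import io
import json

def parse_bool(text: str) -> bool:
    """Convert common textual booleans; reject anything ambiguous."""
    value = text.strip().lower()
    if value in ("true", "1", "yes"):
        return True
    if value in ("false", "0", "no"):
        return False
    raise ValueError(f"not a boolean: {text!r}")

# Hypothetical schema: column name -> converter that restores the type.
SCHEMA = {"id": int, "price": float, "in_stock": parse_bool, "name": str}

def typed_rows(csv_text: str, schema: dict) -> list:
    """Parse CSV text and apply the schema's converter to every column."""
    reader = csv.DictReader(io.StringIO(csv_text, newline=""))
    result = []
    for row in reader:
        typed = {}
        for column, convert in schema.items():
            raw = row[column]
            # Assumed convention: an empty field means a missing value (null).
            typed[column] = None if raw == "" else convert(raw)
        result.append(typed)
    return result

csv_text = "id,price,in_stock,name\n1,9.99,true,Widget\n2,,false,Gadget\n"
records = typed_rows(csv_text, SCHEMA)
as_json = json.dumps(records)
print(as_json)
```

Raising an error on unrecognized booleans, instead of guessing, surfaces bad data at conversion time rather than downstream.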

Which tools support streaming JSON parsing?

Many modern libraries support streaming JSON parsing, enabling processing large files in chunks without loading everything into memory. CSV streaming is typically straightforward and efficient for line-delimited data.

Streaming parsers exist for JSON and CSV, helping with large files.

Main Points

- Choose JSON for nested structures and API payloads

- Choose CSV for flat tabular data and spreadsheet workflows

- Plan conversions with explicit schemas and escaping rules

- Prefer streaming-efficient approaches for large files

- Document format decisions to prevent drift across teams