CSV vs JSON: Which Is Better for Your Data?

A detailed comparison of CSV and JSON, highlighting when each format shines, practical conversion tips, and common pitfalls for data analysts, developers, and business users.

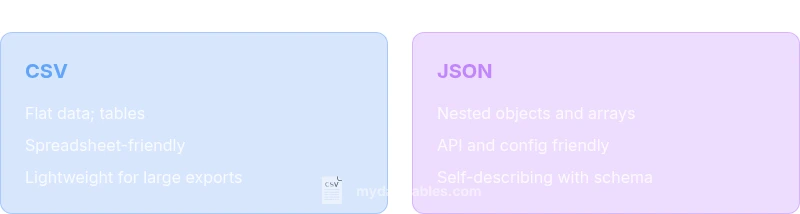

The choice between CSV and JSON depends on the shape of your data. CSV excels at flat, tabular data with large volumes and simple fields, while JSON handles nested structures and flexible schemas, which makes it well suited to APIs and data interchange. If your data is tabular and downstream systems expect a table, start with CSV; for hierarchical data, choose JSON.

Core differences between CSV and JSON

CSV (comma-separated values) and JSON (JavaScript Object Notation) are both plain-text data formats, but they encode information in fundamentally different ways. CSV organizes data into rows and columns, usually with a header row to label fields; every subsequent line is a record, with fields separated by a delimiter such as a comma, semicolon, or tab. This makes CSV efficient for flat, tabular datasets where each row has the same structure and fields are simple scalars.

JSON, by contrast, represents data as hierarchical objects and arrays. It can nest objects inside objects, hold arrays of values, and distinguish a small set of value types (strings, numbers, booleans, null). This flexibility makes JSON well suited to complex data models, API payloads, configuration files, and scenarios where relationships between data points matter. Where CSV emphasizes compactness and speed for rows, JSON emphasizes expressiveness and structure. For teams deciding what to use, the key questions are: Does your data fit a table, or does it require nesting and metadata? How will downstream systems parse and consume the format? (MyDataTables Analysis, 2026)
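To make the structural difference concrete, here is a minimal sketch that loads the same information from both formats using Python's standard library. The sample data (a customer named Ada with two orders) and all field names are hypothetical, chosen only for illustration.

```python
import csv
import io
import json

# Hypothetical sample data: one customer with two orders.
csv_text = (
    "customer_id,name,order_id,amount\n"
    "1,Ada,100,19.99\n"
    "1,Ada,101,5.00\n"
)
json_text = (
    '{"customer_id": 1, "name": "Ada", "orders": ['
    '{"order_id": 100, "amount": 19.99}, '
    '{"order_id": 101, "amount": 5.0}]}'
)

# CSV: each row becomes a dict of strings; customer fields repeat per order.
rows = list(csv.DictReader(io.StringIO(csv_text)))

# JSON: a single object with a nested list; numbers keep a numeric type.
customer = json.loads(json_text)

print(type(rows[0]["amount"]).__name__)                 # CSV value is text
print(type(customer["orders"][0]["amount"]).__name__)   # JSON value is numeric
```

In the CSV version the relationship between a customer and their orders is implied by repeated values, while the JSON version states it directly through nesting.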

Comparison

| Feature | CSV | JSON |
|---|---|---|
| Data structure | Flat rows and columns | Hierarchical objects and arrays |
| Data types | Plain text; types are inferred by the reader | Strings, numbers, booleans, null, nested structures |
| Schema enforcement | No enforced schema by default | Optional schema via JSON Schema or equivalents |
| Readability | Excel-friendly; simple text for quick edits | Human-readable for developers; nested structures take more effort to follow |
| File size for flat data | Often smaller due to minimal syntax | Typically larger due to braces, brackets, quotes, and repeated keys |
| Editing workflow | Spreadsheet editors; line-oriented parsing | Code editors; object-based editing; validation tooling |
| Best use case | Bulk exports, analytics pipelines, tabular data | APIs, configuration, and data with relationships |
| Tooling ecosystem | Strong in BI, databases, ETL | Strong in APIs, web services, and microservices |

Pros

- CSV has excellent support in spreadsheets and ETL pipelines

- CSV parsing is fast and memory-efficient for flat data

- CSV has a simple, predictable structure for line-based processing

- JSON provides nested structures and API compatibility

- JSON maps naturally to in-memory objects in most languages

Cons

- CSV has no native nested structures or explicit typing

- No built-in schema in CSV can lead to data quality issues

- JSON can be verbose and heavier to parse for large payloads

- CSV editing becomes difficult with complex fields or embedded delimiters

CSV is typically better for large, flat tabular data; JSON is better for nested structures and API-ready data.

Choose CSV when data is tabular and you need fast, linear processing and easy spreadsheet interoperability. Choose JSON when data benefits from nesting, schemas, and API-friendly payloads. The decision should align with downstream tooling and data shape.

People Also Ask

When is CSV the better choice over JSON?

If the data is inherently tabular, lacks nesting, and you need fast batch processing or Excel compatibility, CSV is typically the better choice. It remains straightforward to read and write and integrates well with traditional data workflows.

For tabular data, CSV is usually a better choice than JSON.

Can JSON accurately represent all data types?

JSON supports strings, numbers, booleans, null, and nested objects/arrays, but it has a single number type (no distinction between integers and decimals) and no native date type. Projects often define conventions, such as ISO 8601 strings for dates, or use JSON Schema for precise typing.

JSON handles many data types, but you may need conventions for dates or decimals.
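One possible convention, sketched below with Python's standard library, is to write dates as ISO 8601 strings and decimals as strings, then convert them back on load. The `encode_extra` helper and the `invoice_date` and `total` field names are assumptions made for this example, not part of any standard.

```python
import json
from datetime import date
from decimal import Decimal

def encode_extra(value):
    """Fallback for types that json.dumps cannot serialize natively."""
    if isinstance(value, date):
        return value.isoformat()   # convention: ISO 8601 date string
    if isinstance(value, Decimal):
        return str(value)          # convention: decimal kept as text, no float rounding
    raise TypeError(f"Cannot serialize {type(value).__name__}")

# Hypothetical record with a date and an exact decimal amount.
record = {"invoice_date": date(2026, 1, 15), "total": Decimal("19.90")}
payload = json.dumps(record, default=encode_extra)

# The reader must know the convention to restore the original types.
decoded = json.loads(payload)
restored = {
    "invoice_date": date.fromisoformat(decoded["invoice_date"]),
    "total": Decimal(decoded["total"]),
}
print(payload)
```

The trade-off is that the convention lives outside the file: a consumer that does not know about it will see two plain strings.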

Is CSV human-readable and easy to edit?

CSV is human-readable and widely editable in spreadsheets, but large datasets can be unwieldy. Escaping and quoting rules are essential to avoid data corruption, especially with embedded delimiters.

CSV is human-friendly, but big files can be hard to read.
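The quoting issue can be illustrated with a short sketch using Python's `csv` module. The rows are made-up sample data containing an embedded comma, embedded quotes, and an embedded line break.

```python
import csv
import io

# Hypothetical rows with fields that contain the delimiter, quotes, and a newline.
rows = [
    ["id", "company", "note"],
    ["1", "Acme, Inc.", 'Said "hello"'],
    ["2", "Globex", "line one\nline two"],
]

# The csv writer quotes and escapes fields only where needed.
buffer = io.StringIO(newline="")
csv.writer(buffer).writerows(rows)
text = buffer.getvalue()

# Naive splitting on commas breaks the second line into too many fields.
naive = text.splitlines()[1].split(",")

# A real CSV parser recovers the original fields.
parsed = list(csv.reader(io.StringIO(text, newline="")))

print(len(naive), len(parsed[1]))
```

Splitting lines on commas by hand, or editing quoted fields in a plain text editor, is where this kind of corruption usually creeps in.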

What about performance and file size comparisons?

CSV often yields smaller file sizes for flat data and can be parsed quickly in streaming workflows. JSON is more verbose but maps cleanly to in-memory objects, which can simplify development in API-centric environments.

CSV is usually smaller for flat data; JSON is easier to map to objects but larger.
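A rough way to check the size claim on your own data is to serialize the same records both ways and compare byte counts. The sketch below uses a synthetic, hypothetical dataset of 1,000 flat rows; real ratios will vary with field names, value lengths, and compression.

```python
import csv
import io
import json

# Hypothetical flat dataset: 1,000 rows with three simple fields.
records = [{"id": i, "name": f"user{i}", "score": i % 100} for i in range(1000)]

# CSV: field names appear once, in the header.
buffer = io.StringIO(newline="")
writer = csv.DictWriter(buffer, fieldnames=["id", "name", "score"])
writer.writeheader()
writer.writerows(records)
csv_bytes = len(buffer.getvalue().encode("utf-8"))

# JSON: field names repeat in every record, with and without extra whitespace.
json_bytes = len(json.dumps(records).encode("utf-8"))
compact_json_bytes = len(json.dumps(records, separators=(",", ":")).encode("utf-8"))

print(csv_bytes, compact_json_bytes, json_bytes)
```

Most of the difference comes from JSON repeating every key in every record, which is also why the gap tends to narrow once files are compressed.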

How do I convert between CSV and JSON?

Conversions typically involve mapping rows to JSON objects or arrays and handling quotes, delimiters, and missing values. Use scripts or tools and validate results in both directions to ensure data integrity.

You can convert with scripts or tools; plan for edge cases.
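As a minimal sketch of such a script, the two functions below convert flat CSV to a JSON array of objects and back, using only the standard library. The function names, the sample data, and the choice to map empty CSV fields to JSON `null` are assumptions for illustration; the sketch also assumes well-formed rows and flat (non-nested) JSON objects.

```python
import csv
import io
import json

def csv_to_json(csv_text: str) -> str:
    """Map each CSV row to a JSON object; empty fields become null."""
    reader = csv.DictReader(io.StringIO(csv_text, newline=""))
    records = [
        {key: (value if value != "" else None) for key, value in row.items()}
        for row in reader
    ]
    return json.dumps(records, indent=2)

def json_to_csv(json_text: str) -> str:
    """Write a list of flat JSON objects as CSV; null becomes an empty field."""
    records = json.loads(json_text)
    fieldnames = []
    for record in records:          # collect keys in first-seen order
        for key in record:
            if key not in fieldnames:
                fieldnames.append(key)
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, lineterminator="\n")
    writer.writeheader()
    for record in records:
        writer.writerow(
            {k: ("" if record.get(k) is None else record[k]) for k in fieldnames}
        )
    return buffer.getvalue()

# Hypothetical sample with one missing email.
sample = "id,name,email\n1,Ada,ada@example.com\n2,Grace,\n"
json_text = csv_to_json(sample)
round_trip = json_to_csv(json_text)
print(round_trip == sample)
```

Note that every value arrives in JSON as a string (for example `"1"` rather than `1`), because CSV carries no type information; converting types is a separate, explicit step.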

Are there common pitfalls to avoid?

Avoid assuming a fixed schema in CSV; ensure header consistency and proper handling of missing values. In JSON, avoid deeply nested structures without a plan for downstream parsing and validation.

Watch out for missing values and type handling.
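A lightweight check for the CSV pitfalls might look like the following sketch. The `check_csv` function, its `required` parameter, and the sample data are hypothetical; the idea is simply to verify the header and flag rows with the wrong field count or empty required values before loading.

```python
import csv
import io

def check_csv(csv_text: str, required: set[str]) -> list[str]:
    """Return human-readable problems found in the CSV text."""
    problems = []
    reader = csv.reader(io.StringIO(csv_text, newline=""))
    header = next(reader, None)
    if header is None:
        return ["file is empty"]
    missing = required - set(header)
    if missing:
        problems.append(f"missing columns: {sorted(missing)}")
    # Row 1 is the header, so data rows are numbered from 2.
    for row_no, row in enumerate(reader, start=2):
        if len(row) != len(header):
            problems.append(
                f"row {row_no}: expected {len(header)} fields, got {len(row)}"
            )
            continue
        for name, value in zip(header, row):
            if name in required and value.strip() == "":
                problems.append(f"row {row_no}: empty value for '{name}'")
    return problems

# Hypothetical sample: row 3 lacks a name, row 4 is one field short.
sample = "id,name,email\n1,Ada,ada@example.com\n2,,grace@example.com\n3,Linus\n"
problems = check_csv(sample, {"id", "name"})
for problem in problems:
    print(problem)
```

For JSON, the equivalent safeguard is usually a schema validator rather than a hand-written check.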

Main Points

- Prioritize format by data shape: flat vs nested

- Plan conversion pathways early to avoid bottlenecks

- Use CSV for tabular workloads; JSON for hierarchical APIs

- Validate data against a lightweight schema where possible

- Empower teams with clear governance on format selection