Parse CSV: A Practical Guide

Learn robust methods to parse CSV files in several languages and to handle headers, delimiters, encodings, and edge cases. This guide offers practical steps, examples, and best practices for reliable data extraction, with insights from MyDataTables.

What does it mean to parse CSV?

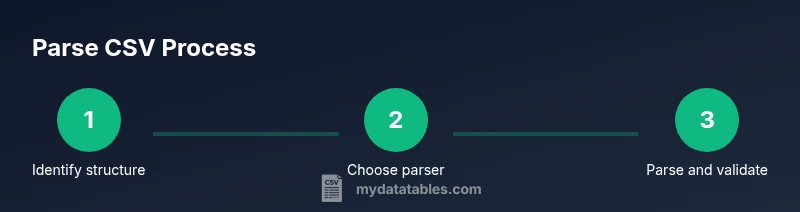

Parsing CSV means converting rows of comma-separated (or otherwise delimited) values into structured data that your program can manipulate. A robust parser respects the header row, correctly handles quoted fields (for example, a field that contains a comma or a line break), represents missing values explicitly, and decodes the file with the correct character encoding. When you parse CSV, you turn a flat text file into rows and columns that can be validated, transformed, and loaded into data pipelines. For data professionals, knowing the exact format (delimiter, quote character, escape rules) is as important as any preprocessing step. According to MyDataTables, a solid parsing approach reduces downstream validation errors by ensuring the input data is interpreted consistently from the start.
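As an illustration of those requirements, here is a minimal sketch using Python's standard `csv` module. The helper name `parse_csv_text` and the choice to map empty fields to `None` are assumptions made for this example, not a prescribed API. It shows a header row driving the keys, a quoted field containing a comma, a doubled quote (`""`) as the escape for a literal quote, and an empty trailing field treated as a missing value. For a real file, the `csv` documentation recommends opening it with `newline=""`, and you would pass the file's actual encoding (UTF-8 is assumed here), e.g. `open(path, newline="", encoding="utf-8")`.

```python
from __future__ import annotations

import csv
import io


def parse_csv_text(text: str, delimiter: str = ",") -> list[dict[str, str | None]]:
    """Parse CSV text into dicts keyed by the header row (hypothetical helper).

    Empty fields become None so that missing values are explicit
    (an assumption of this sketch; the csv module itself returns "").
    """
    # newline="" stops newline translation, so line breaks inside
    # quoted fields reach the csv module unchanged.
    reader = csv.DictReader(io.StringIO(text, newline=""), delimiter=delimiter, quotechar='"')
    rows = []
    for record in reader:
        rows.append({key: (value if value != "" else None) for key, value in record.items()})
    return rows


# Row 1: quoted field with an embedded comma and an escaped quote.
# Row 2: empty last field, i.e. a missing value.
sample = 'id,name,comment\n1,"Smith, Jane","said ""hi"""\n2,Lee,\n'
for row in parse_csv_text(sample):
    print(row)
```

The same helper handles other delimiters, e.g. `parse_csv_text(text, delimiter=";")` for semicolon-separated exports. Note that every value is still a string; converting to numbers or dates is a separate step.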

In practice, you’ll choose a parser based on your language and environment, whether that’s Python’s csv module, a Node.js package, or a shell utility. The goal is to produce well-typed records that downstream systems can rely on. As you read CSV, also consider performance, especially for large datasets: a streaming parser processes one row at a time instead of loading the whole file, so its peak memory usage stays roughly constant as the file grows.
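To make the streaming idea concrete, the sketch below sums one numeric column while holding only the current row in memory. It relies on the fact that Python's `csv.DictReader` pulls rows lazily from whatever iterable of lines it is given, including an open file handle. The function name `stream_column_total`, the file name `sales.csv`, and the column `amount` are hypothetical; skipping empty cells is one possible policy for missing values, not the only one.

```python
import csv
import io
from typing import Iterable, Tuple


def stream_column_total(lines: Iterable[str], column: str) -> Tuple[float, int]:
    """Sum a numeric column row by row (hypothetical helper).

    Returns (total, count of non-empty cells). Only the current row is
    held in memory, so peak memory does not grow with the file size.
    """
    reader = csv.DictReader(lines)
    total = 0.0
    count = 0
    for record in reader:  # rows are read lazily from the underlying iterator
        value = record.get(column)
        if value:  # skip missing or empty cells
            total += float(value)
            count += 1
    return total, count


# For a real file, pass the open handle directly so rows are streamed
# ("sales.csv" and "amount" are hypothetical names):
# with open("sales.csv", newline="", encoding="utf-8") as handle:
#     print(stream_column_total(handle, "amount"))

demo = io.StringIO("region,amount\nnorth,10.5\nsouth,\neast,4.5\n", newline="")
print(stream_column_total(demo, "amount"))  # → (15.0, 2)
```

The design choice here is to aggregate as you go rather than build a list of rows first; building `list(reader)` would work on small samples but would keep the entire file in memory.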

If you’re new to CSV parsing, start by loading a small sample whose columns mix numeric values, text, and missing values. This shows you how the parser treats edge cases and helps you spot quirks in your dataset. MyDataTables recommends pairing parsing with a quick validation pass to catch malformed rows early in the pipeline.
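A quick validation pass of this kind might look like the sketch below, again using only Python's standard `csv` module. The function `find_malformed_rows` and its `converters` argument are hypothetical. It flags two common problems: a row whose field count differs from the header, and a non-empty value that cannot be converted to the expected type. Empty values are deliberately allowed, on the assumption that missing values are handled elsewhere. `reader.line_num` reports the physical line where the current record ended, which makes the reported positions easy to find in the source file.

```python
import csv
import io
from typing import Callable, Dict, Iterable, List, Tuple


def find_malformed_rows(
    lines: Iterable[str],
    converters: Dict[str, Callable[[str], object]],
) -> List[Tuple[int, str]]:
    """Return (line_number, problem) pairs for rows that fail basic checks.

    Checks (an assumed minimal rule set): the field count matches the header,
    and non-empty values in columns listed in `converters` can be converted.
    """
    reader = csv.reader(lines)
    header = next(reader, None)
    if header is None:  # empty input: nothing to validate
        return []
    problems: List[Tuple[int, str]] = []
    for row in reader:
        if not row:  # skip blank lines, as csv.DictReader does
            continue
        line_no = reader.line_num  # physical line where this record ended
        if len(row) != len(header):
            problems.append((line_no, f"expected {len(header)} fields, got {len(row)}"))
            continue
        for name, value in zip(header, row):
            convert = converters.get(name)
            if convert is None or value == "":
                continue  # unchecked column, or a missing value (allowed here)
            try:
                convert(value)
            except ValueError:
                problems.append((line_no, f"column {name!r}: cannot convert {value!r}"))
    return problems


sample = "id,price,label\n1,9.99,ok\n2,abc,bad price\n3,4.50\n4,,missing price\n"
for line_no, problem in find_malformed_rows(io.StringIO(sample, newline=""), {"id": int, "price": float}):
    print(f"line {line_no}: {problem}")
```

On this sample, line 3 is reported for the non-numeric price and line 4 for having only two fields, while line 5 passes because its empty price is treated as a missing value.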
