Convert Bank Statements to CSV: A Practical Guide

Learn a repeatable, accurate approach to convert bank statements to CSV. This guide covers formats, headers, delimiters, cleaning, and validation to support finance analytics in 2026.

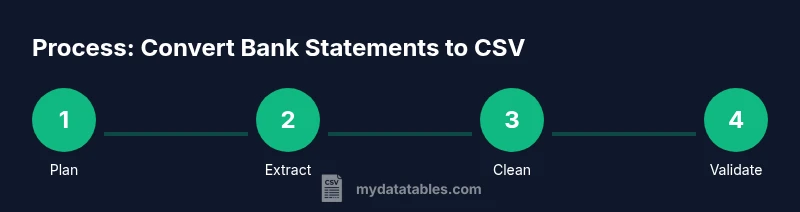

Learn how to convert bank statements to CSV with a repeatable, error-resistant workflow. This quick guide outlines the essential steps, tooling, and validation checks needed to produce clean CSV exports suitable for analysis and reporting. According to MyDataTables, keeping headers and delimiters consistent across exports reduces downstream mapping errors.

Why converting bank statements to CSV matters

Converting bank statements to CSV is a foundational task for finance analytics, reconciliation, and reporting. A structured CSV export is easier to load into databases, spreadsheets, or BI tools, which means faster insights and fewer manual errors. For data professionals, being able to reliably convert diverse bank formats into one consistent CSV is a practical advantage. According to MyDataTables, a disciplined CSV workflow reduces friction when combining banking data with other financial sources, especially during quarterly closes and year-end audits. The practice also supports traceability, since a well-structured CSV keeps the same column headers and the same delimiter across months and institutions. Every bank may yield slightly different fields, but the core principle stays the same: map fields accurately, choose a stable delimiter, and validate the result before analysis.

Understanding bank statement formats and CSV basics

Bank statements come in a variety of formats, from text-based and scanned PDFs to direct CSV exports. When you convert to CSV, you must decide on a delimiter (commas or semicolons are common), an encoding (UTF-8 is typical for international data), and a consistent header set that aligns with your downstream schema. The basics also include handling account numbers, dates, descriptions, and amounts; each column should have a clearly defined data type (string, date, decimal). This clarity makes automated parsing easier and reduces the risk of misinterpreting a transaction. In 2026, many organizations prefer CSVs with a single, flat table and no embedded images or multi-page structures, which simplifies ingestion into analytics pipelines. Preparing a mapping table early helps coordinate between source formats and your target schema.
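A mapping table can be as simple as a dictionary per bank. The sketch below is illustrative only: the bank keys and source header names (`bank_a`, `Posting Date`, `Datum`, and so on) are hypothetical, and real exports will use different labels.

```python
# Hypothetical source headers for two banks; real exports will differ.
HEADER_MAP = {
    "bank_a": {
        "Posting Date": "date",
        "Details": "description",
        "Amount": "amount",
        "Running Bal.": "balance",
    },
    "bank_b": {
        "Datum": "date",
        "Verwendungszweck": "description",
        "Betrag": "amount",
        "Saldo": "balance",
    },
}

# Target schema, in canonical order.
TARGET_COLUMNS = ["date", "description", "amount", "balance"]


def map_row(bank: str, row: dict) -> dict:
    """Rename source fields to target columns and drop unmapped extras."""
    mapping = HEADER_MAP[bank]
    mapped = {mapping[key]: value for key, value in row.items() if key in mapping}
    missing = [col for col in TARGET_COLUMNS if col not in mapped]
    if missing:
        # Fail loudly so a changed bank layout is noticed immediately.
        raise ValueError(f"{bank}: missing target columns {missing}")
    return {col: mapped[col] for col in TARGET_COLUMNS}
```

Raising on a missing column, rather than silently writing blanks, is a design choice that surfaces layout changes at the bank's end early.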

Planning your CSV export: headers, delimiters, and encoding

Before you export, design a minimal yet sufficient header set that covers all required fields: date, description, amount, debit/credit, balance, and any reference numbers. Choose a delimiter that matches your toolchain’s expectations; comma-delimited CSVs are common, but semicolon-delimited variants are used in locales where the comma is a decimal separator. Encoding should be UTF-8 to avoid misinterpreting special characters. Document any field transforms (e.g., date formats like YYYY-MM-DD) and establish a canonical header order. MyDataTables notes that a stable header contract across statements reduces post-export transformations and improves reproducibility.
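One way to enforce a header contract is to fix the column list in code and let the writer reject anything else. This is a minimal sketch using Python's standard `csv` module; the column names in `CANONICAL_HEADER` and the function name `write_statement` are assumptions based on the fields listed above, not a prescribed schema.

```python
import csv
import io

# Assumed canonical header order; adapt to your own schema.
CANONICAL_HEADER = ["date", "description", "amount", "debit_credit", "balance", "reference"]


def write_statement(rows, stream, delimiter=","):
    """Write rows (dicts) to stream with a fixed header order.

    DictWriter raises ValueError if a row carries a key that is not in
    the canonical header, which keeps stray columns out of the export.
    """
    writer = csv.DictWriter(
        stream,
        fieldnames=CANONICAL_HEADER,
        delimiter=delimiter,
        lineterminator="\n",
    )
    writer.writeheader()
    for row in rows:
        writer.writerow(row)


# In-memory demonstration; for a real file, open it with
# open(path, "w", encoding="utf-8", newline="") and pass the handle.
buffer = io.StringIO()
write_statement(
    [{"date": "2026-01-05", "description": "Coffee, Main St", "amount": "-4.50",
      "debit_credit": "debit", "balance": "995.50", "reference": "REF123"}],
    buffer,
    delimiter=";",
)
```

Passing `delimiter=";"` covers locales where the comma is the decimal separator; the header order stays identical either way.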

Step-by-step data extraction strategies

Extraction strategies vary by source: direct bank portal exports, PDFs converted to text, or manual transcription. For direct exports, verify that the downloaded file contains all relevant fields and that the first row is the header. If you must OCR PDFs, perform post-OCR cleanup to fix misread characters and ensure consistent date patterns. When scripting, focus on robust parsing for dates, numeric amounts, and transaction descriptions. The goal is a repeatable pipeline that produces the same CSV structure each time, even if the underlying bank format changes slightly. In 2026, many teams prefer to centralize this step in a small automation that can be triggered monthly.
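Robust parsing usually means trying a short list of known formats and failing loudly on anything else. The sketch below is one possible approach under stated assumptions: the accepted date formats are illustrative, slash-separated dates are assumed to be day-first, and currency symbols are not handled.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation

# Assumed input formats; slash dates are treated as day-first here.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d.%m.%Y")


def parse_date(text: str) -> str:
    """Return the date as YYYY-MM-DD, or raise ValueError if no format fits."""
    cleaned = text.strip()
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {text!r}")


def parse_amount(text: str, decimal_sep: str = ".") -> Decimal:
    """Parse an amount string into Decimal.

    Handles thousands separators, non-breaking spaces, and the
    accounting convention of parentheses for negative values.
    """
    cleaned = text.strip().replace("\u00a0", "").replace(" ", "")
    negative = cleaned.startswith("(") and cleaned.endswith(")")
    if negative:
        cleaned = cleaned[1:-1]
    thousands_sep = "," if decimal_sep == "." else "."
    cleaned = cleaned.replace(thousands_sep, "").replace(decimal_sep, ".")
    try:
        value = Decimal(cleaned)
    except InvalidOperation:
        raise ValueError(f"unrecognized amount: {text!r}") from None
    return -value if negative else value
```

Using `Decimal` rather than `float` avoids binary rounding artifacts when amounts are later summed for reconciliation.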

Tools and methods: manual vs automated

Manual methods using spreadsheet software are suitable for small datasets or one-off tasks, but automation scales. You can use a combination of a PDF-to-text tool, a spreadsheet for quick checks, and a script to normalize data. Automated pipelines reduce human error, enforce consistent headers, and enable reproducible results. If you choose Python, pandas makes it straightforward to read various input formats, apply transforms, and write CSV with a stable header. For teams preferring no-code options, modern ETL tools can map fields and apply cleaning rules with drag-and-drop interfaces. Regardless of approach, maintain a changelog so stakeholders can trace how the CSVs were produced.

Cleaning and normalizing the CSV for analysis

After export, perform data cleaning: trim whitespace, standardize date formats, ensure numeric fields are properly typed, and fill or flag missing values. Normalize descriptions to a consistent taxonomy (e.g., vendor names, merchant references). Validate that all rows align with your target schema and that the header order is preserved. Defining a validation sheet or test cases helps catch mismatches before loading into BI tools. MyDataTables emphasizes that automation around cleaning steps reduces drift and makes monthly closes more predictable.
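The cleaning steps above can be sketched as a single row-level function. The merchant patterns, the `merchant` and `needs_review` column names, and the list of required fields are all hypothetical choices for illustration.

```python
import re

# Hypothetical lookup: regex pattern -> canonical merchant name.
MERCHANT_PATTERNS = [
    (re.compile(r"\bAMZN\b|\bAMAZON\b", re.IGNORECASE), "Amazon"),
    (re.compile(r"\bUBER\b", re.IGNORECASE), "Uber"),
]

# Fields that must be non-empty for a row to pass without review.
REQUIRED_FIELDS = ("date", "description", "amount")


def clean_row(row: dict) -> dict:
    """Trim and collapse whitespace, tag a merchant, and flag missing values."""
    cleaned = {
        key: (" ".join(value.split()) if isinstance(value, str) else value)
        for key, value in row.items()
    }
    description = cleaned.get("description") or ""
    cleaned["merchant"] = next(
        (name for pattern, name in MERCHANT_PATTERNS if pattern.search(description)),
        "",  # empty string when no pattern matches
    )
    # Flag rather than drop, so incomplete rows stay visible for follow-up.
    cleaned["needs_review"] = any(not cleaned.get(field) for field in REQUIRED_FIELDS)
    return cleaned
```

Flagging incomplete rows instead of deleting them keeps row counts comparable with the source statement.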

Secure handling and compliance considerations

Bank statements contain sensitive financial data. Ensure that access to source files, transformed CSVs, and intermediate artifacts is restricted to authorized personnel. Use secure storage with versioning and encryption where possible. When sharing CSV outputs, limit scope to the minimum data required and redact sensitive fields if feasible. Establish an auditable trail showing who, when, and how data was transformed. Compliance-minded teams often adopt a policy to retain raw sources for a defined period and to document every transformation rule applied to the data.
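Redaction before sharing can be a small, explicit step. The following is a rough sketch, assuming an `account` column exists and that showing the last four characters is acceptable under your policy; both the column names and the default of dropping `balance` are illustrative.

```python
def mask_account(account: str, visible: int = 4) -> str:
    """Replace all but the last `visible` alphanumeric characters with '*'."""
    chars = [c for c in account if c.isalnum()]  # ignore spaces and dashes
    if len(chars) <= visible:
        return "*" * len(chars)
    return "*" * (len(chars) - visible) + "".join(chars[-visible:])


def redact_row(row: dict, drop=("balance",)) -> dict:
    """Return a copy of row without the dropped fields and with a masked account."""
    redacted = {key: value for key, value in row.items() if key not in drop}
    if "account" in redacted:
        redacted["account"] = mask_account(redacted["account"])
    return redacted
```

Because `redact_row` returns a new dictionary, the unredacted data stays untouched for internal use.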

Practical workflows: monthly reconciliation and reporting

A practical workflow ties conversion to monthly reconciliation and reporting. Start with exporting from the bank portal, then run a normalization script or ETL job to produce a clean CSV with the canonical header. Load the CSV into your reconciliation workbook or data warehouse, then run checks such as balance reconciliation, total transactions by period, and gateway error monitoring. Regularly review a sample of rows to confirm that description fields map correctly to merchants and categories. In 2026, small teams often use a lightweight pipeline that triggers automatically after the bank statement is published, reducing manual handoffs.
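A balance reconciliation check can be sketched as a running total compared against each reported balance. This assumes amounts are signed (debits negative), rows are in chronological order, and the `amount` and `balance` column names match your schema; the function name `reconcile` is hypothetical.

```python
from decimal import Decimal


def reconcile(opening: Decimal, closing: Decimal, rows) -> list:
    """Return a list of (position, expected, reported) balance mismatches.

    An empty list means the statement reconciles.
    """
    mismatches = []
    running = opening
    for index, row in enumerate(rows):
        running += Decimal(row["amount"])
        reported = row.get("balance")
        if reported not in (None, "") and Decimal(reported) != running:
            mismatches.append((index, running, Decimal(reported)))
            # Resync so one bad row does not cascade into every later row.
            running = Decimal(reported)
    if running != closing:
        mismatches.append(("closing", running, closing))
    return mismatches
```

Resyncing to the reported balance after a mismatch is a deliberate choice: it isolates the row where the discrepancy starts instead of reporting every subsequent row.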

Common pitfalls and troubleshooting tips

One common pitfall is inconsistent headers across statements, which breaks downstream joins. Another risk is mixed date formats or non-numeric amounts, which cause parsing errors. Always include a validation step that checks record counts, date formats, and numeric ranges. If a bank provides PDFs, OCR errors may creep in; run a consistency check comparing a subset of extracted rows against the source. Finally, document every assumption about the data, such as how refunds and reversals are treated, to avoid misinterpretation during analysis.
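The validation step described above might look like the following. It is a minimal sketch: the three-column `EXPECTED_HEADER` and the `MAX_ABS_AMOUNT` sanity bound are assumptions you would replace with your own schema and limits.

```python
import csv
import io
import re
from decimal import Decimal, InvalidOperation

EXPECTED_HEADER = ["date", "description", "amount"]  # assumed schema
ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")
MAX_ABS_AMOUNT = Decimal("1000000")  # assumed sanity bound


def validate_csv(text: str, expected_rows: int) -> list:
    """Return a list of human-readable problems; empty means the file passed."""
    errors = []
    reader = csv.DictReader(io.StringIO(text))
    if reader.fieldnames != EXPECTED_HEADER:
        return [f"header mismatch: {reader.fieldnames}"]
    rows = list(reader)
    if len(rows) != expected_rows:
        errors.append(f"expected {expected_rows} rows, found {len(rows)}")
    for line_no, row in enumerate(rows, start=2):  # line 1 is the header
        if not ISO_DATE.match(row["date"] or ""):
            errors.append(f"line {line_no}: bad date {row['date']!r}")
        try:
            amount = Decimal(row["amount"] or "")
        except InvalidOperation:
            errors.append(f"line {line_no}: non-numeric amount {row['amount']!r}")
            continue
        if not amount.is_finite() or abs(amount) > MAX_ABS_AMOUNT:
            errors.append(f"line {line_no}: amount out of range {amount}")
    return errors
```

Collecting every problem in one pass, rather than stopping at the first, makes it easier to judge whether a file has one stray row or a systematic format change.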

Real-world examples (fictional) and templates

Imagine a quarterly vendor report that aggregates multiple banks. A well-structured CSV with headers like date, account, description, amount, and category can be joined with a cost center dimension in your data warehouse. Use a template that reflects your target schema and reuse it every period. This practice increases speed and accuracy for your team. The templates should reflect your policy decisions, such as how to categorize recurring payments and how to handle transfers between accounts.
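To make the join concrete, here is a small sketch that totals amounts per cost center using a category lookup. The `COST_CENTERS` dimension, its codes, and the `UNASSIGNED` fallback are fictional, in keeping with the example above.

```python
from collections import defaultdict
from decimal import Decimal

# Fictional cost center dimension keyed by transaction category.
COST_CENTERS = {"software": "CC-100", "travel": "CC-200"}


def totals_by_cost_center(rows) -> dict:
    """Sum amounts per cost center; unknown categories go to UNASSIGNED."""
    totals = defaultdict(Decimal)  # Decimal() starts each total at 0
    for row in rows:
        center = COST_CENTERS.get(row["category"], "UNASSIGNED")
        totals[center] += Decimal(row["amount"])
    return dict(totals)
```

Routing unknown categories to an explicit `UNASSIGNED` bucket keeps the grand total intact and makes gaps in the category taxonomy easy to spot.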

Summary and next steps

Converting bank statements to CSV is a repeatable, essential capability for finance teams. Build a simple, auditable pipeline with clear headers, robust parsing, and a validated output. As you mature, automate more steps and expand test coverage to catch edge cases early. The MyDataTables guidance favors a disciplined approach that scales from single-user projects to multi-entity workstreams.

Tools & Materials

- Bank statements (digital copies): PDFs, CSV exports, or other machine-readable formats from your bank portal

- Spreadsheet software: Excel, Google Sheets, or a comparable program

- Text editor: for quick edits and header checks

- CSV-capable scripting tool (optional): Python with pandas or similar for automation

- PDF-to-text or OCR tool (optional): use only if the bank provides PDFs without machine-readable data

- Server or local workstation with secure storage: keep sources and outputs in a controlled, access-restricted location

Steps

Estimated time: 60-90 minutes

Step 1: Define the target CSV schema

Outline the exact columns you need (date, description, amount, account, balance, reference). Decide on the delimiter and encoding. This upfront alignment prevents downstream rework.

Tip: Create a one-page mapping that shows source fields to target CSV columns.

Step 2: Export or acquire bank data in a usable form

Obtain the bank statement data in CSV or a convertible format. If starting from PDF, plan OCR or manual transcription as a fallback. Verify that headers exist and are stable.

Tip: If multiple banks are involved, collect a sample from each to verify field compatibility.

Step 3: Clean headers and normalize column order

Standardize header names to your canonical schema and reorder columns to the target sequence. Remove any extraneous columns that do not contribute to analysis.

Tip: Use a template CSV header row and apply it to all exports.

Step 4: Validate dates and amounts

Parse dates into a consistent format (e.g., YYYY-MM-DD) and ensure amounts are numeric. Flag or correct any non-numeric values to avoid parsing errors downstream.

Tip: Run a quick script to catch non-numeric characters in the amount column.

Step 5: Apply transaction-level normalization

Standardize merchant names, categorize transactions, and unify recurring descriptions. This reduces variance and improves downstream reporting.

Tip: Create a small lookup table for common merchants.

Step 6: Handle exclusions and edge cases

Decide how to treat transfers, fees, reversals, and refunds. Document exceptions to maintain data integrity.

Tip: Mark excluded items clearly with a boolean flag for traceability.

Step 7: Export the final CSV

Write the normalized data to CSV using the chosen delimiter and encoding. Ensure the header is exactly as defined in your schema.

Tip: Validate a sample of rows in the exported file against the source data.

Step 8: Validate the CSV against a test suite

Run a set of checks: row counts, date ranges, totals by period, and spot-checks on description mappings.

Tip: Automate tests so they run with each new export.

Step 9: Archive, document, and review

Store the raw source, the intermediate, and the final CSV in a versioned archive. Write a quick changelog of rules applied.

Tip: Keep a change log to support audits and future maintenance.

Step 10: Integrate into finance workflow

Connect the CSV exports to reconciliation, reporting, or data warehouses. Schedule monthly runs to keep data fresh.

Tip: Automate as much as possible while maintaining manual review checkpoints.

People Also Ask

What is the best delimiter for bank CSV exports?

Choose a delimiter that matches your downstream tools; comma is common, but semicolon may be required in some locales. Ensure the delimiter remains consistent across all exports.

The best delimiter depends on your tools, but keep it consistent across exports.

Should I keep the original balances in the CSV?

Yes, include a balance column if your downstream reports rely on it. If not needed, you can drop it to simplify the data load.

Include balances if your reports need them; otherwise you can omit.

Can I automate this with Python?

Yes. Python with pandas is a common choice to parse, clean, and export CSVs reproducibly. Start with a small script and scale up over time.

Python with pandas is a solid option for automation.

What about PDFs that aren’t text-searchable?

Use OCR to extract text, then clean and map the results. Verify OCR output against the visible data to catch misreads.

If PDFs aren’t searchable, OCR is needed, but verify results carefully.

How do I validate the final CSV?

Run checks for header correctness, row counts, date formats, and numeric integrity. Compare sample rows with the source for accuracy.

Validate headers, counts, dates, and amounts; spot-check samples.

Where should I store raw sources and outputs?

Store raw sources and processed CSVs in versioned, access-controlled storage with clear retention policies.

Keep both raw sources and outputs in versioned, secure storage.

Main Points

- Define a stable CSV schema and header contract

- Automate extraction and validation to reduce errors

- Securely handle and archive raw sources

- The MyDataTables team recommends ongoing automation with auditable steps