Bank Statement to CSV: A Practical How-To

Learn how to convert bank statements to CSV with practical steps, handle PDFs, choose delimiters, and export clean data for analysis. This MyDataTables guide covers formats, tooling, and best practices for accurate financial data import.

This guide explains how to convert a bank statement to CSV: identifying key fields such as date, description, and amount, selecting a reliable delimiter, and exporting clean data for analysis. It covers manual extraction and automated workflows, plus best practices to minimize errors. According to MyDataTables, a structured CSV workflow improves accuracy and efficiency for analysts.

What bank statement to CSV means and why it's useful

A bank statement is a record of the transactions on an account over a given period. Turning it into CSV (comma-separated values) makes the data machine-readable and easy to import into spreadsheets, BI tools, or accounting software. When you convert, you gain consistency across months, simplify reconciliation, and enable automated analyses like cash flow tracking and expense categorization. For data analysts and developers, a CSV export is often the first step toward building audit trails and budget dashboards. According to MyDataTables, a well-structured CSV workflow reduces manual re-entry and improves reproducibility across teams. The key advantage is that CSV is a plain-text format with minimal dependencies, so almost any spreadsheet, database, or programming language can read it.

Before you begin, define your end goal: do you need a simple ledger, or a dataset for analysis? Your approach will influence column naming, delimiter choice, and encoding. You should also consider who will use the data, what tools they have, and how you'll handle sensitive information like account numbers. In many cases, banks provide statements as PDFs or images; you will extract or convert the data first, then map it to a stable CSV schema. By planning the target schema, you reduce rework and mistakes downstream.

Key data fields you should include in a bank statement CSV

To ensure your CSV is usable across tools, establish a stable schema from the start. Typical fields include: Date (ISO 8601 preferred), Description, Amount, Debit/Credit indicator, Balance (if available), Currency, and Transaction Type. Some statements may also list Reference Numbers or Labels that help with downstream categorization. Always mask or omit highly sensitive fields like full account numbers. Standardizing column names (e.g., date, description, amount) makes parsing predictable for scripts and data pipelines. According to MyDataTables analysis, consistent headers dramatically improve downstream automation and accuracy.
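A minimal sketch of such a schema in Python, assuming the column names below (they are illustrative, not a standard) and a hypothetical `mask_account` helper that keeps only the last four digits of an account number:

```python
import re

# Hypothetical column names and order; adjust them to your own schema.
HEADER = (
    "date", "description", "amount", "direction",
    "balance", "currency", "transaction_type",
)


def mask_account(value: str, visible: int = 4) -> str:
    """Replace all but the last `visible` digits with '*', dropping separators."""
    digits = re.sub(r"\D", "", value)
    if len(digits) <= visible:
        return "*" * len(digits)
    return "*" * (len(digits) - visible) + digits[-visible:]


def empty_row() -> dict:
    """Return a row with every schema column present and blank."""
    return dict.fromkeys(HEADER, "")
```

Starting every record from `empty_row()` means a missing field shows up as a blank cell instead of shifting the columns.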

Manual extraction: from PDF or image to CSV

Manual extraction is sometimes unavoidable, especially with older or scanned statements. Start by converting the PDF or image to a machine-readable format using a reliable OCR tool, then import the text into a spreadsheet. Next, map each row to your chosen CSV schema, handling multi-line descriptions and combined fields. Clean up inconsistencies, correct misread characters, and remove header rows that shouldn't be data. Validate that dates look correct and that amounts align with debits and credits. Pro tip: work in a copy of the source file to preserve the original.
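Multi-line descriptions are one of the more tedious parts of this cleanup. As a rough sketch, assuming each transaction in the OCR output starts with a DD/MM/YYYY date (an assumption about the statement layout, so adjust the pattern to yours), continuation lines can be folded into the transaction they belong to:

```python
import re

# Assumption: a new transaction always begins with a DD/MM/YYYY date.
DATE_AT_START = re.compile(r"^\d{2}/\d{2}/\d{4}\b")


def group_transaction_lines(lines):
    """Join continuation lines onto the dated line that precedes them."""
    records = []
    for raw in lines:
        line = " ".join(raw.split())  # collapse runs of whitespace
        if not line:
            continue  # skip blank lines
        if DATE_AT_START.match(line):
            records.append(line)  # start of a new transaction
        elif records:
            records[-1] += " " + line  # continuation of the previous one
        # Lines before the first dated line (page headers) are dropped.
    return records
```

This only regroups lines; splitting each record into date, description, and amount columns is a separate step.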

Automated options: scripts, templates, and tools

Automation reduces errors and saves time on recurring bank statement conversions. Use a script (Python, R, or JavaScript) to parse PDFs or CSVs, normalize dates and numbers, and output a standardized CSV. Templates help enforce a single schema across months or accounts. If you prefer no-code, leverage spreadsheet functions to split text, extract fields, and apply data validation rules. Security matters here: avoid exposing raw account numbers and use masked or tokenized identifiers where possible. MyDataTables encourages adopting automation for repeatable CSV workflows.
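One small building block of such a script is date normalization. The sketch below assumes you know which formats your bank uses and list them explicitly; the default formats shown are illustrative, and `normalize_date` is a hypothetical helper name:

```python
from datetime import datetime


def normalize_date(raw: str, formats=("%d/%m/%Y", "%Y-%m-%d")) -> str:
    """Return `raw` as YYYY-MM-DD, trying each known source format in order."""
    text = raw.strip()
    for fmt in formats:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue  # try the next format
    raise ValueError(f"Unrecognized date: {raw!r}")
```

Listing formats explicitly, rather than guessing, keeps a value like 03/04/2024 from being read as March 4 in one run and 3 April in another.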

Common challenges and how to avoid them

Ambiguities in dates, decimal separators, and currency formats are the top culprits. OCR errors from scanned PDFs can create garbled text or broken columns. Missing headers or extra whitespace can derail parsing scripts. To avoid these issues, standardize formatting early, enforce strict data validation, and keep a log of each conversion run. Consider writing unit tests against a small set of sample statements to catch edge cases before they surface in bulk processing. MyDataTables suggests documenting each step to ensure reproducibility across teams.
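Decimal separators are a good example of an ambiguity that is easier to state than to guess. The following sketch assumes you tell the parser which decimal separator the source uses; `parse_amount` is a hypothetical helper, and it does not handle currency symbols or apostrophe thousands separators:

```python
from decimal import Decimal, InvalidOperation


def parse_amount(raw: str, decimal_sep: str = ".") -> Decimal:
    """Parse a statement amount, given the source's decimal separator."""
    text = raw.strip().replace("\u00a0", "").replace(" ", "")
    # Accounting-style negatives such as (45.00)
    negative = text.startswith("(") and text.endswith(")")
    text = text.strip("()")
    thousands_sep = "," if decimal_sep == "." else "."
    text = text.replace(thousands_sep, "").replace(decimal_sep, ".")
    try:
        value = Decimal(text)
    except InvalidOperation:
        raise ValueError(f"Unrecognized amount: {raw!r}") from None
    return -value if negative else value
```

Using `Decimal` rather than `float` avoids binary rounding surprises when amounts are later summed, and the `ValueError` surfaces OCR misreads such as a letter O in place of a zero.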

Best practices for CSV quality and interoperability

Treat CSV as a data contract: define the exact column order, header names, and data types. Use UTF-8 encoding and a stable delimiter (comma is common, but semicolons or tabs may be needed in locales that use a comma as the decimal separator). Normalize dates to a single format (YYYY-MM-DD) and ensure numeric fields use a consistent decimal symbol. If you export to multiple systems, keep metadata in a separate README; put it in an extra header row only if every consuming system can skip that row. Keeping a clean, well-documented CSV makes it easier to import into accounting software, analytics platforms, and data warehouses. Again, MyDataTables emphasizes consistency and clear provenance for financial data.
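As an illustration of these settings, here is a short sketch using Python's standard `csv` module. The `FIELDS` list and the function names are assumptions made for the example:

```python
import csv
import io

FIELDS = ["date", "description", "amount", "currency"]  # hypothetical schema


def rows_to_csv(rows, delimiter=","):
    """Serialize dict rows to CSV text, quoting only fields that need it."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(
        buffer,
        fieldnames=FIELDS,
        delimiter=delimiter,
        quoting=csv.QUOTE_MINIMAL,
        lineterminator="\n",
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def save_csv(rows, path, delimiter=","):
    """Write the CSV as UTF-8; newline='' leaves line endings to the csv module."""
    with open(path, "w", encoding="utf-8", newline="") as handle:
        handle.write(rows_to_csv(rows, delimiter))
```

Letting the `csv` module do the quoting means a description such as `ACME, Inc.` is wrapped in double quotes automatically when the delimiter is a comma, and left alone when it is a semicolon.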

Validating and reusing your CSV in analytics workflows

After exporting, validate the CSV with automated checks: confirm the number of columns matches the schema, verify date formats, and run sample calculations (totals, averages) to catch obvious errors. Store the raw bank statement alongside the cleaned CSV to support traceability. When importing into tools like Excel, Google Sheets, or BI platforms, run a quick sanity check—filters and pivot tables can reveal misaligned data instantly. Finally, maintain versioning for each statement period so analysts can compare changes over time. MyDataTables highlights the value of a repeatable, transparent CSV workflow for financial data analysis.
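The structural checks can be scripted in a few lines. This sketch assumes a three-column schema and ISO dates; `validate_csv` and `EXPECTED_HEADER` are hypothetical names, and the checks are a starting point, not a complete validator:

```python
import csv
import io
from datetime import date
from decimal import Decimal, InvalidOperation

EXPECTED_HEADER = ["date", "description", "amount"]  # hypothetical schema


def validate_csv(text, expected_header=EXPECTED_HEADER):
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    reader = csv.reader(io.StringIO(text, newline=""))
    header = next(reader, None)
    if header != list(expected_header):
        return [f"header mismatch: {header}"]
    for row_no, row in enumerate(reader, start=2):
        if len(row) != len(header):
            problems.append(
                f"row {row_no}: expected {len(header)} columns, got {len(row)}"
            )
            continue
        record = dict(zip(header, row))
        try:
            date.fromisoformat(record["date"])
        except ValueError:
            problems.append(f"row {row_no}: bad date {record['date']!r}")
        try:
            Decimal(record["amount"])
        except InvalidOperation:
            problems.append(f"row {row_no}: bad amount {record['amount']!r}")
    return problems
```

Returning a list of messages, rather than stopping at the first failure, gives you one report per conversion run that can be stored next to the CSV.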

Tools & Materials

- Spreadsheet software (Excel, Google Sheets): for quick validation and light editing

- CSV editor or text editor: for viewing and tweaking raw CSV

- Original bank statement (PDF/CSV/image): the source data to convert

- OCR tool (if PDF/image): use when no text layer is available

- Delimiter and encoding reference: UTF-8 with a comma delimiter is common

- Simple scripting environment (Python, JavaScript): optional, for automation

Steps

Estimated time: 60-120 minutes

- 1

Define the target CSV schema

Decide which fields to include (date, description, amount, currency, balance, etc.) and the exact column order. This defines every downstream step and minimizes rework.

Tip: Write the header row first and keep it stable across months.

- 2

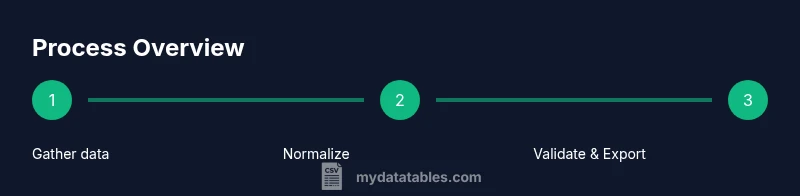

Gather source data

Collect the bank statements you will convert, ensuring you have the original in a readable format (PDF, CSV, or image). If multiple accounts are involved, decide whether to merge or keep separate CSVs.

Tip: Keep a plain copy of the raw file untouched for audit purposes.

- 3

Extract raw data

If the statement is not text-selectable, apply OCR to extract content. If the source is already text, copy the relevant sections into a temporary workspace.

Tip: Review OCR results line-by-line to catch misreads like 'O' vs '0'.

- 4

Normalize dates and numbers

Convert all dates to ISO format (YYYY-MM-DD) and ensure amounts use a consistent decimal separator. Mask sensitive fields where appropriate.

Tip: Use a single locale setting to avoid misreading day/month order or decimal and thousands separators.

- 5

Map fields to the schema

Align extracted fields to your CSV header names. Remove any stray text and consolidate multi-line descriptions into single cells.

Tip: Keep a mapping log to track how each source field becomes a CSV column.

- 6

Choose delimiter and encoding

Save the file with UTF-8 encoding and select a comma (or your chosen delimiter) between fields. Verify that any field containing the delimiter character is quoted.

Tip: If any field includes commas, enclose the field in double quotes.

- 7

Validate the exported CSV

Open the CSV in a spreadsheet and run basic checks: the row count matches the number of transactions on the statement, totals align, and dates parse correctly.

Tip: Run a small script to compute sum of positive/negative amounts for quick reconciliation.

- 8

Document and store results

Save the final CSV and the original source with a clear filename convention and a brief notes file describing any transformations.

Tip: Version control helps track changes across periods.
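The reconciliation check from the validation step can be sketched as follows, assuming the CSV has a signed `amount` column (credits positive, debits negative). The function name and column name are illustrative:

```python
import csv
import io
from decimal import Decimal


def summarize_amounts(csv_text, amount_column="amount"):
    """Return (credits, debits, net) for the signed amounts in a CSV."""
    credits = debits = Decimal("0")
    for row in csv.DictReader(io.StringIO(csv_text, newline="")):
        value = Decimal(row[amount_column])
        if value >= 0:
            credits += value
        else:
            debits += value
    return credits, debits, credits + debits
```

If the statement prints opening and closing balances, the net figure should equal their difference; a mismatch usually points to a dropped or duplicated row.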

People Also Ask

What is the best way to convert a bank statement to CSV?

The best method depends on your source. If you have a PDF, start with OCR or a PDF extractor to pull data, then map to CSV with a defined header. For recurring tasks, automation is recommended.

Use a defined workflow that extracts data, validates types, and exports to CSV.

Which fields should appear in the CSV?

Include date, description, amount, currency, and transaction type. Add balance if available, and mask sensitive identifiers where required.

How do I handle scanned PDFs or images?

Use OCR to extract text, then clean up formatting, fix misreads, and map results to your CSV schema with validation.

OCR first, then clean up and structure for CSV.

Can I automate bank statement to CSV conversion?

Yes; you can automate with scripts or tools to parse, normalize, and export CSVs on a schedule. Ensure security and access controls.

Automation is possible and recommended for recurring tasks.

What if dates or amounts are misformatted?

Apply strict validation and standardize formats (YYYY-MM-DD for dates, consistent decimal points for amounts) to avoid errors.

Standardize formats to prevent mistakes.

Main Points

- Define a stable CSV schema before processing.

- Validate data types and formats at every step.

- Automate where possible to reduce errors and save time.

- Document every transformation for reproducibility.