Convert TXT to CSV: A Practical Guide

Convert TXT to CSV with proper delimiters, encoding, and validation. This MyDataTables guide covers manual, Excel-based, and script methods for accurate data imports.

Convert TXT to CSV by choosing a delimiter, handling quotes, and selecting the right encoding. This guide shows manual and automated methods, including Excel imports and Python scripts, to produce clean, import-ready CSV files. You’ll learn practical steps, common pitfalls, and best practices for batch conversions, so the data you rely on stays accurate across tools and pipelines.

Why TXT to CSV matters in data workflows

According to MyDataTables, converting TXT to CSV is a foundational step in data ingestion. TXT files often come from logs, exports, or semi-structured records that use a delimiter or fixed-width fields. CSV offers a simple, portable structure that most databases, analytics tools, and BI platforms can read reliably. When you convert TXT to CSV, you create a consistent tabular format with a header row and one record per row, which suits batch imports. CSV itself stores no type information, so data types are assigned by the importing tool. This standardization reduces manual editing later, minimizes misalignment between columns, and makes downstream validation easier. In this guide, we explore both manual and automated options, explain how to detect the right delimiter, and provide practical checks to ensure your resulting CSV is ready for analysis or sharing. By understanding the common pitfalls and best practices, you can maintain data fidelity as you move between TXT sources and CSV targets.

Common TXT formats and CSV expectations

TXT input can range from simple delimited lines to free-form text blocks with embedded delimiters. The key to a successful TXT-to-CSV conversion is choosing a delimiter that does not appear inside field values (or quoting every field that contains it), handling quoted fields, and preserving header rows. CSVs typically use commas, but semicolons are common in locales that use the comma as a decimal separator, and tabs or pipes often appear in logs and exports. If your TXT uses fixed-width fields, you may need to parse by character positions rather than a delimiter. MyDataTables recommends starting with a small sample to identify edge cases: lines with embedded quotes, multi-line fields, or missing values. After choosing the delimiter, implement a consistent escaping strategy and decide how you will handle empty cells during import. These decisions influence how you configure tools and scripts later.
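As a rough illustration of inspecting a sample, the sketch below guesses the delimiter with Python's standard `csv.Sniffer` and slices fixed-width lines by character position. The helper names `detect_delimiter` and `split_fixed_width`, the candidate delimiter set, and the column widths are assumptions made for this example, not part of any particular tool.

```python
import csv

def detect_delimiter(sample, candidates=",;\t|"):
    """Guess the delimiter used in a text sample (a few dozen lines is usually enough)."""
    try:
        # Sniffer only considers the characters listed in `candidates`.
        return csv.Sniffer().sniff(sample, delimiters=candidates).delimiter
    except csv.Error:
        # Sniffer could not decide: fall back to the candidate that
        # appears most often in the first line of the sample.
        first_line = sample.splitlines()[0] if sample else ""
        return max(candidates, key=first_line.count)

def split_fixed_width(line, widths):
    """Split one fixed-width line into trimmed fields, given each column's width."""
    fields, start = [], 0
    for width in widths:
        fields.append(line[start:start + width].strip())
        start += width
    return fields

if __name__ == "__main__":
    print(repr(detect_delimiter("id|name|city\n1|Ana|Lisbon\n2|Bo|Oslo\n")))
    print(split_fixed_width("0001Ana   Lisbon", [4, 6, 6]))
```

Treat the guess as a starting point and confirm it against the edge cases in your sample, since a heuristic can be fooled by short or irregular input.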

Manual conversion in Excel or Google Sheets

Manual conversion is often fastest for small datasets or one-off tasks. In Excel, start by importing the TXT file via Data > From Text/CSV, choose the correct delimiter and file origin (encoding), and confirm in the preview that quoted fields stay in a single column. In Google Sheets, use File > Import > Upload, then set the separator type to match your delimiter; Data > Split text to columns can split a column that arrived as a single field. Ensure the first row becomes headers, and review a few rows for unusual characters. Save the result as a .csv file with UTF-8 encoding to preserve special characters (in Excel, choose the "CSV UTF-8 (Comma delimited)" file type). This method requires careful review but is excellent for quick validation and minor edits.

Scripted conversions: Python and command-line options

For larger or recurring conversions, a small script offers repeatability and accuracy. Python’s csv module handles quoted fields and escaping robustly, and you can parameterize the delimiter to match your TXT input. A simple script reads rows from the TXT file and writes them to a CSV file, preserving headers when present. If your TXT uses tabs or other delimiters, adjust the delimiter value accordingly. Command-line tools such as awk or PowerShell can also perform straightforward splits when the input format is consistent and no field contains the delimiter inside quotes. This approach scales and minimizes human error.
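A minimal sketch of such a script follows, using only the standard `csv` module. The function name `txt_to_csv`, the tab default for the input delimiter, and the file names in the usage line are assumptions for illustration.

```python
import csv

def txt_to_csv(src_path, dst_path, delimiter="\t", encoding="utf-8"):
    """Read a delimited TXT file and write it as a comma-separated CSV.

    Returns the number of rows written (the header row, if any, is counted).
    """
    rows_written = 0
    # newline="" lets the csv module handle line endings and
    # line breaks embedded in quoted fields on its own.
    with open(src_path, newline="", encoding=encoding) as src, \
         open(dst_path, "w", newline="", encoding="utf-8") as dst:
        reader = csv.reader(src, delimiter=delimiter)
        writer = csv.writer(dst)  # defaults: comma delimiter, quote only when needed
        for row in reader:
            writer.writerow(row)
            rows_written += 1
    return rows_written

if __name__ == "__main__":
    import sys
    # Hypothetical usage: python txt_to_csv.py input.txt output.csv
    print(txt_to_csv(sys.argv[1], sys.argv[2]), "rows written")
```

Because rows are streamed one at a time, memory use stays flat even for large inputs. A header row passes through unchanged as the first row.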

Batch conversions and automation

Automation is essential for datasets that update regularly. Create a batch job that processes all TXT files in a folder, applies the same delimiter and encoding, and outputs corresponding CSV files in a separate destination. Schedule the job with your operating system’s task scheduler or use a CI/CD pipeline for enterprise workflows. Include logging to capture failed files and a retry mechanism. When automating, keep your scripts modular—separate delimiter handling, encoding, and validation—so you can swap components without rewriting the entire process.
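One possible shape for such a batch job is sketched below. The function name `convert_folder`, the logger name, and the `*.txt` pattern are assumptions. Retries and scheduling are deliberately left out, since they depend on your environment.

```python
import csv
import logging
from pathlib import Path

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("txt2csv")  # hypothetical logger name

def convert_folder(src_dir, dst_dir, delimiter="\t", encoding="utf-8"):
    """Convert every *.txt in src_dir to a same-named .csv in dst_dir.

    Returns two lists of file names: (converted, failed).
    """
    src_dir, dst_dir = Path(src_dir), Path(dst_dir)
    dst_dir.mkdir(parents=True, exist_ok=True)
    converted, failed = [], []
    for txt_path in sorted(src_dir.glob("*.txt")):
        csv_path = dst_dir / (txt_path.stem + ".csv")
        try:
            with open(txt_path, newline="", encoding=encoding) as src, \
                 open(csv_path, "w", newline="", encoding="utf-8") as dst:
                csv.writer(dst).writerows(csv.reader(src, delimiter=delimiter))
            converted.append(txt_path.name)
            log.info("converted %s", txt_path.name)
        except (OSError, UnicodeDecodeError, csv.Error) as exc:
            # Record the failure and keep going; a partially written
            # CSV may remain in dst_dir and should be cleaned up or retried.
            failed.append(txt_path.name)
            log.error("failed %s: %s", txt_path.name, exc)
    return converted, failed
```

Returning the failed file names gives a scheduler or wrapper script something concrete to retry or report.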

Validation, quality checks, and best practices

After conversion, validate the CSV by checking row counts, column counts, and a sample of rows for data integrity. Verify that the headers match the expected schema and that encoding preserved special characters. Use a simple import into a test environment to confirm compatibility with downstream tools. Maintain a changelog of the delimiter choices, encoding, and any manual tweaks for future reference. By applying these checks consistently, you reduce import errors and improve data trustworthiness across platforms.
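The header and column-count checks can be sketched as a small function that returns a list of problems. The name `validate_csv` and the message wording are assumptions. The sketch loads the whole file into memory, so it suits samples and moderately sized files.

```python
import csv

def validate_csv(path, expected_header=None, encoding="utf-8"):
    """Return a list of human-readable problems; an empty list means the checks passed."""
    problems = []
    with open(path, newline="", encoding=encoding) as f:
        rows = list(csv.reader(f))
    if not rows:
        return ["file is empty"]
    header = rows[0]
    if expected_header is not None and header != list(expected_header):
        problems.append(f"header mismatch: {header!r}")
    # Record numbers count parsed rows, not physical lines, so they can
    # differ from editor line numbers when fields contain line breaks.
    for record_no, row in enumerate(rows[1:], start=2):
        if len(row) != len(header):
            problems.append(
                f"record {record_no}: expected {len(header)} fields, got {len(row)}"
            )
    return problems
```

Comparing `len(rows)` with the number of records in the source TXT is a natural extra check when the source has one record per line.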

Tools & Materials

- Text editor (Notepad++, VS Code, or any code-friendly editor for quick edits)

- Spreadsheet software (Excel, Google Sheets, or LibreOffice Calc)

- Python 3.x (optional; for script-based conversion with the csv module)

- Command-line shell (PowerShell, Terminal, or Bash)

- TXT data file (source file to convert)

- CSV-compliant delimiter reference (e.g., comma, semicolon, tab, pipe)

- Regex tester (optional; useful for edge-case field parsing)

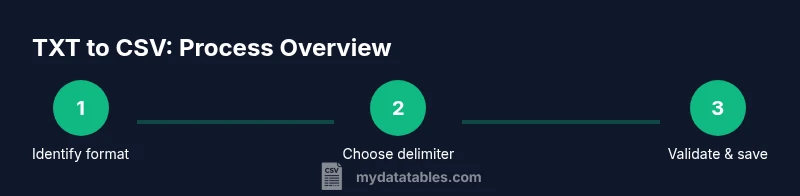

Steps

Estimated time: 1-2 hours

1. Identify TXT structure

Open the TXT file and inspect the first 20-50 lines to determine whether fields are delimited or fixed-width. Note the most common separators and whether quotes enclose fields. This step sets the direction for the entire conversion.

Tip: Sample several lines to catch inconsistencies like embedded delimiters inside quotes.

2. Decide target CSV structure

Decide if your CSV will include a header row, which delimiter to use in the CSV, and how to handle empty values. Establish a consistent schema to protect against downstream misalignment.

Tip: If you’ll import to a database, align headers with table column names.

3. Choose a conversion method

Select manual (Excel/Sheets) for small datasets or scripts for large batches. Document the chosen method so teammates reproduce results.

Tip: Keep a sample TXT/CSV pair to verify results after each method.

4. Perform the conversion

If using Excel, import the TXT with the correct delimiter and quotes handling, then Save As CSV (UTF-8). If scripting, run the script and confirm no data loss during write.

Tip: Validate a few rows in the CSV to ensure fidelity before processing the whole file.

5. Clean and normalize

Address problematic fields: unescaped quotes, multi-line cells, and inconsistent whitespace. Normalize line endings and trim unnecessary spaces to ensure clean data.

Tip: Optionally apply a post-processor to standardize date and number formats.

6. Validate and save final CSV

Run a quick validation pass, check column counts, and verify encoding. Save the final version with a clear filename and extension.

Tip: Keep a changelog for each conversion batch.

7. Document and automate

Document the delimiter, encoding, and method used. If batch processing is needed, create a reusable script or workflow and schedule it.

Tip: Modularize steps to ease future updates or format changes.
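The clean-and-normalize step in the list above can be kept as its own small module, in the spirit of the modular layout suggested in the last tip. The sketch below trims whitespace around each field, normalizes line breaks inside fields to LF, and writes rows with LF line endings. The names `normalize_rows` and `rows_to_csv_text` are assumptions, and whether trimming is safe depends on your data, since leading or trailing spaces can be meaningful in some fields.

```python
import csv
import io

def normalize_rows(rows):
    """Yield rows with CR/CRLF inside fields converted to LF and outer whitespace trimmed."""
    for row in rows:
        yield [
            field.replace("\r\n", "\n").replace("\r", "\n").strip()
            for field in row
        ]

def rows_to_csv_text(rows):
    """Serialize normalized rows to CSV text that uses LF as the row terminator."""
    buf = io.StringIO()
    # csv.writer defaults to CRLF row endings; lineterminator overrides that.
    csv.writer(buf, lineterminator="\n").writerows(normalize_rows(rows))
    return buf.getvalue()

if __name__ == "__main__":
    print(rows_to_csv_text([["  id ", "note"], ["1", ' say "hi" ']]), end="")
```

The writer doubles any quote characters inside a field and wraps that field in quotes, which covers the unescaped-quote case as long as the input was parsed correctly in the first place.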

People Also Ask

What is the main difference between TXT and CSV formats?

TXT is plain text that may or may not be structured, while CSV is a delimited, tabular format. CSV data is organized into rows and columns, making it easier to import into spreadsheets and databases.

TXT is plain text; CSV is a delimited table. CSV is easier to import into tools like spreadsheets and databases.

Which delimiter should I use when converting TXT to CSV?

Choose a delimiter that does not appear in the data fields. Common options include commas, semicolons, or tabs. If data contains those characters, consider quoting rules and an alternate delimiter.

Pick a delimiter that doesn't appear in your data, and handle quotes properly.

Can I convert TXT to CSV using Excel?

Yes. Import the TXT using Excel's Text/CSV import feature, specify the delimiter, and then save as CSV with UTF-8 encoding. This works well for smaller files.

You can import with Excel's Text/CSV tool and save as CSV.

How do I handle quoted fields and multi-line cells?

Use a proper CSV parser or Excel's import options to handle quotes. For multi-line cells, ensure the parser treats line breaks inside fields as part of the field, not as a row terminator.

Handle quotes and multi-line cells with proper parsers or import options.
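To make the difference concrete, this short sketch compares a naive line split with Python's `csv.reader` on a pipe-delimited sample. The sample data is invented for illustration.

```python
import csv
import io

# Three records: a header, one with a line break inside a quoted field,
# and one with the delimiter inside a quoted field.
raw = 'id|note\n1|"first line\nsecond line"\n2|"contains | pipe"\n'

naive_lines = raw.splitlines()  # treats every line break as a row boundary
rows = list(csv.reader(io.StringIO(raw), delimiter="|"))

print(len(naive_lines))  # 4
print(len(rows))         # 3
print(rows[1])           # ['1', 'first line\nsecond line']
print(rows[2])           # ['2', 'contains | pipe']
```

The parser keeps the quoted line break and the quoted pipe inside their fields, while the naive split produces an extra, broken row.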

Is there a recommended approach for batch TXT-to-CSV conversions?

Yes. Use a script (Python) or a shell workflow to process multiple TXT files with the same delimiter and encoding. Include logging and error handling for reliability.

Batch with a script, and log any errors for reliability.

What encoding should I choose for CSV exports?

UTF-8 is typically recommended for CSV exports to preserve special characters and ensure compatibility across systems.

UTF-8 is best for CSV encoding.
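If the source TXT is in a legacy encoding, it can be re-encoded before or during conversion. The sketch below assumes a Windows-1252 source (`cp1252`), which is only an example; the function name `reencode_to_utf8` and the `add_bom` flag are also assumptions. Writing a byte order mark via Python's `utf-8-sig` codec helps some spreadsheet applications recognize the file as UTF-8, though other tools may treat the mark as stray characters.

```python
def reencode_to_utf8(src_path, dst_path, src_encoding="cp1252", add_bom=False):
    """Copy a text file, decoding it from src_encoding and writing it as UTF-8."""
    target = "utf-8-sig" if add_bom else "utf-8"
    # newline="" on both sides keeps the original line endings untouched.
    with open(src_path, newline="", encoding=src_encoding) as src, \
         open(dst_path, "w", newline="", encoding=target) as dst:
        # Read in chunks so large files are not loaded into memory at once.
        for chunk in iter(lambda: src.read(64 * 1024), ""):
            dst.write(chunk)
```

A wrong `src_encoding` guess can either raise a decode error or silently produce wrong characters, so spot-check accented or non-Latin text after conversion.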


Main Points

- Identify the TXT structure before choosing a method

- Choose a delimiter that minimizes conflicts with data

- Validate the converted CSV against a sample and target schema

- Automate batch conversions to ensure reproducibility