CSV Merge: A Practical Guide for Combining CSV Files

Learn practical CSV merge techniques to combine multiple CSV files quickly and accurately. This guide covers schemas, deduplication, tooling, and validation for robust data integration.

CSV merge lets you combine data from multiple CSV files into one unified dataset. This guide walks through practical approaches—from simple column alignment in spreadsheets to programmatic merges with Python or command-line tools. You’ll learn how to handle headers, align schemas, and validate results after merging. According to MyDataTables, a disciplined merge process saves time, improves data quality, and reduces reconciliation errors.

What CSV merge is and why it matters

Merging CSV files is a common data integration task that helps analysts combine multiple data sources into a single view for reporting, analysis, and data quality checks. At its core, a merge aligns rows based on a key (often an ID or timestamp) and appends columns from each file to create a richer dataset. The challenge is ensuring that the schemas match, that you preserve data integrity, and that you handle conflicts or missing values gracefully. According to MyDataTables, successfully merging CSVs starts with a clear plan, a clean set of input files, and a reproducible workflow. When done well, a CSV merge reduces manual copy-paste work, minimizes human error, and makes downstream analytics more reliable. You’ll encounter several realistic scenarios: merging files with identical schemas, aggregating data from multiple periods, and performing left or inner joins, where the choice of join decides which rows survive in the output. Throughout this section and the rest of the guide, you’ll see practical examples, best-practice patterns, and concrete commands you can adapt to your environment, whether you work in Excel, Python, or a shell.

Data schemas, headers, and matching columns

A successful CSV merge starts with schema alignment. Before you merge, take stock of each file’s headers, data types, and sample rows. If one file uses “date” and another uses “order_date,” you’ll need to normalize names or map them to a canonical schema. Consistent encoding (prefer UTF-8), delimiter handling, and a shared key column are non-negotiables for predictable results. MyDataTables recommends creating a small, representative merge example to validate column alignment and key matching. You should also decide how to treat columns that exist in only some of the files: will you fill the gaps with nulls, or leave those columns out of the merge altogether? Documented conventions help avoid confusion as your dataset grows. In practice, you’ll often create a merged schema that includes the common columns, plus selective fields from each source. This reduces ambiguity and makes your pipeline easier to reproduce for teammates and automation. The goal is to ensure that every merged row has complete, well-typed values where possible, and clearly flagged gaps where data is missing.
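As a rough illustration of header normalization, the sketch below maps variant column names to a canonical set while reading. It uses only Python's standard csv module. The `HEADER_MAP` dictionary, the `read_normalized` helper, and the sample column names are assumptions made up for this example, not part of any particular tool.

```python
import csv
import io

# Hypothetical mapping from source-specific header variants to canonical names.
HEADER_MAP = {"date": "order_date", "id": "order_id", "order id": "order_id"}


def read_normalized(handle):
    """Read a CSV and return rows keyed by canonical, lower-cased header names."""
    rows = []
    for raw in csv.DictReader(handle):
        row = {}
        for name, value in raw.items():
            key = name.strip().lower()
            # Fall back to the cleaned name when no mapping is defined.
            row[HEADER_MAP.get(key, key)] = value
        rows.append(row)
    return rows


# In-memory samples stand in for real files. For files on disk you would
# typically use open(path, newline="", encoding="utf-8") instead.
file_a = io.StringIO("id,date,amount\n1,2024-01-05,10.50\n")
file_b = io.StringIO("Order ID,order_date,region\n1,2024-01-05,EU\n")

rows_a = read_normalized(file_a)
rows_b = read_normalized(file_b)

# Columns present in both inputs after normalization.
shared_columns = sorted(set(rows_a[0]) & set(rows_b[0]))
print(shared_columns)
```

Keeping the mapping in one dictionary means a new header variant is a one-line change, and the dictionary itself documents the canonical schema.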

Deduplication and conflict resolution strategies

Duplicate keys are a common pitfall in CSV merge projects. When multiple rows share the same key, you must choose whether to aggregate, prioritize, or preserve duplicates depending on the business rule. A robust approach is to define a primary key and secondary keys, then specify how to handle conflicting fields (for example, choosing the most recent timestamp or the source with the highest trust score). Conflict resolution should be deterministic and auditable. MyDataTables highlights the importance of logging decisions and creating a per-merge audit trail. If two sources provide different values for the same field, you can implement rules such as: prefer non-null values, then the source with higher data quality, or an explicit merge strategy like taking the latest row by a date field. For relational-style merges, you may also define one-to-many relationships where appropriate and keep track of the provenance of each piece of data. Documenting rules upfront prevents inconsistent results as new data sources are added.
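One possible way to make such rules deterministic is sketched below: the newest row wins, empty fields are back-filled from older rows, and every overwritten value is recorded in an audit list. The function name `resolve_duplicates`, the field names, and the sample rows are illustrative assumptions. Dates are assumed to be ISO formatted (YYYY-MM-DD).

```python
from datetime import date


def resolve_duplicates(rows, key="id", date_field="updated_at"):
    """Collapse rows that share a key.

    Newer rows overwrite older ones, but empty values never overwrite
    existing data. Returns (merged_rows, audit_log).
    """
    merged = {}
    audit = []
    # Process oldest first so later (newer) rows take precedence.
    for row in sorted(rows, key=lambda r: date.fromisoformat(r[date_field])):
        current = merged.get(row[key])
        if current is None:
            merged[row[key]] = dict(row)
            continue
        for field, value in row.items():
            if value in ("", None):
                continue  # prefer existing non-null values
            old = current.get(field)
            if field != date_field and old not in ("", None, value):
                # Record the conflict so the decision can be reviewed later.
                audit.append((row[key], field, old, value))
            current[field] = value
    return list(merged.values()), audit


rows = [
    {"id": "1", "email": "old@example.com", "phone": "555-0100", "updated_at": "2024-01-01"},
    {"id": "1", "email": "new@example.com", "phone": "", "updated_at": "2024-03-01"},
    {"id": "2", "email": "b@example.com", "phone": "", "updated_at": "2024-02-01"},
]
deduped, audit_log = resolve_duplicates(rows)
print(deduped)
print(audit_log)
```

In this sample, key 1 ends up with the newer email and the older phone number, and the audit log holds one entry for the replaced email.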

Tools: built-in commands, Python, and GUI options

There’s no one-size-fits-all tool for CSV merge. For quick, one-off tasks, spreadsheet software like Excel or Google Sheets can be enough if files are small and headers are aligned. For larger datasets or repeatable workflows, the csvkit command-line tools offer scriptable merging with transparent behavior: csvjoin performs key-based joins and csvstack appends files that share a schema, while csvcut and csvgrep select columns and rows before or after the merge. Python, especially with pandas, provides powerful merging capabilities (merge, join, concat) and excellent handling of missing values and data types. If you prefer a GUI, there are data integration tools that visually map keys and outputs, though they may introduce additional setup overhead. MyDataTables suggests starting with a simple script or csvkit command to verify the basic merge, then migrating to a robust Python solution for production. Regardless of tool choice, keep your input files clean, keep a copy of the original, and implement a dry-run mode to preview the results before committing.

Pro tip: for reproducibility, store your commands in a version-controlled script and parameterize the input paths.
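A minimal pandas sketch of a parameterized merge with a dry-run mode might look like the following. The `merge_csvs` helper, its parameters, and the sample data are assumptions for illustration. `read_csv`, `DataFrame.merge`, and `to_csv` are standard pandas calls.

```python
import io

import pandas as pd


def merge_csvs(left_src, right_src, key, how="left", dry_run=True, out_path=None):
    """Merge two CSV sources on `key`. In dry-run mode nothing is written."""
    left = pd.read_csv(left_src)
    right = pd.read_csv(right_src)
    merged = left.merge(right, on=key, how=how)
    if dry_run:
        # Preview only: report sizes and show the first rows.
        print(f"{len(left)} + {len(right)} input rows -> {len(merged)} merged rows")
        print(merged.head())
    else:
        if out_path is None:
            raise ValueError("out_path is required when dry_run is False")
        merged.to_csv(out_path, index=False)
    return merged


# In-memory samples stand in for file paths, which read_csv also accepts.
customers = io.StringIO("customer_id,name\n1,Ada\n2,Grace\n3,Linus\n")
orders = io.StringIO("customer_id,total\n1,20.0\n2,35.5\n")

preview = merge_csvs(customers, orders, key="customer_id")
```

Because the inputs, key, and join type are parameters, the same script can be rerun on new files without editing the merge logic.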

Step-by-step approach to merge CSV files safely

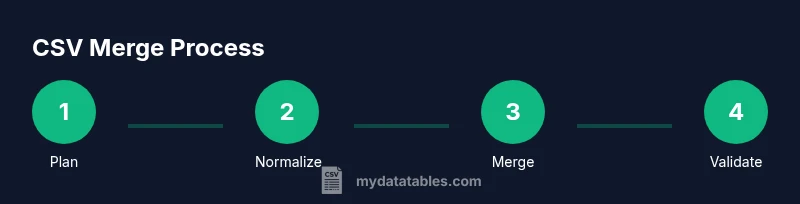

If you plan to merge several CSVs, a careful, repeatable process reduces errors and accelerates delivery. Start by outlining a merging strategy with a primary key, target output schema, and validation checks. Then, prepare your environment and inputs, execute the merge in a controlled environment, validate the results, and finally publish or export the merged data.

- Plan your approach with a simple mock merge using a few rows from each file.

- Normalize headers and data types before merging.

- Choose a merge method (inner, left, full) based on the use case and data quality.

- Execute the merge using a script, a dedicated tool, or a SQL-like merge if the data is in a database.

- Validate counts, sample rows, and key integrity after the merge.

- Save outputs with clear naming conventions and keep the original inputs intact for traceability.

Tip: Always test merges on a small dataset before scaling to full files. This saves time and protects against large-scale data corruption.
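To see how the choice of merge method changes the result, a small mock merge like the one below can help. The data frames are invented sample data. Note that pandas calls a full join "outer", and `indicator=True` adds a `_merge` column showing where each row came from.

```python
import pandas as pd

# Hypothetical sample data: order 1 has no region, and region row 4 has no order.
primary = pd.DataFrame({"order_id": [1, 2, 3], "amount": [10.0, 20.0, 30.0]})
lookup = pd.DataFrame({"order_id": [2, 3, 4], "region": ["EU", "US", "APAC"]})

row_counts = {}
for how in ("inner", "left", "outer"):
    merged = primary.merge(lookup, on="order_id", how=how, indicator=True)
    row_counts[how] = len(merged)
    # Rows that exist on only one side of the join.
    unmatched = merged[merged["_merge"] != "both"]
    print(f"{how}: {len(merged)} rows, {len(unmatched)} without a match")
```

With this sample, the inner join keeps 2 rows, the left join 3, and the full join 4, which makes the trade-off between completeness and match quality easy to inspect before running on real files.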

Handling missing values and type conversion during merge

When merging, missing values can propagate across columns, producing misleading results. A reliable approach is to explicitly define how to handle missing values for each column, such as filling with neutral placeholders or leaving as nulls for downstream imputation. Numeric columns should be coerced to a consistent data type to prevent mixed-type errors during analysis. If you’re using Python, you can use pandas’ fillna and astype methods to enforce schemas before the merge. When working in SQL-like environments, establish strict type casting rules and test for edge cases (e.g., empty strings vs. nulls). Document any conversions so downstream users understand how the merged data should be interpreted. In practice, you’ll often implement a post-merge cleaning pass to normalize formats (dates, numbers, and codes) across the entire dataset. This post-merge phase is essential to ensure that analytics, dashboards, and reports reflect accurate, comparable values across sources.
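The sketch below shows one way to enforce a schema with pandas before merging. The column names, the "NONE" placeholder, and the choice of which columns to fill are assumptions for this example. The nullable `Int64` dtype lets an integer column keep missing values instead of silently becoming float.

```python
import io

import pandas as pd

raw = io.StringIO(
    "order_id,quantity,discount_code,order_date\n"
    "1,3,,2024-01-05\n"
    "2,,SPRING,2024-02-05\n"
)

# Read the key as text so values like "007" would keep their leading zeros.
df = pd.read_csv(raw, dtype={"order_id": str})

# Leave missing quantities as nulls for downstream imputation.
df["quantity"] = df["quantity"].astype("Int64")

# Fill a categorical code with an explicit, documented placeholder.
df["discount_code"] = df["discount_code"].fillna("NONE")

# Parse dates with an explicit format rather than relying on inference.
df["order_date"] = pd.to_datetime(df["order_date"], format="%Y-%m-%d")

print(df.dtypes)
```

Applying the same conversions to every input file before the merge keeps key and value columns comparable across sources.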

Validation and quality checks after merge

Validation is the final guardrail for a CSV merge project. You want to confirm row counts align with expectations, column counts match the expected schema, and that key fields are unique where required. Simple checks include comparing the number of input rows to the merged output (for a left join against a lookup with unique keys, the output should have exactly as many rows as the primary file), verifying that all expected columns appear, and sampling rows to verify content. More advanced checks include verifying referential integrity (foreign keys exist in the related tables, if applicable) and running spot checks on value ranges, date formats, and categorical codes. Consider implementing automated tests that run whenever the merge script executes, returning a pass/fail signal and a summary log. MyDataTables emphasizes the value of an auditable merge trail: log inputs, decisions, and outputs so stakeholders can reproduce or review results later. By building these checks into your workflow, you reduce the likelihood of silently propagating bad data and make governance easier for data teams.
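A few of these checks can be automated with plain Python, as in the sketch below. The `validate_merge` function and the sample rows are illustrative assumptions. The row-count rule assumes a left join against a lookup with unique keys, so the output should match the primary file row for row.

```python
from collections import Counter


def validate_merge(primary_rows, merged_rows, key, expected_columns):
    """Return a list of failure messages. An empty list means the checks passed."""
    failures = []

    # Row count: a left join on a unique lookup key must not add or drop rows.
    if len(merged_rows) != len(primary_rows):
        failures.append(f"row count changed: {len(primary_rows)} -> {len(merged_rows)}")

    # Column presence: every expected column must appear in the output.
    present = set(merged_rows[0]) if merged_rows else set()
    missing = [c for c in expected_columns if c not in present]
    if missing:
        failures.append(f"missing columns: {missing}")

    # Key uniqueness: no key value may appear more than once.
    counts = Counter(row[key] for row in merged_rows)
    duplicates = sorted(k for k, n in counts.items() if n > 1)
    if duplicates:
        failures.append(f"duplicate keys: {duplicates}")

    return failures


primary = [{"id": "1"}, {"id": "2"}]
good = [
    {"id": "1", "name": "Ada", "total": "20"},
    {"id": "2", "name": "Grace", "total": ""},
]
bad = good + [{"id": "2", "name": "Grace", "total": "5"}]

good_report = validate_merge(primary, good, "id", ["id", "name", "total"])
bad_report = validate_merge(primary, bad, "id", ["id", "name", "total"])
print(good_report)
print(bad_report)
```

Returning messages instead of raising on the first problem gives a single summary that can be written to the merge log.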

Performance considerations when merging large CSV files

Large CSV merges can consume significant memory and CPU time if handled naively. When dealing with big datasets, use streaming or chunked processing to avoid loading entire files into memory. Pandas offers chunked reading with read_csv and can merge in chunks if needed; alternatively, use a tool designed for big data, such as PySpark or a database-backed workflow, when appropriate. If you’re sticking to Python, consider merging on a single pass with indexes and using generators to yield merged rows to an output file. If you must merge very large files with a simple tool, shell-based join or awk can be surprisingly fast for straightforward cases, but they lack built-in data-type awareness and robust error handling. Plan resource usage, set realistic memory caps, and profile your job under realistic loads. MyDataTables notes that scalable, well-documented pipelines are easier to optimize and less error-prone as data volumes grow.
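As a sketch of chunked processing, the example below streams a large file in pieces and joins each piece to a small lookup table. It assumes the lookup fits comfortably in memory, which is not true for every workload. The `merge_in_chunks` helper and the sample data are invented for illustration, and the tiny `chunksize` is only there to exercise several chunks.

```python
import io

import pandas as pd


def merge_in_chunks(big_src, lookup, key, out_handle, chunksize=100_000):
    """Left-join each chunk of `big_src` to `lookup` and append it to `out_handle`."""
    rows_written = 0
    for i, chunk in enumerate(pd.read_csv(big_src, chunksize=chunksize)):
        merged = chunk.merge(lookup, on=key, how="left")
        # Write the header only once, with the first chunk.
        merged.to_csv(out_handle, index=False, header=(i == 0))
        rows_written += len(merged)
    return rows_written


big = io.StringIO("sku,qty\nA,1\nB,2\nC,3\nA,4\nD,5\n")
lookup = pd.DataFrame({"sku": ["A", "B", "C"], "price": [1.5, 2.0, 3.25]})
out = io.StringIO()

total = merge_in_chunks(big, lookup, key="sku", out_handle=out, chunksize=2)
print(total)
print(out.getvalue())
```

Only one chunk plus the lookup is held in memory at a time, so peak memory depends on `chunksize` instead of the size of the large file.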

Real-world examples and pitfalls

In practice, CSV merge projects often start with small prototypes that reveal tricky issues such as mismatched header names, inconsistent encodings, or ambiguous key columns. A common pitfall is assuming that all input files share the same schema; even slight differences in column order or naming can cause misalignment. Another frequent issue is merging on an unstable key (like a date string whose format differs between files) without explicit formatting. A practical remedy is to define a canonical schema early, pre-process inputs to align headers and data types, and validate with a test merge before committing to full-scale processing. MyDataTables recommends keeping a changelog of decisions and maintaining a sandbox environment to test new data sources before integrating. The result is a robust, auditable process that minimizes downstream surprises and makes it easier to onboard new data sources in the future.

Next steps and best practices

With the fundamentals in place, you can institutionalize CSV merge as a repeatable workflow. Create a small, reusable library of merge recipes for common scenarios (identical schemas, multi-period consolidation, or incremental updates). Document conventions for keys, null handling, and data types, and store scripts in version control with clear README instructions. Establish a governance process that includes peer review of merge logic and regular audits of merged outputs. Finally, cultivate a habit of validating results with representative samples and automated checks. According to MyDataTables, consistency and reproducibility are the cornerstones of trustworthy data integration, so invest in clear standards and ongoing monitoring to keep your CSV merge practice reliable and scalable.

Tools & Materials

- Two or more CSV input files: files should have a primary key column for merging (e.g., id, order_id) and a common schema where possible.

- Text editor or IDE: for scripting or adjusting commands (VS Code, Sublime, etc.).

- Python 3.x installed: needed if you plan to use pandas or csv modules.

- Pandas library: for Python-based merges and data type handling.

- csvkit utilities (optional): useful for quick CLI joins (csvjoin, csvcut, etc.).

- Spreadsheet software (optional): Excel/Sheets handy for quick inspection on small datasets.

- Sample dataset for testing: a small subset helps you validate the merge before scaling.

Steps

Estimated time: 1-3 hours

1. Plan the merge approach

Define the merge key, target output schema, and the rules for handling missing values and conflicting fields. Write these decisions down so teammates can reproduce the process.

Tip: Document the chosen join type (inner/left/full) and the key columns before coding.

2. Normalize inputs

Ensure headers are consistent, encodings are UTF-8, and data types are aligned across files. Create a small sample to test changes.

Tip: Use a canonical header naming convention (e.g., use the same column name for date fields).

3. Choose a merge method

Decide on inner join, left join, or full outer join based on data quality and business requirements. Plan how to handle unmapped rows.

Tip: Left joins are common when you want to preserve all rows from the primary dataset.

4. Execute a dry run

Run a test merge on a subset of rows to verify column alignment and data integrity without overwriting your originals.

Tip: Capture a diff of inputs vs. outputs to spot anomalies.

5. Run the full merge

Perform the final merge using your chosen tool or script, ensuring you log inputs, decisions, and output paths.

Tip: Use version-controlled scripts to ensure reproducibility.

6. Validate results

Check row counts, column presence, sample values, and key uniqueness. Compare a few representative rows to the source files.

Tip: Automate a few of the checks to reduce human error.

7. Export and document

Save the merged file with a clear name indicating date and sources. Update documentation with the exact steps and parameters used.

Tip: Include a changelog entry for future audits.

8. Review and iterate

Periodically re-run the merge when new data sources arrive, updating your scripts and tests as needed.

Tip: Treat this as a living process, not a one-off task.

People Also Ask

What is the most common join method for merging CSV files?

The most common approach is a left join, which preserves all rows from the primary dataset while bringing in matching fields from the other files. This approach minimizes data loss when sources vary. However, the best choice depends on your data quality and business needs.

A left join is typically used to keep all records from your main dataset while adding matched fields from others.

How do I handle different header names across files?

Normalize headers by mapping variant names to a canonical set before merging. Create a small alignment dictionary and apply it during preprocessing, so all input columns line up correctly in the merged output.

Map the headers to a common set before you merge so the columns line up.

Can I merge more than two CSV files at once?

Yes. Most tools support merging multiple files by iterating over inputs and applying the same join logic. It’s important to keep a consistent schema across all inputs and test with a subset first.

Yes, you can merge many files; just ensure their schemas are aligned and test with a small batch first.
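One common way to iterate over several inputs in pandas is sketched below, using made-up sample frames. `functools.reduce` applies the same key-based left join across a list of frames, and `pd.concat` covers the separate case of stacking files that already share a schema.

```python
from functools import reduce

import pandas as pd

# Hypothetical inputs that share the key column "id" but add different fields.
customers = pd.DataFrame({"id": [1, 2, 3], "name": ["Ada", "Grace", "Linus"]})
emails = pd.DataFrame({"id": [1, 2], "email": ["ada@example.com", "grace@example.com"]})
plans = pd.DataFrame({"id": [2, 3], "plan": ["pro", "free"]})

# Apply the same left join repeatedly, starting from the primary frame.
merged = reduce(
    lambda left, right: left.merge(right, on="id", how="left"),
    [customers, emails, plans],
)
print(merged)

# Files with identical schemas (e.g., one per month) are stacked, not joined.
january = pd.DataFrame({"id": [1, 2], "total": [10.0, 20.0]})
february = pd.DataFrame({"id": [3], "total": [30.0]})
stacked = pd.concat([january, february], ignore_index=True)
print(stacked)
```

Placing the primary dataset first in the list matters here, because each left join preserves the rows of whatever has been merged so far.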

What if there are duplicates in the key column?

Define a deduplication strategy in advance (e.g., keep the latest by date, or aggregate by sum/mean). Explicitly apply this rule during preprocessing or within the merge operation.

Decide how to handle duplicates before merging, like keeping the most recent or aggregating.

How can I verify the merged result is correct?

Run checks on row counts, column presence, and sample values against the source data. Use automated tests or scripts to reproduce the checks and flag anomalies.

Check counts, inspect samples, and automate tests to ensure the merge is correct.

Is it worth using Python for CSV merges?

For complex scenarios, Python with pandas provides flexible merging capabilities, robust data typing, and scripts that are easy to keep under version control. It scales well from small to large datasets when written with care.

Yes, Python is a strong choice for complicated or large merges.

Main Points

- Plan the merge with a canonical schema and key

- Normalize headers and encodings before merging

- Validate results with automated checks

- Document decisions for traceability

- Use reproducible scripts for scalable merges