CSV to Text File: A Practical How-To Guide

Step-by-step methods to convert CSV data into text files (TXT) with correct encoding, delimiters, and reproducible workflows for data analysts and developers.

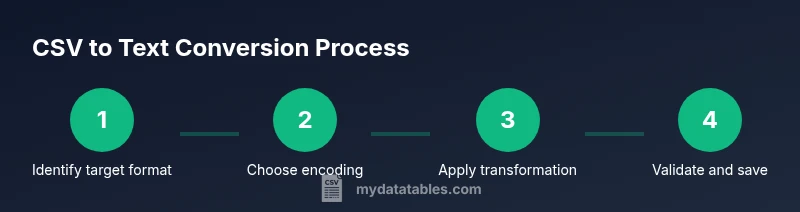

You will learn how to convert a CSV into a plain text file by choosing a target text format, selecting an encoding, and applying a delimiter transformation. This guide covers manual and automated methods, plus common pitfalls and validation steps. Whether you prefer a quick editor trick, a shell one-liner, or a reusable script, this guide points you to reliable techniques.

Understanding CSV to Text File: What It Entails

Converting CSV to a text file is a common data workflow in which tabular data stored as comma-separated values (CSV) is rewritten as a plain-text file such as TXT, MD, or LOG. CSV is itself plain text, so the conversion mostly changes how the data is delimited, encoded, or quoted while preserving the rows and columns. It is useful when downstream tools or users expect a simple text representation rather than a spreadsheet-style file. According to MyDataTables, mastering this task starts with a clear target format and a consistent encoding strategy, which reduces surprises during handoffs or automation. The choice of encoding (UTF-8 is widely recommended for cross-platform compatibility) and the delimiter used to separate fields should align with downstream consumers’ expectations. The result should be readable, reversible, and easy to validate across environments.

Text File Formats You Might Produce From CSV

When you convert CSV data to text, you typically pick from several plain-text formats, depending on how the data will be consumed. TXT is the simplest form, preserving raw lines with a single delimiter (often a tab or space). MD can add lightweight structure for documentation or reports, while LOG is suitable for sequential logs. The key considerations are readability, maintainability, and how the downstream system will parse the file. For broad compatibility, UTF-8 with a standard delimiter (comma, tab, or pipe) is a solid baseline. MyDataTables analysis shows that UTF-8 encoding is favored for cross-platform CSV to text workflows, minimizing misinterpretation of characters across operating systems.

Core Concepts: Encoding, Delimiters, and Quoting

Converting CSV to text requires explicit decisions about encoding (how characters are represented), delimiters (how fields are separated), and quoting (how special characters inside fields are handled). In CSV, a field may contain the delimiter itself or line breaks, which necessitates quoting and escaping rules. When you switch to a text file, you may adopt a new delimiter (e.g., switching from a comma to a tab) to improve readability or parsing in the target tool. Consistent line endings (CRLF vs. LF) are also important to ensure files render correctly on Windows, macOS, and Linux. Establishing a documented rule set for encoding, delimiter, and quoting will help you automate conversions with confidence.
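The quoting and delimiter rules above can be sketched with Python's standard-library csv module. This is a minimal illustration, not a definitive implementation: the helper name `convert_delimiter` and the sample data are assumptions made for the example. It parses CSV text with the usual quoting rules and writes it back with a new delimiter, quoting a field only when it contains the new delimiter, a quote character, or a line break.

```python
import csv
import io


def convert_delimiter(csv_text: str, out_delimiter: str = "\t") -> str:
    """Re-serialize CSV text with a different delimiter (hypothetical helper)."""
    # newline="" leaves line endings untouched so the csv module can
    # handle line breaks inside quoted fields itself.
    reader = csv.reader(io.StringIO(csv_text, newline=""))
    out = io.StringIO(newline="")
    writer = csv.writer(
        out,
        delimiter=out_delimiter,
        quoting=csv.QUOTE_MINIMAL,  # quote only fields that need it
        lineterminator="\n",        # LF line endings in the output
    )
    writer.writerows(reader)
    return out.getvalue()


# Illustrative sample: the first field contains a comma, so it is quoted in CSV.
sample = 'name,notes\n"Smith, Jane",ok\n'
print(convert_delimiter(sample))
```

With a tab as the output delimiter, `Smith, Jane` no longer needs quotes because it does not contain a tab. A field that did contain a tab would be quoted in the output instead.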

Practical Methods to Convert CSV to Text File

There are several practical methods to perform the conversion, ranging from quick manual tweaks to robust scripting for large datasets. Here are common approaches with their typical use cases:

- Text editor tricks: Simple edits can work for small files or ad-hoc tasks. You can replace delimiters, adjust line endings, and save as a .txt or .md file. This is fastest for tiny datasets but not scalable.

- Command line tools: Using awk, sed, or tr on Unix-like systems lets you change delimiters, strip or insert quotes, and control encoding during export. This approach is repeatable and lightweight for batch jobs.

- Python scripts: The csv module in Python provides precise control over quoting, delimiter choices, and newline handling. Python is ideal for larger files and repeatable pipelines, especially when you need transforms beyond simple delimiter changes.

- Excel or spreadsheet exports: Import the CSV, then export to TXT or MD. This can be convenient for quick formatting, but it’s less predictable for automated workflows and large data.

- PowerShell or Windows scripting: For Windows-centric environments, PowerShell offers clear commands to read CSV and write to a text-based format, with explicit encoding control.

Each method has trade-offs in speed, reliability, and ease of automation. If you’re starting from scratch, a Python-based approach typically offers the best balance for reusability and accuracy, while shell-based solutions are excellent for quick, one-off tasks. The goal is to produce a faithful text representation that remains easy to validate and reuse.
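As one possible shape for the Python approach, the sketch below converts a CSV file to a delimited text file one row at a time. The function name `csv_to_text`, its parameter names, and the defaults (tab delimiter, UTF-8, LF line endings) are assumptions chosen for illustration; adjust them to whatever your downstream consumer expects.

```python
import csv


def csv_to_text(src_path, dst_path, *, out_delimiter="\t",
                in_encoding="utf-8", out_encoding="utf-8",
                line_ending="\n"):
    """Stream a CSV file into a delimited text file; return the row count."""
    rows = 0
    # newline="" lets the csv module control line endings on both sides.
    with open(src_path, newline="", encoding=in_encoding) as src, \
         open(dst_path, "w", newline="", encoding=out_encoding) as dst:
        reader = csv.reader(src)
        writer = csv.writer(dst, delimiter=out_delimiter,
                            lineterminator=line_ending)
        for row in reader:  # one row at a time keeps memory use small
            writer.writerow(row)
            rows += 1
    return rows
```

A call such as `csv_to_text("inventory.csv", "inventory.txt")` (hypothetical file names) would write a tab-separated UTF-8 file. Passing `line_ending="\r\n"` would produce CRLF line endings for Windows-oriented consumers.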

Handling Special Cases: Encodings, Delimiters, and Quoting

Real-world CSV files often deviate from idealized formats. Here are practical guidelines for handling edge cases:

- Encoding: UTF-8 is the safest default for cross-platform workflows; if your data contains non-ASCII characters, ensure the output text file is saved with UTF-8 to avoid garbling.

- Delimiter choice: If the data contains commas within fields, consider a less common delimiter like a tab or pipe, and ensure downstream parsers expect that delimiter.

- Quoting: When fields contain delimiters or line breaks, proper quoting and escaping are essential to preserve data integrity in the text file. If your target format supports quoting, use a policy that matches the source CSV’s behavior.

- Line endings: Normalize line endings to CRLF for Windows-oriented consumers or LF for UNIX-like systems to avoid display issues when the file is opened in different environments.

- Large files: For very large CSVs, streaming your data rather than loading it entirely into memory helps avoid performance bottlenecks and memory errors.

Defining encoding, delimiter, and quoting policies up front, and applying them consistently across all conversion steps, reduces surprises in downstream systems. MyDataTables emphasizes documenting these decisions to improve reproducibility and auditability.
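To make the delimiter guideline above concrete, here is a small sketch that scans a CSV file for candidate output delimiters appearing inside field values. The helper names `delimiter_collisions` and `pick_delimiter` and the default candidate list are assumptions for this example. The file is read row by row, so the check also works for large inputs.

```python
import csv


def delimiter_collisions(src_path, candidates=("\t", "|", ";"),
                         encoding="utf-8"):
    """Count how many fields contain each candidate output delimiter."""
    hits = {c: 0 for c in candidates}
    with open(src_path, newline="", encoding=encoding) as src:
        for row in csv.reader(src):  # streamed, not loaded all at once
            for field in row:
                for c in candidates:
                    if c in field:
                        hits[c] += 1
    return hits


def pick_delimiter(src_path, candidates=("\t", "|", ";"), encoding="utf-8"):
    """Return the first candidate that never appears in the data, else None."""
    hits = delimiter_collisions(src_path, candidates, encoding)
    for c in candidates:
        if hits[c] == 0:
            return c
    return None
```

If `pick_delimiter` returns `None`, every candidate collides with the data, and the output will need quoting (or a different candidate list) to stay unambiguous.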

Validation and Quality Assurance

After converting, validate the output to confirm parity with the source data. Start with a spot check of several rows to ensure field counts align and that the chosen delimiter consistently separates fields. Use a lightweight validator that reads the text file and reports the number of lines, fields per line, and any anomalies such as unexpected quote characters. If you maintain a sample dataset, run a round-trip check: convert CSV to text, then re-import to see if the data reads back without loss. This reduces the risk of subtle data corruption, especially when the dataset will be used for reporting or machine processing. Keeping a small verification set helps catch issues before they scale.
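The checks described above could look roughly like the following sketch. The names `summarize` and `round_trip_ok` are hypothetical, and the sketch assumes the text output uses a single-character delimiter with csv-style quoting so that it can be parsed back with the csv module.

```python
import csv
from collections import Counter
from itertools import zip_longest


def summarize(path, delimiter=",", encoding="utf-8"):
    """Return (row_count, Counter mapping fields-per-row to occurrences)."""
    widths = Counter()
    with open(path, newline="", encoding=encoding) as f:
        for row in csv.reader(f, delimiter=delimiter):
            widths[len(row)] += 1
    return sum(widths.values()), widths


def round_trip_ok(csv_path, txt_path, txt_delimiter="\t", encoding="utf-8"):
    """True if both files parse to exactly the same rows and fields."""
    missing = object()  # marks the shorter file running out of rows
    with open(csv_path, newline="", encoding=encoding) as a, \
         open(txt_path, newline="", encoding=encoding) as b:
        pairs = zip_longest(csv.reader(a),
                            csv.reader(b, delimiter=txt_delimiter),
                            fillvalue=missing)
        return all(x == y for x, y in pairs)
```

More than one key in the `Counter` returned by `summarize` means some rows have a different number of fields than others, which is usually the first sign of a delimiter or quoting problem.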

Automating Conversions for Reproducibility

Automation is the backbone of reliable CSV to text workflows. Create a repeatable script or pipeline that you can run on new data without manual editing. Use explicit parameters for encoding, delimiter, and output format, and wire those parameters into your chosen tool (editor, shell script, Python script, or PowerShell). Version-control the script and maintain a changelog as you improve the workflow. Scheduling the script via a task scheduler or cron job enables ongoing daily or weekly conversions with minimal intervention. Documentation is essential: describe inputs, outputs, and any transformation rules so new team members can reproduce results.
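One way to expose encoding and delimiter as explicit parameters is a small command-line wrapper, sketched below with argparse. The option names (`--delimiter`, `--in-encoding`, `--out-encoding`) and the `DELIMITERS` table are assumptions for illustration, not an established interface.

```python
import argparse
import csv
import sys

# Named delimiters are easier to pass on a command line than raw characters.
DELIMITERS = {"tab": "\t", "pipe": "|", "comma": ",", "semicolon": ";"}


def build_parser():
    p = argparse.ArgumentParser(
        description="Convert a CSV file to a delimited text file.")
    p.add_argument("src", help="input CSV path")
    p.add_argument("dst", help="output text path")
    p.add_argument("--delimiter", choices=sorted(DELIMITERS), default="tab")
    p.add_argument("--in-encoding", default="utf-8")
    p.add_argument("--out-encoding", default="utf-8")
    return p


def main(argv=None):
    args = build_parser().parse_args(argv)
    rows = 0
    with open(args.src, newline="", encoding=args.in_encoding) as src, \
         open(args.dst, "w", newline="", encoding=args.out_encoding) as dst:
        writer = csv.writer(dst, delimiter=DELIMITERS[args.delimiter],
                            lineterminator="\n")
        for row in csv.reader(src):
            writer.writerow(row)
            rows += 1
    # Report on stderr so a scheduler can capture a one-line run summary.
    print(f"{args.src} -> {args.dst}: {rows} rows", file=sys.stderr)
    return 0
```

Saved as a script that calls `sys.exit(main())` under an `if __name__ == "__main__":` guard, this could be committed to version control and invoked from cron or a task scheduler with the same arguments on every run.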

Real-World Scenarios and Best Practices

Converting CSV to text files is common in data integration, reporting, and archival workflows. For example, exporting a CSV inventory dataset to a clean TXT log for a legacy system may require a specific delimiter and fixed-width formatting. In other cases, teams convert CSV data to Markdown to include tables in documentation, or to plain TXT for quick checks in a terminal. Best practices include: starting with UTF-8, choosing a delimiter that reduces misparsing, testing on representative data, and logging conversion results. Following these practices helps ensure your text outputs are robust, portable, and easy to validate across environments.
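For the Markdown scenario, a minimal sketch might look like this. The function name `csv_to_markdown` is hypothetical, the first row is assumed to be the header, and the whole input is read into memory, so it suits documentation-sized tables rather than large datasets.

```python
import csv
import io


def csv_to_markdown(csv_text: str) -> str:
    """Render CSV text as a Markdown pipe table; the first row is the header."""
    rows = list(csv.reader(io.StringIO(csv_text, newline="")))
    if not rows:
        return ""
    width = len(rows[0])

    def cell(value):
        # A raw pipe would end the cell early; a line break would split the row.
        value = value.replace("|", "\\|")
        return value.replace("\r\n", " ").replace("\n", " ").replace("\r", " ")

    def line(row):
        padded = (row + [""] * width)[:width]  # pad or trim to header width
        return "| " + " | ".join(cell(v) for v in padded) + " |"

    out = [line(rows[0]), "| " + " | ".join(["---"] * width) + " |"]
    out.extend(line(r) for r in rows[1:])
    return "\n".join(out) + "\n"


print(csv_to_markdown('sku,desc\nA1,"nuts | bolts"\n'))
```

Escaping the pipe character matters here for the same reason quoting matters in CSV: the field value contains the character that the target format uses as a separator.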

Tools & Materials

- Computer with internet access (For running commands or scripts)

- Text editor (Notepad++, VS Code, or similar for quick edits)

- Command line interface (Terminal (macOS/Linux) or Command Prompt/PowerShell (Windows))

- Python interpreter (optional) (For script-based conversion using csv module)

- CSV file to convert (Input data with a known delimiter and header (if present))

- Encoding reference sheet (Helpful for selecting UTF-8 or alternatives)

- Output text file reference (Guides for TXT/MD/LOG formats)

Steps

Estimated time: 45-90 minutes

1. Define target format and encoding

Decide whether you want TXT, MD, or LOG as the output and choose an encoding (UTF-8 is preferred for cross-platform use). Document these decisions so the conversion is reproducible.

Tip: Write down the chosen format and encoding for future reference.

2. Choose conversion method

Select a conversion method that fits your data size and automation needs (editor tricks for small files, shell or Python scripts for larger datasets).

Tip: Prefer a scripted approach for repeatability.

3. Prepare the CSV for conversion

Inspect the CSV to confirm delimiter, header presence, and any quoted fields. If needed, standardize the input (e.g., ensure consistent quotes).

Tip: Back up the original CSV before any changes.

4. Perform the conversion

Apply the chosen method to transform the CSV into your target text format, ensuring the delimiter and encoding are correctly applied.

Tip: Test on a small subset before processing the full file.

5. Validate the output

Check line counts, field counts per row, and a sample of converted lines to ensure fidelity with the source.

Tip: Use a quick one-liner to compare line counts between input and output.

6. Save with clear naming

Save the result with a descriptive filename that reflects the input source, date, and format (e.g., data_inventory_202602.csv -> inventory_202602.txt).

Tip: Include versioning if you re-run the process.

7. Automate for future runs

If this task repeats, wrap the steps in a script or pipeline, and schedule it to run automatically on new data.

Tip: Add logging to capture success or failure for each run.

People Also Ask

What is a CSV file?

A CSV file is a plain-text format where each line represents a row and fields are separated by a delimiter, usually a comma. It’s widely used for exchanging tabular data between applications.

A CSV file is a simple text with rows and columns separated by a delimiter, typically a comma.

What is a text file in this context?

A text file (TXT, MD, LOG) stores data as readable text with a defined delimiter or formatting, suitable for consumption by humans or simple parsers. It does not rely on a binary format like a spreadsheet.

It's a plain text file that uses characters you can read and edit easily.

How do I choose the right encoding?

UTF-8 is the most portable choice across platforms. If your data contains special characters, ensure the text file is saved in UTF-8 to avoid garbled text when opened elsewhere.

UTF-8 is usually best to keep characters intact across systems.

Can I convert large CSV files safely?

Yes, but you should process the file in chunks or stream it row by row rather than loading the entire file into memory. This keeps memory use bounded and avoids out-of-memory errors on big datasets.

You can process big files in chunks to stay within memory limits.

What are common pitfalls in CSV to text conversion?

Common issues include losing quotes, mishandling embedded delimiters, and incorrect line endings. Establish a consistent policy for encoding, delimiter, and quoting to avoid these problems.

Watch out for quotes and embedded delimiters; they often cause problems.

How can I automate this process?

Wrap the steps in a script or a small pipeline, and schedule it to run on new CSV data. Include logging and output validation to maintain reliability over time.

Create a script and set it to run on a schedule for repeatable results.

Main Points

- Choose a clear output format and encoding upfront

- Use a reproducible, script-based workflow

- Validate output with line and field checks

- Keep a backup of the original CSV

- Automate to ensure consistent, repeatable results