What Is the Best Way to View CSV Files? A Practical Guide

Learn the best methods to view CSV files across editors, terminals, and spreadsheets. This practical guide covers encoding, large files, and quick validation for analysts and developers.

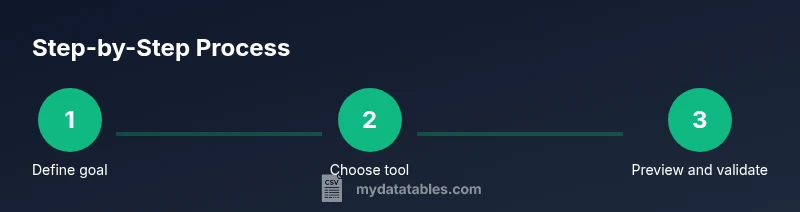

This guide aims to show the simplest, most reliable way to view CSV files across tools. Start by selecting a viewer (GUI editor, spreadsheet, or terminal utility), confirm the file’s encoding (ideally UTF-8), then open the file and verify headers, row counts, and sample values. For large files, use streaming previews or chunked reads to avoid memory issues.

Why Viewing CSVs Effectively Matters

If you ask what the best way to view CSV files is, the answer depends on your goal, the file size, and the environment. CSVs are the lingua franca of data exchange, but their plain-text structure can become unwieldy as data grows. A solid viewing strategy helps you verify headers, delimiters, and data integrity before you begin analysis, which saves time and reduces errors downstream. In practice, the right viewing method depends on whether you need a quick glance, a rigorous audit, or a data transformation workflow. This section lays the foundation by explaining why the viewing approach matters for analysts, developers, and business users alike.

Throughout this guide, the core decision hinges on task complexity, required precision, and the tools you already rely on. MyDataTables, for example, emphasizes practical CSV guidance for diverse roles, highlighting a workflow that scales from quick checks to reproducible analyses. As you read, consider how encoding, delimiters, and file size influence your viewing choice. The right approach balances speed, readability, and data fidelity while avoiding unnecessary complexity.

Quick Comparison of Common CSV Viewers

Different viewers serve different needs. Desktop GUI editors like Excel or LibreOffice Calc offer friendly interfaces and powerful formatting; spreadsheets are ideal for quick inspections and small to medium files. Web-based tools like Google Sheets simplify sharing and collaboration but may lag with larger datasets. Command-line and programming approaches provide speed and automation for larger CSVs and repeatable workflows. Utilities like csvkit or Python with pandas can stream data, apply filters, and preview rows without loading the entire file into memory. When deciding which path to take, consider the file size, your comfort with scripts, and whether you need to reproduce the viewing steps for teammates. This section helps you map your use case to the best tool and introduces a practical, reliable decision framework that aligns with the MyDataTables approach to CSV guidance.

From a performance perspective, your choice should minimize memory usage while preserving data fidelity. For instance, a 100 MB CSV is easily viewed in a GUI editor if you open a reduced view or a subset; a 5 GB CSV demands streaming, chunking, or sampling. The MyDataTables team highlights that the best practice is to start with a simple viewer for verification, then escalate to streaming or scripting for deeper inspection.
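The size-based escalation described above can be sketched as a small helper. This is only an illustration: the function names and both thresholds are assumptions, not values from MyDataTables, and the right cut-offs depend on your machine and viewer.

```python
import os

# Hypothetical thresholds for illustration only; tune them to your own hardware.
GUI_LIMIT_BYTES = 100 * 1024**2    # about 100 MB
PREVIEW_LIMIT_BYTES = 1 * 1024**3  # about 1 GB (an assumed cut-off)


def suggest_viewing_strategy(size_bytes: int) -> str:
    """Map a file size to a coarse viewing strategy."""
    if size_bytes <= GUI_LIMIT_BYTES:
        return "gui"       # spreadsheet or desktop editor
    if size_bytes <= PREVIEW_LIMIT_BYTES:
        return "preview"   # open only a subset or the first rows
    return "stream"        # chunked or streaming reads


def suggest_for_file(path: str) -> str:
    """Look up the size of a file on disk and suggest a strategy for it."""
    return suggest_viewing_strategy(os.path.getsize(path))
```

A call such as `suggest_for_file("export.csv")` (a hypothetical file name) gives a starting point; the simple viewer remains the first verification step either way.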

GUI Viewers: Desktop Editors

Desktop GUI editors are the most approachable entry point for viewing CSV data. Excel remains a popular choice for many users due to its familiar interface, built-in sorting, filtering, and basic pivot capabilities. Google Sheets offers seamless sharing and cloud-based collaboration, though it may introduce latency or feature restrictions for very large files. LibreOffice Calc provides a free, cross-platform alternative with solid compatibility for typical CSV structures, including straightforward import wizards and delimiter settings. When using GUI viewers, always verify the delimiter used by the file (commas, semicolons, tabs) and confirm that the encoding is interpreted correctly. If headers are misaligned or data appears garbled, re-import with explicit encoding and delimiter options. A key rule is to view a small sample first, then open the full file only if performance allows, keeping your workflow efficient and accurate.

To reduce surprises, rely on the viewer’s preview or filter features to quickly check column alignment and a few representative rows. For teams, document the exact viewer and version you used to ensure reproducibility in downstream steps. In practice, GUI viewers work best for quick checks, light editing, and basic validation, especially when you need to present findings to non-technical stakeholders. For these scenarios, pairing a GUI view with a simple verification checklist tends to yield the most reliable results.

Terminal and Command-Line Tools for CSVs

Terminal-based tools excel at speed, scriptability, and handling large data without consuming massive memory. Simple commands like head, tail, and awk let you peek at rows and headers quickly. More specialized utilities such as csvkit provide CSV-aware commands for inspecting, slicing, and transforming data without loading everything into memory. For example, you can view just the first 20 rows, count rows, or verify unique values in a column with concise commands. The advantage of CLI tools is reproducibility: you can script the same checks across multiple files, pipelines, or environments. When working in a headless server, CLI tools become essential, enabling you to validate structure and content before loading into a database or analysis environment.

Key best practices include setting the correct encoding (UTF-8 by default), using csvkit’s --no-header-row option when a file has no header line, and avoiding full file reads for gigantic datasets. You can combine tools to create a resilient viewing pipeline: peek at a small sample, validate a few critical fields, and log results for audit trails. This approach minimizes risk and makes your CSV viewing workflow robust and portable across projects.
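If you prefer a script over shell commands, the same "peek and count" checks can be approximated with Python’s standard csv module. The sketch below is one possible shape; the function names and defaults are assumptions made for illustration.

```python
import csv
from itertools import islice


def preview_rows(path, n=20, encoding="utf-8", delimiter=","):
    """Return the header and the first n data rows without reading the whole file."""
    with open(path, newline="", encoding=encoding) as f:
        reader = csv.reader(f, delimiter=delimiter)
        header = next(reader, [])
        rows = list(islice(reader, n))
    return header, rows


def count_data_rows(path, encoding="utf-8", delimiter=","):
    """Count data rows (excluding the header) by streaming through the file once."""
    with open(path, newline="", encoding=encoding) as f:
        reader = csv.reader(f, delimiter=delimiter)
        next(reader, None)  # skip the header row
        return sum(1 for _ in reader)
```

Because the reader is CSV-aware, quoted fields that contain line breaks are counted as one record, which a plain line count would get wrong.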

Spreadsheet-Based Viewing: Excel, Sheets, and LibreOffice

Spreadsheets are widely adopted for viewing and light analysis due to their familiar interfaces and built-in features like formulas, charts, and conditional formatting. Excel remains a workhorse for Windows users, offering powerful data cleaning tools and robust import options for CSVs, including handling complex delimiters and varying quote characters. Google Sheets is accessible from any browser, supports collaboration, and handles many CSVs efficiently, though it may struggle with very large files. LibreOffice Calc provides a strong open-source alternative with comparable import options and good compatibility.

When viewing CSVs in spreadsheets, consider limits on rows and columns (an Excel worksheet, for example, holds at most 1,048,576 rows and 16,384 columns). Large datasets may hit performance ceilings, causing slow scrolling or freezes. To mitigate this, import only the relevant columns or use a data range. Always verify that the first row is treated as headers and that special characters render correctly. If you encounter encoding issues, re-import with explicit UTF-8 or your file’s encoding. Spreadsheets are ideal for quick validation, note-taking, and creating a presentable snapshot of data for stakeholders.
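Before opening a file in a spreadsheet, it can help to check the row count and trim the file to the columns you actually need. The following is a minimal sketch using the standard library; the function names are hypothetical, and the limit shown is the worksheet row limit of current Excel versions.

```python
import csv

EXCEL_MAX_ROWS = 1_048_576  # rows per worksheet in current Excel versions


def fits_in_excel(path, encoding="utf-8"):
    """Stream the file and report whether its row count fits in one worksheet."""
    with open(path, newline="", encoding=encoding) as f:
        for row_number, _ in enumerate(csv.reader(f), start=1):
            if row_number > EXCEL_MAX_ROWS:
                return False
    return True


def write_column_subset(src, dst, columns, encoding="utf-8"):
    """Copy only the named columns from src to dst, keeping the header row."""
    with open(src, newline="", encoding=encoding) as fin, \
         open(dst, "w", newline="", encoding=encoding) as fout:
        reader = csv.DictReader(fin)
        # extrasaction="ignore" silently drops the columns that were not requested.
        writer = csv.DictWriter(fout, fieldnames=columns, extrasaction="ignore")
        writer.writeheader()
        for row in reader:
            writer.writerow(row)
```

The trimmed copy is usually much lighter to scroll through, and the original file stays untouched.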

Using CSV-Specific Utilities for Speed and Accuracy

CSV-specific utilities focus on CSV structure rather than general file editing. Tools like csvkit provide a plug-and-play approach to inspecting, filtering, and transforming CSV data from the command line. In Python, pandas’ read_csv accepts a chunksize argument, which lets you process large files in manageable portions instead of loading everything into memory. This capability is especially useful when you need to compute statistics, sample rows, or validate schema while keeping resource usage reasonable. When you adopt these utilities, you gain repeatability and scalability across projects, which aligns with MyDataTables guidance on practical CSV workflows.

A practical pattern is to define a small viewing script that streams the file, prints a header and a few rows, and logs any anomalies. This reduces the risk of hidden issues in large files and makes it easier to share reproducible steps with teammates. Always validate the results by comparing a sampled output to the source file and by checking for consistent delimiters and quotation marks throughout the file.
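A small viewing script along these lines might look like the sketch below. It uses only the standard library rather than csvkit or pandas, and it treats a row whose field count differs from the header as the anomaly to log; the function name, logger name, and that definition of "anomaly" are assumptions for illustration.

```python
import csv
import logging

logging.basicConfig(level=logging.INFO, format="%(levelname)s %(message)s")
log = logging.getLogger("csv_view")


def stream_view(path, show=5, encoding="utf-8", delimiter=","):
    """Print the header and first rows; log rows whose field count differs from the header.

    Returns the data-row numbers (1-based) that had a mismatched field count.
    """
    bad_rows = []
    with open(path, newline="", encoding=encoding) as f:
        reader = csv.reader(f, delimiter=delimiter)
        header = next(reader, None)
        if header is None:
            log.warning("file is empty")
            return bad_rows
        print(header)
        for i, row in enumerate(reader, start=1):
            if i <= show:
                print(row)
            if len(row) != len(header):
                log.warning("row %d has %d fields, expected %d", i, len(row), len(header))
                bad_rows.append(i)
    return bad_rows
```

Only one row is held in memory at a time, so the same script works for small and very large files, and the returned row numbers give teammates something concrete to reproduce.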

Handling Encoding and Delimiters

CSV files can use different delimiters (commas, semicolons, tabs) and various encodings (UTF-8, UTF-16, ASCII). Encoding problems often show up as garbled characters or replacement symbols, especially when data contains non-Latin text. The best practice is to assume UTF-8 as a default and specify the encoding during import. If you encounter issues, check for a Byte Order Mark (BOM) and try forcing the delimiter explicitly in your viewer or tool. When sharing CSVs, document the encoding and delimiter in your metadata to prevent misinterpretation downstream.

In addition to encoding, be mindful of newline conventions (CRLF vs LF) which can affect line parsing in some editors. A reliable viewing workflow includes confirming the delimiter, encoding, and newline conventions before proceeding with analysis. MyDataTables underscores the importance of consistent encoding settings for cross-team collaboration and reproducible results, especially in multi-region data pipelines.
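The BOM and delimiter checks can be automated before a file is opened in any viewer. Here is a minimal sketch with Python’s standard library; the function names, the candidate delimiter set, and the sample size are assumptions, and csv.Sniffer is a heuristic that can guess wrong on unusual files.

```python
import codecs
import csv


def detect_bom(path):
    """Return an encoding name if the file starts with a known BOM, else None."""
    with open(path, "rb") as f:
        start = f.read(4)
    # Check UTF-32 first: its little-endian BOM begins with the UTF-16 LE BOM bytes.
    if start.startswith((codecs.BOM_UTF32_LE, codecs.BOM_UTF32_BE)):
        return "utf-32"
    if start.startswith(codecs.BOM_UTF8):
        return "utf-8-sig"
    if start.startswith((codecs.BOM_UTF16_LE, codecs.BOM_UTF16_BE)):
        return "utf-16"
    return None


def sniff_delimiter(path, encoding="utf-8", candidates=",;\t|", sample_size=64 * 1024):
    """Guess the delimiter from a sample of the file; raises csv.Error if undetermined."""
    with open(path, newline="", encoding=encoding) as f:
        sample = f.read(sample_size)
    return csv.Sniffer().sniff(sample, delimiters=candidates).delimiter
```

Passing the detected encoding (for example `utf-8-sig`) to the reader strips the BOM, so it does not end up glued to the first column name. Opening files with `newline=""` lets the csv module handle both CRLF and LF line endings.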

Working with Large Files: Strategies and Tools

Large CSV files present unique challenges. Loading an entire file into memory is rarely viable, so streaming, chunked reads, and selective column loading are essential strategies. csvkit commands such as csvcut and csvgrep can slice and filter without constructing a full in-memory dataset. In Python, pandas can read in chunks and yield DataFrames for incremental processing. For analysts who must view data quickly, consider using a lightweight viewer to inspect metadata (column types, missing values) and a sampling approach to validate structure before any heavy lifting.

A practical tip is to establish a “view-only” subset workflow: view the header, sample 1-2 rows per chunk, and log any anomalies. This minimizes latency and reduces the risk of memory errors during review. For extremely large datasets, using a streaming viewer or loading the file into a database before viewing often yields the most dependable results. The MyDataTables approach recommends validating the file in logical chunks rather than attempting to scan the whole file at once, ensuring accuracy and performance.
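The "header plus a few rows per chunk" workflow can be sketched with the standard library as follows. This is a stand-in for the pandas chunked read mentioned above, not a replacement for it; the function names and the default chunk size are assumptions.

```python
import csv
from itertools import islice


def iter_chunks(path, chunk_size=10_000, encoding="utf-8", delimiter=","):
    """Yield (header, rows) pairs, holding at most chunk_size rows in memory at a time."""
    with open(path, newline="", encoding=encoding) as f:
        reader = csv.reader(f, delimiter=delimiter)
        header = next(reader, None)
        if header is None:
            return  # empty file: nothing to yield
        while True:
            rows = list(islice(reader, chunk_size))
            if not rows:
                break
            yield header, rows


def sample_per_chunk(path, per_chunk=2, **kwargs):
    """Collect the first per_chunk rows of every chunk as a cheap structural sample."""
    sample = []
    for _header, rows in iter_chunks(path, **kwargs):
        sample.extend(rows[:per_chunk])
    return sample
```

Taking the first rows of each chunk spreads the sample across the whole file, which catches problems that only appear deep into a long export.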

Visual Validation: Spot-Checking Data Quickly

Visual validation centers on quickly verifying data quality through representative samples. Start by confirming that the header row aligns with column counts across multiple rows. Check for missing values in key columns and verify that numeric fields contain valid numbers. Simple visual checks can reveal delimiter inconsistencies, stray quotes, or irregular encodings. Use color-coding, filters, or conditional formatting in a GUI tool to highlight anomalies. In a terminal workflow, generate a small report that lists counts of missing values per column and the distribution of values in critical fields.

Combine visual checks with a lightweight statistical sanity check, such as verifying that the sum of a numeric column matches an expected total from an independent source. This layered approach helps you catch edge cases early and keeps downstream analytics smooth. Document any anomalies and the steps you took to verify them, so teammates can reproduce the checks.
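For the terminal-style report and the sum check, a short script is often enough. The sketch below counts blank cells per column and totals one numeric column; the function names are hypothetical, and treating whitespace-only cells as missing is an assumption you may want to change.

```python
import csv
from collections import Counter


def missing_counts(path, encoding="utf-8", delimiter=","):
    """Count empty or whitespace-only cells per column while streaming the file."""
    counts = Counter()
    with open(path, newline="", encoding=encoding) as f:
        reader = csv.DictReader(f, delimiter=delimiter)
        fieldnames = reader.fieldnames or []
        for name in fieldnames:
            counts[name] = 0
        for row in reader:
            for name in fieldnames:
                value = row.get(name)  # None when the row is shorter than the header
                if value is None or not value.strip():
                    counts[name] += 1
    return dict(counts)


def column_sum(path, column, encoding="utf-8", delimiter=","):
    """Sum a numeric column, skipping blanks; raises ValueError on non-numeric text."""
    total = 0.0
    with open(path, newline="", encoding=encoding) as f:
        for row in csv.DictReader(f, delimiter=delimiter):
            value = (row.get(column) or "").strip()
            if value:
                total += float(value)
    return total
```

Letting `float()` raise on text such as `N/A` is deliberate here: a failed sum is itself a finding worth logging. For currency totals that must match to the cent, `decimal.Decimal` would be a safer choice than `float`.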

Workflow: From Viewing to Quick Analysis

A repeatable viewing workflow accelerates analysis. Start with a quick visual check to confirm structure, then apply a targeted subset or filter to focus on relevant records. Move to a lightweight analysis layer (summary stats, simple aggregations) to validate hypotheses before committing to a full dataset load. Keep a log of viewing steps, including the tools used, encoding, and delimiter settings. When you’re ready, export a cleaned subset for deeper analysis in your preferred environment.

A robust workflow minimizes surprises and enhances collaboration. By standardizing how you view, validate, and share CSV data, you reduce misinterpretations and ensure everyone operates from a single, well-documented source. MyDataTables recommends documenting tool choices and settings at each stage to support reproducibility across teams.
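One lightweight way to keep such a log is to append a JSON line per viewing step. The record layout below is an assumption made for illustration, not a MyDataTables format; adapt the fields to whatever your team needs to reproduce a check.

```python
import json
from datetime import datetime, timezone


def log_viewing_step(log_path, file_name, tool, encoding, delimiter, note=""):
    """Append one JSON line describing how a CSV file was viewed."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "file": file_name,
        "tool": tool,
        "encoding": encoding,
        "delimiter": delimiter,
        "note": note,
    }
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
    return record
```

A plain-text log like this can be committed next to the analysis or pasted into a ticket, so the tool and settings travel with the findings.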

Security, Privacy, and Compliance When Viewing CSVs

CSV files can contain sensitive information, from PII to financial data. Viewing them responsibly means protecting data during transfer, storage, and display. Avoid sharing raw CSVs with untrusted recipients; instead, redact or anonymize where possible and use secure channels for distribution. When working in teams, establish access controls and maintain a data handling checklist that specifies who can view, edit, or export data. If you’re operating in regulated environments, ensure your viewing workflow complies with relevant policies and retains an audit trail of actions performed on datasets.

The MyDataTables guidance emphasizes privacy-centric practices in CSV workstreams: know your data, limit exposure, and document all viewing steps so audits can confirm compliance. By following controlled viewing protocols, you reduce risk and build trust with stakeholders and data subjects alike.
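As one example of limiting exposure, sensitive columns can be masked before a file is shared for viewing. The sketch below replaces the named columns with truncated SHA-256 digests. Treat it as pseudonymization for illustration only: unsalted hashes of guessable values (such as email addresses) can be reversed by brute force, so it does not satisfy a formal anonymization requirement. The function name and the 12-character truncation are assumptions.

```python
import csv
import hashlib


def redact_columns(src, dst, sensitive, encoding="utf-8"):
    """Write a copy of src in which the named columns are replaced by short hashes."""
    with open(src, newline="", encoding=encoding) as fin, \
         open(dst, "w", newline="", encoding=encoding) as fout:
        reader = csv.DictReader(fin)
        writer = csv.DictWriter(fout, fieldnames=reader.fieldnames or [],
                                extrasaction="ignore")
        writer.writeheader()
        for row in reader:
            for name in sensitive:
                if row.get(name):  # leave blank cells blank
                    digest = hashlib.sha256(row[name].encode("utf-8")).hexdigest()
                    row[name] = digest[:12]
            writer.writerow(row)
```

Identical inputs map to identical digests, so reviewers can still spot duplicates and join on the masked column without seeing the underlying values.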

Practical Tips for Everyday CSV Viewing

- Always verify encoding and delimiter before deep inspections.

- Use a subset of columns and rows when starting to avoid unnecessary processing.

- For large files, lean on streaming or chunked reads rather than full-file loads.

- Document viewing steps and tool versions to improve reproducibility.

- Pair viewing with simple validations (headers, counts, sample values) to catch early issues.

- Keep a handy template for quick-view workflows to share with teammates.

- If you’re unsure about a file’s structure, start with a CLI tool to reveal the file’s metadata first.

- Consider keeping a local, searchable log of common CSV pitfalls and how you addressed them.


Tools & Materials

- Computer with internet access (for downloading tools or cloud viewers)

- CSV file to view (prefer UTF-8 encoding when possible)

- Text editor or spreadsheet software (examples: Excel, Google Sheets, LibreOffice Calc)

- CSV viewing tools, optional (csvkit, pandas, or specialized viewers for large files)

- Encoding reference guide (keep UTF-8 as default; note other encodings when present)

Steps

Estimated time: 45-90 minutes

1. Define viewing objective

Decide whether you need a quick look, a full validation, or preparatory data for analysis. This clarifies which tool to use and what aspects to verify (headers, delimiters, encoding, or row counts).

Tip: Write a one-line goal before you start to keep your focus.

2. Choose the viewing method

Select GUI editor, terminal tool, or a scripting approach based on file size and your workflow. For small files, a GUI is often fastest; for large files, streaming or chunking avoids memory issues.

Tip: If unsure, start with a GUI for a quick sanity check, then move to a streaming approach if the file is large.

3. Check encoding and delimiter

Before opening, confirm the file’s encoding and delimiter to avoid misinterpretation of data.

Tip: If unsure, try UTF-8 with a comma delimiter first, then adjust if needed.

4. Open and preview headers

Load the file and inspect the header row to ensure column names align with the data. Verify a few representative rows for consistency.

Tip: Flag any misalignment immediately to avoid downstream errors.

5. Preview data in chunks (large files)

For big CSVs, read the file in chunks and preview a slice of rows to assess data quality without consuming all memory.

Tip: Set a reasonable chunk size (e.g., 1,000–10,000 rows) and adjust as needed.

6. Validate key fields

Check essential columns for valid types, missing values, and plausible ranges. Note any anomalies for later follow-up.

Tip: Keep a short log of anomalies with row references for reproducibility.

7. Export a subset for analysis

If you plan to work with a portion of the data, export a clean subset that preserves the structure and encoding.

Tip: Use a reproducible filter or query so others can reproduce the same subset.

8. Document results and tool choices

Record the tools used, settings, and any deviations from the standard workflow to aid future reviews.

Tip: Create a brief summary that can be shared with teammates.
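The subset export in the steps above (step 7) can be made reproducible by keeping the filter in code. The sketch below is one way to do it with the standard library; `export_subset`, `is_norway`, and the `country` column are hypothetical names chosen for the example.

```python
import csv


def export_subset(src, dst, keep_row, encoding="utf-8", delimiter=","):
    """Write rows for which keep_row(row_dict) is true to dst; return the number written."""
    written = 0
    with open(src, newline="", encoding=encoding) as fin, \
         open(dst, "w", newline="", encoding=encoding) as fout:
        reader = csv.DictReader(fin, delimiter=delimiter)
        writer = csv.DictWriter(fout, fieldnames=reader.fieldnames or [],
                                delimiter=delimiter, extrasaction="ignore")
        writer.writeheader()
        for row in reader:
            if keep_row(row):
                writer.writerow(row)
                written += 1
    return written


# A named filter function, so teammates can rerun exactly the same selection.
def is_norway(row):
    return row.get("country") == "NO"
```

Calling `export_subset("all.csv", "norway.csv", is_norway)` (hypothetical file names) keeps the header, encoding, and delimiter of the source, and the returned count is a convenient figure to record in the summary from step 8.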

People Also Ask

What is the best viewer for very large CSV files?

For very large files, streaming or chunked reads are essential. Use terminal tools or programming libraries that process data in chunks to avoid memory issues, and verify results with sampled views.

Should I convert CSV to Excel for viewing?

Excel is convenient for quick viewing and light editing but may struggle with very large files. Use Excel for presentation-ready views and switch to a streaming approach for validation on big datasets.

How does encoding affect viewing CSVs?

Encoding determines how characters appear. UTF-8 is generally safest; other encodings can cause garbled characters. Specify encoding when importing and check for BOM if issues arise.

Can I view CSVs in a web browser?

Browsers typically display a CSV as plain text or offer it as a download, which is okay for quick checks but not ideal for readability. Use a dedicated viewer for better formatting and filtering.

What’s the difference between viewing and editing CSVs?

Viewing is typically read-only and focuses on verification, while editing requires tools that support modifications and proper CSV handling to avoid corrupting data.

Are there security considerations when viewing CSVs?

Yes. CSVs can contain sensitive information. Share only with trusted parties, redact when necessary, and maintain an audit trail of access and actions.

Main Points

- Define your viewing goal before starting

- Choose the right tool based on file size and task

- Always verify encoding and delimiter early

- Use chunked previews for large files

- Document steps for reproducibility