How to Load CSV: A Practical Step-by-Step Guide for Analysts

Learn practical methods to load CSV data into Python, SQL, or Excel with correct encoding, delimiters, and headers. Includes step-by-step instructions, tips, and troubleshooting for reliable CSV imports.

You will learn how to load a CSV file into common targets such as Python/pandas, SQL, or Excel, with reliable encoding, correct delimiters, and header handling. The guide covers choosing the right tool, preparing your file, performing the load, validating results, and troubleshooting common errors. Expect practical tips and safe defaults.

What loading a CSV means in practice

Loading a CSV (Comma-Separated Values) file means converting a plain-text table into a structured data object in memory or into a persistent data store. For data analysts, developers, and business users, CSV loading is a foundational task that directly affects downstream analysis, reporting, and automation. A well-executed load preserves headers, respects the intended data types, and handles missing values gracefully. According to MyDataTables, consistent encoding and explicit delimiter choices are foundational for reliable CSV loading, especially when data moves across tools like Python, SQL, or spreadsheet apps. In everyday workflows, a CSV is often the single source of truth for tabular data, so getting the load right saves time and prevents subtle quality issues later in the pipeline. This section sets the stage by clarifying what you’re loading, where you’re loading it, and how you’ll verify success. The goal is not just to import data, but to import it correctly and reproducibly across environments.

Environment choices: Python, SQL, Excel, and more

CSV loading happens in many ecosystems. Your choice depends on where the data will be used next, the size of the file, and the required validation. Common targets include Python with pandas for quick exploration and transformation, relational databases like PostgreSQL or MySQL for robust storage and SQL-based queries, and Excel or Google Sheets for ad-hoc analysis or sharing with non-programmers. Each environment has its own strengths: Python/pandas excels at data cleaning and transformation; SQL databases shine for performance and structured querying; Excel provides accessibility and familiar tooling. When planning, consider: data size, required validation checks, downstream tools, and how you'll handle encoding and delimiters. MyDataTables notes that aligning the import settings with downstream expectations reduces rework and errors later in the data pipeline. Decide early which target you’ll use and tailor your load steps accordingly.

File preparation: encoding, delimiter, headers, and line endings

Before loading, inspect the CSV to identify encoding (for example UTF-8 or Latin-1), the delimiter (comma, semicolon, or tab), and whether there is a header row. Line endings may be CRLF (Windows) or LF (Unix). A mismatch between file format and importer settings often causes misaligned columns or corrupted characters. If you know the origin of the data, confirm whether a Byte Order Mark (BOM) is present and decide if you need to strip it. A robust plan also includes validating a small sample first to catch common issues like extra delimiters or quoted fields that contain separators. Recording these decisions helps with reproducibility and audits, which aligns with best practices in data management.
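
The inspection described above can be partly automated. The following is a minimal sketch using only the Python standard library; the function name inspect_csv, the 64 KB sample size, and the demo file are illustrative assumptions, and the results are guesses to confirm by eye rather than guarantees.

```python
import codecs
import csv
import os
import tempfile


def inspect_csv(path, sample_bytes=64 * 1024):
    """Guess encoding, BOM, line ending, and delimiter from the start of a file."""
    with open(path, "rb") as f:
        raw = f.read(sample_bytes)

    has_bom = raw.startswith(codecs.BOM_UTF8)

    # An incremental decoder tolerates a multi-byte character cut off at the
    # end of the sample, but still raises on bytes that are not valid UTF-8.
    try:
        text = codecs.getincrementaldecoder("utf-8-sig")().decode(raw)
        encoding = "utf-8-sig" if has_bom else "utf-8"
    except UnicodeDecodeError:
        # Latin-1 decodes any byte sequence, so treat this as a guess only.
        text = raw.decode("latin-1")
        encoding = "latin-1 (unverified fallback)"

    line_ending = "CRLF" if b"\r\n" in raw else "LF" if b"\n" in raw else "unknown"

    try:
        delimiter = csv.Sniffer().sniff(text, delimiters=",;\t").delimiter
    except csv.Error:
        delimiter = None  # the sniffer could not decide; inspect the file by hand

    return {"encoding": encoding, "has_bom": has_bom,
            "line_ending": line_ending, "delimiter": delimiter}


# Demo on a throwaway file: UTF-8 with BOM, semicolons, Windows line endings.
demo_path = os.path.join(tempfile.mkdtemp(), "sample.csv")
with open(demo_path, "wb") as f:
    f.write(codecs.BOM_UTF8 + b"id;name;amount\r\n1;Ana;10.5\r\n2;Bo;7.25\r\n")

report = inspect_csv(demo_path)
print(report)
```

Saving the returned dictionary next to the load script is one simple way to record the decisions for later audits.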

Loading CSV with Python and pandas

To load a CSV in Python, pandas offers a simple, flexible interface that handles most common formats. Start with a direct read using read_csv, then refine options as your data demands. Example: pd.read_csv('data.csv', encoding='utf-8', sep=',', header=0). If your data contains a BOM, use encoding='utf-8-sig' so the BOM is stripped instead of being read into the first column name. After the load, inspect the dataframe with df.head() and df.info() to confirm types and non-null counts. If you anticipate mixed data types, pass the dtype parameter to fix column types up front. This can improve performance and prevents automatic type inference from producing surprises, such as leading zeros being dropped from identifier columns.
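
As an illustration of the options above, here is a small sketch that reads an in-memory sample instead of data.csv. The sample content and the column names (id, zip, amount) are made up for the example; the BOM and the zero-padded zip code are included on purpose.

```python
import io

import pandas as pd

# Hypothetical sample standing in for data.csv.
raw = "\ufeffid,zip,amount\n1,02134,10.5\n2,10001,\n".encode("utf-8")

df = pd.read_csv(
    io.BytesIO(raw),
    encoding="utf-8-sig",  # strips a leading BOM if one is present
    sep=",",
    header=0,
    # Explicit types: without this, the zip column would be inferred as an
    # integer and "02134" would lose its leading zero.
    dtype={"id": "int64", "zip": "string", "amount": "float64"},
)

print(df.head())
df.info()
```

The empty amount in the second row is loaded as a missing value, which df.info() shows as a lower non-null count for that column.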

Loading CSV into SQL databases

SQL databases often require a dedicated import step. For PostgreSQL, the COPY command is a fast, server-side load: COPY public.my_table(col1, col2, col3) FROM '/path/data.csv' WITH (FORMAT csv, HEADER true, DELIMITER ','); Because COPY ... FROM reads the file on the database server, the path must be visible to the server process; for a file on your own machine, psql's \copy meta-command accepts the same options and reads the file client-side. For MySQL, the LOAD DATA INFILE command is a common alternative: LOAD DATA INFILE '/path/data.csv' INTO TABLE my_table FIELDS TERMINATED BY ',' ENCLOSED BY '"' LINES TERMINATED BY '\n' IGNORE 1 ROWS; If the file uses Windows line endings, use LINES TERMINATED BY '\r\n' instead. Ensure the database user has access to the file path and that the server can read the file; in MySQL the path must also be permitted by the secure_file_priv setting. When loading into SQL, verify constraints and indexes are in place to enforce data quality during the load. MyDataTables emphasizes validating schema compatibility and ensuring the data types map correctly to table definitions to minimize post-load casting.
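
COPY and LOAD DATA INFILE both need a running database server, so the sketch below swaps in Python's built-in sqlite3 module to show the same pattern in a runnable form: create the table with explicit types, skip the header row, convert each field to the column type, and insert everything in one transaction. This is a stand-in, not the PostgreSQL or MySQL bulk loader, and the sample rows are invented.

```python
import csv
import io
import sqlite3

# Hypothetical file content; the second row has a comma inside a quoted field.
csv_text = 'col1,col2,col3\n1,alpha,2.5\n2,"beta, gamma",3.0\n'

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE my_table (col1 INTEGER NOT NULL, col2 TEXT NOT NULL, col3 REAL)"
)

reader = csv.reader(io.StringIO(csv_text))
header = next(reader)  # plays the role of HEADER true / IGNORE 1 ROWS
if header != ["col1", "col2", "col3"]:
    raise ValueError(f"unexpected header: {header}")

# Convert text fields to the column types before they reach the table.
rows = ((int(c1), c2, float(c3)) for c1, c2, c3 in reader)

# One transaction: either every row is committed or none is.
with conn:
    conn.executemany(
        "INSERT INTO my_table (col1, col2, col3) VALUES (?, ?, ?)", rows
    )

row_count = conn.execute("SELECT COUNT(*) FROM my_table").fetchone()[0]
print(row_count)
```

Checking the header against the expected column list before inserting is a cheap way to catch a schema mismatch early.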

Validating the loaded data

Validation is essential after any load. Start with a quick shape check: df.shape or SELECT COUNT(*) FROM table to confirm the row count matches expectations. Inspect a few rows with head or LIMIT to verify headers and data alignment. Check for nulls in required fields, out-of-range values, and unexpected data types. Create a small set of sanity checks or a light data quality script to confirm that critical columns conform to expected ranges. For large datasets, run sampling-based validation to save time while still catching obvious anomalies. Documentation of what you validated helps when you audit the process later.
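
A light data quality script along these lines might look like the sketch below. The function name validate, its parameters, and the sample columns are assumptions chosen for illustration; adapt the checks to your own required fields and ranges.

```python
import pandas as pd


def validate(df, expected_rows, required, ranges):
    """Return a list of problems; an empty list means every check passed."""
    problems = []

    if len(df) != expected_rows:
        problems.append(f"row count {len(df)} != expected {expected_rows}")

    for col in required:
        if col not in df.columns:
            problems.append(f"missing column {col!r}")
        elif df[col].isna().any():
            nulls = int(df[col].isna().sum())
            problems.append(f"{nulls} nulls in required column {col!r}")

    for col, (low, high) in ranges.items():
        if col in df.columns:
            # Nulls are reported separately above, so drop them here.
            values = df[col].dropna()
            bad = int((~values.between(low, high)).sum())
            if bad:
                problems.append(f"{bad} values in {col!r} outside [{low}, {high}]")

    return problems


# Hypothetical loaded data with one null and one out-of-range amount.
df = pd.DataFrame({"id": [1, 2, 3], "amount": [10.5, None, -4.0]})
problems = validate(
    df, expected_rows=3, required=["id", "amount"], ranges={"amount": (0, 1000)}
)
for problem in problems:
    print(problem)
```

Returning a list instead of raising on the first failure lets you see every issue in a single run and keep the output as a record of what was validated.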

Handling large CSV files efficiently

Large CSVs can exhaust memory if loaded in one go. Strategies include processing in chunks, streaming rows, or using databases as the final sink. In Python, set chunksize in read_csv or iterate over chunks to perform incremental cleaning and writes. In SQL, consider staging the data in a temp table, then bulk move to the final table with minimal locking. If you use Excel, consider splitting the file or using Power Query to load portions. Prioritize streaming and chunking for performance and reliability, and monitor memory usage during the import. MyDataTables highlights chunked loading as a practical safeguard for robust pipelines.
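
The chunked approach can be sketched as follows, with a tiny in-memory CSV and an in-memory SQLite database standing in for a large file and a real staging table. The table name staging, the chunk size of 4, and the cleaning step are illustrative assumptions.

```python
import io
import sqlite3

import pandas as pd

# Ten data rows stand in for a file too large to load at once.
csv_text = "id,amount\n" + "".join(f"{i},{i * 1.5}\n" for i in range(10))

conn = sqlite3.connect(":memory:")
chunk_sizes = []

# With chunksize set, read_csv yields DataFrames of at most that many rows,
# so only one chunk is held in memory at a time.
for chunk in pd.read_csv(io.StringIO(csv_text), chunksize=4):
    chunk = chunk.dropna(subset=["amount"])  # per-chunk cleaning step
    chunk.to_sql("staging", conn, if_exists="append", index=False)
    chunk_sizes.append(len(chunk))

loaded = conn.execute("SELECT COUNT(*) FROM staging").fetchone()[0]
print(chunk_sizes, loaded)
```

In practice the chunk size is a trade-off: larger chunks mean fewer writes, smaller chunks mean a lower memory peak.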

Common pitfalls and how to avoid them

Deliberate planning helps avoid common CSV loading pitfalls. Common problems include mismatched headers, inconsistent quoting, stray delimiters inside fields, and broken encodings. Always test with a representative sample, verify headers against the destination schema, and set the correct delimiter and encoding. If you encounter column misalignment, check for embedded delimiters within quoted fields and adjust quote handling. Keep a small, reproducible recipe for load steps and reference the data dictionary to ensure column semantics stay intact across environments. Finally, log any deviations from the expected format to support future debugging and audits.
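
To make the quoting pitfall concrete, the sketch below compares a naive split with Python's csv module on a line that has a delimiter inside a quoted field, then flags rows whose field count differs from the header. The helper name misaligned_rows and the sample data are made up for the example.

```python
import csv
import io

line = '42,"Smith, Jane","She said ""hi"""\n'

# Splitting on the delimiter ignores quoting and breaks the name in two.
naive = line.rstrip("\n").split(",")

# A real CSV parser keeps the quoted field whole and unescapes doubled quotes.
parsed = next(csv.reader(io.StringIO(line)))

print(len(naive), naive)
print(len(parsed), parsed)


def misaligned_rows(text, delimiter=","):
    """Return (line number, field count) for rows that do not match the header."""
    reader = csv.reader(io.StringIO(text), delimiter=delimiter)
    header = next(reader)
    return [
        (reader.line_num, len(row)) for row in reader if len(row) != len(header)
    ]


# The third line has a stray, unquoted delimiter.
issues = misaligned_rows("a,b\n1,2\n3,4,5\n")
print(issues)
```

Writing the returned line numbers to a log gives you the record of format deviations mentioned above.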

Tools & Materials

- CSV file (accessible path or URL to the CSV file)

- Loading environment (choose Python with pandas, a SQL client, or Excel depending on the target)

- Encoding and delimiter clarity (know the file encoding, e.g., UTF-8, and the delimiter used: comma, semicolon, or tab)

- Test data subset (a small sample to validate the import before the full load)

- Validation scripts (simple checks for shape, nulls, and basic ranges)

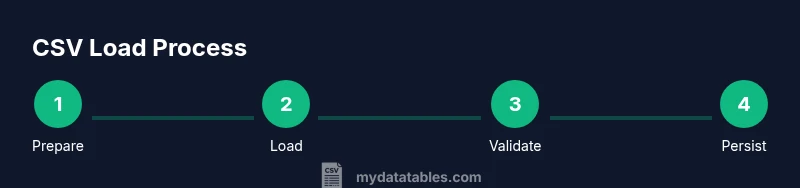

Steps

Estimated time: 60-90 minutes

- 1

Verify prerequisites and file access

Confirm you can read the CSV file from your working directory or the specified path. Check permissions and ensure the file isn't locked by another process. This prevents cryptic I/O errors later.

Tip: Use a quick directory listing to confirm the exact path before loading.

- 2

Choose loading method and target

Decide whether to load into Python data frames, a SQL table, or a spreadsheet. This determines the libraries, functions, and parameters you will use. Align the choice with downstream analysis or reporting needs.

Tip: If you plan to do data cleaning later, start with Python/pandas for flexibility.

- 3

Load the file with correct encoding and delimiter

Import using the proper encoding and separator. If you encounter errors, adjust encoding to utf-8-sig for BOMs or switch to the appropriate delimiter, and verify the header row is recognized.

Tip: Test with a small subset first to catch import-time issues.

- 4

Inspect the loaded data

Examine the shape, data types, and a few sample rows. Confirm headers map to the intended fields and that no columns are corrupted by misinterpretation of delimiters or quotes.

Tip: Use df.info() and df.head() to get a quick snapshot.

- 5

Handle large files efficiently

If the CSV is large, use chunked loading or streaming to avoid memory exhaustion. Write incremental results to the destination to maintain responsiveness.

Tip: Set a reasonable chunksize and monitor memory during the process.

- 6

Persist or export the loaded data

Store the data into the final destination, whether it's a dataframe, a SQL table, or a cleaned CSV. Validate post-load and document the steps for reproducibility.

Tip: Keep a record of the exact parameters used for future audits.

People Also Ask

What is the simplest method to load a CSV into Python?

The simplest method is to use pandas read_csv, which handles common formats and returns a dataframe. Start with encoding='utf-8', sep=',', and header=0, then inspect the dataframe with head() and info().

Use pandas read_csv to load the CSV, then inspect the dataframe with head and info.

How do I handle different delimiters like semicolons?

Pass the correct delimiter to read_csv with sep=';' for semicolons or sep='\t' for tabs. If your data uses quotes, ensure quoting is handled by the parser.

Specify the delimiter in read_csv to match your file's format.

Do I need to remove the header row before loading?

If the file has a header row, keep header=0. If the first row is data, use header=None and provide column names via names=[...].

Keep the header when present; otherwise provide column names manually.
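
The delimiter and header answers above can be combined in one short sketch. The sample rows and the column names passed to names are invented for illustration.

```python
import io

import pandas as pd

# Hypothetical semicolon-delimited file with no header row.
headerless = "1;Ana;10.5\n2;Bo;7.25\n"

# header=None keeps the first line as data; names supplies the column labels.
df = pd.read_csv(
    io.StringIO(headerless), sep=";", header=None, names=["id", "name", "amount"]
)

# With the default header handling, the first data row is consumed as
# column names and one record is lost.
wrong = pd.read_csv(io.StringIO(headerless), sep=";")

print(df)
print(list(wrong.columns), len(wrong))
```

Comparing the row count against the number of lines in the file is a quick way to notice when a data row has been swallowed as a header.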

How can I load huge CSV files without exhausting memory?

Use chunksize in pandas or a streaming approach, and write results incrementally to the destination to avoid loading everything at once.

Load in chunks to manage memory for large files.

What encoding issues should I watch for with CSV files?

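UTF-8 is the most common encoding. If the file starts with a BOM, read it with utf-8-sig so the BOM is stripped rather than attached to the first column name. Latin-1 is sometimes used for older data. Always specify the encoding explicitly in your importer.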

Check encoding and handle BOMs when needed.
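
To show what these encoding problems look like in practice, the sketch below decodes the same bytes three ways using only the standard library. The sample content is made up.

```python
import codecs
import csv
import io

# Hypothetical UTF-8 file with a BOM and non-ASCII characters.
raw = codecs.BOM_UTF8 + "name,city\nZo\u00eb,K\u00f6ln\n".encode("utf-8")

# Plain utf-8 keeps the BOM as an invisible character in the first header.
with_bom = next(csv.reader(io.StringIO(raw.decode("utf-8"))))

# utf-8-sig removes it.
clean = next(csv.reader(io.StringIO(raw.decode("utf-8-sig"))))

# Decoding UTF-8 bytes as Latin-1 never fails, but it garbles every
# non-ASCII character.
garbled = raw.decode("latin-1")

print(repr(with_bom[0]))
print(clean)
print(repr(garbled))
```

A header that prints correctly but fails an equality check against the expected name is a typical sign of a leftover BOM.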

How do I verify that the import was successful?

Compare row counts, inspect a sample of rows, and validate column data types. Run a small quality check script to catch obvious mismatches.

Count rows and validate sample data after loading.

Can I reuse the same load steps for different CSVs?

Yes. Create a reusable script or notebook cell with parameterized path, delimiter, and encoding. This ensures consistency and reduces errors when handling multiple files.

Create reusable load steps for consistency.
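
One possible shape for such a reusable step is sketched below. The LoadConfig class, the load_csv function, and the demo files are hypothetical names introduced for this example; the function prints the exact parameters used so each load leaves a record.

```python
import json
import os
import tempfile
from dataclasses import asdict, dataclass
from typing import Optional

import pandas as pd


@dataclass(frozen=True)
class LoadConfig:
    """Hypothetical container for the settings that vary between files."""
    path: str
    sep: str = ","
    encoding: str = "utf-8"
    header: Optional[int] = 0


def load_csv(config):
    """Load one CSV and print the parameters used, for later audits."""
    df = pd.read_csv(
        config.path, sep=config.sep, encoding=config.encoding, header=config.header
    )
    record = {
        **asdict(config),
        "rows": len(df),
        "columns": [str(c) for c in df.columns],
    }
    print(json.dumps(record))
    return df


# Demo: two throwaway files with different delimiters and encodings.
tmp = tempfile.mkdtemp()
comma_path = os.path.join(tmp, "a.csv")
semi_path = os.path.join(tmp, "b.csv")
with open(comma_path, "w", encoding="utf-8", newline="") as f:
    f.write("id,amount\n1,10.5\n")
with open(semi_path, "w", encoding="latin-1", newline="") as f:
    f.write("id;city\n1;K\u00f6ln\n")

df_a = load_csv(LoadConfig(path=comma_path))
df_b = load_csv(LoadConfig(path=semi_path, sep=";", encoding="latin-1"))
```

Keeping the settings in a single object means a new file only needs a new LoadConfig, not a new copy of the loading code.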

Main Points

- Define the target environment first

- Verify encoding and delimiter explicitly

- Use a small sample to validate the import

- Check headers align with the destination schema

- Document the load steps for reproducibility