Plain Text to CSV: A Practical Conversion Guide for 2026

Learn to convert plain text to CSV with robust delimiter handling, quoting, and validation. This comprehensive guide covers manual, semi-automatic, and automated workflows to produce reliable, import-ready CSV files for spreadsheets, databases, and analytics.

Goal: convert plain text to CSV. This guide from MyDataTables walks through a repeatable workflow for turning unstructured lines into a clean CSV file: detecting the delimiter, handling quotes, trimming whitespace, and validating row integrity so the result imports reliably into spreadsheets, databases, or BI tools. The methods cover both quick ad-hoc conversions and large-scale pipelines.

What plain text to CSV means

According to MyDataTables, plain text to CSV conversion is the process of turning plain, human-readable lines into a structured tabular format that machines can consume. In many workplaces, data arrives as log files, exported reports, or free-form notes. These sources often do not follow a fixed schema, which makes manual copy-paste error-prone.

The goal of the conversion is a stable schema: a consistent delimiter, a clear header row, and well-defined escaping rules, so that each line yields the same number of fields. The input is text only, and the output follows CSV conventions (the usual reference is RFC 4180): a delimiter separates fields, and quotes preserve embedded separators. Done well, the resulting CSV is portable across spreadsheets, SQL databases, and data visualization platforms, and it supports reliable filtering, sorting, and aggregation. The techniques in this guide cover both quick, one-off conversions and scalable pipelines.


Common formats and pitfalls

CSV is human-friendly but machine-understandable only when the delimiter, quotes, and line endings are consistent. Common formats include comma-, tab-, semicolon-, or pipe-delimited files. Pitfalls include: fields containing separators that aren’t properly quoted, multi-line fields, trailing delimiters, and inconsistent header rows. The “plain text to csv” task becomes trickier when input uses irregular indentation, mixed encodings, or inconsistent line breaks (CRLF vs. LF). MyDataTables highlights that the most reliable conversions start with a known, documented delimiter and a plan for escaping embedded separators, especially in fields like addresses, descriptions, or notes. To minimize errors, validate a representative sample before scaling up, and keep a backup of the original text. If you must preserve special characters, UTF-8 encoding is usually the safest choice for cross-tool compatibility.
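To make the first pitfall concrete, here is a minimal sketch in Python (the language is our choice for illustration; the line of data is borrowed from the example later in this guide). It compares a naive split on commas with the standard library's `csv.reader`, which honors quotes.

```python
import csv
import io

line = '"Smith, John",Engineer,"New York, NY"'

# Naive splitting treats every comma as a separator, including quoted ones.
naive_fields = line.split(",")

# A CSV-aware reader honors the quotes and keeps embedded commas intact.
parsed_fields = next(csv.reader(io.StringIO(line)))

print(len(naive_fields))  # 5
print(parsed_fields)      # ['Smith, John', 'Engineer', 'New York, NY']
```

The naive split reports five fields for a three-column row, which is exactly the kind of misalignment that shows up later as shifted columns in a spreadsheet.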

Preparation: plan before converting

A successful plain text to CSV conversion begins with a plan. Start by inspecting a small sample of the input; identify the most likely delimiter (comma, tab, semicolon, or something else) and confirm whether a header row exists. Check for quotes around fields that contain separators, and decide how to treat empty fields. Establish a target schema: number of columns, column names, and the expected data types. Decide on an escaping convention (e.g., double quotes to wrap text with embedded delimiters). Document any anomalies you find, such as inconsistent row lengths or irregular whitespace, so you can implement targeted fixes later. This preparation saves time and reduces the risk of producing a malformed CSV during the conversion.
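One way to inspect a sample is a small counting heuristic, sketched below. The function name `guess_delimiter` and the candidate list are illustrative assumptions, not part of any library. The sketch counts raw characters, so quoted fields that contain the delimiter can mislead it; treat the result as a first guess to confirm by eye. Python's standard library also offers `csv.Sniffer` as an alternative.

```python
CANDIDATES = [",", "\t", ";", "|"]  # assumed set of likely separators

def guess_delimiter(sample_lines, candidates=CANDIDATES):
    """Return the candidate that occurs the same nonzero number of
    times on every non-blank line, or None if no candidate qualifies."""
    best, best_count = None, 0
    for delim in candidates:
        counts = {line.count(delim) for line in sample_lines if line.strip()}
        if len(counts) == 1:           # same count on every line
            (count,) = counts
            if count > best_count:     # prefer the most frequent consistent one
                best, best_count = delim, count
    return best

sample = [
    "id|name|city",
    "1|Ada Lovelace|London",
    "2|Grace Hopper|New York",
]
print(repr(guess_delimiter(sample)))  # '|'
```

A `None` result is itself useful: it signals that the sample needs pre-cleaning or a manual decision before any conversion is attempted.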

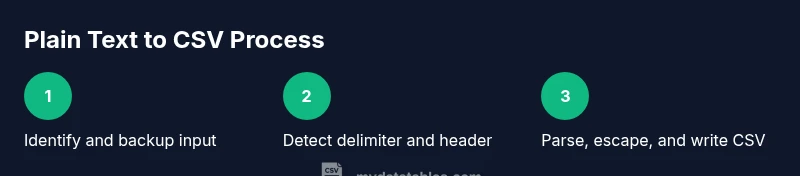

Practical workflows: manual, semi-automatic, and automated

You can approach plain text to csv conversion in three broad ways: manual, semi-automatic, or fully automated. Manual works for small datasets or one-offs: use a capable text editor or a spreadsheet's import feature, and fine-tune delimiter settings. Semi-automatic combines a quick script with manual verification—great for moderate-sized datasets. Automated workflows rely on scripts (Python, awk, or shell utilities) or dedicated ETL tools, enabling repeatable, scalable conversions. MyDataTables recommends starting with a small, well-defined template if you plan to automate, so you can re-use the logic for larger batches. Each approach has trade-offs in speed, accuracy, and maintainability, so choose based on volume, variability, and your comfort with scripting. Regardless of method, always test with edge cases like fields containing commas, quotes, or newline characters, and verify outputs against your target schema.
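As a rough sketch of the automated route, the following assumes a pipe-delimited input in which the pipe never appears inside a field (an assumption; real inputs may need smarter parsing). The function name `convert_delimited_text` is hypothetical. It splits each line, trims the fields, and lets `csv.writer` decide where quoting is needed.

```python
import csv
import io

def convert_delimited_text(text, in_delim="|"):
    """Split each non-blank line on in_delim, trim fields, return CSV text."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    for line in text.splitlines():
        if not line.strip():
            continue  # skip blank lines
        writer.writerow([field.strip() for field in line.split(in_delim)])
    return out.getvalue()

raw = "name | role | location\nSmith, John | Engineer | New York, NY\n"
csv_text = convert_delimited_text(raw)
print(csv_text)
# name,role,location
# "Smith, John",Engineer,"New York, NY"
```

Delegating the output to a CSV library means fields with embedded commas are quoted automatically, so the template stays small enough to reuse on larger batches.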

Validation and quality checks

Validation is essential to ensure a reliable plain text to CSV conversion. After producing the CSV, verify that every row contains the same number of fields and that headers align with data columns. Use a small validator that parses each row with a CSV-aware reader, counts the resulting fields, and flags anomalies; counting raw separators per line gives false alarms for quoted fields that contain the delimiter. Check for proper encoding (UTF-8 is preferred) and confirm that quotes are balanced. It helps to load the CSV into a trusted viewer (Excel, Google Sheets, or a database import tool) and perform a quick sanity check: sorts, filters, and a few sample queries should return sensible results. MyDataTables emphasizes creating automated tests for common edge cases, so future conversions remain predictable. If discrepancies appear, revisit the input sample, adjust delimiter handling, and re-run validation until confidence is high.
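A field-count check of this kind might look like the sketch below. The helper name `find_bad_rows` is an assumption for illustration. It uses `csv.reader`, so quoted fields with embedded delimiters are counted as a single field.

```python
import csv
import io

def find_bad_rows(csv_text, delimiter=","):
    """Return (line_number, field_count) for each row whose width
    differs from the header row. Blank lines count as zero fields."""
    reader = csv.reader(io.StringIO(csv_text, newline=""), delimiter=delimiter)
    header = next(reader, None)
    if header is None:
        return []  # empty input: nothing to validate
    expected = len(header)
    bad = []
    for row in reader:
        if len(row) != expected:
            bad.append((reader.line_num, len(row)))
    return bad

sample = (
    'Name,Role,Location\n'
    '"Smith, John",Engineer,"New York, NY"\n'
    'Doe,Analyst\n'
)
print(find_bad_rows(sample))  # [(3, 2)]
```

The second line passes even though it contains four commas, because two of them sit inside quotes; only the genuinely short third line is flagged for review.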

Real-world example: sample data to CSV

Consider a small plain text sample where fields use a comma as a delimiter, and fields that contain embedded commas (or other punctuation, such as a semicolon) are wrapped in double quotes. Input sample:

    Name,Role,Location
    "Smith, John",Engineer,"New York, NY"
    "Doe; Jane","Data Scientist","San Francisco, CA"

Converted CSV output:

    Name,Role,Location
    "Smith, John",Engineer,"New York, NY"
    "Doe; Jane","Data Scientist","San Francisco, CA"

This example shows how quotes are used to escape embedded commas and how a consistent header row helps downstream tools parse data predictably. When dealing with larger datasets, you would generalize this logic into a script or ETL step, test with a representative sample, and then apply it to the full dataset.
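To see how the header row helps downstream code, the sketch below loads the sample with Python's `csv.DictReader`, assuming one record per line. Each row becomes a dictionary keyed by the header names, so fields can be addressed by name rather than by position.

```python
import csv
import io

sample = (
    'Name,Role,Location\n'
    '"Smith, John",Engineer,"New York, NY"\n'
    '"Doe; Jane","Data Scientist","San Francisco, CA"\n'
)

# DictReader takes the first row as field names.
records = list(csv.DictReader(io.StringIO(sample, newline="")))
for record in records:
    print(record["Name"], "|", record["Location"])
# Smith, John | New York, NY
# Doe; Jane | San Francisco, CA
```

The quotes are consumed by the parser and do not appear in the values, while the embedded commas and the semicolon survive as ordinary characters.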

Tools & Materials

- Plain text data file (input): Back up the original file before starting the conversion.

- Delimiter detection tool or script: Automates identifying whether the input uses comma, tab, semicolon, or another separator.

- CSV editor/viewer: Excel, Google Sheets, or a text editor with CSV support for previewing results.

- Scripting environment (Python, awk, sed) or an advanced text editor: Use if automating; Python’s csv module is a common approach.

- Backup and version control: Maintain versions of inputs/outputs to guard against errors.

Steps

Estimated time: 45-90 minutes

1. Identify input file and create a backup

   Locate the plain text source you will convert and create a safe copy. This minimizes the risk of data loss during experimentation and fixes.

   Tip: Always keep the original untouched until you confirm the output is correct.

2. Inspect the sample to determine the delimiter

   Scan several lines to identify the most frequent separator (comma, tab, semicolon, etc.). Note any consistency issues.

   Tip: If multiple delimiters appear, prefer a delimiter that appears cleanly across data or plan to pre-clean first.

3. Check for a header row

   Decide whether the first line is a header. A header defines column names and guides downstream processing.

   Tip: If headers are missing, create a header row that clearly identifies each column.

4. Normalize encoding and line endings

   Ensure input uses a consistent encoding (prefer UTF-8) and uniform line endings (LF or CRLF).

   Tip: Inconsistent encoding or mixed line endings often causes misinterpretation of data.

5. Choose manual, semi-automatic, or automated method

   Select a method based on dataset size, variability, and your comfort with scripts. Start small to validate the approach.

   Tip: Begin with a simple template if you plan to automate later.

6. Create a conversion template or script

   If automating, write a template or script that reads the input, splits by delimiter, handles quotes, and writes CSV with proper escaping.

   Tip: Use a CSV library or module to handle edge cases like embedded quotes.

7. Parse lines into fields with proper escaping

   Implement quoting rules so that fields containing delimiters are wrapped in quotes and embedded quotes are escaped.

   Tip: Double-quote escaping is a common standard.

8. Normalize and trim field values

   Remove extraneous whitespace and standardize dates, numbers, and textual data.

   Tip: Keep a deterministic approach to trimming to avoid data loss.

9. Validate column counts on every row

   Ensure each line yields the same number of fields as the header to prevent misalignment.

   Tip: Flag rows with missing or extra columns for manual review.

10. Save the result as UTF-8 CSV

    Save the final file with a .csv extension and UTF-8 encoding to maximize compatibility.

    Tip: Avoid BOM in UTF-8 when sharing with certain tools.

11. Preview in a CSV viewer

    Open the file in Excel, Sheets, or a CSV viewer to verify structure and content.

    Tip: Spot-check a few rows with filters or sorts.

12. Document and iterate

    Record what worked, what didn’t, and any special cases for future conversions.

    Tip: A short README or changelog helps maintain repeatability.
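The normalization and trimming steps above (encoding, line endings, whitespace) can be sketched in a few lines of Python. The helper names `normalize_text` and `trim_fields` are illustrative assumptions. The sketch assumes the input is valid UTF-8 and that no field legitimately contains a line break, since the blanket replacement would rewrite those too.

```python
def normalize_text(raw_bytes):
    """Decode UTF-8 (dropping a leading BOM if present) and
    convert CRLF and bare CR line endings to LF."""
    text = raw_bytes.decode("utf-8-sig")  # 'utf-8-sig' strips a BOM when one exists
    return text.replace("\r\n", "\n").replace("\r", "\n")

def trim_fields(fields):
    """Strip leading and trailing whitespace; inner spacing is kept."""
    return [field.strip() for field in fields]

raw = b"\xef\xbb\xbfid;name\r\n1; Ada Lovelace \r2;Grace Hopper\n"
clean = normalize_text(raw)
print(repr(clean))  # 'id;name\n1; Ada Lovelace \n2;Grace Hopper\n'
print(trim_fields(clean.splitlines()[1].split(";")))  # ['1', 'Ada Lovelace']
```

When writing the final file from Python, opening it with `open(path, "w", encoding="utf-8", newline="")` produces UTF-8 without a BOM and leaves line endings under the control of the csv writer.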

People Also Ask

What is plain text to csv?

Plain text to csv is the process of transforming unstructured text lines into a structured comma- or delimiter-separated values file. The output follows the CSV standard so it can be imported into spreadsheets, databases, or data tools.

Plain text to CSV turns text lines into a table-like file that helps you analyze data easily.

What delimiter should I use for CSV files?

Use a delimiter that does not appear in your data, or ensure embedded delimiters are quoted. The most common choice is a comma, but tab, semicolon, or pipe can be appropriate depending on the dataset and regional conventions.

Pick a delimiter that your data doesn’t contain, or quote fields that include the delimiter.

How do I handle quotes and embedded commas in fields?

Wrap fields containing delimiters in quotes and escape any quote characters inside such fields (commonly by doubling them). Consistent quoting prevents misinterpretation when importing to CSV readers.

If a field has a comma or quote, enclose it in quotes and double internal quotes.
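The doubling rule is easiest to see in output. In this small sketch, Python's `csv.writer` (with its default minimal quoting) wraps only the field that needs it and doubles the internal quote characters; the sample values are made up for illustration.

```python
import csv
import io

out = io.StringIO()
writer = csv.writer(out, lineterminator="\n")  # default quoting: csv.QUOTE_MINIMAL
writer.writerow(["id", "note"])
writer.writerow([1, 'She said "hello", then left'])
writer.writerow([2, "plain text"])
written = out.getvalue()
print(written)
# id,note
# 1,"She said ""hello"", then left"
# 2,plain text

# Reading the text back restores the original value.
round_trip = list(csv.reader(io.StringIO(written, newline="")))
print(round_trip[1][1])  # She said "hello", then left
```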

Can I automate plain text to csv conversions?

Yes. Automation is suitable for repeated conversions or large datasets. Use a script or ETL tool that reads input, applies delimiter rules, escapes quotes, and writes a UTF-8 CSV.

Automation is great for big jobs; you can script the process for reliability.

What if rows have missing fields after conversion?

Missing fields usually indicate inconsistent input or delimiter issues. Revisit the input sample, adjust the delimiter logic, and re-run the conversion with validation to catch all rows.

If rows don’t line up, fix the source or adjust parsing before re-running.

Is UTF-8 encoding important for CSV files?

UTF-8 is widely recommended for CSV files because it preserves characters from multiple languages and avoids misinterpretation of special symbols during import.

UTF-8 keeps characters from different languages intact in CSV data.


Main Points

- Plan delimiter and header before converting

- Choose manual, semi-automatic, or automated approach based on data size

- Validate CSV with a viewer and quick checks

- Handle quotes and embedded delimiters correctly

- Maintain encoding to prevent data corruption