How to Remove Duplicates from a CSV: A Practical Guide

Learn practical, step-by-step methods to remove duplicates from CSV files. From Excel and Google Sheets to Python with pandas and CLI tools, this guide helps data analysts and developers ensure accurate results with reliable deduplication workflows.

Goal: remove duplicates from a CSV file to ensure clean data for analysis. This guide shows practical methods using spreadsheet apps, Python, and CLI tools. According to MyDataTables, duplicates are a common data quality issue, and a repeatable deduplication workflow saves time and prevents biased results. You’ll see concrete steps, practical tips, and checks to verify results.

What removing duplicates from a CSV means in practice

In data analysis, removing duplicates from a CSV means identifying rows that share the same values across chosen fields and keeping a single representative row. The process depends on which columns define similarity: for some datasets, a full-row match is required; for others, a match on a subset of columns is enough to count as a duplicate. This article uses practical examples to show how to implement deduplication in spreadsheets, Python, and the command line. MyDataTables notes that removing duplicates early improves the accuracy of summaries and joins. You will learn how to define duplicates, choose a method aligned to your workflow, and verify results before exporting a clean CSV for downstream analysis.

Why duplicates are a common headache in CSV data

Duplicates often creep into CSV files through merges, exports from multiple sources, manual entry, or imperfect data pipelines. MyDataTables analysis shows that many raw CSV datasets contain repeated records or near-duplicates that distort counts and aggregate metrics. Understanding where duplicates originate helps you implement targeted deduplication rules, such as considering only certain key columns or applying normalization before comparison. When duplicates are left unchecked, joins, lookups, and descriptive statistics can become unreliable, especially in dashboards or BI reports.

Defining a deduplication strategy: plan before you act

Before you start removing duplicates, define what counts as a duplicate for your dataset. Decide which columns form the key, whether order matters, and whether you want to keep the first, last, or a specific occurrence. Create a backup of the original CSV so you can revert if needed. This planning stage reduces the risk of accidentally removing legitimate records and helps you reproduce the process later. A clear plan also makes it easier to document your workflow for teammates.
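One way to test a plan before deleting anything is to preview the duplicate groups and compare what each keep policy would retain. The sketch below assumes pandas is installed; the sample data and the choice of `email` as the key column are hypothetical.

```python
import pandas as pd

# Hypothetical sample data; "email" is assumed to be the key column.
df = pd.DataFrame(
    {
        "email": ["a@example.com", "b@example.com", "a@example.com"],
        "plan": ["free", "pro", "pro"],
    }
)

key_columns = ["email"]

# keep=False flags every member of a duplicate group, so you can inspect
# all candidates instead of only the rows that would be dropped.
duplicate_groups = df[df.duplicated(subset=key_columns, keep=False)]

# Compare what each policy would retain before committing to one.
kept_first = df.drop_duplicates(subset=key_columns, keep="first")
kept_last = df.drop_duplicates(subset=key_columns, keep="last")

print(duplicate_groups)
print(kept_first)
print(kept_last)
```

Here the two policies keep different `plan` values for the same email, which is the kind of difference worth settling in the plan rather than discovering after export.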

Deduplication using Excel or Google Sheets: quick wins for small to medium datasets

Spreadsheet tools offer built-in features to identify and drop duplicates. In Excel, use Data > Remove Duplicates and select the key columns that define a duplicate. Google Sheets provides a similar feature via Data > Data cleanup > Remove duplicates. These approaches are ideal for quick checks or small files, but both tools load the entire dataset into memory, which can be slow for very large CSVs, and an Excel worksheet cannot hold more than 1,048,576 rows. Both tools delete the duplicate rows in place, so always keep a backup before applying these operations.

Python and pandas: scalable, reproducible deduplication

For larger datasets or repeatable pipelines, Python with pandas is a robust choice. A typical workflow loads the CSV into a DataFrame, defines the subset of columns to check for duplicates, and calls drop_duplicates, with keep='first' or keep='last' as needed. By using pandas, you can chain additional transformations, perform normalization before deduplication, and easily integrate into data processing pipelines. This approach scales well beyond spreadsheet limits and supports complex rules.
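A minimal sketch of that workflow follows. The column names and rows are made up for illustration, and an in-memory string stands in for a file on disk; in practice you would pass a path to `pd.read_csv` and `to_csv`.

```python
import io

import pandas as pd

# Stand-in for a CSV file on disk (hypothetical data).
raw_csv = io.StringIO(
    "order_id,customer,amount\n"
    "1001,Ada,20.00\n"
    "1002,Grace,35.50\n"
    "1001,Ada,20.00\n"
    "1002,Grace,40.00\n"
)

# dtype=str keeps values exactly as written (no float or integer inference).
df = pd.read_csv(raw_csv, dtype=str)

# Full-row deduplication: only rows identical in every column are dropped.
full_row_dedup = df.drop_duplicates()

# Key-based deduplication: one row per order_id, keeping the first seen.
key_dedup = df.drop_duplicates(subset=["order_id"], keep="first")

# With no path argument, to_csv returns the CSV text instead of writing a file.
deduped_csv = key_dedup.to_csv(index=False)
print(deduped_csv)
```

Full-row deduplication leaves both `1002` rows in place because their amounts differ, while the key-based call keeps only the first one. Which result is correct depends on the rule you defined for your dataset.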

CLI approaches: csvkit, Miller, and a few awk tricks

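Command-line tools let you deduplicate from a script without opening a spreadsheet, and streaming tools only need to remember the keys seen so far rather than the whole file. csvkit does not ship a dedicated deduplication command; the usual pattern is csvsort followed by uniq, which compares full rows and assumes no field contains an embedded newline. Miller (mlr) provides flexible, fast operations on large CSVs, including a uniq verb that can group on selected fields. Simple awk one-liners can also filter duplicates when you know the field positions and no field contains a quoted delimiter. CLI workflows are especially valuable in automation scripts and CI pipelines, where reproducibility and speed matter.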
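The seen-set idea behind the common awk one-liner can also be sketched with Python's standard `csv` module, which handles quoted fields that plain text tools may split incorrectly. This is an illustrative equivalent rather than a replacement for the tools above; the function name `dedupe_rows` and the sample data are hypothetical.

```python
import csv
import io


def dedupe_rows(reader, writer, key_fields):
    """Stream rows from reader to writer, keeping the first row per key."""
    seen = set()
    kept = 0
    for row in reader:
        key = tuple(row[field] for field in key_fields)
        if key in seen:
            continue  # a row with this key was already written
        seen.add(key)
        writer.writerow(row)
        kept += 1
    return kept


# In-memory stand-ins for input and output files (hypothetical data).
source = io.StringIO("id,name\n1,Ada\n2,Grace\n1,Ada Lovelace\n")
target = io.StringIO()

reader = csv.DictReader(source)
writer = csv.DictWriter(target, fieldnames=reader.fieldnames, lineterminator="\n")
writer.writeheader()
rows_kept = dedupe_rows(reader, writer, key_fields=["id"])

print(target.getvalue())
```

Only the set of keys is held in memory, so the approach works for files larger than RAM as long as the number of distinct keys stays manageable.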

Validation: verify results and preserve data lineage

After deduplication, verify the result by comparing row counts, checking for expected unique keys, and ensuring schema consistency. Compute the number of duplicates removed and spot-check a few representative rows. If your workflow involves normalization (case, whitespace), normalize before deduplicating so that hidden duplicates are not missed. Good validation builds trust with downstream consumers and stakeholders.
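These checks can be collected into a small helper. The sketch below assumes pandas and a key-based, keep-one-row-per-key rule; the function name `validate_dedup` and the sample data are hypothetical.

```python
import pandas as pd


def validate_dedup(before, after, key_columns):
    """Check a deduplicated frame against the original and report counts."""
    if list(before.columns) != list(after.columns):
        raise ValueError("schema changed: column names or order differ")
    if after.duplicated(subset=key_columns).any():
        raise ValueError("duplicate keys remain after deduplication")
    # One surviving row is expected for each distinct key in the input.
    expected_unique = len(before.drop_duplicates(subset=key_columns))
    if len(after) != expected_unique:
        raise ValueError("row count does not match the number of unique keys")
    return {
        "rows_before": len(before),
        "rows_after": len(after),
        "duplicates_removed": len(before) - len(after),
    }


before = pd.DataFrame(
    {"id": [1, 2, 2, 3], "city": ["Oslo", "Rome", "Rome", "Lima"]}
)
after = before.drop_duplicates(subset=["id"], keep="first")
report = validate_dedup(before, after, key_columns=["id"])
print(report)
```

Automated checks complement a manual spot-check of a few rows; they do not replace it.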

Best practices for sharing deduplicated CSVs

Store the clean CSV with a versioned filename, keep the original untouched, and document the deduplication criteria. When distributing datasets, provide a short summary of the dedup rules and any anomalies found. This transparency helps teammates understand why certain rows were removed and supports reproducible results. The MyDataTables team emphasizes keeping provenance information with every data cleaning pass.

Tools & Materials

- Spreadsheet software (Excel, Google Sheets): Excel 2016+ or Google Sheets via browser; use Remove Duplicates/Data cleanup features

- Python 3.x with pandas: Install via pip: pip install pandas; ideal for large files and reproducible pipelines

- csvkit or Miller (optional): CLI tools for fast dedup on large CSVs; e.g., csvkit, mlr

- Command-line access (Terminal/PowerShell): Needed for CLI approaches; supports scripting and automation

- Text editor: Useful for quick edits to scripts or config files

- Backup storage: Always back up the original file before deduplication

- Sample CSV file: A representative dataset to practice deduplication

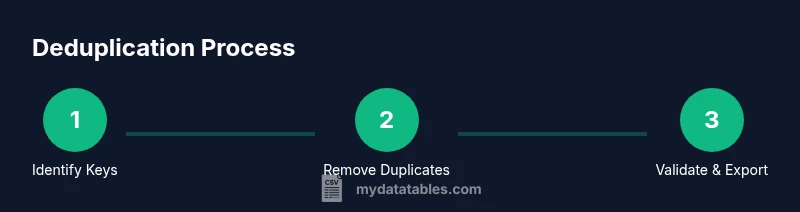

Steps

Estimated time: 30-75 minutes

1. Identify duplicate criteria

Decide which columns define a duplicate (the key) and whether to treat exact matches or allow near-duplicates after normalization. Document the rule you will apply.

Tip: Choose a stable key (e.g., id or a combination of columns) to avoid accidental data loss.

2. Back up data

Create a complete copy of the original CSV before making any changes. This protects you if you need to revert.

Tip: Store backups in a versioned folder with timestamps.

3. Normalize data (optional but recommended)

Trim whitespace, convert to consistent case, and standardize formats if your key columns require it.

Tip: Normalization helps catch duplicates that aren’t exact text matches.

4. Apply deduplication

Run the deduplication operation using the chosen tool (Excel, pandas, CLI). Specify keep='first' or keep='last' as needed.

Tip: Verify that the operation targets only the key columns unless you intend a full-row deduplication.

5. Validate results

Compare counts before and after, review a sample of rows, and ensure schema remains intact.

Tip: Check for unintended removals in critical records; adjust the key if necessary.

6. Export and document

Save the deduplicated CSV with a clear name and include notes on the dedup rules used.

Tip: Include a short changelog or provenance note in the same folder.
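The steps above can be strung together in one short script. This is a sketch using only the Python standard library, under several assumptions: the function name `dedupe_with_provenance`, the backup and output naming scheme, the notes file layout, and the trim-and-lowercase normalization are all illustrative choices, not a prescribed convention.

```python
import csv
import shutil
from datetime import datetime, timezone
from pathlib import Path


def dedupe_with_provenance(source, key_fields):
    """Back up a CSV, keep the first row per normalized key, write notes."""
    source = Path(source)
    stamp = datetime.now(timezone.utc).strftime("%Y%m%dT%H%M%SZ")

    # Step 2: timestamped backup next to the original (hypothetical naming).
    backup = source.with_name(f"{source.stem}.{stamp}.bak{source.suffix}")
    shutil.copy2(source, backup)

    # Steps 3 and 4: compare on a trimmed, lowercased key; keep the first row.
    seen = set()
    kept = []
    total = 0
    with source.open(newline="", encoding="utf-8") as handle:
        reader = csv.DictReader(handle)
        fieldnames = reader.fieldnames
        for row in reader:
            total += 1
            key = tuple(row[field].strip().lower() for field in key_fields)
            if key not in seen:
                seen.add(key)
                kept.append(row)  # original values are written unchanged

    # Step 6: export under a clear name and record the rule that was applied.
    output = source.with_name(f"{source.stem}_dedup{source.suffix}")
    with output.open("w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(kept)

    notes = source.with_name(f"{source.stem}_dedup_notes.txt")
    notes.write_text(
        f"source: {source.name}\n"
        f"key: {', '.join(key_fields)} (trimmed, case-insensitive)\n"
        f"rows read: {total}\n"
        f"rows kept: {len(kept)}\n"
        f"removed {total - len(kept)} duplicate rows\n",
        encoding="utf-8",
    )
    return output, backup, notes
```

Calling `dedupe_with_provenance("customers.csv", ["email"])` (a hypothetical file and key) would leave the original untouched and produce a backup, a deduplicated file, and a notes file in the same folder. Step 5, validation, is deliberately left to a separate check so that it can be run independently of the export.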

People Also Ask

What defines a duplicate row in a CSV?

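A duplicate row is a record with identical values across the chosen key columns. If you compare all columns instead, rows that differ only in a non-key field (such as a timestamp) will not be caught, while identical rows that record genuinely separate events will be collapsed; define explicit keys to avoid both problems.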

A duplicate is a row with the same values in the key columns you choose.

Should I deduplicate across all columns or only a subset?

Deduplicate across a subset of columns when those columns define uniqueness. Full-row deduplication may remove legitimate records in some datasets.

Use key columns to identify duplicates; avoid deduplicating across every column unless that’s truly intended.

How can I preserve the first occurrence?

Most tools keep the first occurrence by default; in pandas this is keep='first'. You can also keep the last occurrence (keep='last') or apply a custom rule, depending on your workflow.

Keep the first occurrence by default, or pick last if that better suits your data.

What about duplicates after normalization?

Normalization can reveal hidden duplicates (case, whitespace). Normalize first, then deduplicate to avoid missing duplicates.

Normalize text, then deduplicate to catch hidden duplicates.
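A small sketch of the difference, assuming pandas; the sample emails and the helper column name `_email_key` are hypothetical.

```python
import pandas as pd

df = pd.DataFrame(
    {
        "email": ["Ada@Example.com", " ada@example.com ", "grace@example.com"],
        "source": ["crm", "web", "crm"],
    }
)

# Exact comparison treats the first two rows as different values.
exact = df.drop_duplicates(subset=["email"])

# Build the normalized key in a helper column so the original values
# are preserved in the output, then drop the helper afterwards.
keyed = df.assign(_email_key=df["email"].str.strip().str.lower())
normalized = keyed.drop_duplicates(subset=["_email_key"]).drop(columns="_email_key")

print(len(exact), len(normalized))
```

Comparing on a helper column rather than overwriting `email` is a design choice: it keeps the exported data identical to the source apart from the removed rows.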

Is deduplication safe for large CSV files?

Yes, but use memory-efficient tools or chunked processing. CLI tools and pandas can handle larger files with streaming or chunking.

Yes, as long as you use chunked processing or CLI tools designed for large files.
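Chunked processing with pandas might look like the sketch below. It assumes that the set of distinct keys fits in memory even when the full file does not; the function name `dedupe_in_chunks` and the sample data are hypothetical.

```python
import io

import pandas as pd


def dedupe_in_chunks(source, target, key_columns, chunksize=100_000):
    """Write the first row per key from source to an open text handle."""
    seen = set()
    written = 0
    first_chunk = True
    # keep_default_na=False keeps empty fields as "" instead of NaN.
    chunks = pd.read_csv(
        source, dtype=str, keep_default_na=False, chunksize=chunksize
    )
    for chunk in chunks:
        # Remove repeats inside the chunk, then repeats from earlier chunks.
        chunk = chunk.drop_duplicates(subset=key_columns, keep="first")
        keys = list(chunk[key_columns].itertuples(index=False, name=None))
        is_new = [key not in seen for key in keys]
        seen.update(keys)
        fresh = chunk.loc[is_new]
        fresh.to_csv(target, header=first_chunk, index=False)
        written += len(fresh)
        first_chunk = False
    return written


# In-memory stand-ins for large files (hypothetical data, tiny chunk size).
source = io.StringIO("id,v\n1,a\n1,b\n2,c\n1,d\n3,e\n")
target = io.StringIO()
rows_written = dedupe_in_chunks(source, target, key_columns=["id"], chunksize=2)
print(target.getvalue())
```

When writing to a real file, open it once with `open(path, "w", newline="")` and pass the handle as `target` so every chunk is appended to the same output.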

How do I verify the deduplicated data is correct?

Compare counts before and after, review a sample of rows, and confirm schema integrity. Validation is key to trustworthy results.

Count checks and spot-checks confirm correctness.


Main Points

- Define a stable key for deduplication.

- Back up data before removing duplicates.

- Normalize data to catch hidden duplicates.

- Test and validate results thoroughly.

- Document deduplication rules for reproducibility.