CSV and CSR: A Practical Side-by-Side Guide

A rigorous analysis of CSV and CSR formats, detailing when to use each, performance and tooling implications, and practical conversion workflows for data analysts and developers in 2026.

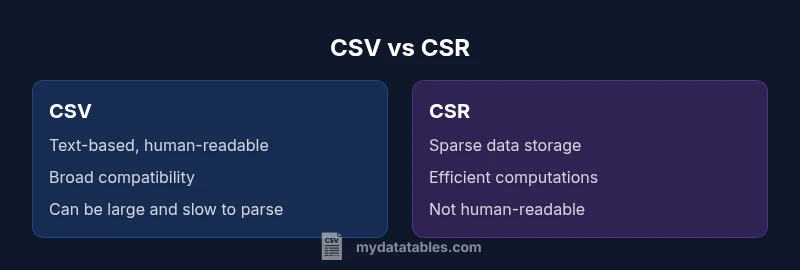

CSV and CSR serve different purposes in data processing. Use CSV for broad interoperability and human readability; CSR for efficient storage and computation on sparse matrices. For data teams, start with CSV for data exchange, then move to CSR when your workload involves large, sparse datasets or numerical linear algebra. This decision hinges on data shape, tooling availability, and performance goals.

What csv and csr Mean in Data Context

In modern data workflows, CSV and CSR sit at two ends of the storage spectrum: CSV for general tabular data interchange and CSR for sparse numeric representations. CSV, or Comma-Separated Values, is a plain-text format that emphasizes accessibility, portability, and ease of editing. CSR, short for Compressed Sparse Row, is a compact representation designed for sparse matrices in which most values are zero. In practice, the two formats answer different questions: how data is organized for humans to read and edit (CSV) versus how data is organized for efficient computation (CSR). According to the MyDataTables team, analysts often start with CSV when importing data from external sources, then shift to CSR when the workload involves heavy matrix computations, large datasets with many zeros, or operations like matrix-vector products. This distinction matters across industries, from finance and marketing analytics to scientific computing and machine learning pipelines. When planning a data project, map your data shape, tooling, and performance goals to decide which format to favor in the early design phase.

Core Differences at a Glance

- Data orientation: CSV is row/record oriented for tabular data; CSR is also row oriented, but stores only each row's non-zero values, together with their column indices and pointers marking where each row starts.

- Readability: CSV files are human-readable and editable; CSR is a set of numeric arrays, usually held in memory or saved in a binary library format, and is not meant for direct viewing.

- Metadata support: CSV can carry headers and simple schemas; CSR relies on separate metadata to describe dimensions and non-zero positions.

- Performance footprint: CSV tends to be larger and slower to process for sparse data; CSR minimizes memory usage and speeds up numeric computations.

- Tooling ecosystem: CSV enjoys universal support (spreadsheets, databases, languages); CSR relies on scientific computing libraries (e.g., SciPy) for construction and manipulation.

- Convertibility: You can convert between formats, but the effort and fidelity depend on data shape and required metadata.

- Best use cases: CSV for data exchange and lightweight analysis; CSR for large sparse matrices and high-performance computations.

Data Representation and Readability

CSV stores data as plain text, with each line representing a row and commas (or other delimiters) separating fields. This structure makes it easy to inspect, edit, and share, even without specialized software. CSV files can carry a header row, which provides a basic schema and improves interpretability, and are commonly encoded in UTF-8 to cover international data. By contrast, CSR encodes a sparse matrix as three arrays: values (the non-zero entries), column indices (one per value), and row pointers (marking where each row's entries begin and end). This compact representation omits zeros, dramatically reducing storage when data is sparse. However, the format is not human-friendly; reading and editing CSR generally requires software libraries. Teams that use both formats often maintain separate metadata describing matrix dimensions, data types, and row/column semantics, preserving interpretability while benefiting from CSR’s compactness. When interoperability with standard tools matters most, CSV remains the default; for computations on sparse data, CSR provides a superior foundation.
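To make the three-array layout concrete, here is a small sketch using SciPy's csr_matrix. The 3×4 example matrix is made up for illustration; data, indices, and indptr are SciPy's names for the values, column indices, and row pointers described above.

```python
import numpy as np
from scipy.sparse import csr_matrix

# A small, mostly-zero matrix (illustrative values only).
dense = np.array([
    [0, 0, 3, 0],
    [4, 0, 0, 5],
    [0, 0, 0, 0],
])

csr = csr_matrix(dense)

# values: the non-zero entries, read row by row
print(csr.data)     # [3 4 5]
# column index of each stored value
print(csr.indices)  # [2 0 3]
# row pointers: row i's entries live in data[indptr[i]:indptr[i + 1]]
print(csr.indptr)   # [0 1 3 3]

# Row 1's non-zeros recovered directly from the three arrays.
start, end = csr.indptr[1], csr.indptr[2]
row_1 = [(int(c), int(v)) for c, v in zip(csr.indices[start:end], csr.data[start:end])]
print(row_1)        # [(0, 4), (3, 5)]
```

Note that the empty third row costs only one extra row-pointer entry (the repeated 3), which is where the savings on sparse data come from.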

Storage Efficiency and Memory Footprint

One of the most pronounced differences between CSV and CSR is how much storage they occupy. CSV is verbose: every field, including every zero in a sparse matrix, becomes text, complete with delimiters, line breaks, and often quoting. The result is human readability at the expense of file size and parsing overhead, especially for large tables. CSR, in contrast, saves space by recording only non-zero values and their column positions, along with pointers to row starts. This is especially beneficial for matrices with a low density of non-zero entries, common in domains like natural language processing, recommender systems, and network graphs. The memory advantage of CSR scales with sparsity; as non-zero density grows, the benefit diminishes. For example, with 64-bit values and 32-bit column indices, each stored entry costs 12 bytes versus 8 bytes per cell in a dense in-memory array, so CSR only saves memory once fewer than roughly two-thirds of the cells are non-zero. When data is dense, CSR may offer no practical advantage and adds complexity to data handling. In practice, you’ll often see a hybrid workflow: CSV for intake and export, CSR for in-memory computation and model training on sparse data, with a metadata layer bridging the two representations.
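A rough way to see the size gap on your own machine is to write the same sparse matrix both ways and compare byte counts. This is a sketch under assumptions: the 500×500 shape, the ~1% density, and the "%.6g" number format are arbitrary choices, and the CSR figure counts only the three in-memory arrays, not any file container.

```python
import io
import numpy as np
from scipy.sparse import csr_matrix

# Hypothetical workload: 500 x 500 matrix with roughly 1% non-zeros.
rng = np.random.default_rng(42)
dense = np.where(rng.random((500, 500)) < 0.01, rng.random((500, 500)), 0.0)
matrix = csr_matrix(dense)

# CSV writes every cell as text, including every zero.
buffer = io.StringIO()
np.savetxt(buffer, dense, delimiter=",", fmt="%.6g")
csv_bytes = len(buffer.getvalue().encode("utf-8"))

# CSR keeps only the values, their column indices, and the row pointers.
csr_bytes = matrix.data.nbytes + matrix.indices.nbytes + matrix.indptr.nbytes

print(f"non-zeros: {matrix.nnz}, density: {matrix.nnz / dense.size:.2%}")
print(f"CSV text:   {csv_bytes:,} bytes")
print(f"CSR arrays: {csr_bytes:,} bytes")
```

Raising the density threshold in the first line is a quick way to watch the advantage shrink as the matrix becomes denser.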

Performance Considerations

Performance implications drive format choice in large-scale analytics. Reading and writing CSV involves sequential text parsing, which can become a bottleneck for big datasets, especially when you need to infer data types or handle inconsistent quoting. CSR gives substantial speedups for sparse linear algebra operations, matrix-vector products, and certain iterative methods, because only the non-zero entries are stored and traversed, which cuts the amount of memory that has to be read. However, CSR workloads require specialized libraries and careful handling of data types, shapes, and alignment with mathematical operations. For data pipelines, performance also hinges on the ability to stream data, parallelize parsing, and leverage compression. In many workflows, the bottleneck isn’t the format itself but the surrounding tooling, I/O bandwidth, and the efficiency of preprocessing steps. When designing a system, run benchmarks with your actual data shapes to find the threshold where CSR’s gains outweigh conversion costs and integration overhead.
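A minimal benchmark along those lines might time the one-off conversion and then a repeated matrix-vector product in both representations. The matrix size, the 0.5% density, and the run counts are placeholders; substitute a sample of your real data, since the crossover point depends heavily on shape, density, and hardware.

```python
import timeit
import numpy as np
from scipy.sparse import csr_matrix

# Hypothetical workload: 1,000 x 1,000 matrix with ~0.5% non-zeros.
# Swap in a sample of your real data before drawing conclusions.
rng = np.random.default_rng(0)
n = 1000
dense = np.where(rng.random((n, n)) < 0.005, rng.random((n, n)), 0.0)
x = rng.random(n)

# One-time conversion cost, which the per-operation gains have to repay.
convert_time = timeit.timeit(lambda: csr_matrix(dense), number=5) / 5
sparse = csr_matrix(dense)

# Both products must agree before the timings mean anything.
assert np.allclose(dense @ x, sparse @ x)

runs = 100
dense_time = timeit.timeit(lambda: dense @ x, number=runs)
sparse_time = timeit.timeit(lambda: sparse @ x, number=runs)
print(f"conversion: {convert_time * 1e3:.2f} ms")
print(f"dense matvec: {dense_time / runs * 1e3:.3f} ms, "
      f"CSR matvec: {sparse_time / runs * 1e3:.3f} ms")
```

Dividing the conversion time by the per-product saving gives a rough count of how many operations it takes before switching to CSR pays off.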

Interoperability and Tooling

CSV is the lingua franca of data interchange, supported by virtually every data tool, library, and database. You can edit CSV in spreadsheets, ingest it with ETL pipelines, or load it into data warehouses with minimal friction. The downside is limited schema enforcement and potential data quality issues if headers are missing or encodings vary. CSR integrations are more specialized and typically revolve around numerical computing ecosystems. SciPy's sparse module provides robust support for constructing, converting, and operating on CSR matrices; NumPy supplies the underlying arrays but has no sparse type of its own, and machine learning libraries such as scikit-learn accept SciPy CSR matrices as input. When choosing between CSV and CSR, consider the intended tooling and the workflow’s position in the data lifecycle. If your pipeline already uses Python-based scientific computing, CSR may integrate cleanly; if you’re exchanging data with external partners, CSV’s universality is often invaluable.
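Because CSR lives inside this library ecosystem, it is usually persisted with the library's own tools rather than as text. A minimal sketch using SciPy's save_npz and load_npz; the file name "features.npz" is hypothetical, and a temporary directory keeps the example self-contained.

```python
import os
import tempfile
import numpy as np
from scipy.sparse import csr_matrix, save_npz, load_npz

matrix = csr_matrix(np.array([[0, 1.5, 0], [0, 0, 2.0]]))

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "features.npz")  # hypothetical file name
    save_npz(path, matrix)    # stores the CSR arrays plus the shape, compressed
    restored = load_npz(path)

# The round trip should preserve the shape and every stored value.
print(restored.shape, restored.nnz)
assert np.array_equal(restored.toarray(), matrix.toarray())
```

The resulting file is compact but only readable by tools that understand NumPy's archive format, which is the interoperability trade-off described above.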

Data Transformation and Pipelines

Transforming data between CSV and CSR requires an explicit mapping from tabular fields to matrix indices. A typical path starts with a CSV importer that validates headers, assigns data types, and handles missing values. The next step is constructing the CSR matrix by locating non-zero entries and populating the values, column-index, and row-pointer arrays. This process may involve re-encoding, normalization, scaling, or pivot operations to align with downstream models. Pipelines frequently store separate metadata files that describe the dimensions, sparsity pattern, and data semantics, ensuring that downstream stages interpret the data correctly. Validation checkpoints, such as shape checks, non-zero counts, and value ranges, reduce the risk of silent data corruption. Automation scripts and data validation tools are essential to keep conversions between CSV and CSR reliable across environments and teams.
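The pipeline above can be sketched for one common case: a "long" CSV with one non-zero entry per line. The row/col/value column names, the inline sample data, and the hard-coded (3, 4) shape are assumptions for illustration; in a real pipeline the shape would come from the metadata file.

```python
import csv
import io
import numpy as np
from scipy.sparse import coo_matrix

# Hypothetical long-format CSV: one non-zero entry per line.
raw = """row,col,value
0,2,3.0
1,0,4.0
1,3,5.0
"""

rows, cols, vals = [], [], []
reader = csv.DictReader(io.StringIO(raw))
if reader.fieldnames != ["row", "col", "value"]:  # header validation
    raise ValueError(f"unexpected header: {reader.fieldnames}")
for record in reader:
    rows.append(int(record["row"]))   # explicit types, no inference
    cols.append(int(record["col"]))
    vals.append(float(record["value"]))

# Shape comes from metadata, not from the data: trailing empty rows
# or columns would otherwise be lost. Hard-coded here as an assumption.
shape = (3, 4)
matrix = coo_matrix((vals, (rows, cols)), shape=shape).tocsr()

# Validation checkpoints: shape, non-zero count, value range.
assert matrix.shape == shape
assert matrix.nnz == len(vals), "duplicate coordinates were merged"
assert np.isclose(matrix.data.min(), min(vals))
assert np.isclose(matrix.data.max(), max(vals))
print(matrix.toarray())
```

Going through SciPy's COO (coordinate) format is a convenient intermediate step here because it accepts (row, column, value) triples directly and converts to CSR in one call.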

Practical Scenarios: When to Use Each

In practice, CSV shines when you need portability and human inspection: ad-hoc analyses, data sharing with stakeholders, or importing data into spreadsheets. If your task involves large, sparse data structures, such as adjacency matrices for graphs, term-frequency matrices in NLP, or sparse feature matrices in ML models, CSR becomes the practical choice due to memory savings and faster computations. For iterative workflows, you can keep a canonical CSR representation in memory for performance, while exporting to CSV for interchange with non-specialist teams or legacy systems. Consider hybrid architectures where initial ingestion occurs in CSV, followed by conversion into CSR for modeling steps. The key decision is data density and the required operations: high density and text-heavy analytics favor CSV; sparse numerical computation favors CSR.
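The term-frequency case shows why CSR fits naturally: each document becomes one row, and the row pointer simply advances by the number of distinct terms in that document. A toy sketch with a made-up three-document corpus and naive whitespace tokenization; text libraries such as scikit-learn's CountVectorizer return a similar CSR matrix, with far more careful tokenization.

```python
from collections import Counter
import numpy as np
from scipy.sparse import csr_matrix

# Toy corpus (illustrative); real pipelines would use a proper tokenizer.
docs = ["the cat sat", "the dog sat on the mat", "cats and dogs"]

vocab = {}                         # term -> column index, assigned on first sight
data, indices, indptr = [], [], [0]
for doc in docs:
    counts = Counter(doc.split())
    for term, count in counts.items():
        col = vocab.setdefault(term, len(vocab))
        indices.append(col)
        data.append(count)
    indptr.append(len(indices))    # close this document's row

tf = csr_matrix((data, indices, indptr), shape=(len(docs), len(vocab)))
tf.sort_indices()                  # put column indices in canonical order per row

print(tf.shape, tf.nnz)
print(tf[1, vocab["the"]])         # 2: "the" appears twice in the second document
```

Even on this toy corpus most cells are zero, and the proportion grows quickly as the vocabulary does.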

Best Practices for Conversion and Validation

When converting between CSV and CSR, start with a clear data model that defines the mapping from rows and columns to non-zero values. Use consistent delimiters, a single text encoding (UTF-8), and explicitly declared data types rather than relying on inference, to minimize surprises. Validate after every conversion with checks for dimensions, non-zero counts, and value ranges. Preserve metadata that describes matrix shape, row/column semantics, and units, so downstream consumers interpret results correctly. For robust pipelines, implement idempotent conversion steps, versioned artifacts, and automated tests that compare CSR statistics with the original CSV counts. In 2026, automated data lineage and reproducibility are critical; ensure your tooling captures provenance and maintains traceability between formats across environments.
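An automated test that compares CSR statistics with the source CSV might look like the following. validate_conversion is a hypothetical helper, and the sketch assumes the CSV is a headerless, fully numeric dense grid; a long-format or labelled CSV would need a different comparison.

```python
import io
import numpy as np
from scipy.sparse import csr_matrix

def validate_conversion(csv_text: str, matrix: csr_matrix) -> None:
    """Hypothetical check: raise ValueError if the CSR matrix disagrees with its dense CSV source."""
    dense = np.loadtxt(io.StringIO(csv_text), delimiter=",", ndmin=2)
    if dense.shape != matrix.shape:
        raise ValueError(f"shape mismatch: {dense.shape} vs {matrix.shape}")
    csv_nonzeros = int(np.count_nonzero(dense))
    csr_nonzeros = int(np.count_nonzero(matrix.data))  # ignore explicitly stored zeros
    if csv_nonzeros != csr_nonzeros:
        raise ValueError(f"non-zero count mismatch: {csv_nonzeros} vs {csr_nonzeros}")
    if matrix.nnz and (matrix.data.min() < dense.min() or matrix.data.max() > dense.max()):
        raise ValueError("CSR values fall outside the CSV value range")
    if not np.array_equal(matrix.toarray(), dense):
        raise ValueError("cell values differ after conversion")

csv_text = "0,0,3\n4,0,0\n"
converted = csr_matrix(np.loadtxt(io.StringIO(csv_text), delimiter=",", ndmin=2))
validate_conversion(csv_text, converted)  # passes silently
```

The cheap checks (shape, counts, range) run first so that a failure reports a specific cause before the full cell-by-cell comparison.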

Pitfalls, Edge Cases, and Common Mistakes

Common mistakes include assuming one format is universally better, neglecting metadata, and ignoring encoding issues that break cross-platform compatibility. In CSV, missing headers or inconsistent quoting can derail parsing; in CSR, misaligned indices or inconsistent data types undermine computations. Another pitfall is over-optimizing too soon on CSR without validating whether the sparsity pattern will actually benefit the workload. Always test with realistic datasets that resemble your production scale and density. Finally, be mindful of toolchain compatibility; a powerful CSR workflow is only valuable if your entire stack can generate, persist, and read CSR data without frequent bespoke adapters.
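One concrete CSR pitfall is duplicate coordinates. SciPy sums duplicate (row, column) entries when converting COO input to CSR, so an accidentally repeated line in the source CSV silently doubles a value rather than raising an error. The input arrays below are made up to trigger the problem; check_format is SciPy's structural check on the CSR arrays.

```python
import numpy as np
from scipy.sparse import coo_matrix

# Hypothetical input where the same (row, col) pair appears twice,
# e.g. because two CSV exports were accidentally concatenated.
rows = np.array([0, 0, 1])
cols = np.array([1, 1, 2])
vals = np.array([5.0, 5.0, 7.0])

# Detect duplicate coordinates before conversion.
coords = np.stack([rows, cols], axis=1)
n_unique = len(np.unique(coords, axis=0))
if n_unique != len(coords):
    print(f"warning: {len(coords) - n_unique} duplicate coordinate(s)")

matrix = coo_matrix((vals, (rows, cols)), shape=(2, 3)).tocsr()
print(matrix[0, 1])  # 10.0: the duplicates were summed silently

# Structural sanity check on the CSR arrays themselves; raises on bad indices.
matrix.check_format(full_check=True)
```

Whether summing is a bug or intended (for example, when accumulating counts) depends on the data model, which is another reason to document it in the metadata.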

Metadata, Encoding, and Data Integrity

Metadata matters as much as the data itself. CSV’s strength lies in its header lines, but you must standardize naming conventions, data types, and missing-value representations to avoid ambiguity. Encoding choices—prefer UTF-8 with explicit BOM handling where necessary—reduce cross-system misinterpretations. CSR relies on explicit metadata files to describe dimensions, sparsity patterns, and the data schema, since the compact arrays alone do not convey semantic meaning. Data integrity checks, such as round-trip validation (CSV -> CSR and back) and consistency checks across formats, are essential in robust pipelines. Adopting a lightweight schema or data dictionary improves interoperability and makes future migrations smoother.
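A minimal sketch of a metadata sidecar plus a CSV to CSR to CSV round-trip check. The metadata keys and the customer/product labels are invented for illustration, not a standard schema. The "%.17g" format is used because 17 significant digits are enough to write any float64 value back exactly.

```python
import io
import json
import numpy as np
from scipy.sparse import csr_matrix

# Hypothetical data dictionary stored next to the CSR arrays.
metadata = {
    "shape": [2, 3],
    "dtype": "float64",
    "row_semantics": "customer_id",   # illustrative labels
    "col_semantics": "product_id",
    "encoding": "utf-8",
}

original_csv = "0,2.5,0\n1,0,0\n"
dense = np.loadtxt(io.StringIO(original_csv), delimiter=",",
                   dtype=metadata["dtype"], ndmin=2)
matrix = csr_matrix(dense)
assert list(matrix.shape) == metadata["shape"], "shape disagrees with metadata"

sidecar = json.dumps(metadata)  # persisted alongside the arrays in practice

# Round trip: CSR back to CSV text, then compare cell by cell.
out = io.StringIO()
np.savetxt(out, matrix.toarray(), delimiter=",", fmt="%.17g")
restored = np.loadtxt(io.StringIO(out.getvalue()), delimiter=",", ndmin=2)
assert np.array_equal(restored, dense), "round trip changed the data"
print(out.getvalue())
```

With a shorter format such as "%g" (six significant digits), the same round-trip check would start failing on real-valued data, which is exactly the kind of silent loss it is meant to catch.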

The Road Ahead: Trends in Data Formats

As data volumes grow and models become more sophisticated, the industry is leaning toward formats that balance readability with computational efficiency. Hybrid approaches that store dense data in CSV-like interchange formats and sparse sections in CSR or similar compact structures are gaining traction. Streaming and compression techniques are being integrated into data pipelines to reduce I/O costs without sacrificing accessibility. Standards bodies and major vendors are likely to emphasize stronger metadata support, schema validation, and improved tooling for format conversion. For data teams, staying current means designing flexible architectures that can adapt to evolving formats while preserving reproducibility and auditability. MyDataTables anticipates continued emphasis on clean data shapes, robust validation, and tooling that makes CSV and CSR work together seamlessly for diverse analytics needs.

Conclusion: Why the Right Choice Matters for Data Teams

Choosing between CSV and CSR is not about picking a single “best” format, but about aligning data representation with the task, the data’s density, and the downstream workflows. CSV remains unbeatable for exchange, manual inspection, and rapid iteration with minimal tooling. CSR is indispensable when working with large, sparse matrices where memory efficiency and computational performance are priorities. A mature data strategy uses both formats where appropriate, supported by metadata and automation that bridge the gap between interchange-friendly CSV and computation-friendly CSR. By planning data shape, tooling, and pipeline architecture, teams can minimize conversion costs and maximize throughput across the data lifecycle.

Comparison

| Feature | CSV | CSR (Compressed Sparse Row) |
|---|---|---|
| Best use case | General data exchange and portability | Large sparse matrices in scientific computing and ML |
| Human readability | High; text-based and editable | Low; not designed for direct human viewing |
| Metadata/schema support | Headers and simple schemas supported | Dependent on external metadata; limited built-in semantics |
| Storage efficiency | Less efficient for sparse data | Highly efficient for sparse representations |
| Tooling ecosystem | Spreadsheets, databases, ingestion tools | Scientific computing libraries (e.g., SciPy) |
| Performance for sparse data | Not optimized for sparse math | Excellent for sparse linear algebra |
| Conversion complexity | Straightforward for simple datasets | Requires careful mapping to indices and shapes |
| Error handling | Parsing errors can cascade with malformed lines | Corrupt metadata can disrupt matrix integrity |

Pros

- CSV offers broad interoperability and human readability

- CSR provides memory-efficient storage for sparse data

- CSV is easy to generate and parse across tools

- CSR enables fast linear algebra operations on sparse data

- Conversion workflows exist between formats to support hybrid pipelines

Weaknesses

- CSV can be inefficient for large datasets due to text size

- CSR is not human-readable and requires specialized tooling

- Direct editing of CSR data is impractical without libraries

- Converting between formats can incur processing overhead and validation steps

CSV is the default for data interchange; CSR is preferred for sparse data processing

For everyday data sharing and lightweight analytics, CSV remains unbeatable. When datasets are large and sparse, CSR offers substantial memory and computation advantages. The right choice depends on data density, workflow tools, and performance goals.

People Also Ask

What is the practical difference between CSV and CSR?

CSV is a text-based format ideal for data exchange and human inspection, while CSR is a compact representation tailored for sparse matrices and fast computations. The choice depends on data density and analytical needs.

CSV is great for sharing and editing data; CSR is best when you’re doing heavy sparse-matrix computations.

Can I edit CSR data directly?

CSR data is not designed for direct editing. It’s best manipulated through specialized libraries that manage the non-zero values and indices consistently.

You usually edit CSR data through software, not by hand.

How do I convert CSV to CSR?

Conversion involves parsing CSV into a dense or semi-dense representation, then extracting non-zero elements and their positions to populate CSR arrays. Validation ensures dimensions and data types align.

You convert by building the sparse structure from non-zero entries after loading the CSV.
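The steps in that answer can be sketched for a small dense CSV with a header row. The column names f1 to f3 and the values are made up; the header is skipped here, but in practice its names would go into the metadata so that column indices stay interpretable.

```python
import io
import numpy as np
from scipy.sparse import csr_matrix

csv_text = """f1,f2,f3
0,1,0
0,0,0
2,0,3
"""

# 1. Parse the CSV (header skipped) into a dense array.
dense = np.loadtxt(io.StringIO(csv_text), delimiter=",", skiprows=1, ndmin=2)

# 2. Extract the non-zero elements and their positions.
row_idx, col_idx = np.nonzero(dense)
values = dense[row_idx, col_idx]

# 3. Populate the CSR structure; the shape is passed explicitly.
matrix = csr_matrix((values, (row_idx, col_idx)), shape=dense.shape)

# 4. Validate dimensions and content.
assert matrix.shape == dense.shape
assert matrix.nnz == np.count_nonzero(dense)
print(matrix.indptr)  # [0 1 1 3]
```

For files too large to load densely, the same idea applies chunk by chunk, or via a long-format export that lists only the non-zero cells.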

Are there common formats between CSV and CSR?

Yes. Intermediate formats such as Matrix Market (a plain-text format that lists each stored entry as a row, column, value triple) or JSON-based representations can bridge the gap, especially for carrying metadata and schema between interchange and computation formats.

There are bridge formats to connect CSV with CSR when needed.
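As an example of such a bridge, SciPy can write a sparse matrix to Matrix Market text and read it back. The file name and the comment string are hypothetical. Matrix Market coordinates are 1-based, so the file's indices are one higher than SciPy's in-memory ones.

```python
import os
import tempfile
import numpy as np
from scipy.io import mmread, mmwrite
from scipy.sparse import csr_matrix

matrix = csr_matrix(np.array([[0, 1.5, 0], [2.0, 0, 0]]))

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "bridge.mtx")  # hypothetical file name
    # Text output: a header, a size line, then one "row col value" line per entry.
    mmwrite(path, matrix, comment="rows=users, cols=items")
    with open(path) as f:
        print(f.read())                     # human-readable, unlike .npz
    restored = csr_matrix(mmread(path))     # mmread returns COO; convert back to CSR

assert np.array_equal(restored.toarray(), matrix.toarray())
```

The file stays readable and diffable like CSV while preserving the sparse structure, which is what makes it useful as a hand-off format.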

Which tools support CSR?

Major scientific computing libraries (e.g., SciPy) support CSR matrices, and machine learning libraries such as scikit-learn accept them as input. NumPy provides the underlying arrays but has no sparse matrix type of its own.

SciPy and friends are the typical tools for CSR.

Is CSR universally better than CSV?

No. CSR excels for sparse numerical data and performance, but CSV remains superior for general data interchange, readability, and compatibility with non-specialist tools.

It depends on the use case; CSR isn’t a replacement for CSV in all scenarios.

Main Points

- Choose CSV for portability and manual review

- Choose CSR for sparse data and compute-heavy workloads

- Maintain metadata to bridge formats across teams

- Benchmark real workloads to decide when CSR pays off

- Automate validation to protect data integrity across conversions