CSV vs TXT: Is CSV Better Than TXT? A Practical Guide

Explore when CSV outperforms TXT, covering structure, encoding, parsing, and real-world use cases. MyDataTables analyzes pros, cons, and best practices for data professionals.

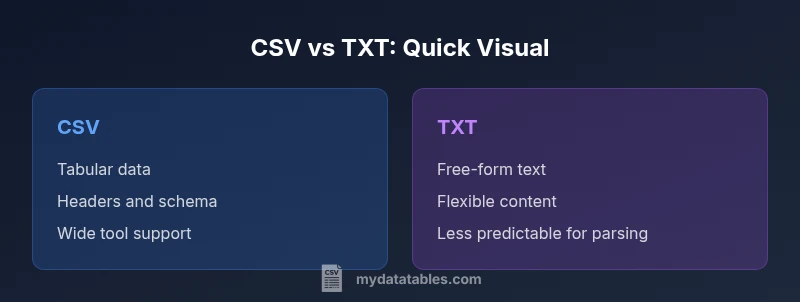

Is CSV better than TXT for most data workflows? The short answer is yes, in most analytical and automation contexts. CSV provides a structured, delimiter-driven format with predictable parsing rules, headers, and easy exchange between tools. TXT, by contrast, is a free-form text container that often requires custom parsing. For routine data tasks, CSV is usually the stronger choice, while TXT suits simple, human-readable notes and non-tabular content.

Data Formats Landscape: Is CSV Better Than TXT?

In data work, choosing between CSV and TXT shapes how easily you can load, transform, and analyze information. Whether CSV is better than TXT for a given job depends on structure, tooling, and downstream needs. CSV is designed around a tabular model, with rows representing records and columns representing fields. TXT, meanwhile, is a flexible bag of characters that can encode any content, but without strict guarantees about delimiters or schema. According to MyDataTables, most data analysts reach for CSV when they need consistent parsing rules, repeatable imports, and support across databases and analytics platforms. This article unpacks the decision with concrete criteria, scenarios, and guidelines so you can pick the format that minimizes hassle and maximizes reliability.

Core Structural Differences

The first major difference is structure. CSV enforces a table-like layout with rows and columns; TXT offers no formal schema. CSV separates fields with a delimiter (usually a comma, though semicolons, tabs, and pipes are also widely used) and often includes a header row that names the fields. This structure enables straightforward imports into spreadsheets, SQL databases, and BI tools. TXT files may contain paragraphs, lists, or arbitrary data blocks with no strict boundary between records. This makes TXT flexible but harder to parse consistently, especially when content includes line breaks, varying delimiters, or embedded metadata. For data workflows, predictability is a feature; TXT trades predictability for raw freedom.
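
To make the delimiter point concrete, here is a minimal Python sketch using the standard-library `csv` module. The sample strings are made-up data: the same table is written once with commas and once with semicolons, and the last step shows what happens when the wrong delimiter is assumed.

```python
import csv
import io

# Made-up sample: the same table written with two different delimiters.
comma_text = "id,name,city\n1,Ada,London\n2,Linus,Helsinki\n"
semicolon_text = "id;name;city\n1;Ada;London\n2;Linus;Helsinki\n"


def read_rows(text: str, delimiter: str) -> list[list[str]]:
    """Parse delimited text into rows, each row a list of string fields."""
    return list(csv.reader(io.StringIO(text), delimiter=delimiter))


comma_rows = read_rows(comma_text, ",")
semicolon_rows = read_rows(semicolon_text, ";")
print(comma_rows == semicolon_rows)  # True: same table once the delimiter is known

# Guessing the wrong delimiter does not raise an error; each line becomes one field.
wrong = read_rows(semicolon_text, ",")
print(wrong[0])  # ['id;name;city']
```

The silent failure in the last step is why the delimiter should be agreed on and documented rather than guessed.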

Encoding, Headers, and Data Types

CSV files are usually written in one consistent encoding (UTF-8 is the common modern choice, although the format itself does not mandate one) with one consistent delimiter; when a header row is present, it names the fields, and each subsequent line follows the same column order. Values are plain text: they are treated as strings unless the consuming tool applies type inference. TXT files prescribe no encoding, headers, or data types; you must decide how to structure and interpret the content yourself. If a TXT file contains mixed data (numbers, dates, free text), downstream processing often requires custom parsers or regex-based extraction. In contrast, a well-formed CSV with explicit headers can be ingested with little or no schema mapping in many ETL pipelines. The trade-off is that CSV’s rigidity requires upfront agreement on delimiter choice and escaping conventions.
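
The following sketch, using Python's standard-library `csv` module and made-up sample bytes, illustrates two points from this paragraph: the reader has to supply the encoding because a CSV file does not declare it, and a header-driven reader returns every value as a string until something converts it.

```python
import csv
import io

# Made-up file contents as raw bytes, as they would arrive from disk or a network.
raw = "sku,price,qty\nA-1,9.99,3\nB-2,4.50,10\n".encode("utf-8")

# The file does not say which encoding it uses; the reader must assume one.
text = raw.decode("utf-8")

# The header row supplies the field names; every value stays a string.
rows = list(csv.DictReader(io.StringIO(text)))
print(rows[0])  # {'sku': 'A-1', 'price': '9.99', 'qty': '3'}

# Any typing is an explicit, separate step (here: a hand-written conversion).
total_qty = sum(int(row["qty"]) for row in rows)
print(total_qty)  # 13
```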

Practical Performance and Tooling

Performance considerations matter, particularly with large datasets. CSV parses efficiently with streaming parsers because the structure is regular: typically one record per line (quoted fields may contain embedded line breaks) and a fixed number of columns per record. TXT may require more complex tokenization or bespoke parsers that determine where a record ends, how fields split, and what counts as metadata. Tool availability is another differentiator. CSV is supported by nearly every data tool: databases, spreadsheets, statistical packages, and programming libraries provide built-in CSV readers and writers. TXT support varies by application and often depends on assumptions about the content. When speed, interoperability, and repeatability matter, CSV generally wins in production data pipelines.
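
A minimal sketch of streaming CSV processing in Python, assuming made-up data and a hypothetical `amount` column: `csv.DictReader` is an iterator, so the function below holds only one record in memory at a time. The sample also includes a quoted field containing a line break, which the parser correctly treats as part of a single record.

```python
import csv
import io

# Made-up data: record 2 has a quoted "note" that spans two physical lines.
data = 'id,note,amount\n1,ok,10\n2,"multi\nline",25\n3,ok,5\n'


def stream_total(fh, column: str) -> float:
    """Sum one numeric column, reading the file one record at a time."""
    total = 0.0
    for row in csv.DictReader(fh):
        total += float(row[column])
    return total


print(stream_total(io.StringIO(data), "amount"))  # 40.0

physical_lines = len(data.splitlines()) - 1  # lines after the header
records = sum(1 for _ in csv.DictReader(io.StringIO(data)))
print(physical_lines, records)  # 4 3
```

With a real file, open it with `newline=""` (as the `csv` module documentation recommends) and pass the handle in, for example `with open("sales.csv", newline="", encoding="utf-8") as fh: stream_total(fh, "amount")`, where `sales.csv` is a placeholder name.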

Real-World Use Cases and Trade-offs

Typical CSV use cases include exchanging tabular data between systems, exporting database query results, and storing spreadsheets in a portable form. CSV’s predictable structure makes it ideal for batch processing, automation, and version-controlled data sharing. TXT shines in contexts where content is unstructured or semi-structured—log notes, transcripts, or configuration files that don’t conform to a table. The trade-off is that TXT can complicate automated parsing, especially when content contains inconsistent line endings or embedded delimiters. For businesses, this means CSV is often preferred for analytics-ready data, while TXT serves as a flexible scratchpad for human-readable notes and config data. MyDataTables recommends evaluating downstream tooling before choosing a format.

Common Pitfalls and How to Avoid Them

Inconsistent delimiters across files or teams can ruin portability. If you rely on CSV, agree on the delimiter, the escaping rules, and how to quote fields that contain the delimiter. Another pitfall is assuming a header is always present; some CSV files omit headers, which forces an additional column-mapping step during import. Text files may require explicit tokenization to extract fields, and failing to define a schema leads to brittle scripts. To minimize risk, adopt a clear CSV standard within your organization, validate samples with test imports, and maintain a short, documented spec for any TXT-based exchanges. MyDataTables emphasizes documentation and validation as core practices.
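
Both CSV pitfalls above can be demonstrated with Python's standard-library `csv` module. This sketch (made-up data) shows that the writer quotes a field containing the delimiter, that naive `str.split` breaks on that field while `csv.reader` does not, and how to supply column names for a file that has no header row.

```python
import csv
import io

rows = [["id", "company", "city"], ["1", "Acme, Inc.", "Berlin"]]

# csv.writer's default QUOTE_MINIMAL wraps fields that contain the delimiter.
buf = io.StringIO()
csv.writer(buf, lineterminator="\n").writerows(rows)
text = buf.getvalue()
print(text.splitlines()[1])  # 1,"Acme, Inc.",Berlin

# Splitting on commas ignores quoting and yields four fields instead of three.
naive = text.splitlines()[1].split(",")
# A real CSV parser honours the quotes.
parsed = list(csv.reader(io.StringIO(text)))[1]
print(len(naive), len(parsed))  # 4 3

# Headerless variant: the column names must come from an agreed spec.
headerless = "1,Ada,London\n2,Linus,Helsinki\n"
records = list(csv.DictReader(io.StringIO(headerless), fieldnames=["id", "name", "city"]))
print(records[0]["name"])  # Ada
```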

Loading and Transforming CSV vs TXT in Popular Tools

When loading CSV, most languages offer ready-made parsers that handle delimiters, quoting, and escaping, and some libraries also infer column types. In Python, for example, the standard-library csv module handles delimiters and quoting, while pandas adds options for encoding, type inference, and malformed-row handling. In SQL contexts, CSV imports are often accelerated by bulk-loading utilities (such as PostgreSQL's COPY or MySQL's LOAD DATA INFILE) that map columns to table schemas. TXT loading typically requires explicit parsing logic tailored to the specific content: regex patterns, tokenizers, or line-based readers. If your team values one-click imports and cross-platform compatibility, CSV wins. If your workflow depends on flexible, human-focused text, TXT remains useful but demands more custom tooling.
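
The contrast in this paragraph can be sketched in Python with only the standard library. The TXT layout below is a made-up example; the point is that the CSV version is handled by a generic reader, while the TXT version needs a regular expression written for that exact layout. (With pandas, the CSV side would typically be a single `pandas.read_csv(path, sep=",", encoding="utf-8")` call.)

```python
import csv
import io
import re

csv_text = "date,user,action\n2024-05-01,alice,login\n2024-05-01,bob,logout\n"

# Made-up free-form TXT holding the same facts; this layout is an assumption.
txt_text = """On 2024-05-01 alice did: login
On 2024-05-01 bob did: logout
"""

# CSV: the generic reader already knows where records and fields end.
csv_records = list(csv.DictReader(io.StringIO(csv_text)))

# TXT: a pattern must be written (and maintained) for this specific layout.
LINE = re.compile(r"^On (?P<date>\d{4}-\d{2}-\d{2}) (?P<user>\w+) did: (?P<action>\w+)$")
txt_records = [m.groupdict() for line in txt_text.splitlines() if (m := LINE.match(line))]

print(csv_records == txt_records)  # True
```

If the TXT layout changes (say, an extra word appears on each line), this pattern silently stops matching and records disappear, whereas the CSV reader keeps working as long as the header and delimiter stay the same.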

Best Practices for When CSV Is the Right Choice

If your data is tabular, needs to feed analytics dashboards, or must be shared across systems, choose CSV. Standardize the encoding (UTF-8), delimiter (comma or tab), and escaping rules. Use a header row so the file describes itself, and consider adding a metadata file that documents data types and units. Validate files against the agreed schema (header, column count, types), use checksums to detect corruption or unintended changes in transit, and keep files under version control for reproducibility. Reserve TXT for cases where you truly need unstructured content or a custom format; in most data pipelines, CSV remains the safer default. The MyDataTables approach prioritizes consistent formats and clear documentation.
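
As one way to turn these practices into a repeatable check, here is a minimal Python sketch. `validate_csv` and `EXPECTED_HEADER` are hypothetical names standing in for your organization's own spec; the function checks UTF-8 decoding, the header, and the per-record field count, and `hashlib.sha256` provides a checksum that recipients can compare to detect changes in transit.

```python
import csv
import hashlib
import io

EXPECTED_HEADER = ["id", "name", "amount"]  # hypothetical agreed column spec


def validate_csv(raw: bytes, expected_header: list[str]) -> list[str]:
    """Return a list of problems; an empty list means the file passed."""
    try:
        text = raw.decode("utf-8")  # the agreed encoding
    except UnicodeDecodeError as exc:
        return [f"not valid UTF-8: {exc}"]

    problems = []
    reader = csv.reader(io.StringIO(text))
    header = next(reader, None)
    if header != expected_header:
        problems.append(f"header mismatch: {header!r}")
    # Record numbers count the header as record 1.
    for record_no, row in enumerate(reader, start=2):
        if len(row) != len(expected_header):
            problems.append(
                f"record {record_no}: expected {len(expected_header)} fields, got {len(row)}"
            )
    return problems


good = b"id,name,amount\n1,Ada,10\n2,Linus,20\n"
bad = b"id,name,amount\n1,Ada\n"

print(validate_csv(good, EXPECTED_HEADER))  # []
print(validate_csv(bad, EXPECTED_HEADER))   # ['record 2: expected 3 fields, got 2']

# A checksum published alongside the file lets recipients detect corruption.
print(hashlib.sha256(good).hexdigest())
```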

Decision Framework: When to Pick CSV or TXT

To decide, map your needs against a simple framework: Is the data tabular? Is interoperability with tools a priority? Do you require repeatable imports and a schema? If the answer to all three is yes, CSV is the recommended choice. If the data is free-form, unstructured, or intended as human notes without automation, TXT may be appropriate. Always document format decisions, test with real-world samples, and be prepared to adapt as your data ecosystem evolves. This disciplined approach supports long-term maintainability.

Comparison

| Feature | CSV | TXT |
|---|---|---|
| Structure | Tabular with rows and columns | Unstructured text, no formal schema |
| Delimiters and escaping | Delimiters with escaping rules; headers common | No standard delimiter; content may include arbitrary characters |
| Encoding | Typically UTF-8; consistent encoding assumed | Encoding is variable; no enforced standard |
| Headers | Commonly includes header row | Rarely includes headers; content defines meaning |
| Tooling support | Wide, built-in support across apps | Support is tool-dependent and often manual |
| Performance with large data | Efficient for large datasets with streaming parsers | Performance varies; parsing is content-specific |
| Use cases | Analytics, databases, data exchange | Notes, transcripts, customized text payloads |

Pros

- Clear schema simplifies automated processing

- Excellent interoperability across systems

- Predictable parsing enables robust pipelines

- Widely supported by databases and analytics tools

- Facilitates versioned data exchanges

Weaknesses

- CSV requires discipline on delimiters and escaping

- Not ideal for non-tabular data or free-form notes

- Headers must be defined to maximize utility

- Misaligned schemas cause import errors

CSV is the default choice for structured data exchanges and analytics, while TXT remains suitable for free-form or human-readable content

For most data workflows, CSV offers reliability, speed, and broad tool support. TXT is best when structure is unnecessary or when content is inherently unstructured. Choose CSV for datasets intended for processing and sharing across systems; reserve TXT for unstructured notes or specialized configurations.

People Also Ask

What is the main difference between CSV and TXT?

CSV enforces a tabular structure of rows and columns, usually with a header row, which makes it easy to parse. TXT is unstructured and flexible, which can complicate automated processing but suits free-form data.

When should I choose TXT over CSV?

Choose TXT when content is unstructured or intended for human consumption without automated needs. For data pipelines, CSV generally provides better reproducibility and interoperability.

Can CSV handle complex data types and metadata?

CSV has no native type system: every value is stored as text, and complex types are not supported directly. You typically convey types through conventions or through a separate schema or accompanying metadata file.
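
A minimal Python sketch of the separate-metadata approach. The JSON string stands in for a hypothetical sidecar schema file, and the type names (`int`, `float`, `date`, `str`) are an assumed convention rather than a standard; the code applies them to the values that `csv.DictReader` returns as strings.

```python
import csv
import io
import json
from datetime import date

# Hypothetical sidecar metadata, e.g. stored next to the CSV as orders.schema.json.
schema_json = '{"order_id": "int", "placed": "date", "total": "float", "note": "str"}'
csv_text = "order_id,placed,total,note\n17,2024-03-09,19.95,gift\n"

# Map the (assumed) type names used in the metadata to Python converters.
CONVERTERS = {"int": int, "float": float, "str": str, "date": date.fromisoformat}

schema = json.loads(schema_json)
typed_rows = [
    {col: CONVERTERS[schema[col]](val) for col, val in row.items()}
    for row in csv.DictReader(io.StringIO(csv_text))
]
print(typed_rows[0])
# {'order_id': 17, 'placed': datetime.date(2024, 3, 9), 'total': 19.95, 'note': 'gift'}
```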

Which encoding should I use with CSV?

UTF-8 is the de facto standard encoding for CSV in modern workflows (the format itself does not mandate one), offering broad compatibility and fewer character issues across tools.
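
A short Python sketch of why the encoding should be agreed on explicitly: the made-up sample is written as UTF-8 and then read back with the wrong codec, which does not raise an error but garbles non-ASCII text. It also shows the `utf-8-sig` codec, which writes a byte-order mark; some spreadsheet applications rely on that mark to recognise a CSV file as UTF-8, so check what your consumers expect.

```python
import csv
import io

rows = [["city", "country"], ["Zürich", "Schweiz"], ["São Paulo", "Brasil"]]

buf = io.StringIO()
csv.writer(buf, lineterminator="\n").writerows(rows)
text = buf.getvalue()

utf8_bytes = text.encode("utf-8")
bom_bytes = text.encode("utf-8-sig")  # prepends the byte-order mark EF BB BF

# Decoding with the wrong codec silently produces mojibake instead of an error.
wrong = utf8_bytes.decode("latin-1")
print("Zürich" in wrong)  # False
print(bom_bytes[:3])      # b'\xef\xbb\xbf'

# "utf-8-sig" strips a leading BOM if present and also reads plain UTF-8.
print(bom_bytes.decode("utf-8-sig") == utf8_bytes.decode("utf-8-sig") == text)  # True
```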

Are there performance concerns with large CSV files?

Large CSV files are generally efficient to parse with streaming readers, but extremely large datasets may require chunking or database loads to avoid memory issues.
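
One way to combine both suggestions (chunking and a database load) using only Python's standard library: the sketch below reads CSV records in fixed-size batches with `itertools.islice` and inserts each batch into SQLite with `executemany`. The table name `data`, the default batch size, and the sample data are assumptions for illustration, and the header names are assumed to be trusted, valid SQL identifiers.

```python
import csv
import io
import sqlite3
from itertools import islice


def load_in_chunks(fh, conn: sqlite3.Connection, chunk_size: int = 1000) -> int:
    """Insert CSV records into a table named `data` in batches; return the row count."""
    reader = csv.reader(fh)
    header = next(reader)
    # Assumes header names are trusted, valid SQL identifiers.
    conn.execute(f"CREATE TABLE IF NOT EXISTS data ({', '.join(header)})")
    placeholders = ", ".join("?" for _ in header)

    loaded = 0
    # Only one batch of at most chunk_size records is held in memory at a time.
    while batch := list(islice(reader, chunk_size)):
        conn.executemany(f"INSERT INTO data VALUES ({placeholders})", batch)
        loaded += len(batch)
    conn.commit()
    return loaded


# Made-up sample standing in for a large file.
sample = "id,amount\n" + "".join(f"{i},{i * 2}\n" for i in range(2500))
conn = sqlite3.connect(":memory:")
print(load_in_chunks(io.StringIO(sample), conn, chunk_size=1000))  # 2500
print(conn.execute("SELECT COUNT(*) FROM data").fetchone())  # (2500,)
```

pandas offers a similar pattern through the `chunksize` argument of `read_csv`, which returns an iterator of DataFrames instead of loading the whole file at once.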

Main Points

- Prefer CSV for tabular data and automation

- Validate delimiter, encoding, and headers upfront

- Use TXT for unstructured or human-readable content

- Rely on strong documentation and schema when using CSV

- Balance format choice with downstream tooling and maintenance goals