How to Get a CSV File: A Practical Guide

Learn practical methods to obtain CSV files from spreadsheets, databases, APIs, and data portals. Includes encoding, delimiters, validation, and automation tips for clean, reliable data imports.

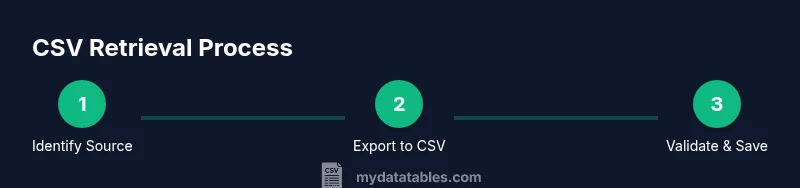

By the end of this guide you will know how to get a CSV file from spreadsheets, databases, or APIs. It covers sources, encoding, delimiters, and basic validation so you can import data quickly. According to MyDataTables, choosing the right format upfront prevents common export errors and simplifies downstream analysis. Whether you work in Excel, SQL, or an API client, the steps stay the same: locate the data, export it as CSV, and validate the file before use.

What is a CSV file and why you might need it

CSV stands for comma-separated values. It is a plain-text format for tabular data in which each row represents a record and the fields in a row are separated by a delimiter, most commonly a comma. Depending on regional settings, exports may use semicolons or tabs instead, and UTF-8 files may or may not begin with a Byte Order Mark (BOM). For data analysts, developers, and business users, CSV is attractive because it is lightweight, widely supported, and easy to manipulate in tools ranging from spreadsheets to programming languages. Getting a CSV file typically means identifying a data source, confirming the file format, and making sure the encoding and delimiter choices match your downstream tools. According to MyDataTables, paying attention to encoding, delimiter, and header presence upfront saves hours of debugging later.

In practice, a CSV is a text file with a simple structure: a header row (optional but common), one row per data record, and fields separated by a delimiter. The most common delimiter is a comma, but many regions prefer semicolons; tabs are also used in TSV files. The file is easily parsed by both humans and machines, which makes CSV a lingua franca for data exchange. Also note that different applications may export CSV in slightly different flavors—UTF-8 is generally safe, but some systems export ANSI or UTF-16. Always verify the encoding before importing into analytics pipelines.
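One way to verify encoding and delimiter before importing is to inspect the first bytes of the file and let Python's standard csv module guess the separator. The sketch below is only illustrative: the helper names detect_bom and guess_delimiter are made up for this example, and the list of candidate delimiters is an assumption you may need to adjust.

```python
import codecs
import csv
from typing import Optional


def detect_bom(raw: bytes) -> Optional[str]:
    """Return a codec name if the bytes start with a known BOM, else None."""
    # UTF-32 is checked before UTF-16 because the UTF-32 LE BOM
    # begins with the same two bytes as the UTF-16 LE BOM.
    boms = [
        (codecs.BOM_UTF8, "utf-8-sig"),
        (codecs.BOM_UTF32_LE, "utf-32"),
        (codecs.BOM_UTF32_BE, "utf-32"),
        (codecs.BOM_UTF16_LE, "utf-16"),
        (codecs.BOM_UTF16_BE, "utf-16"),
    ]
    for bom, codec_name in boms:
        if raw.startswith(bom):
            return codec_name
    return None


def guess_delimiter(sample: str, candidates: str = ",;\t|") -> str:
    """Guess the delimiter of a text sample, limited to the candidate characters."""
    return csv.Sniffer().sniff(sample, delimiters=candidates).delimiter
```

A file without a BOM returns None here, which does not prove it is UTF-8; it only means you still need to confirm the encoding with the data provider or by trial decoding. Sniffing is a heuristic too, so treat the result as a hint rather than a guarantee.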

Quick path: Exporting from Excel and Google Sheets

Exporting to CSV from spreadsheet software is a common first step when you need a portable data file. In Microsoft Excel, go to File > Save As (or Save a Copy) and select CSV (Comma delimited) as the format. Excel exports only the active sheet, and formatting such as date types may not be preserved. With some regional settings the exported file uses a semicolon instead of a comma, so check the delimiter after saving. If the CSV UTF-8 option is available, prefer it so that non-ASCII characters are not garbled.

In Google Sheets, choose File > Download > Comma-separated values (.csv). Sheets exports the active sheet only, so ensure you are viewing the correct tab before saving. If your data contains non-Latin characters, UTF-8 is typically the safest choice when transferring data to databases or analysis tools. After exporting, open the CSV in a text editor to perform a quick sanity check on the header and a few rows for obvious issues.

From a best-practice perspective, aim to export with a consistent delimiter, include a header row, and use UTF-8 encoding to maximize compatibility with downstream systems. If you anticipate sharing the file publicly, remove any sensitive columns or anonymize data as needed before distribution.
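If you produce the file from a script rather than a spreadsheet menu, the same practices can be applied in code. The following is a minimal sketch using Python's standard csv module; the function name write_csv and the drop_columns parameter are hypothetical names chosen for this illustration.

```python
import csv


def write_csv(rows, path, drop_columns=()):
    """Write a list of dicts to a UTF-8, comma-delimited CSV with a header row."""
    if not rows:
        raise ValueError("no rows to write")
    # Keep the column order of the first row, minus any columns to withhold.
    fieldnames = [name for name in rows[0] if name not in drop_columns]
    # newline="" lets the csv module control line endings itself.
    with open(path, "w", encoding="utf-8", newline="") as handle:
        # extrasaction="ignore" skips keys that are not listed in fieldnames.
        writer = csv.DictWriter(handle, fieldnames=fieldnames, extrasaction="ignore")
        writer.writeheader()
        writer.writerows(rows)
    return fieldnames
```

Calling write_csv(rows, "customers.csv", drop_columns=("email",)) would write every column except email, which is one simple way to withhold a sensitive field before sharing.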

From databases and SQL queries to CSV

Databases and BI tools can export data directly to CSV via a graphical export option or a SQL-based approach. When using a database client, locate the export or data dump feature and choose CSV as the output format. If you are performing a SQL-driven export, make sure the output includes a header row of column names, and specify the delimiter and encoding explicitly. Many clients offer an option to export large results in chunks to prevent memory issues. If you are using ETL tools, configure a simple pipeline that reads from your table or view and writes to a CSV file with UTF-8 encoding.

Tip: Always verify the header alignment and the number of columns in the first few rows after export. Mismatched headers or extra delimiters can create parsing errors downstream. A small, reproducible script or GUI-based export step is preferable to ad hoc commands when you need repeatable results.
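A repeatable, script-based export might look like the sketch below. It uses SQLite only because the sqlite3 module ships with Python; your database and driver will differ, and the function name export_query_to_csv and the chunk size are assumptions made for this example.

```python
import csv
import sqlite3


def export_query_to_csv(conn, query, path, chunk_size=1000):
    """Stream the results of a query into a UTF-8 CSV file, header first."""
    cursor = conn.execute(query)
    # cursor.description holds one tuple per result column; item 0 is its name.
    header = [column[0] for column in cursor.description]
    row_count = 0
    with open(path, "w", encoding="utf-8", newline="") as handle:
        writer = csv.writer(handle)
        writer.writerow(header)
        # Fetch in chunks so a large result set is never fully held in memory.
        while True:
            rows = cursor.fetchmany(chunk_size)
            if not rows:
                break
            writer.writerows(rows)
            row_count += len(rows)
    return row_count
```

The returned row count gives you a quick number to compare against a COUNT(*) on the source query.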

API-based CSV downloads: retrieving data programmatically

APIs are a powerful way to obtain CSV data directly from services. When a CSV endpoint is available, set your HTTP client to request CSV (for example, by using an Accept header of text/csv) and save the response as a .csv file. If authentication is required, include your API key or OAuth token as per the provider’s documentation. Some APIs support query parameters to filter fields or limit rows, which can reduce file size and improve download speed. Always verify the CSV after download by checking the header row and inspecting a few lines of data before proceeding.

If you are testing manually, you can use a simple curl-like flow: request the endpoint with Accept: text/csv and write the output to a file. For automated workflows, integrate the same request into your data pipeline and add a small validation step to confirm the file is well-formed.
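The same request can be sketched in Python with the standard library. Everything specific here is an assumption: the helper names are invented for this example, and the Bearer scheme is just one common way to send a token, so follow your provider's documentation for the actual authentication method.

```python
import shutil
import urllib.request


def build_csv_request(url, token=None):
    """Build a GET request that asks the server for a CSV response."""
    headers = {"Accept": "text/csv"}
    if token:
        # Bearer tokens are one common scheme; your API may require another.
        headers["Authorization"] = f"Bearer {token}"
    return urllib.request.Request(url, headers=headers)


def download_csv(url, path, token=None, timeout=30):
    """Save the response body to `path` without loading it all into memory."""
    request = build_csv_request(url, token)
    # urlopen raises urllib.error.HTTPError for 4xx and 5xx responses.
    with urllib.request.urlopen(request, timeout=timeout) as response, open(path, "wb") as out:
        shutil.copyfileobj(response, out)
    return path
```

Writing the raw bytes keeps the server's encoding intact, so the encoding and header checks can run on exactly what was sent.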

Downloading CSV from data portals and websites

Many data portals and government portals provide CSV exports for datasets. Look for a download or export button labeled CSV, and choose the appropriate variant (CSV, CSV UTF-8, or Comma-separated Values). Some portals also offer multiple sheets or file versions; select the one that matches the dataset you need and review the accompanying metadata to confirm column definitions, data types, and update frequency. If you encounter errors or unusually large files, check for a smaller sample download to validate structure before grabbing the full dataset.

When a portal is unclear, consult the portal’s help or documentation page for guidance on the correct export option and the recommended encoding. This helps ensure consistent imports into your analysis environment and dashboards.

Converting other formats to CSV: when you must switch formats

If CSV is not available but you have data in Excel (.xls/.xlsx), JSON, or XML, you can convert to CSV with minimal data loss if you follow a consistent plan. In Excel, save each sheet you need as its own CSV, since only the active sheet is exported. For JSON to CSV, rely on scripting languages like Python (pandas.read_json followed by to_csv) or dedicated conversion tools that preserve headers and data types. When converting from XML, first normalize the structure to a tabular form before exporting. Always validate the resulting CSV for proper delimiters, quoted fields, and consistent row lengths.

A practical rule: after conversion, run a quick audit against a subset of rows to confirm that data columns map correctly and that no values have been dropped or misinterpreted due to quoting or escaping.
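For a flat JSON array, the conversion can be done with the standard library alone, as a dependency-free alternative to the pandas route mentioned above. This is a sketch under the assumption that every record is a flat object with no nested values; the function name json_records_to_csv is made up for this example.

```python
import csv
import io
import json


def json_records_to_csv(json_text):
    """Convert a JSON array of flat objects into CSV text with a header row."""
    records = json.loads(json_text)
    # Collect every key in first-seen order so no column is silently dropped.
    fieldnames = []
    for record in records:
        for key in record:
            if key not in fieldnames:
                fieldnames.append(key)
    buffer = io.StringIO()
    # restval="" fills in an empty field when a record lacks a column.
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, restval="")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()
```

Nested objects or arrays would need to be flattened first, which is the same normalization step described above for XML.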

Encoding, delimiters, and common pitfalls: what to watch out for

The most common CSV pitfalls are related to encoding, delimiters, quoting, and newline characters. UTF-8 encoding minimizes issues with non-ASCII text, while BOM-free UTF-8 is often safer for many data tools. If your data contains internal commas or quotes, ensure the format uses proper escaping: fields with special characters should be enclosed in quotes, and internal quote characters should be doubled. Regional settings may require semicolon delimiters; if your downstream system expects a comma, you’ll need to convert the delimiter or configure the importer to recognize the alternative separator. Be consistent with line endings (CRLF vs LF) to avoid misreads on different platforms.

When in doubt, export a small sample and test it in your target tool before processing large files. This reduces the risk of silent data corruption later in a pipeline.
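Delimiter conversion is safest when a CSV parser does the work, because a plain find-and-replace would break fields that contain the separator. Here is a minimal sketch with Python's csv module; the function name convert_delimiter and the choice of LF line endings are assumptions for this example.

```python
import csv
import io


def convert_delimiter(text, source=";", target=","):
    """Rewrite delimited text with a different delimiter, re-quoting as needed."""
    reader = csv.reader(io.StringIO(text, newline=""), delimiter=source)
    buffer = io.StringIO()
    # QUOTE_MINIMAL quotes only fields that contain the delimiter, a quote
    # character, or a line break; embedded quotes are doubled on output.
    writer = csv.writer(
        buffer,
        delimiter=target,
        quoting=csv.QUOTE_MINIMAL,
        lineterminator="\n",
    )
    writer.writerows(reader)
    return buffer.getvalue()
```

For example, a semicolon-delimited line such as Ana;"1,5 kg" comes out as Ana,"1,5 kg", with the decimal comma still protected by quotes.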

Validating CSV data before analysis: quick quality checks

Validation is essential to prevent downstream errors. Start by confirming the header row is present and that each subsequent line has the same number of columns as the header. Check for empty fields where data is required and inspect a handful of rows for data type consistency, such as numeric columns not containing text. If your file is large, sample a subset to perform quick checks, then run a structural validation script to ensure you didn’t introduce extra delimiters or stray characters. Maintain a small, repeatable validation routine so you can trust the data import each time.
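The structural checks described above can be captured in a small routine. The sketch below is one possible shape, not a complete validator: the function name validate_csv and its parameters are invented for this example, and it does not check data types.

```python
import csv


def validate_csv(path, required=(), delimiter=",", encoding="utf-8"):
    """Return a list of problems found; an empty list means none were detected."""
    problems = []
    with open(path, encoding=encoding, newline="") as handle:
        reader = csv.reader(handle, delimiter=delimiter)
        header = next(reader, None)
        if header is None:
            return ["file is empty"]
        missing = [name for name in required if name not in header]
        if missing:
            problems.append(f"missing required columns: {missing}")
        required_idx = [header.index(name) for name in required if name in header]
        for row in reader:
            line = reader.line_num  # physical line number in the file
            if len(row) != len(header):
                problems.append(
                    f"line {line}: expected {len(header)} fields, found {len(row)}"
                )
                continue
            for idx in required_idx:
                if not row[idx].strip():
                    problems.append(f"line {line}: empty value in '{header[idx]}'")
    return problems
```

Returning a list instead of raising on the first error lets you see every problem in one pass, which is useful when deciding whether to fix the file or re-export it.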

Automating retrieval and validation with scripts

Automation improves repeatability and reduces manual error. You can script the retrieval of CSV data from classic sources (files, databases, APIs) and include a validation step to confirm schema integrity and basic data quality. Common approaches include Python (pandas.read_csv for loading, then a few assertions on column counts and data types), R (read.csv and basic checks), or shell scripting with grep/awk for quick validations on header lines. When designing the automation, build in error handling, logging, and clear exit codes to support robust data pipelines.
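As a rough illustration of error handling, logging, and exit codes, the sketch below checks a file's header against an expected schema using only the standard library rather than pandas. The function name check_schema, the logger name, and the specific exit code values are assumptions made for this example.

```python
import csv
import logging

logger = logging.getLogger("csv_check")

# Hypothetical exit codes for a pipeline step.
EXIT_OK, EXIT_SCHEMA_ERROR, EXIT_IO_ERROR = 0, 1, 2


def check_schema(path, expected_columns):
    """Compare a CSV header with the expected columns and return an exit code."""
    try:
        # utf-8-sig reads UTF-8 with or without a leading BOM.
        with open(path, encoding="utf-8-sig", newline="") as handle:
            header = next(csv.reader(handle), [])
    except (OSError, UnicodeDecodeError) as error:
        logger.error("could not read %s: %s", path, error)
        return EXIT_IO_ERROR
    if header != list(expected_columns):
        logger.error(
            "schema changed in %s: expected %s, found %s",
            path, list(expected_columns), header,
        )
        return EXIT_SCHEMA_ERROR
    logger.info("%s matches the expected schema", path)
    return EXIT_OK
```

In a scheduled job you could pass the result to sys.exit, for example sys.exit(check_schema("data.csv", ["id", "name"])), so the scheduler can tell a schema change apart from a missing file.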

Authority sources for CSV best practices

If you want authoritative guidance on data exchange formats, start with official sources. Data.gov provides examples and best practices for downloading CSV datasets, including encoding and metadata considerations. The U.S. Census Bureau and NIST offer additional context on data formats and interoperability. For a practical overview of CSV usage and common pitfalls, consult these credible resources to align with industry standards and ensure compatibility across tools.

Tools & Materials

- A computer with internet access (needed to download/export and verify CSV files)

- Spreadsheet software such as Excel, Google Sheets, or LibreOffice (to export or view CSV in a familiar UI)

- Database client or SQL interface (optional, for exporting CSV from databases)

- Command-line tool or HTTP client such as curl or wget (optional, for API-based CSV downloads)

- Text editor (useful for quick edits or viewing raw CSV)

- CSV validator/cleaner (optional, helpful for data quality checks)

Steps

Estimated time: 60-90 minutes

1. Identify data source

Determine where the data lives and whether a CSV export is available. Check if the data source supports direct CSV export or requires an intermediate format. This step sets scope and avoids unnecessary retries.

Tip: Confirm whether a header row will be included and whether a UTF-8 encoding is recommended.

2. Choose export method

Select the export path that matches your environment: spreadsheet export, database client, API download, or portal data. If multiple options exist, pick the one with the smallest risk of data loss or encoding issues.

Tip: Prefer a method that preserves headers and uses UTF-8 when possible.

3. Export as CSV

Run the export for the chosen source, ensuring you select CSV or CSV UTF-8 as the format. If exporting from a spreadsheet, verify that only the desired sheet is included.

Tip: Double-check the delimiter; if your tool expects a comma, avoid semicolon exports.

4. Initial validation

Open the file and verify the header row and column counts. Scan the first few data rows to confirm consistent separators and escaping of quotes. This early check catches obvious formatting errors.

Tip: If you spot any misalignment, fix the delimiter or escape characters before proceeding.

5. Clean and normalize

If needed, adjust encoding to UTF-8, standardize delimiters, and clean any problematic characters. This ensures smooth ingestion into analytics tools.

Tip: Document any normalization rules for future reproducibility.

6. Load into analysis tool

Import the CSV into your target tool (Python, R, Excel, BI platform) and verify that columns map correctly and data types align with expectations.

Tip: Use a small sample first to validate mappings before processing the full dataset.

7. Automate repeat exports

If you need ongoing CSV exports, set up a repeatable workflow with scheduling and error handling. Automated checks should alert you if the export fails or the schema changes.

Tip: Include logging and versioning for traceability.

8. Archive and share

Store the final CSV with a clear naming convention and metadata. Share securely, ensuring sensitive information is redacted when appropriate.

Tip: Use a consistent naming pattern like dataset_YYYYMMDD.csv.
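The naming pattern in the tip above can be generated rather than typed by hand. This is a tiny sketch; the function name dated_filename is hypothetical.

```python
from datetime import date


def dated_filename(dataset, day=None):
    """Build a name like dataset_YYYYMMDD.csv, defaulting to today's date."""
    day = day or date.today()
    return f"{dataset}_{day:%Y%m%d}.csv"
```

Because the date is zero-padded and ordered year, month, day, files named this way sort chronologically in a directory listing.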

People Also Ask

What is a CSV file and when should I use it?

A CSV file stores tabular data in plain text with fields separated by a delimiter. Use CSV for lightweight data exchange between tools, especially when JSON or XML is too verbose. It is ideal for import/export workflows and quick data inspection.

A CSV is plain text with columns separated by a delimiter, great for moving data between apps. Use it for simple, fast data sharing.

How do I ensure my CSV uses the right encoding?

Choose UTF-8 encoding when exporting to avoid characters getting garbled in non-English data. If UTF-8 is not available, use the system's default encoding but validate after import.

Use UTF-8 when possible and verify the file imports cleanly.

What if my workbook has multiple sheets or my files are large?

CSV exports typically cover a single sheet; for large files, consider chunked exports or splitting files. Some portals offer multi-sheet exports as separate CSVs—choose the one that matches your analysis needs.

If you have a large CSV, you might export it in chunks to keep things manageable.

Can I convert JSON or XML to CSV?

Yes. Convert JSON or XML to a tabular form first, then export to CSV. Use scripting (Python, R) or specialized tools to preserve structure and headers.

You can convert to CSV by first turning the data into a table, then exporting.

Where can I learn best practices for CSV handling?

Refer to official data portals and standards guidance to align with interoperability guidelines. Start with Data.gov and consult related .gov resources for encoding and formatting recommendations.

Check government data guides for reliable CSV guidelines.

Main Points

- Validate encoding and delimiter up front

- Prefer UTF-8 with a header row

- Test with a sample before full export

- Automate repeated exports for consistency