How to Remove Blank Rows in CSV Files: A Practical Guide

Learn practical step-by-step methods to remove blank rows from CSV data using Excel, Google Sheets, Python, and shell. Improve data quality for analysis and ensure clean, reliable downstream processing.

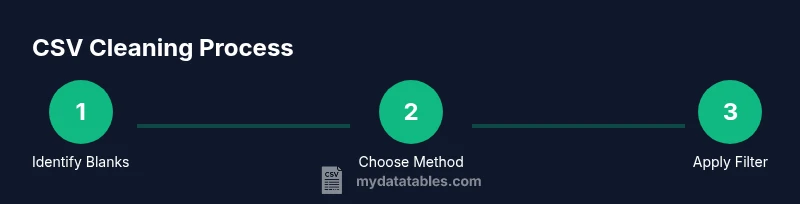

By the end of this guide, you will remove blank rows from a CSV file across multiple tools, without losing data. You’ll start with a backup, then pick a method (Excel, Google Sheets, Python, or shell), apply a reliable filter, and verify results before saving a clean CSV. This multi-tool approach ensures data integrity and scales to larger datasets.

Why removing blank rows matters

According to MyDataTables, blank rows in CSV files can disrupt data integrity and complicate downstream processing. They often cause misalignment in joins, errors during imports, and unreliable counts in automated checks. The MyDataTables Team emphasizes that a clean CSV improves reproducibility and saves time in data pipelines. By removing empties, analysts reduce noise, simplify parsing, and help ensure that subsequent transformations behave predictably. Treat this cleanup as a standard data-quality step in any CSV workflow.

Quick check: identify blank rows

Before cleaning, scan the file to understand how blanks appear. Blank rows may be completely empty lines or lines with only delimiters and whitespace. Use a simple preview in your editor or a quick command to spot lines that contain no meaningful data. In many tools, you can highlight lines with zero non-space characters or use a filter to show only empty lines. This quick audit helps you tailor your cleanup approach and avoids accidentally removing header rows or rows with subtle data.

Back up before you begin

Create a backup copy of the original CSV so you can revert if something goes wrong. Store the backup in a safe location and clearly label the file name to reflect its purpose (for example, original-2026-02-backup.csv). Backups are a best practice in data preparation, allowing you to experiment with filters and scripts without risking the source data. The MyDataTables team recommends this step as a non-negotiable safeguard.

Excel method: remove blank rows

In Excel, you can remove blank rows using a combination of selection, filtering, and deletion. Start by selecting the data range, then use the Go To Special feature to select blanks, which highlights empty rows. Right-click the selected rows and choose Delete, Entire Row. If your data has headers, ensure you preserve the first row by applying the filter to the data only. Finally, save as a new CSV to keep the cleaned version separate from the original.

Google Sheets method

Google Sheets provides similar capabilities via built-in filters and conditional formatting. Import the CSV, apply a filter to the data range, and filter out rows where all cells are empty or whitespace. Alternatively, use a script or add-on to remove blank rows in bulk. After cleaning, export the sheet back to CSV, making sure the header row remains intact. This approach is popular for collaborative workflows.

Python (pandas) method

Python with pandas offers a robust, repeatable way to remove blank rows. Load the CSV into a DataFrame, drop rows where all values are missing or whitespace, and write the result to a new CSV file. A compact script looks like:

import pandas as pd

df = pd.read_csv("file.csv") df = df.dropna(how="all") df = df[~df.apply(lambda r: r.astype(str).str.strip().eq("").all(), axis=1)] df.to_csv("file-clean.csv", index=False)

This method handles whitespace and missing data consistently, which is valuable for large datasets and automated pipelines. MyDataTables analysis indicates that script-based cleanup integrates well into data workflows.

Shell/command-line method

Command-line tools can remove blank lines quickly without opening a GUI. A common approach is to filter out lines that are empty or whitespace while preserving the header, for example: awk 'NR==1||NF{print}' file.csv > file-clean.csv

This keeps the header intact and drops rows that contain only whitespace. For very large files, consider streaming solutions to minimize memory usage. If your CSV uses a nonstandard delimiter, adjust the pattern accordingly.

Handling whitespace and quotes

Whitespace and quoted values can disguise blank-looking rows. After an initial cleanup, trim whitespace around each field and re-check the row content. In Python, use df.applymap(lambda x: x.strip() if isinstance(x, str) else x) to normalize values before applying dropna. In spreadsheets, use TRIM functions in helper columns to validate data consistency before final cleanup.

Validating the cleaned CSV

Validation confirms that cleaning didn’t alter essential structure. Check that header columns remain aligned and that the row count reflects the removal of blank rows. Compare before/after counts, review a random sample of rows, and re-run basic integrity checks in your ETL or analysis workflows. Consistency here reduces downstream surprises during joins or merges.

Automating cleanup with a small script

For recurring tasks, encapsulate the cleanup in a small script that can be run on new CSV files. A minimal approach uses a function that reads the file, removes blank rows, and writes the result to a new file. Script automation improves repeatability, reduces manual errors, and aligns with data governance practices. The MyDataTables team notes that automation is a key driver of reliable CSV data handling.

Final checklist before sharing the data

- Back up the original file and verify you can revert.

- Confirm the header row remains intact.

- Validate that no blank rows remain and that data alignment is preserved.

- Run a quick spot-check of a few critical columns to ensure values are intact.

- Document the method used and store the cleaned file with a clear name.

Tools & Materials

- CSV file (source data)(Your original data file; keep a backup before cleaning.)

- Backup copy(Create a safe copy in a separate location.)

- Spreadsheet software(Excel or Google Sheets for GUI workflows.)

- Text editor or CSV viewer(Useful for quick checks.)

- Python with pandas(Install if you plan to script the cleanup.)

- Command-line tools (awk/grep/sed)(Helpful for shell-based workflows.)

- Output path for cleaned CSV(Where to save the cleaned file.)

Steps

Estimated time: 30-60 minutes

- 1

Back up the original CSV

Create an exact copy of the CSV in a secure location before making any changes. This ensures you can revert if cleaning affects data unexpectedly.

Tip: Label the backup clearly and store it separately from the working file. - 2

Open the file in your chosen tool

Launch Excel, Google Sheets, Python, or your preferred shell environment and load the CSV. Make sure the delimiter is correctly detected so fields align as expected.

Tip: If you’re using a script, load the file from disk rather than a temporary path. - 3

Identify blank rows

Scan for lines with no meaningful data: completely empty lines or lines with only whitespace. Confirm that the header row is distinguishable and won’t be treated as data.

Tip: Use a quick preview to avoid removing headers by accident. - 4

Apply the cleanup method

Implement the chosen method to drop blank rows. Excel/Sheets: use Go To Special or filter blanks; Python: dropna(how='all') and a whitespace check; shell: filter out blank lines while preserving the header.

Tip: Test the method on a small sample first. - 5

Save the cleaned CSV

Save the result to a new file name that clearly identifies it as cleaned, to avoid overwriting the backup.

Tip: Prefer CSV with index off to avoid extra columns. - 6

Validate the cleaned data

Check that the header is intact, remaining rows align with columns, and key fields look sensible after cleaning.

Tip: Compare row counts and spot-check important columns. - 7

Handle whitespace and edge cases

Ensure there are no whitespace-only cells left and that quoted fields aren’t split incorrectly.

Tip: Trim whitespace where needed to ensure consistency. - 8

Test downstream processes

Run a quick import or join in your ETL or analysis workflow to confirm the cleaned file behaves as expected.

Tip: If issues arise, revert to the backup and re-evaluate the filter criteria.

People Also Ask

What is the difference between a blank row and a row with empty cells?

A blank row has no data at all, while a row with empty cells contains delimiters but no values. Cleaning logic should treat both as removable, but you may want to preserve headers or metadata.

A blank row has no data at all, while an empty cell has no value but still occupies a column. Cleaning should remove rows that are entirely empty, and handle header rows carefully.

Can I remove blank rows without losing the header row?

Yes. Most methods provide an option to exclude the first row from removal. In Excel, Google Sheets, and Python, apply filters or filters that ignore the header or explicitly keep the first row.

Yes. Most methods let you protect the header row, so you don’t accidentally remove column names.

Will removing blank rows affect downstream joins or merges?

If blank rows contain key identifiers, removing them could affect joins. Clean the data consistently and check any join keys after cleaning to ensure results remain correct.

Removing blank rows can affect joins if the blank rows held important keys. Always verify join keys after cleaning.

Which method is best for very large CSV files?

For large files, Python with pandas or command-line tools are typically more scalable than GUI tools. They minimize memory usage and can be automated in scripts. Start with a small subset to validate the approach.

For large files, script-based methods tend to be more scalable and reliable.

How do you handle whitespace in fields with quotes during cleaning?

When cleaning, trim whitespace only outside quoted fields. In Python, you can apply a trim across string values while preserving quotes, and in Excel/Sheets, use TRIM on helper columns before final cleanup.

Be careful to trim spaces outside quoted fields, so you don’t alter data inside quotes.

How can I automate blank-row cleaning in a data pipeline?

Wrap the cleaning steps in a script or workflow tool that runs on new CSV arrivals. Include validation steps and logging to track successes or failures for reproducibility.

Yes, you can automate this as part of a data pipeline, with checks and logs.

Watch Video

Main Points

- Back up before cleaning

- Preserve the header row during edits

- Use a tool-appropriate method for reliability

- Validate results with spot checks

- Automate for repeatable CSV cleaning