Load CSV to MySQL: A Practical Guide

Learn how to load CSV data into MySQL quickly and reliably with step-by-step methods, schema design, data cleaning, and troubleshooting tips.

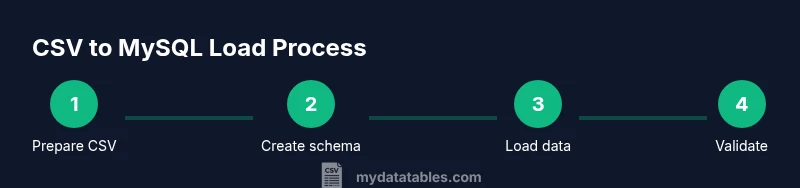

Using this guide, you will import data from a CSV file into MySQL by preparing the CSV, designing the target table, selecting an import method (LOAD DATA INFILE or a GUI tool), executing the load, and validating the results. The steps cover best practices, common pitfalls, and troubleshooting to ensure a reliable import.

Understanding the CSV to MySQL workflow

According to MyDataTables, the process begins long before you issue an import command. Effective CSV loading combines careful data preparation with a clear mapping between source fields and target columns. The workflow can be summarized in three phases: input validation, schema readiness, and data ingestion. In practice, you’ll verify the CSV encoding and delimiters, design a destination table that tolerates partial data, and then choose a loading strategy that fits the file size and the environment. This section sets the foundation by explaining how to align the CSV structure with your database schema and what success looks like at each step. You’ll also learn how to structure the import as a repeatable process, so audits and re-runs are straightforward. The MyDataTables team emphasizes documenting decisions (such as field mappings and encoding choices) to avoid ambiguity later.

Choosing the right loading method

There isn’t a single best method for every CSV import into MySQL. The choice hinges on file size, data complexity, and environment constraints. For large files that need little transformation, LOAD DATA INFILE offers speed and efficiency when the file sits on the server host and the server is allowed to read it. If the file lives on the client machine instead, LOAD DATA LOCAL INFILE run from a client tool, or a GUI like MySQL Workbench, is a solid alternative. For small datasets or data that requires transformation, a scripted INSERT-based approach can work, though it’s slower. Consider wrapping the load in a transaction for safety, and drop nonessential indexes before a bulk load and rebuild them afterward to improve performance. Finally, think about automation: scripts or ETL steps that can be replayed with the same parameters.

Preparing your CSV for import

Before you import, standardize the CSV to minimize surprises. Ensure UTF-8 encoding (prefer UTF-8 without BOM), consistent delimiters (comma is common), and uniform text qualifiers (often a double quote). Normalize line endings and remove stray invisible characters. If your file includes a header row, skip it with IGNORE 1 LINES and list the target columns in the same order as the fields in the file; LOAD DATA does not read header names to map columns for you. Validate that numeric columns contain only valid numbers (digits, with an optional sign and decimal point where the column type allows them) and that date fields follow one expected format, such as YYYY-MM-DD, which MySQL’s DATE type expects. If needed, preprocess the file with a scripting language or a dedicated CSV cleaner to normalize whitespace and escape problematic characters.
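The clean-up described above can be sketched in Python using only the standard library. This is a minimal illustration, not a complete cleaner: the function names `normalize_csv` and `invalid_numeric_rows` are hypothetical, and it assumes the whole file fits in memory and is UTF-8 encoded.

```python
import csv
import io
from decimal import Decimal, InvalidOperation


def normalize_csv(raw: bytes, delimiter: str = ",") -> str:
    """Decode UTF-8 (dropping a BOM if present), trim cell whitespace,
    skip blank lines, and emit "\n" line endings."""
    text = raw.decode("utf-8-sig")  # utf-8-sig strips a leading BOM if there is one
    reader = csv.reader(io.StringIO(text, newline=""), delimiter=delimiter)
    out = io.StringIO(newline="")
    writer = csv.writer(out, delimiter=delimiter, quotechar='"', lineterminator="\n")
    for row in reader:
        if not row:  # csv.reader yields [] for a completely blank line
            continue
        writer.writerow([cell.strip() for cell in row])
    return out.getvalue()


def invalid_numeric_rows(csv_text: str, column: str) -> list:
    """Return 1-based data-row numbers whose `column` is not a finite decimal number."""
    bad = []
    for n, row in enumerate(csv.DictReader(io.StringIO(csv_text, newline="")), start=1):
        try:
            if not Decimal(row[column]).is_finite():
                bad.append(n)
        except (InvalidOperation, TypeError):  # TypeError: field missing in a short row
            bad.append(n)
    return bad
```

Running `normalize_csv` first and `invalid_numeric_rows` on its output gives a list of rows to inspect before any data reaches MySQL.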

Designing the target table schema

Designing a robust target table is critical. Start by listing the expected columns exactly as they appear in the CSV and assign appropriate data types (INT, BIGINT, DECIMAL, VARCHAR, DATE, etc.). Decide on nullability, default values, and whether to enforce constraints such as NOT NULL or CHECK (MySQL enforces CHECK constraints only from version 8.0.16). If you anticipate dirty data, consider a staging table that mirrors the eventual destination; perform type casting and cleansing there before moving rows to the final table. Use indexes judiciously to speed lookups, but create secondary indexes after the initial bulk load rather than before it to minimize overhead. Finally, ensure the table’s character set matches your CSV encoding and pick a suitable collation (for example, the utf8mb4 character set with the utf8mb4_general_ci collation).
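One way to set up the staging table mentioned above is to generate its definition from the CSV header, with every column as nullable text so that no row is rejected on type grounds. The sketch below is an assumption-laden illustration: the helper names `quote_ident` and `staging_table_ddl`, the VARCHAR(255) width, and the restriction to letters, digits, and underscores in identifiers are all choices made here, not requirements of MySQL.

```python
import re

# Assumption: only plain identifiers are accepted, so nothing needs escaping.
_IDENT = re.compile(r"[A-Za-z0-9_]+")


def quote_ident(name: str) -> str:
    """Backtick-quote a table or column name after a strict whitelist check."""
    name = name.strip()
    if not _IDENT.fullmatch(name):
        raise ValueError(f"unsupported identifier: {name!r}")
    return f"`{name}`"


def staging_table_ddl(table: str, header: list, varchar_len: int = 255) -> str:
    """Build a CREATE TABLE statement with one nullable VARCHAR column per CSV field."""
    cols = ",\n".join(
        f"  {quote_ident(h)} VARCHAR({varchar_len}) NULL" for h in header
    )
    return (
        f"CREATE TABLE {quote_ident(table)} (\n{cols}\n) "
        "DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_general_ci;"
    )
```

After loading into such a table, rows can be cast and copied to the typed destination table with an INSERT ... SELECT, which keeps conversion failures visible in one place.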

Importing data using SQL: example with LOAD DATA INFILE

To import, you’ll typically use a LOAD DATA INFILE statement that points to a CSV file and explicitly maps fields to table columns. For example:

LOAD DATA INFILE '/path/to/file.csv' INTO TABLE staging_csv FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '"' LINES TERMINATED BY '\n' IGNORE 1 LINES (col1, col2, col3, col4);

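If the file resides on the client machine rather than on the server host, use LOAD DATA LOCAL INFILE with the same options; this requires local_infile to be enabled on both the server and the client. Add REPLACE to overwrite existing rows that share a primary or unique key with an incoming row, or IGNORE to keep the existing rows and skip the duplicates. Note that with LOCAL, data-interpretation and duplicate-key errors are downgraded to warnings and the load continues, as if IGNORE were specified, so check the warnings afterward. For large files, consider wrapping the operation in a transaction and validating counts after the load. This approach supports strict control over how data appears in the destination.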
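When the same load has to be repeated with different files or options, it can help to assemble the statement in code. The following Python sketch builds a LOAD DATA statement with the clauses in the order the MySQL grammar expects. The function name `build_load_data` and its parameters are hypothetical, the CHARACTER SET utf8mb4 clause is an addition that assumes a UTF-8 file, and identifiers are limited to letters, digits, and underscores as a simplifying assumption.

```python
import re

_IDENT = re.compile(r"[A-Za-z0-9_]+")


def _quote_ident(name: str) -> str:
    """Backtick-quote an identifier after a strict whitelist check."""
    if not _IDENT.fullmatch(name):
        raise ValueError(f"unsupported identifier: {name!r}")
    return f"`{name}`"


def build_load_data(path, table, columns, *, local=False,
                    skip_header=True, on_duplicate=None):
    """Return a LOAD DATA statement for a comma-separated, double-quoted file."""
    if on_duplicate not in (None, "REPLACE", "IGNORE"):
        raise ValueError("on_duplicate must be None, 'REPLACE', or 'IGNORE'")
    # Escape backslashes and single quotes for the SQL string literal.
    escaped_path = path.replace("\\", "\\\\").replace("'", "\\'")
    parts = [f"LOAD DATA {'LOCAL ' if local else ''}INFILE '{escaped_path}'"]
    if on_duplicate:
        parts.append(on_duplicate)  # REPLACE or IGNORE goes before INTO TABLE
    parts += [
        f"INTO TABLE {_quote_ident(table)}",
        "CHARACTER SET utf8mb4",  # assumption: the file is UTF-8 encoded
        "FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '\"'",
        "LINES TERMINATED BY '\\n'",
    ]
    if skip_header:
        parts.append("IGNORE 1 LINES")
    parts.append("(" + ", ".join(_quote_ident(c) for c in columns) + ")")
    return "\n".join(parts) + ";"
```

For example, `build_load_data("/path/to/file.csv", "staging_csv", ["col1", "col2", "col3", "col4"])` reproduces the statement shown earlier, with the character set clause added.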

Using MySQL Workbench or command line utilities

GUI tools like MySQL Workbench simplify imports for users who prefer a graphical workflow. In Workbench, you can use the Table Data Import Wizard to map CSV columns to table fields and preview the data before importing. Command line users can run the same LOAD DATA INFILE statements shown above from the mysql client, or use the mysqlimport utility, which provides a command-line interface to LOAD DATA. Both approaches benefit from running the import inside a transaction, so failures don’t leave the database in an inconsistent state. Keeping a copy of the source CSV and a log of the import parameters makes audits reproducible.

Common issues and troubleshooting

CSV imports often fail because of a mismatch between CSV fields and table columns, encoding mishaps, or server permissions. Common errors include data type mismatches (trying to store text in a numeric column), missing files (the server can’t access the path), and file loading restricted by the server’s secure_file_priv setting, which limits LOAD DATA INFILE to a single directory or disables it entirely. Ensure your file is accessible to the MySQL server if you use LOAD DATA INFILE, or switch to LOCAL with local_infile enabled on both the client and the server. When in doubt, run a small test import with a subset of rows to isolate issues and gradually increase scope. Always verify that the number of loaded rows matches the source, and run spot checks on a few rows to confirm correctness.
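A quick way to catch field and column mismatches before the load is to count the fields in every record. The Python sketch below does this with the standard csv module, so quoted delimiters are handled correctly. The function name `field_count_mismatches` is hypothetical, and the record numbers it reports count parsed records (header included), which can differ from physical line numbers if quoted fields contain newlines.

```python
import csv
import io


def field_count_mismatches(csv_text: str, expected: int) -> list:
    """Return (record_number, field_count) for each record whose field count
    differs from `expected`. Blank lines are ignored."""
    bad = []
    reader = csv.reader(io.StringIO(csv_text, newline=""))
    for record_no, row in enumerate(reader, start=1):
        if row and len(row) != expected:
            bad.append((record_no, len(row)))
    return bad
```

An empty result means every record has the expected number of fields; anything else points at the records to inspect first.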

Performance considerations: batching, indexing, and transactions

Performance matters for CSV imports, especially with very large datasets. Split very large files into batches and drop nonessential secondary indexes before the bulk load, then rebuild them afterward; ALTER TABLE ... DISABLE KEYS only affects nonunique indexes on MyISAM tables and has no effect on InnoDB. Wrapping the load (or each batch) in a transaction ensures atomicity and easier rollback if errors occur. If you’re dealing with numeric precision or date parsing, consider transforming the data during the staging phase or in a pre-load step. Finally, keep the source CSV in a versioned location and log the import parameters so you can reproduce results exactly in future runs.
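Batching can be done on the file side, by splitting the CSV into smaller pieces that are each loaded separately. The sketch below is one possible approach in Python; the generator name `split_rows` is hypothetical, and it works on in-memory text for brevity, whereas a real run over a very large file would stream from and to disk.

```python
import csv
import io
from itertools import islice


def split_rows(csv_text: str, rows_per_chunk: int):
    """Yield CSV chunks of at most `rows_per_chunk` data rows,
    repeating the header row at the top of every chunk."""
    reader = csv.reader(io.StringIO(csv_text, newline=""))
    header = next(reader, None)
    if header is None:  # empty input: nothing to yield
        return
    while True:
        batch = list(islice(reader, rows_per_chunk))
        if not batch:
            break
        out = io.StringIO(newline="")
        writer = csv.writer(out, lineterminator="\n")
        writer.writerow(header)
        writer.writerows(batch)
        yield out.getvalue()
```

Because each chunk keeps its header, the same LOAD DATA statement with IGNORE 1 LINES works unchanged for every piece.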

Authority sources

For further reading and official guidance, consult the MySQL documentation and credible data-loading references. The following sources provide authoritative details on loading CSV data into MySQL and related import techniques:

- https://dev.mysql.com/doc/refman/8.0/en/load-data.html

- https://dev.mysql.com/doc/refman/8.0/en/load-data-local.html

- https://docs.oracle.com/en/database/oracle/database/19.3/advjs/load-data.html

- https://en.wikipedia.org/wiki/CSV

These resources complement the practical guidance in this article and help you understand the underpinnings of data ingestion in MySQL.

Tools & Materials

- MySQL server (or MariaDB) with appropriate privileges (version 5.7+ recommended; ensure you can run LOAD DATA INFILE or LOAD DATA LOCAL INFILE)

- CSV file to import, UTF-8 encoded (should have a header row mapping to destination columns)

- Database user with the INSERT privilege on the target table, plus the FILE privilege for server-side LOAD DATA INFILE (if using LOCAL, the client must be able to read the local file and local_infile must be enabled)

- MySQL Workbench or terminal client (use to execute import commands or GUI steps)

- Text editor or scriptable CSV preprocessor (helpful for cleaning and normalizing data)

- Optional: staging table (helps validate data before final insert)

Steps

Estimated time: 60-90 minutes

1. Prepare the CSV

Verify encoding, delimiter, and header mapping. Clean stray characters and ensure numeric fields are consistent. Create a small test CSV to validate the import flow.

Tip: Check for BOM or unusual separators that can break parsing.

2. Create or select target schema

Define the table with appropriate data types and constraints. Decide whether to use a staging table for cleansing.

Tip: Align column order to match the CSV header to avoid mapping errors.

3. Choose the import method

Decide between LOAD DATA INFILE, LOAD DATA LOCAL INFILE, or an INSERT-based approach based on environment.

Tip: For large files, prefer LOAD DATA INFILE with minimal transformations.

4. Write the import statement or script

Construct the SQL command with proper field and line terminators and skip header lines if needed.

Tip: Use IGNORE 1 LINES to skip header when your CSV has a header row.

5. Execute the import inside a transaction

Begin a transaction, run the load, and commit if row counts match; roll back on error.

Tip: This prevents partial imports from leaving the database inconsistent.

6. Validate loaded data

Run counts and spot-check several rows to ensure data type alignment and value accuracy.

Tip: Use a sampling approach to quickly verify correctness.

7. Clean up and optimize

Index the final table if needed, remove the staging table, and archive the source CSV.

Tip: Document the mapping and keep a changelog for audits.

8. Automate for future imports

Wrap the process into a script and schedule recurring imports if needed. Keep the load in a client-side script, because MySQL does not permit LOAD DATA inside stored procedures.

Tip: Include logging and error handling in every run.
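The load-inside-a-transaction and validation steps above can be combined in a small helper. The Python sketch below is written against the generic DB-API interface (`cursor()`, `commit()`, `rollback()`) rather than any specific MySQL driver. The function name `load_and_verify` is hypothetical, and it assumes autocommit is off, the table uses a transactional engine such as InnoDB, and the table name comes from trusted configuration rather than user input.

```python
def load_and_verify(conn, load_sql: str, table: str, expected_rows: int) -> int:
    """Run `load_sql`, then commit only if the table grew by `expected_rows`.

    `conn` is any DB-API connection with autocommit disabled. On a count
    mismatch or any error, the transaction is rolled back and the error
    is re-raised so the caller can log it.
    """
    cur = conn.cursor()
    try:
        cur.execute(f"SELECT COUNT(*) FROM {table}")
        before = cur.fetchone()[0]

        cur.execute(load_sql)  # e.g. a LOAD DATA ... statement

        cur.execute(f"SELECT COUNT(*) FROM {table}")
        loaded = cur.fetchone()[0] - before
        if loaded != expected_rows:
            raise ValueError(f"expected {expected_rows} rows, loaded {loaded}")

        conn.commit()
        return loaded
    except Exception:
        conn.rollback()  # leave the table as it was before the load
        raise
    finally:
        cur.close()
```

The expected count would normally be the number of data rows in the source CSV, excluding the header. Comparing row growth rather than the absolute count lets the same check work for tables that already contain data.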

People Also Ask

What is the easiest method to import a CSV into MySQL?

For many users, the simplest approach is LOAD DATA INFILE or LOAD DATA LOCAL INFILE, especially for large CSVs. GUI tools like MySQL Workbench can guide the steps visually. For small datasets, INSERT statements are feasible but slower.

Use LOAD DATA INFILE or a GUI tool for simple CSV imports, especially for larger files.

Do I need a staging table before loading?

A staging table helps validate and cleanse data before moving it to the final destination. It reduces risk by catching type mismatches and bad values early.

A staging table helps you validate data before final insertion.

How should I handle headers in the CSV during import?

Skip the header row with IGNORE 1 LINES and map columns explicitly by listing the target columns in the import statement, in the same order as the fields in the file.

Skip the header and map columns precisely.

What about UTF-8 encoding?

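Ensure the CSV is UTF-8 encoded and that the MySQL client, connection, and table use utf8mb4. MySQL’s legacy utf8 character set is an alias for utf8mb3, which stores at most three bytes per character and cannot hold characters outside the Basic Multilingual Plane, such as emoji. Run SET NAMES utf8mb4 on the connection and avoid a BOM if possible.

Use utf8mb4 and run SET NAMES utf8mb4.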

How can I import very large CSV files efficiently?

Use LOAD DATA INFILE, split very large files into batches, drop nonessential secondary indexes during the load and rebuild them afterward, and commit after each batch.

Load in chunks and disable nonessential indexes during import.

Is there a way to automate CSV imports?

Yes. Use scripts (Python, Bash) and schedule them with cron or Task Scheduler; add logging for auditing. Keep the load itself in a client-side script, because MySQL does not permit LOAD DATA inside stored procedures.

Automate with scripts and scheduling; log runs.


Main Points

- Prepare your CSV with consistent encoding and headers

- Design a compatible target table before import

- Choose an import method suited to file size and needs

- Validate results with row counts and spot checks

- Automate with logging for repeatable imports