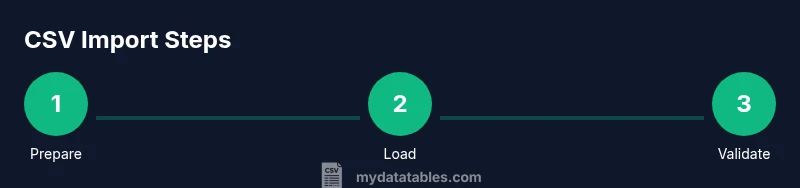

SQL Workbench CSV Import: A Practical Step-by-Step Guide

Learn how to import CSV data into a database using SQL Workbench/J with practical steps, tips, and validation strategies for reliable data integration.

This guide shows how to import a CSV file into a database with SQL Workbench/J using a workflow that preserves data types and handles common delimiters. You'll need a CSV file, a target database connection, and permission to create tables and import data. The guide covers preparation, import steps, and validation.

What SQL Workbench/J CSV import is and why you might use it

According to MyDataTables, SQL Workbench/J CSV import is the process of loading structured data from a CSV file into a database through SQL Workbench/J. The tool combines a GUI with SQL scripting and connects to multiple DBMS backends over JDBC (MySQL, PostgreSQL, Oracle, SQL Server, and more). The approach is especially useful when your data pipelines originate from flat files or when you need to consolidate CSV data for analytics in a centralized warehouse. The workflow has three parts: prepare the file, map its columns to the table schema, and validate after the load. With well-prepared CSVs, the import reduces manual data entry and speeds up integration tasks while preserving data types and encoding. For teams using MyDataTables guides, the routine becomes a repeatable process rather than a one-off operation.

Prerequisites and preparing your CSV file for import

A smooth import starts with a clean CSV. Use UTF-8 encoding, a header row with column names, consistent quoting, and a single delimiter throughout. Check that numeric and date fields in the CSV can be converted to the target table's data types. Remove extraneous columns and make sure no row ends with a trailing delimiter, which most parsers read as an extra empty field. Before running a full load, import a small subset of the data to confirm that the mapping works as expected. This preparation helps you spot issues such as mismatched column order or missing values early. It is also worth comparing the CSV header against the table schema up front, so you know which columns need remapping or type conversion before you begin.
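The checks above can be sketched as a small pre-flight script that runs before the file ever reaches SQL Workbench/J. This is a minimal illustration using only the Python standard library; the function name `preflight_check` and the sample column names are hypothetical, and the checks cover only encoding, header match, and field counts.

```python
import csv
import io


def preflight_check(raw_bytes, expected_columns, delimiter=","):
    """Return a list of human-readable problems found in a CSV payload."""
    try:
        text = raw_bytes.decode("utf-8")
    except UnicodeDecodeError as exc:
        return [f"not valid UTF-8: {exc}"]

    rows = list(csv.reader(io.StringIO(text, newline=""), delimiter=delimiter))
    if not rows:
        return ["file is empty"]

    problems = []
    header = [name.strip() for name in rows[0]]
    if header != expected_columns:
        problems.append(f"header {header} does not match expected {expected_columns}")

    # Record numbers start at 2 because record 1 is the header.
    for record_no, row in enumerate(rows[1:], start=2):
        if len(row) != len(header):
            problems.append(
                f"row {record_no}: expected {len(header)} fields, found {len(row)}"
            )
    return problems


# Hypothetical sample data: the second file has a trailing delimiter.
clean = b"id,name\n1,Ann\n2,Bob\n"
trailing = b"id,name\n1,Ann,\n"
print(preflight_check(clean, ["id", "name"]))
print(preflight_check(trailing, ["id", "name"]))
```

A file that passes these checks can still fail on type conversion, so the small test import remains worthwhile.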

Understanding SQL Workbench/J's import options

SQL Workbench/J offers multiple ways to bring CSV data into a database. The most common path is the import wizard, which provides step-by-step prompts for delimited text, header usage, quotes, and encoding. Alternatively, you can script a load with SQL Workbench/J's WbImport command or with DBMS-specific commands like COPY or LOAD DATA. The GUI is helpful for one-off imports and quick ad-hoc loads, while scripted loads support repeatable pipelines. In both modes, align the CSV columns with the destination table columns and consider converting dates to the target format before the load. For automation, you can wrap the import in a batch file or a simple Java-based tool that uses JDBC to run the load commands. In either case, set the encoding explicitly and handle data types explicitly to minimize surprises during execution.

Step-by-step: Import using the GUI import wizard

The GUI wizard guides you through selecting the CSV file, choosing the delimiter, and mapping columns to table fields. Start by selecting the target connection and the database schema, then pick the import option. Next, specify UTF-8 encoding, set the delimiter to match the file (a comma for standard CSV), and indicate that the first row contains headers. Use the preview to confirm that the first few rows map correctly. Then choose whether to append to or overwrite existing data. Confirm the mapping and execute the import. If errors occur, review the error log and adjust the CSV format or the column mappings.

Command-line or SQL-based import for reproducible pipelines

For repeatable imports, prefer SQL statements to load CSV data, especially in automated pipelines. The commands vary by DBMS: PostgreSQL uses COPY, MySQL uses LOAD DATA INFILE, Oracle uses external tables or SQL*Loader, and SQL Server uses BULK INSERT. In SQL Workbench/J, you can issue these commands via the JDBC connection or wrap them in scripts that execute through the tool. Note that COPY, LOAD DATA INFILE, and BULK INSERT read the file from the database server's filesystem, so the CSV must be at a path the server can access. Consider loading the CSV into a staging table first, then transforming and inserting into the final table. This approach lets you rerun the load with minimal risk, track changes, and apply data quality checks between loads.
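As a rough illustration of how the per-DBMS commands differ, the sketch below builds a bulk-load statement for a comma-delimited CSV with a header row. The helper `build_load_statement`, the table name, and the file path are hypothetical; the options shown are one common combination, not the only valid one, and the SQL Server variant assumes a version that supports `FORMAT = 'CSV'` (2017 or later). Oracle is left out because it loads through external tables or a SQL*Loader control file rather than a single statement.

```python
import re

# Optional schema prefix plus a plain identifier, e.g. "staging.customers".
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*(\.[A-Za-z_][A-Za-z0-9_]*)?$")

_TEMPLATES = {
    "postgresql": (
        "COPY {table} FROM '{path}' "
        "WITH (FORMAT csv, HEADER true, ENCODING 'UTF8');"
    ),
    "mysql": (
        "LOAD DATA INFILE '{path}' INTO TABLE {table} "
        "CHARACTER SET utf8mb4 "
        "FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '\"' "
        "LINES TERMINATED BY '\\n' "
        "IGNORE 1 LINES;"
    ),
    "sqlserver": (
        "BULK INSERT {table} FROM '{path}' "
        "WITH (FORMAT = 'CSV', FIRSTROW = 2, FIELDTERMINATOR = ',');"
    ),
}


def build_load_statement(dbms, table, path):
    """Return a server-side bulk-load statement for the given DBMS."""
    if dbms not in _TEMPLATES:
        raise ValueError(f"no template for DBMS {dbms!r}")
    if not _IDENTIFIER.match(table):
        raise ValueError(f"unexpected table name {table!r}")
    # Double any single quotes so the path stays inside its SQL string literal.
    safe_path = path.replace("'", "''")
    return _TEMPLATES[dbms].format(table=table, path=safe_path)


for name in ("postgresql", "mysql", "sqlserver"):
    print(build_load_statement(name, "staging.customers", "/data/customers.csv"))
```

Generating the statement from one place keeps the file path and table name consistent across environments, which helps when the same load is rerun.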

Handling data types and transformation during import

CSV stores all data as text, so mapping to proper types during import is critical. Decide how to handle dates (ISO, mm/dd/yyyy, or custom formats), numbers (decimal separators, thousands separators), booleans, and null values. In the GUI, you can specify cast rules for each column, or you can convert values in the CSV before import. If you anticipate data-type drift, create a staging table with flexible types (e.g., text) and then cast to strict types in a follow-up ETL step. Whichever route you choose, validate the conversions and keep a log of any type mismatches so you can audit data lineage.
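The staging-then-cast pattern can be sketched as follows. This example uses an in-memory SQLite database purely as a stand-in for the target DBMS; the table names `staging` and `typed`, the accepted date formats, and the assumption that commas are thousands separators are all illustrative choices, not rules from SQL Workbench/J.

```python
import sqlite3
from datetime import datetime

DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")  # assumed input formats


def to_iso_date(value):
    """Parse a date with the first matching format and return ISO text."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {value!r}")


def promote(conn):
    """Cast all-text staging rows into the typed table; return rejected rows."""
    rejects = []
    rows = conn.execute("SELECT id, amount, signup_date FROM staging").fetchall()
    for raw_id, raw_amount, raw_date in rows:
        try:
            record = (
                int(raw_id),
                # Assumes "," is a thousands separator; empty text becomes NULL.
                float(raw_amount.replace(",", "")) if raw_amount else None,
                to_iso_date(raw_date) if raw_date else None,
            )
        except ValueError as exc:
            rejects.append((raw_id, str(exc)))  # log of type mismatches
            continue
        conn.execute("INSERT INTO typed VALUES (?, ?, ?)", record)
    return rejects


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE staging (id TEXT, amount TEXT, signup_date TEXT)")
conn.execute(
    "CREATE TABLE typed (id INTEGER PRIMARY KEY, amount REAL, signup_date TEXT)"
)
conn.executemany(
    "INSERT INTO staging VALUES (?, ?, ?)",
    [
        ("1", "1,250.50", "2024-01-15"),
        ("2", "", "03/09/2024"),
        ("3", "12", "15.01.2024"),  # date format not in DATE_FORMATS
    ],
)
rejects = promote(conn)
print(conn.execute("SELECT * FROM typed ORDER BY id").fetchall())
print(rejects)
```

Rejected rows stay in the staging table, so they can be corrected and promoted again without reloading the file.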

Performance considerations for large CSV files

Large files can strain memory and increase import times. Strategies include splitting large CSVs into smaller chunks, adjusting the batch size used by the import, and dropping or disabling nonessential indexes during the load. Decide on transaction scope deliberately: a single transaction keeps the load atomic, while committing in batches limits how much work a failure rolls back. Consider parallel loading if your DBMS supports it, and monitor progress while the import runs. For very large datasets, a staged approach using a dedicated staging table and incremental loads yields better performance and easier error handling.
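To make the batching idea concrete, here is a minimal sketch that streams rows from a CSV reader and commits after each fixed-size batch, so only one batch is held in memory at a time. SQLite stands in for the target database, and the function name `load_in_batches`, the `users` table, and the batch size of 1000 are hypothetical.

```python
import csv
import io
import sqlite3
from itertools import islice


def load_in_batches(conn, reader, insert_sql, batch_size=1000):
    """Insert rows from a csv.reader in batches, committing after each one."""
    total = 0
    while True:
        batch = list(islice(reader, batch_size))  # pull at most batch_size rows
        if not batch:
            break
        conn.executemany(insert_sql, batch)
        conn.commit()  # a later failure rolls back only the current batch
        total += len(batch)
    return total


# Synthetic 2,500-row file, built in memory for the demonstration.
csv_text = "id,name\n" + "".join(f"{i},user{i}\n" for i in range(1, 2501))
reader = csv.reader(io.StringIO(csv_text))
next(reader)  # skip the header row

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER, name TEXT)")
loaded = load_in_batches(conn, reader, "INSERT INTO users VALUES (?, ?)")
print(loaded)
```

The trade-off is that a batched load is no longer all-or-nothing, so a failed run needs a way to identify which batches already committed.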

Validation, verification, and post-import checks

After the load finishes, perform checks to ensure data quality. Compare row counts between the CSV and the destination table, verify a random sample of rows, and run integrity checks. Look for null values in non-nullable columns, verify key constraints, and confirm that no strings were truncated. Maintain a change log with the number of imported rows, any duplicates, and the timing of the import. Standardized post-load checks like these support audit trails and data governance.
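A few of these checks can be bundled into a single report, sketched below against an in-memory SQLite table. The function `post_load_report`, the `customers` table, and its columns are hypothetical; table and column names are interpolated into the SQL directly, so the sketch assumes they come from trusted configuration rather than user input.

```python
import sqlite3


def post_load_report(conn, table, expected_rows, key_column, required_columns):
    """Summarize row count, duplicate keys, and unexpected NULLs after a load."""
    actual = conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]
    duplicate_keys = conn.execute(
        f"SELECT COUNT(*) FROM ("
        f"SELECT {key_column} FROM {table} "
        f"GROUP BY {key_column} HAVING COUNT(*) > 1)"
    ).fetchone()[0]
    nulls = {
        column: conn.execute(
            f"SELECT COUNT(*) FROM {table} WHERE {column} IS NULL"
        ).fetchone()[0]
        for column in required_columns
    }
    return {
        "row_count_matches": actual == expected_rows,
        "duplicate_keys": duplicate_keys,
        "nulls": nulls,
    }


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (customer_id INTEGER, email TEXT)")
conn.executemany(
    "INSERT INTO customers VALUES (?, ?)",
    [(1, "a@example.com"), (2, None), (2, "c@example.com")],
)
report = post_load_report(
    conn, "customers", expected_rows=3,
    key_column="customer_id", required_columns=["email"],
)
print(report)
```

Writing the report to the change log after every load gives the audit trail a consistent shape.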

Real-world example: Import a sample customers.csv

In practice, you might import a customers.csv with fields like customer_id, name, email, signup_date, and status. Create a table with matching columns and appropriate data types (integer, varchar, varchar, date, varchar). Run through the GUI importer or a scripted COPY command to load the data, then perform validation queries such as SELECT COUNT(*) and SELECT MIN(signup_date) to confirm the results. By following the full workflow described above, you can replicate this import pattern for other CSV datasets. Document each step so the import can be reproduced and audited later.
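The customers.csv walkthrough can be rehearsed end to end before touching a real database. The sketch below uses invented sample rows and an in-memory SQLite database as a stand-in; SQLite has no dedicated date type, so signup_date is stored as ISO text, which still sorts correctly for MIN.

```python
import csv
import io
import sqlite3

# Invented sample data with the five fields named in the text.
CUSTOMERS_CSV = """customer_id,name,email,signup_date,status
1,Ada Park,ada@example.com,2023-11-02,active
2,Ben Ortiz,ben@example.com,2024-01-15,inactive
3,"Cho, Lin",lin@example.com,2024-03-09,active
"""

conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,
        name        TEXT NOT NULL,
        email       TEXT,
        signup_date TEXT,
        status      TEXT
    )"""
)

# Map CSV columns to table columns by header name, casting the key to int.
rows = [
    (int(r["customer_id"]), r["name"], r["email"], r["signup_date"], r["status"])
    for r in csv.DictReader(io.StringIO(CUSTOMERS_CSV))
]
conn.executemany("INSERT INTO customers VALUES (?, ?, ?, ?, ?)", rows)
conn.commit()

# The two validation queries mentioned in the text.
row_count = conn.execute("SELECT COUNT(*) FROM customers").fetchone()[0]
earliest = conn.execute("SELECT MIN(signup_date) FROM customers").fetchone()[0]
print(row_count, earliest)
```

The quoted name in the third row contains a comma, which is a quick way to confirm that quoting is handled before a full load.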

Tools & Materials

- CSV file (UTF-8 encoding preferred; include header row)

- Database connection profile (host, port, database, user credentials)

- SQL Workbench/J (latest stable release; ensure JDBC drivers are installed)

- Target table schema (create or verify table with proper column types)

- Text editor (for quick CSV cleanup before import)

Steps

Estimated time: 60-120 minutes

- 1. Prepare environment

Ensure SQL Workbench/J is installed, you have a CSV file ready, and you know the target database connection details.

Tip: Test reading a small sample row to confirm access.

- 2. Connect to the database

Open SQL Workbench/J, set up the connection profile, and verify you can run a simple query against the target schema.

Tip: Use a dedicated user account with limited privileges for imports.

- 3. Create or verify target table

If the table does not exist, create it with columns that match the CSV columns in type and order; decide which columns may be nullable.

Tip: Check for appropriate data types to avoid implicit casting.

- 4. Configure import settings

In the import wizard, set the delimiter, encoding (UTF-8), header row usage, and quote character as needed.

Tip: Set batch size to balance performance and error reporting.

- 5. Run the import via GUI

Use the Import/Data Load tool to map CSV columns to table columns, review a preview, then execute.

Tip: Always start with a small test file.

- 6. Verify import results

Query the target table to confirm row counts and sample data match the CSV.

Tip: Look for truncated strings or misformatted dates.

- 7. Handle errors and re-run

If errors occur, adjust the CSV or mapping, fix the data types, and re-run the load.

Tip: Log errors and reprocess only failed rows.

- 8. Post-import validation

Run integrity checks, count rows, check for NULLs where not allowed, and validate key constraints.

Tip: For large imports, consider adding an index on the key columns after the load so validation queries run quickly.
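The "log errors and reprocess only failed rows" tip from the error-handling step can be sketched like this. The example inserts rows one at a time into an in-memory SQLite table, which is slower than a bulk load but makes each rejection attributable to a single row; the function `insert_with_rejects` and the `orders` table are hypothetical.

```python
import sqlite3


def insert_with_rejects(conn, insert_sql, rows):
    """Insert rows one at a time; return (row, error text) for each rejection."""
    rejects = []
    for row in rows:
        try:
            conn.execute(insert_sql, row)
        except sqlite3.Error as exc:
            rejects.append((row, str(exc)))  # keep the row and the reason
    conn.commit()
    return rejects


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, qty INTEGER NOT NULL)")
insert_sql = "INSERT INTO orders VALUES (?, ?)"

# First pass: one row has a missing quantity, one repeats an existing key.
first_pass = insert_with_rejects(
    conn, insert_sql, [(1, 5), (2, None), (1, 7), (3, 2)]
)
for row, reason in first_pass:
    print(row, reason)

# Second pass: only the corrected versions of the rejected rows are loaded.
second_pass = insert_with_rejects(conn, insert_sql, [(2, 1), (4, 7)])
print(conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0])
```

Because accepted rows are already committed, the second pass touches only the corrected rows rather than repeating the whole load.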

People Also Ask

Can I import very large CSV files with SQL Workbench/J without memory issues?

Yes, but large files require strategy: chunk the file, adjust batch sizes, and monitor memory usage. Use staging and incremental loads when possible.

Which databases are supported by SQL Workbench/J for CSV import?

SQL Workbench/J supports many major databases via JDBC. Import features may vary by DBMS; refer to the database's COPY or LOAD DATA syntax for best results.

How do I map CSV columns to table columns effectively?

Ensure the CSV header aligns with your table columns, or use a mapping step in the GUI to assign each CSV column to a table column. Validate data types and handle date formats explicitly.

What encoding should CSV files use for reliable imports?

UTF-8 is recommended for most CSV imports to avoid characters being misread. If your data includes special characters, confirm the encoding matches the source file.

How can I handle null values during import?

Decide whether NULLs are allowed in each column and configure the import to insert NULLs or default values accordingly. Missing data may require preprocessing in the CSV.

What if the header row doesn't match the target schema?

Adjust the CSV header or remap columns in the import tool. If needed, transform the CSV beforehand or create a staging table to normalize columns.

Main Points

- Plan before you import: inspect CSV structure and target schema

- Use UTF-8 encoding and a header row for reliable mapping

- Test with a small sample and validate results thoroughly

- Automate checks to prevent regressions on larger imports

- Leverage MyDataTables guidance to optimize CSV import workflows