Writing to CSV: A Practical Guide for Data Professionals

Practical, repeatable methods for writing data to CSV across Python, PowerShell, and spreadsheets. Covers encoding, delimiters, headers, and validation for reliable, portable CSV outputs.

Goal: learn how to write data to a CSV file across common tools. This guide covers encoding, delimiters, headers, quoting, and basic validation to ensure portable, reliable CSV outputs. You'll see practical examples in Python, PowerShell, and spreadsheet workflows, plus tips for streaming large datasets and testing results.

Understanding CSV basics and why writing matters

CSV is one of the simplest formats for tabular data. The name stands for comma-separated values, but in practice the delimiter varies; most tools also accept semicolons and tabs. Because CSV is plain text, it is human-readable and easy to generate programmatically. When we say 'writing to CSV', we mean transforming structured data (lists, records, rows) into a text file where each line represents a record and each delimited value a field. This operation is central to data pipelines, reporting, data exchange with partners, and loading data into BI tools. In real-world workflows, you might pull data from a database, a REST API, or a data lake, then serialize it to CSV for downstream consumption. The decisions you make at write time (encoding, delimiter, whether to include a header, how to quote fields) determine how reliably downstream systems will parse the file. The expected output is simple: rows of delimited text, with or without a header line.

Core considerations: encoding, delimiters, headers, and quoting

CSV handling hinges on a few non-negotiables. Encoding determines how characters are represented; UTF-8 is the de facto standard in modern pipelines, and a byte order mark (BOM) is needed only for the few consumers that rely on it to detect UTF-8, such as Excel on Windows. Delimiters vary by locale and tool; a comma is typical, but semicolon- or tab-delimited files are common in European locales and in data from certain vendors. A header row improves readability and downstream mapping, although some older pipelines expect data without headers. Quoting rules are critical: if a field contains the delimiter, a quote character, or a newline, it must be enclosed in quotes, and internal quotes are escaped by doubling them (e.g., the value She said, "Hello" is written as "She said, ""Hello"""). Be consistent about line endings (CRLF vs LF) and avoid mixing conventions in a single file. By standardizing encoding, delimiter, header presence, and quoting, you reduce parsing errors and integration friction across systems.
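To make the quoting and delimiter rules concrete, here is a minimal sketch using Python's built-in csv module. The helper name render_row is an illustrative assumption, not part of any library; it relies on the module's default minimal quoting, which quotes a field only when it has to.

```python
import csv
import io


def render_row(fields, delimiter=","):
    """Serialize one row to CSV text using the csv module's default quoting."""
    buffer = io.StringIO(newline="")
    writer = csv.writer(buffer, delimiter=delimiter, lineterminator="\n")
    writer.writerow(fields)
    return buffer.getvalue()


# A field containing the delimiter and quotes is wrapped in quotes,
# and the internal quotes are doubled.
print(render_row(["Alice", 'She said, "Hello"']))

# With a semicolon delimiter, a comma inside a field needs no quoting.
print(render_row(["1,5", "plain"], delimiter=";"))
```

Letting the writer decide when to quote is usually safer than quoting by hand, because the same rules are applied to every field.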

Language and tool options: Python, PowerShell, Excel, and shell utilities

There are many ways to write CSV, each with trade-offs. In Python, the built-in csv module and libraries like pandas offer robust options for both simple and complex pipelines. PowerShell provides Export-Csv for quick scripting in Windows environments. Excel can save data as CSV, but beware of formatting changes and delimiter defaults; prefer saving raw data rather than exporting from a formatted sheet. On the command line, utilities like printf, awk, or xsv can construct CSV data from streams or logs. The choice depends on your data source, performance needs, and the target ecosystem. For repeatable tasks, prefer code-based approaches over ad-hoc manual exports, so you can version the logic and run it as part of an automated workflow.

Practical writing patterns: building and streaming

Practical CSV writing means choosing a pattern that fits your data size and update frequency. For small datasets, you can accumulate rows in memory and write them in one go. For larger datasets, streaming is essential: in Python, for example, open the file with newline='', create a writer, write the header once, and then write each row as it becomes available. Avoid building lines by string concatenation, which is slower and easy to get wrong; use a library's writer abstraction and make sure the file is flushed or closed after writing. If your data comes from a streaming source, consider buffering only a small batch to balance memory usage and write latency. Always check that the header matches your data model, and keep the schema consistent across all writes.
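The streaming pattern described above can be sketched as follows. The function names stream_to_csv and generate_rows, the column names, and the temporary output path are illustrative assumptions; the point is that rows come from a generator and are never held in memory all at once.

```python
import csv
import os
import tempfile


def stream_to_csv(rows, headers, path, encoding="utf-8"):
    """Write rows from any iterable to path one at a time; return the row count."""
    count = 0
    with open(path, "w", newline="", encoding=encoding) as f:
        writer = csv.writer(f)
        writer.writerow(headers)  # the header is written exactly once
        for row in rows:  # rows may be a generator, so memory use stays flat
            writer.writerow(row)
            count += 1
    return count  # leaving the with-block closes and flushes the file


def generate_rows(n):
    """Hypothetical data source that yields rows lazily."""
    for i in range(n):
        yield [i, f"item-{i}", i * 1.5]


# Demo: write five rows to a file in a temporary directory.
demo_path = os.path.join(tempfile.mkdtemp(), "stream_demo.csv")
written = stream_to_csv(generate_rows(5), ["id", "name", "value"], demo_path)
print(f"wrote {written} rows to {demo_path}")
```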

Handling large CSVs: streaming, chunking, memory considerations

Large CSVs can exhaust memory if you accumulate too many rows. Streaming row by row minimizes peak memory usage, but you must manage backpressure and disk I/O carefully. Use chunking strategies: process a fixed-size batch, write it to disk, then clear the batch. Compression (gzip) can reduce disk usage and transfer time, but make sure your downstream tools can read compressed CSVs. For columns that hold free-form or variable-length text, apply quoting consistently so that embedded delimiters and newlines do not cause parsing errors. Finally, document the encoding and delimiter choices in your pipeline so future contributors know the exact format you produced.
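As a rough sketch of chunked writing combined with gzip compression, the example below uses only the Python standard library. The function name write_csv_gz_in_chunks, the chunk size, and the demo data are assumptions chosen for illustration; tune the batch size to your own memory budget.

```python
import csv
import gzip
import os
import tempfile
from itertools import islice


def write_csv_gz_in_chunks(rows, headers, path, chunk_size=1000):
    """Write rows to a gzip-compressed CSV in fixed-size batches; return the row count."""
    iterator = iter(rows)
    total = 0
    # gzip.open in text mode ("wt") accepts encoding and newline like open().
    with gzip.open(path, "wt", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(headers)
        while True:
            chunk = list(islice(iterator, chunk_size))  # at most chunk_size rows in memory
            if not chunk:
                break
            writer.writerows(chunk)
            total += len(chunk)
    return total


# Demo: 2,500 generated rows written in batches of 1,000.
demo_path = os.path.join(tempfile.mkdtemp(), "large_demo.csv.gz")
total_rows = write_csv_gz_in_chunks(
    ([i, f"value-{i}"] for i in range(2500)), ["id", "label"], demo_path
)
print(f"wrote {total_rows} rows")
```

Reading the file back with gzip.open(path, "rt", newline="") and csv.reader is a quick way to confirm that a downstream Python consumer can handle the compressed output.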

Data quality checks when writing to CSV

Quality control happens at write time. Ensure every row has the same number of columns, matching your header. Validate data types (numbers, dates, strings) and cast as needed to avoid inconsistent formatting. Check for characters that require escaping, such as the delimiter, quotes, or newlines. If your CSV will be consumed by automated parsers, run a quick validation pass with a lightweight parser to catch malformed rows. Keep a small test suite of input samples that cover edge cases like empty fields, very long text, or special characters. These checks prevent downstream failures and reduce debugging time.
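A lightweight validation pass along these lines might look like the sketch below. The function name validate_rows and the wording of the problem messages are assumptions; the check covers only the header and the per-row column count, not data types.

```python
import csv
import io


def validate_rows(stream, expected_headers):
    """Return a list of problems found in a CSV text stream; empty means it passed."""
    problems = []
    reader = csv.reader(stream)
    header = next(reader, None)
    if header != list(expected_headers):
        problems.append(f"header mismatch: {header!r}")
    for row in reader:
        if len(row) != len(expected_headers):
            # line_num counts physical lines read so far, which helps locate the row
            problems.append(
                f"line {reader.line_num}: expected {len(expected_headers)} "
                f"fields, got {len(row)}"
            )
    return problems


# Demo with an in-memory file: one row has an extra field, one is too short.
sample = io.StringIO("Name,Age\nAlice,30\nBob,25,extra\nCarol\n", newline="")
for problem in validate_rows(sample, ["Name", "Age"]):
    print(problem)
```

For a file on disk, pass an object opened with open(path, newline='', encoding='utf-8') instead of the in-memory stream.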

Real-world templates and code snippets

Below are practical skeletons you can adapt. The Python example uses the csv.DictWriter for clarity and safety, while the PowerShell snippet demonstrates a compact approach with Export-Csv. Adapt the field names and data sources to your domain, but keep the structure consistent so downstream code can reliably read the resulting files.

Python:

    import csv

    def write_csv(rows, headers, path, encoding='utf-8'):
        with open(path, 'w', newline='', encoding=encoding) as f:
            writer = csv.DictWriter(f, fieldnames=headers)
            writer.writeheader()
            for row in rows:
                writer.writerow(row)

PowerShell:

    $path = 'output.csv'
    $rows = @(
        [pscustomobject]@{Name='Alice'; Age=30; Country='US'},
        [pscustomobject]@{Name='Bob'; Age=25; Country='CA'}
    )
    $rows | Export-Csv -Path $path -NoTypeInformation -Encoding UTF8

Bash:

    #!/usr/bin/env bash
    # A simple inline CSV writer using printf
    path='output.csv'
    printf 'Name,Age,Country\n' > "$path"
    printf '%s,%d,%s\n' 'Charlie' 35 'GB' >> "$path"

These templates favor readability and maintainability. As your data grows, swap in a streaming approach or a dedicated CSV library to preserve performance and correctness.

Testing and validation strategies

Testing CSV output is essential to prevent regressions. Start with a small, representative dataset that exercises typical and edge cases: missing values, long text, quoted strings, and non-ASCII characters. Write unit tests that compare the produced file against an expected string, or parse the file back and verify row counts and field values. For larger pipelines, include end-to-end tests that generate the CSV from a simulated data source and validate the downstream consumption (schema, column order, and sample values). Maintain a changelog of write logic changes and run tests in CI to catch regressions early.
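One way to express both testing styles (comparing against an expected string, and parsing the file back) is a small unittest case like the sketch below. The function under test, rows_to_csv_text, and the sample records are hypothetical stand-ins for your own write logic.

```python
import csv
import io
import unittest


def rows_to_csv_text(rows, headers):
    """Hypothetical function under test: serialize dict rows to CSV text."""
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=headers, lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


class RoundTripTest(unittest.TestCase):
    # Edge cases: empty value, non-ASCII text, quotes and commas, long text, newline.
    EDGE_CASES = [
        {"name": "", "note": "missing name"},
        {"name": "Zoë", "note": 'quoted "text", with comma'},
        {"name": "Long", "note": "x" * 10_000},
        {"name": "Multi", "note": "line one\nline two"},
    ]

    def test_round_trip_preserves_values(self):
        text = rows_to_csv_text(self.EDGE_CASES, ["name", "note"])
        parsed = list(csv.DictReader(io.StringIO(text, newline="")))
        self.assertEqual(parsed, self.EDGE_CASES)

    def test_exact_output_for_simple_row(self):
        text = rows_to_csv_text([{"name": "Alice", "note": "ok"}], ["name", "note"])
        self.assertEqual(text, "name,note\nAlice,ok\n")
```

Saved as a test module, this can be run locally or in CI with python -m unittest. The round-trip test tends to be less brittle than the exact-string test, since it does not depend on quoting style or line endings.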

Best practices: Start-to-finish checklist

- Define a stable CSV schema with explicit headers and order.

- Always specify encoding (prefer UTF-8) and a consistent delimiter.

- Use library-based writers instead of ad-hoc string concatenation.

- Include a header row for clarity and tooling compatibility.

- Validate output with a lightweight parser and sample data.

- Document your format in project notes and CI pipelines.

- Reuse templates and parameterize file paths to support automation.

Video and cross-check resources

For those who prefer visual guides, search for tutorials that show writing to CSV with Python, PowerShell, and Excel. Look for examples that cover encoding, escaping, and large-file performance. You can also review official documentation for the csv module in Python and the Export-Csv cmdlet in PowerShell to understand API behavior and edge cases. Complementary resources from data-quality and data-ops teams help reinforce reliable practices.

Tools & Materials

- Computer with internet access (Necessary for researching and testing CSV workflows)

- Text editor or IDE (For writing scripts and CSV templates)

- Python 3.x runtime (Includes csv module used in examples)

- PowerShell or Bash shell (To run shell-based CSV writing examples)

- CSV file to write from or to generate (Target file path for write operations)

- Excel or Google Sheets (optional) (For manual validation and testing of exports)

- UTF-8 capable text editor (Helps verify encoding and special characters)

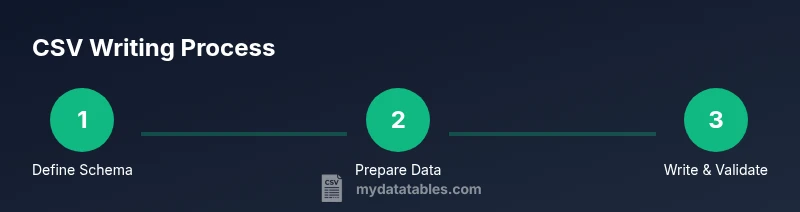

Steps

Estimated time: 60-90 minutes

1. Define your CSV schema

Outline the exact columns, their order, and the header names. Decide on data types and how you will represent missing values. This upfront design ensures consistent output across all tools.

Tip: Document the header names and order in a schema file to prevent drift.

2. Prepare your data

Collect and clean data from sources. Normalize data types, handle missing values, and convert to simple primitives suitable for CSV output (strings, numbers, dates).

Tip: Avoid embedding complex objects; serialize them to strings first.

3. Choose your writing method

Select the tool that best fits your environment and data size. For small datasets, in-memory writing is fine; for large datasets, prefer streaming/chunking.

Tip: Prioritize library writers over manual string joins for correctness.

4. Set file path and encoding

Create or open the target file with the correct encoding (UTF-8) and ensure the newline handling matches your platform.

Tip: Use newline='' in Python to avoid extra blank lines on Windows.

5. Write header row (if used)

Output the header as the first line, matching your schema. This helps downstream tools map columns correctly.

Tip: Always include a header unless you have a specific, documented reason not to.

6. Write data rows

Iterate over your data, writing one row at a time. Handle quoting automatically when fields contain delimiters or newlines.

Tip: Use a writer abstraction to avoid manual escaping mistakes.

7. Close and flush

Ensure the file is properly closed or the stream flushed to prevent truncated files. Validate that the file ends with a newline if required by consumers.

Tip: Always run a quick read-back test after closing.

8. Test with real data and edge cases

Run a small test suite with typical, boundary, and malformed inputs to verify robustness.

Tip: Automate tests in CI for ongoing safety.

9. Document and automate

Store your write logic in a script or module you can version. Add a simple CLI or function wrapper to reuse across projects.

Tip: Parameterize paths, encoding, and schema for reuse.
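Several of the steps above (a fixed schema, a chosen representation for missing values, conversion to primitives, and a reusable writer function) can be sketched together in Python. The schema, the field names, and the helpers to_primitive and write_records are all illustrative assumptions; representing missing values as empty strings is one possible convention, not the only valid one.

```python
import csv
import io
from datetime import date

# Hypothetical schema: column names and their order, defined in one place.
SCHEMA = ["id", "name", "signup_date"]


def to_primitive(value):
    """Convert a value to a CSV-friendly primitive."""
    if value is None:
        return ""  # assumed convention: missing values become empty fields
    if isinstance(value, date):
        return value.isoformat()  # dates are written as YYYY-MM-DD
    return value


def write_records(records, stream, schema=SCHEMA):
    """Write dict records to an open text stream using a fixed schema."""
    writer = csv.DictWriter(
        stream,
        fieldnames=schema,
        restval="",  # keys absent from a record become empty fields
        extrasaction="raise",  # keys outside the schema raise ValueError
        lineterminator="\n",
    )
    writer.writeheader()
    for record in records:
        writer.writerow({key: to_primitive(value) for key, value in record.items()})


# Demo: the second record has a missing name and no signup date.
buffer = io.StringIO(newline="")
write_records(
    [
        {"id": 1, "name": "Alice", "signup_date": date(2024, 1, 5)},
        {"id": 2, "name": None},
    ],
    buffer,
)
print(buffer.getvalue())
```

Raising on unexpected keys makes schema drift fail loudly at write time instead of silently dropping data.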

People Also Ask

What is a CSV and why is writing to CSV important?

A CSV is a plain-text table where each row is a record and each column is a field, separated by delimiters. Writing to CSV is important for data interchange, portability, and compatibility with many analytics and BI tools.

A CSV is a simple text table. Writing to CSV helps you move data between systems and tools easily.

Which encoding should I use when writing CSV?

UTF-8 is the recommended default encoding because it supports international characters and avoids many parsing issues across platforms.

Use UTF-8 as the standard encoding to ensure broad compatibility.

Should I always include a header row in CSV files?

Including a header row is best practice because it makes the file self-describing and easier for parsers to map columns. Some legacy systems may read without headers, but you should document your choice.

In most cases, include a header row and document the column order.

How do I handle quotes and delimiters inside fields?

If a field contains the delimiter, newline, or quotes, wrap the field in quotes and escape internal quotes by doubling them.

Wrap fields with quotes when needed and escape internal quotes by doubling.

What are best practices for large CSV files?

Stream writes rather than loading everything into memory. Use chunking, compression when appropriate, and validate progressively to avoid memory pressure.

Stream data and validate as you go for large files.

Which tools are best for beginners to write to CSV?

Python with the csv module is beginner-friendly and robust. PowerShell Export-Csv is convenient on Windows, and Excel offers quick manual exports when data is already in a spreadsheet.

Start with Python’s csv module or PowerShell Export-Csv for quick results.


Main Points

- Plan your CSV schema before writing.

- Choose the right tool for your data size and environment.

- Validate output to prevent downstream errors.

- Handle encodings and escaping consistently.

- Reuse templates and automate to maintain reliability.