Excel vs CSV: A Practical Comparison for Data Analysts

An analytical comparison of Excel and CSV to help data analysts, developers, and business users decide when to use each format for modeling, interchange, and reporting.

Understanding the landscape: why Excel vs CSV matters in modern data workflows

The choice between Excel and CSV is not merely a format preference; it defines how data is manipulated, shared, and consumed across teams. CSV acts as a lingua franca for data interchange, while Excel serves as a rich environment for analysis, visualization, and iterative modeling. In practice, organizations often start with a CSV export or import and then use Excel for deeper analysis, formatting, and collaboration. According to MyDataTables, the decision often hinges on whether the primary goal is human-readable reports or machine-friendly data transfer. This distinction helps set expectations about fidelity, reusability, and automation across downstream pipelines.

Core differences in data structure and fidelity

Excel files (.xlsx) encapsulate a grid of cells with metadata: formulas, formatting, named ranges, and charts. CSV is a plain-text representation of table rows and columns, with no embedded formulas or styles. This fundamental difference affects data fidelity: CSV preserves raw values, but not how they are computed or displayed. As a result, data consumers must re-create formulas or formatting when moving from CSV to Excel, or rely on downstream logic to interpret numeric types, dates, and locale-specific formats. Data analysts should plan for potential type coercion and delimiter handling when migrating between these formats.
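To make the type-coercion point concrete, here is a minimal sketch using Python's standard `csv` module. The sample data and the column names (`order_id`, `order_date`, `amount`) are hypothetical and stand in for a real export. It shows that every CSV field arrives as a plain string, so the consumer has to restore types explicitly.

```python
import csv
import io
from datetime import date
from decimal import Decimal

# Hypothetical sample standing in for a CSV export.
SAMPLE = "order_id,order_date,amount\n1001,2025-03-04,19.90\n1002,2025-03-05,5.00\n"


def read_typed(text):
    """Parse CSV text and convert each column to an explicit type."""
    rows = []
    for raw in csv.DictReader(io.StringIO(text)):
        rows.append({
            "order_id": int(raw["order_id"]),
            "order_date": date.fromisoformat(raw["order_date"]),
            "amount": Decimal(raw["amount"]),
        })
    return rows


# Without conversion, every field is a str, including numbers and dates.
raw_rows = list(csv.DictReader(io.StringIO(SAMPLE)))
typed_rows = read_typed(SAMPLE)
```

Here `raw_rows[0]["amount"]` is the string `"19.90"`, while `typed_rows[0]["amount"]` is a `Decimal`. Keeping the conversion in one place makes it easier to review when the export format changes.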

Interoperability and portability: when CSV shines

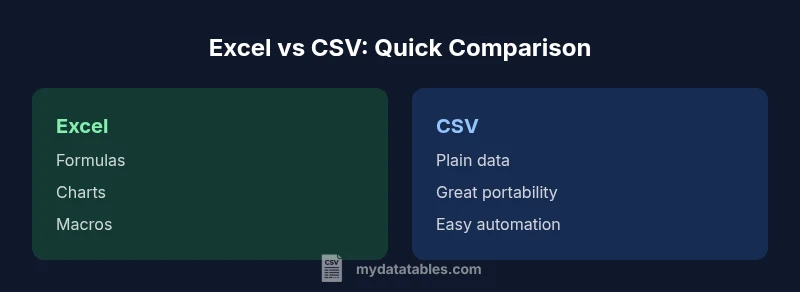

CSV’s strength is interoperability. Because it is plain text, CSV files are natively supported by almost every data tool, programming language, and database system. This makes CSV ideal for data ingestion pipelines, ETL processes, and cross-team handoffs where human readability is not the primary requirement. MyDataTables analysis highlights that CSV shines in automation contexts, where consistent parsing is needed across environments. However, CSV can introduce subtle issues around encoding, delimiter choice, and locale-specific interpretations of dates and numbers that teams must predefine and test.

Formula support, calculations, and features in Excel

Excel’s power lies in its ability to store and compute with formulas, produce dynamic charts, and apply formatting rules for presentation. Users can create macros, pivot tables, and advanced conditional formatting to drive insights directly within the workbook. This makes Excel the preferred tool for exploratory analysis, scenario planning, and storytelling with data. Yet these capabilities come with trade-offs: Excel files tend to be larger, are prone to versioning conflicts when several people edit copies, and are poorly suited to automated ingestion when downstream systems expect flat, text-based input.

Encoding, delimiters, and regional considerations in CSV

CSV files rely on a delimiter (comma, semicolon, or tab) and a character encoding (often UTF-8). Regional settings influence both the delimiter and the number format: locales that use a comma as the decimal separator commonly use a semicolon as the field delimiter, so a file written under one locale can be misread under another. In practice, teams should agree on a standard delimiter and encoding, include a header row, and use a consistent date and number format. Handling the byte order mark (BOM) and escaped characters correctly is also important to avoid data corruption during import and export.
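The following is a minimal sketch of defensive CSV reading with Python's standard library. The function name `read_csv_bytes` and the sample bytes are illustrative assumptions. Decoding with `utf-8-sig` drops a leading BOM if one is present, and `csv.Sniffer` is used only as a fallback when no delimiter has been agreed.

```python
import csv
import io


def read_csv_bytes(data, delimiter=None):
    """Decode CSV bytes as UTF-8 (dropping a BOM if present) and parse the rows."""
    text = data.decode("utf-8-sig")
    if delimiter is None:
        # Guess among comma, semicolon, and tab; an agreed convention is safer.
        delimiter = csv.Sniffer().sniff(text, delimiters=",;\t").delimiter
    return list(csv.reader(io.StringIO(text, newline=""), delimiter=delimiter))


# Hypothetical export: UTF-8 with BOM, semicolon-delimited, decimal commas.
SAMPLE = b"\xef\xbb\xbfname;price\r\nWidget;1,50\r\nGadget;2,75\r\n"

rows_explicit = read_csv_bytes(SAMPLE, delimiter=";")
rows_sniffed = read_csv_bytes(SAMPLE)
```

Sniffing is a heuristic and can guess wrong on small or irregular files, so passing the delimiter explicitly is the more predictable choice once a team convention exists.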

Performance considerations and large datasets

As datasets grow, CSV remains lightweight and fast to parse because it is text-based and lacks embedded metadata. It can also be read as a stream, one row at a time, without loading the whole file. Excel workbooks, by contrast, accumulate metadata and formatting that slow down opening and saving and make large files awkward to keep under version control. A worksheet is also capped at 1,048,576 rows. For automated pipelines processing terabytes of data, CSV often provides more predictable performance. When using Excel for large datasets, consider breaking data into smaller sheets or using Power Query/Power BI to model the data and reduce the size of the loaded workbook.
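As a rough illustration of why CSV scales predictably, the sketch below totals one column while holding only a single row in memory at a time. The function name `sum_column` and the demo data are hypothetical. In a real pipeline, the `lines` argument would be an open file rather than an in-memory string.

```python
import csv
import io


def sum_column(lines, column):
    """Stream rows from an iterable of lines and total one numeric column."""
    reader = csv.DictReader(lines)
    total = 0.0
    count = 0
    for row in reader:  # only the current row is held in memory
        total += float(row[column])
        count += 1
    return count, total


# For a real file: open(path, newline="", encoding="utf-8") instead of StringIO.
demo = io.StringIO("id,amount\n1,10.5\n2,4.5\n3,5.0\n")
row_count, amount_total = sum_column(demo, "amount")
```

Memory use stays roughly constant as the file grows, which is the property that makes row-by-row processing attractive for very large inputs.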

Practical workflows: import/export between Excel and CSV

A common workflow starts with a CSV export from a database or application, followed by data cleaning and transformation in a scripting language, then a final pass in Excel for reporting. Conversely, analysts may author data in Excel and export to CSV to feed an automated pipeline or BI tool. Automating this handoff requires careful attention to headers, data types, and locale settings. Establish a repeatable process and validation checks to minimize drift between formats.
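One way to make the handoff repeatable is to pair every export with a small validation step. The sketch below assumes a hypothetical three-column schema (`EXPECTED_HEADER`) and two illustrative helpers, `export_csv` and `validate_csv`. It checks the header, the record count, and the field count per record.

```python
import csv
import io

EXPECTED_HEADER = ["order_id", "order_date", "note"]  # hypothetical schema


def export_csv(rows):
    """Write rows under one fixed convention: comma-delimited, header first."""
    buf = io.StringIO(newline="")
    writer = csv.writer(buf, lineterminator="\n")
    writer.writerow(EXPECTED_HEADER)
    writer.writerows(rows)
    return buf.getvalue()


def validate_csv(text, expected_rows):
    """Return a list of problems found; an empty list means the checks passed."""
    problems = []
    rows = list(csv.reader(io.StringIO(text, newline="")))
    if not rows or rows[0] != EXPECTED_HEADER:
        problems.append("unexpected header")
    data = rows[1:]
    if len(data) != expected_rows:
        problems.append(f"expected {expected_rows} rows, found {len(data)}")
    for number, row in enumerate(data, start=1):
        if len(row) != len(EXPECTED_HEADER):
            problems.append(f"record {number}: wrong field count")
    return problems


exported = export_csv([
    [1001, "2025-03-04", "Widget, large"],  # embedded comma gets quoted
    [1002, "2025-03-05", "Gadget"],
])
issues = validate_csv(exported, expected_rows=2)
```

Comparing the record count against the source system's count is a cheap way to catch rows that were dropped or split by a stray delimiter.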

Common pitfalls and how to avoid them

Pitfalls include misinterpreted dates, text that looks truncated because a column is too narrow, and loss of formulas and formatting when a workbook is saved as CSV. Delimiter mismatches and encoding issues can cause import errors, while regional settings may swap decimal and thousands separators. To avoid these problems, adopt a defined export/import convention, validate a sample of records after each conversion, and document the steps for team members. MyDataTables Analysis, 2026, emphasizes planning for edge cases and documenting expectations for data interchange.
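The date and separator pitfalls can be shown in a few lines. This is a sketch with hypothetical helper names (`parse_date`, `parse_number`). The point is that the same text yields different values depending on the convention assumed, so the convention should be stated explicitly rather than inferred.

```python
from datetime import datetime
from decimal import Decimal


def parse_date(text, fmt):
    """Parse a date string using an explicitly stated format."""
    return datetime.strptime(text, fmt).date()


def parse_number(text, decimal_sep=".", thousands_sep=","):
    """Convert a locale-formatted number string using explicit separators."""
    cleaned = text.replace(thousands_sep, "").replace(decimal_sep, ".")
    return Decimal(cleaned)


ambiguous = "03/04/2025"
as_month_first = parse_date(ambiguous, "%m/%d/%Y")  # read as 4 March
as_day_first = parse_date(ambiguous, "%d/%m/%Y")    # read as 3 April

# The same quantity written under two regional conventions.
comma_decimal = parse_number("1.234,56", decimal_sep=",", thousands_sep=".")
point_decimal = parse_number("1,234.56")
```

Exporting dates in ISO 8601 form (`2025-03-04`) and numbers without thousands separators avoids both ambiguities at the source.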

Decision guidance by role and use case

For data scientists and analysts who need reproducible models, CSV is often preferred for its clarity and ease of automation. For business users who require dashboards, formatting, and exploratory analysis in one file, Excel remains the go-to. Data engineers should design pipelines that minimize format friction, using CSV for data transfer and Excel for end-user consumption when appropriate. In mixed environments, a hybrid approach (CSV for ingestion, Excel for reporting) balances portability with analytical power.