Is CSV or JSON Smaller? A Practical Size Comparison

A rigorous analysis of file size differences between CSV and JSON for flat vs. nested data, with practical guidance, compression impact, and decision criteria from MyDataTables.

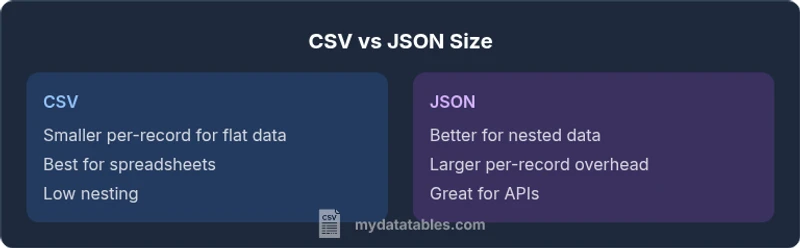

CSV is typically smaller than JSON for flat, tabular data because it stores values in a compact row/column format without repeating field names. JSON adds structural characters, braces, and repeated keys, and that overhead grows with nesting. If your data includes nested objects or arrays, JSON’s overhead may be justified by the structure it preserves and the simpler parsing it allows. So, is CSV or JSON smaller? It depends on data shape and tooling.

Is CSV Smaller? Key Question

When you start analyzing data formats for efficiency, a central question emerges: is CSV or JSON smaller? The answer hinges on how the data is shaped and how you plan to consume it. For strictly flat, tabular data where every row shares the same columns, CSV normally beats JSON in raw size because it avoids repeating field names and structural tokens. MyDataTables observations confirm that CSV’s advantage grows with the number of columns and rows when values are primarily numbers or short strings. However, the gap also depends on encoding choices, such as pretty-printing JSON with whitespace versus emitting a minified representation. The bottom line is that size is not static: it shifts with data shape, encoding, and the reader’s tooling.

How File Size Depends on Data Shape

The key to understanding size differences is data shape. CSV shines when data is truly tabular: consistent columns, fixed schema, and minimal need for hierarchical representations. Each row becomes a line of values separated by a delimiter, often with a single header line. JSON, by contrast, carries keys with every object, braces to denote structure, and quotation marks for all string values. When you introduce nesting, arrays, or optional fields, JSON’s per-record overhead rises quickly. This is where the practical trade-off shows up: for nested data or irregular schemas, the size delta narrows, but for uniform, flat data, CSV tends to stay smaller. MyDataTables analysis highlights how structure grows the footprint in JSON even before user data is encoded, which helps explain why CSV often remains preferable for size.
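As a rough illustration of the flat-data case, the sketch below serializes the same records both ways and compares byte counts. The sample data and the helper names `csv_size` and `json_size` are invented for this example; real results depend on your own columns and values.

```python
import csv
import io
import json

# Hypothetical sample: flat records that all share the same three columns.
rows = [
    {"id": i, "name": f"user{i}", "score": i * 10}
    for i in range(1000)
]

def csv_size(records):
    """Return the UTF-8 byte length of the records serialized as CSV."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(records[0].keys()))
    writer.writeheader()          # field names appear once, in the header
    writer.writerows(records)
    return len(buf.getvalue().encode("utf-8"))

def json_size(records):
    """Return the UTF-8 byte length of the records as minified JSON."""
    # Every object repeats its keys, even with whitespace stripped.
    text = json.dumps(records, separators=(",", ":"))
    return len(text.encode("utf-8"))

print("CSV bytes: ", csv_size(rows))
print("JSON bytes:", json_size(rows))
```

For data shaped like this, the CSV figure should come out smaller, because the field names are written once rather than once per record.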

Per-Record Size vs Structural Overhead: A Side-by-Side Look

Consider a tiny sample with five fields per record. A CSV line might store 20-40 bytes of payload plus delimiters, depending on field length and quoting. JSON would repeat keys like "name" and "value" for every object, plus separators and braces. The cumulative effect compounds as you scale to thousands or millions of records. In flat data, CSV demonstrates a clear advantage in per-record size. When you add optional fields, nulls, or varying schemas, JSON can partly offset its overhead because it can omit absent fields and represent complex objects directly, so CSV keeps a clear size advantage mainly when nesting is limited.
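The per-record arithmetic can be sketched directly. The five field names below are hypothetical, and the functions count only structural bytes (braces, quoted keys, colons, delimiters), assuming minified JSON and unquoted CSV values.

```python
def json_structural_overhead(keys):
    """Bytes per object in minified JSON that are not value data.

    Counts two braces, a quoted key plus colon for each field, and the
    commas between fields. Quotes around string values are ignored.
    """
    key_bytes = sum(len(k.encode("utf-8")) + 3 for k in keys)  # "key":
    return 2 + key_bytes + (len(keys) - 1)

def csv_structural_overhead(keys, line_terminator="\n"):
    """Bytes per CSV row that are not value data (no quoting assumed)."""
    return (len(keys) - 1) + len(line_terminator)

# Hypothetical five-field record.
fields = ["id", "name", "email", "value", "status"]

per_json = json_structural_overhead(fields)   # 43
per_csv = csv_structural_overhead(fields)     # 5
extra_per_million = (per_json - per_csv) * 1_000_000

print(per_json, per_csv, extra_per_million)   # 43 5 38000000
```

Under these assumptions, JSON carries 38 extra structural bytes per record, which adds up to roughly 38 MB over a million records before any compression.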

Compression and Its Size Impact

Compression changes the game. Text-based formats—CSV and JSON—benefit from gzip, zstd, or similar algorithms, which exploit recurring patterns. In practice, CSV often compresses extremely well because numeric data and consistent field patterns produce uniform byte sequences. JSON can also compress efficiently, especially when nested structures are repetitive or when you minify the data to eliminate whitespace. The compression advantage can narrow or widen the raw-size gap, depending on data repetition, field names, and the chosen compression method. MyDataTables notes emphasize testing with your actual data and intended pipeline to estimate compressed sizes accurately.
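A minimal way to check this on your own data is to compress both serializations and compare. The sketch below uses gzip only because it ships with Python's standard library. The dataset and helper names are made up for illustration, so substitute the compressor and records from your actual pipeline.

```python
import csv
import gzip
import io
import json

# Hypothetical flat dataset with repetitive values.
records = [
    {"id": i, "city": "Berlin" if i % 2 else "Paris", "temp": 20 + i % 5}
    for i in range(5000)
]

def to_csv_bytes(rows):
    """Serialize rows as CSV with a single header line."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue().encode("utf-8")

def to_json_bytes(rows):
    """Serialize rows as a minified JSON array."""
    return json.dumps(rows, separators=(",", ":")).encode("utf-8")

def raw_and_gzip(payload):
    """Return (raw size, gzip-compressed size) in bytes."""
    return len(payload), len(gzip.compress(payload))

for label, payload in (("CSV", to_csv_bytes(records)),
                       ("JSON", to_json_bytes(records))):
    raw, packed = raw_and_gzip(payload)
    print(f"{label}: raw={raw} gzip={packed} ratio={packed / raw:.2f}")
```

Repeated JSON keys are exactly the kind of pattern a general-purpose compressor removes, so the compressed gap is often narrower than the raw gap. How much narrower depends on the data.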

Real-World Scenarios Where Size Matters

In data pipelines, the cost of storage and transfer can be non-trivial. In batch exports from databases, CSV frequently wins on size for simple tables, making it ideal for downstream analytics in spreadsheets or BI tools. For APIs and configuration data that include nested objects, JSON becomes more convenient despite potential size overhead, as it aligns with modern programming languages’ native data models. When speed matters, consider whether you can stream JSON with minimal formatting or if a compact CSV export with selective columns better fits the throughput needs. The MyDataTables team asserts that choosing the format should reflect data shape, tooling, and long-term maintenance, not size alone.

Techniques to Minimize Size in CSV and JSON

To maximize efficiency, apply best practices. For CSV, keep a consistent schema, remove unnecessary columns, and avoid quoting of numeric values when your parser supports it. For JSON, prefer compact representations: minify output, avoid non-essential whitespace, store numbers as numbers (not strings) where appropriate, and consider binary encodings or schema-based serializers for large datasets. In both formats, using compression at rest and in transit dramatically reduces perceived size, so pair a sensible file format with effective compression strategies to optimize storage and bandwidth.
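The JSON-side advice can be sketched with Python's standard `json` module. The records here are hypothetical. The point is only the relative ordering of the four sizes.

```python
import json

# Hypothetical records with numeric and boolean values.
records = [{"id": i, "price": i + 0.5, "active": True} for i in range(100)]

pretty = json.dumps(records, indent=2)                  # human-readable
default = json.dumps(records)                           # ", " and ": " separators
minified = json.dumps(records, separators=(",", ":"))   # no optional whitespace

# Same data, but numbers stored as strings: two extra quote bytes per value.
stringified = json.dumps(
    [{"id": str(r["id"]), "price": str(r["price"]), "active": r["active"]}
     for r in records],
    separators=(",", ":"),
)

for label, text in (("pretty", pretty), ("default", default),
                    ("minified", minified), ("numbers as strings", stringified)):
    print(f"{label:>18}: {len(text.encode('utf-8'))} bytes")
```

Minifying changes only whitespace, so the output parses back to the same data. Storing numbers as strings, by contrast, changes the types a consumer sees, so treat it as a schema decision rather than a formatting one.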

Common Pitfalls that Inflate Size in Both Formats

Whitespace in JSON, extra headers in CSV, and inconsistent quoting are common culprits that inflate size. Nested data in JSON can explode the footprint if not carefully designed, while CSV can bloat when dozens of optional fields are present in every row. Null handling also matters: JSON may store null explicitly, while CSV typically leaves the field empty or uses a placeholder. Finally, embedded metadata or verbose schemas add to the header or top-level structure and increase the overall size. Being mindful of these pitfalls helps you keep size under control across formats.
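The null-handling difference is easy to see on a single sparse record. The field names below are hypothetical, and the CSV line uses the default settings of Python's `csv` writer, which emits `None` as an empty field.

```python
import csv
import io
import json

# Hypothetical record where two optional fields are missing.
row = {"id": 7, "nickname": None, "phone": None}

# JSON with explicit nulls: every key is still written.
explicit = json.dumps(row, separators=(",", ":"))

# JSON with absent fields dropped: only populated keys remain.
sparse = json.dumps(
    {k: v for k, v in row.items() if v is not None},
    separators=(",", ":"),
)

# CSV: missing values become empty fields, but the delimiters stay.
buf = io.StringIO()
csv.writer(buf).writerow(row.values())
csv_line = buf.getvalue()

print(explicit)         # {"id":7,"nickname":null,"phone":null}
print(sparse)           # {"id":7}
print(repr(csv_line))   # '7,,\r\n'
```

Dropping absent keys shrinks sparse JSON noticeably, but consumers then have to treat a missing key and a null the same way. CSV pays one delimiter per empty column on every row, which is where wide optional schemas start to cost.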

Quick Rules of Thumb and a Lightweight Decision Checklist

- If data is flat and stable, CSV is usually smaller and easier to edit in spreadsheets.

- If data includes nested objects or arrays, JSON offers better structure despite potential size overhead.

- For large-scale pipelines, measure raw and compressed sizes with representative samples.

- Prioritize tooling compatibility and parsing speed alongside size when deciding.

- Consider hybrid approaches (e.g., CSV for tabular dump, JSON for API payloads) to balance size and usability.

Comparison

| Feature | CSV | JSON |
|---|---|---|
| Typical per-record size impact | Smaller per record for flat data | Larger per record due to keys and braces |
| Overhead per file | Lower overhead (no repeated keys) | Higher overhead (repeated keys, structure) |
| Support for nested structures | Poor native support; requires encoding workarounds | Native support; great for nested data |
| Read/write tooling friendliness | Excellent with spreadsheets; simple tooling | Excellent for programming; JSON parsers widely available |
| Compression friendliness | Excellent when patterns are strong; gzip/zstd can drastically reduce size | Good, especially with minified JSON and repetitive keys |
| Best-for scenario | Flat tables, data exports, quick analysis in spreadsheets | Hierarchical or API-oriented data, config files, logs |

This table is best for illustrating the contrast rather than giving exact numbers.

Pros

- CSV generally yields smaller file sizes for flat, tabular data, and is easy to edit in spreadsheets

- JSON preserves structure and supports nested data without layout constraints

- CSV has minimal parsing overhead for simple datasets and streams well in row-oriented pipelines

- JSON mapping aligns with many programming languages and data models, reducing transformation steps

- Compression dramatically improves both formats and often makes the size difference less relevant in practice

Weaknesses

- CSV can balloon with wide schemas or inconsistent rows due to quoting and escaping

- JSON incurs overhead from keys and braces, especially with nested structures

- CSV lacks native support for hierarchy without encoding tricks, which can complicate processing

- JSON can be verbose for simple tabular data and may require extra tooling for efficient editing

CSV is generally smaller for flat data; JSON is better for nested or complex data regardless of the raw size.

For simple tables, choose CSV to minimize size and simplify spreadsheets. If your data has nested objects or variable schemas, JSON’s structure often justifies its overhead. Test with your dataset to confirm the expected savings in raw and compressed forms.

People Also Ask

Is CSV always smaller than JSON in raw size?

Not always. For flat data, CSV usually has a smaller raw size because it does not repeat keys. For nested data, the two formats can end up comparable in size, depending on schema and escaping. Consider the data shape and tooling before deciding.

CSV is typically smaller for flat data, but nesting in JSON can change the size dynamics.

How does compression affect the size difference?

Compression, such as gzip or zstd, reduces both formats considerably. CSV often benefits from uniform patterns, while JSON benefits from minified output and repeated keys. The relative saving depends on data redundancy and chosen compressor.

Compression can greatly reduce both formats; run tests with your actual data.

What scenarios favor JSON over CSV for size reasons?

JSON is advantageous when data needs nested structures, arrays, or schemas that evolve. If size is a concern, the difference may be small after compression, but readability and structure often justify JSON in APIs and configurations.

JSON shines when you need structure, not just space savings.

Can I mix CSV and JSON to balance size and usability?

Yes. Use CSV for tabular exports and JSON for API payloads or configuration data. This approach can optimize both storage and ease of use across different parts of your workflow.

Use CSV for tables and JSON for structured data to balance size and usability.

Are there formats even smaller than CSV/JSON for certain use cases?

Other formats, such as binary encodings or columnar formats (e.g., Parquet), can be smaller for large datasets with specific access patterns. They require different tooling but can dramatically reduce I/O in big-data pipelines.

Other formats exist, but they require different tooling and use cases.

How should I estimate size before exporting?

Estimate raw size by counting bytes of the data payload and add a small overhead for headers or keys. Then, test with real samples and measure compressed size to capture realistic storage and transfer costs.
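One way to turn this into a quick estimate is to serialize a sample and extrapolate the average bytes per record. The function name `estimate_sizes` and the sample data are assumptions for illustration. The estimate covers raw size only, and it assumes the sample is representative of the full export.

```python
import csv
import io
import json

def estimate_sizes(sample, total_rows):
    """Extrapolate raw CSV and JSON export sizes (in bytes) from a sample."""
    # CSV: measure the header once, then the average bytes per data row.
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(sample[0].keys()))
    writer.writeheader()
    header_bytes = len(buf.getvalue().encode("utf-8"))
    writer.writerows(sample)
    csv_body = len(buf.getvalue().encode("utf-8")) - header_bytes

    # JSON: minified objects, plus one byte each for the separating comma.
    json_body = sum(
        len(json.dumps(r, separators=(",", ":")).encode("utf-8")) + 1
        for r in sample
    )

    n = len(sample)
    return {
        "csv": header_bytes + round(csv_body / n * total_rows),
        "json": 2 + round(json_body / n * total_rows),  # 2 for the enclosing [ ]
    }

# Hypothetical sample of 200 rows, extrapolated to one million.
sample = [{"id": i, "label": f"item-{i}", "qty": i % 7} for i in range(200)]
print(estimate_sizes(sample, 1_000_000))
```

Follow this with a compression test on the same sample, since the compressed size is usually what determines storage and transfer cost.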

Use sample data to estimate both raw and compressed sizes before exporting.

Main Points

- Choose CSV for flat table data to minimize raw size

- Prefer JSON when data contains nesting or complex structures

- Compression can level size differences; always test with real data

- Minify JSON and avoid whitespace to improve size efficiency

- When in doubt, measure both formats on representative samples